Luma launched Agents for creative work, with creator tests focused on keeping characters, lighting and environments coherent across multi-scene sequences. Use it to cut file juggling and lock image generation to Uni-1 when you need tighter control.

Luma is positioning Agents as an autonomous layer across the creative pipeline rather than a single image or video model. In the launch thread, the pitch is "one canvas, one conversation," with the agent handling workflow handoffs that normally happen across separate prompting, editing, and reference-management tools. The linked Luma site frames that as AI agents for creative work rather than a point solution for one medium.

The strongest practical detail so far is that routing is not fixed to one model. Luma's own Uni-1 note says Agent requests can route across models. To make sure a request uses Uni-1, the user can select Create Image → Uni-1 or explicitly ask the agent to use Uni-1, then check the output label afterward to confirm.
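To make the routing behavior concrete, here is a minimal Python sketch of the decision as the Uni-1 note describes it. It is an illustration only and not Luma's API or implementation: the `Request` type, the `route` and `used_uni1` functions, the prompt-matching rule, and the `other-image-model` name are all hypothetical. Only the Uni-1 name and the three user actions come from the note.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for whatever non-Uni-1 model the agent might pick.
FALLBACK_MODEL = "other-image-model"


@dataclass
class Request:
    prompt: str
    # Hypothetical field: set when the user picks Create Image -> Uni-1.
    pinned_model: Optional[str] = None


def route(request: Request) -> str:
    """Return the label of the model that would handle the request."""
    # 1. An explicit menu selection takes priority.
    if request.pinned_model is not None:
        return request.pinned_model
    # 2. Asking for Uni-1 in the conversation also pins it
    #    (assumed here to be a simple substring match).
    if "uni-1" in request.prompt.lower():
        return "Uni-1"
    # 3. Otherwise the agent is free to route elsewhere.
    return FALLBACK_MODEL


def used_uni1(output_label: str) -> bool:
    """Check the output label afterward to confirm which model ran."""
    return output_label == "Uni-1"
```

The point of the sketch is the ordering: an unpinned request may land on any model, so the label check is a verification step rather than a way to force Uni-1.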

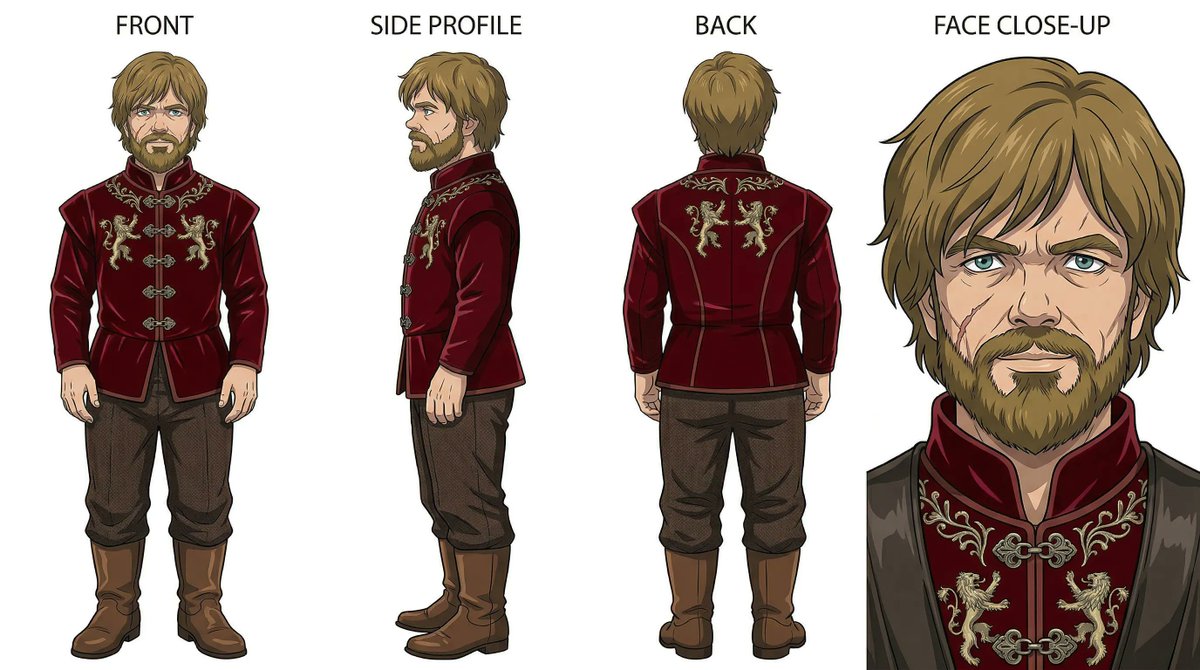

The creator test here is less about raw spectacle than continuity. In the character setup, Lloydcreates defines a recurring person with platinum hair, freckles, tattoos, and a green trucker cap, then says the agent kept that face stable across scenes without extra cleanup. In the multi-room sequence, the same character and outfit persist from hallway to living room to kitchen to a window shot even as the lighting changes by room.

The harder stress test is environmental variety. According to the scene stress test, a green marble kitchen, a Porsche 911 GT1, and an upscale farmers market still held together through shared lighting, color, wardrobe, and environment logic. The driving example in the car scene adds motion blur, shifting reflections, and a drone-to-tracking-shot progression that the creator says was inferred rather than directly specified.

The launch material does not describe a one-prompt movie machine. In the control caveat, Lloydcreates says the human still made the key choices on character design, wardrobe, environment, and color grade, with the agent acting as a multiplier for taste rather than a replacement for it.

That matches the workflow claim in the file-juggling post: the win is less time spent re-uploading references, switching apps, and reconstructing old prompts. The core creative decisions still sit with the person steering the project.

We are loving the energy around Uni-1! Quick note since we’re seeing questions: With Luma Agents, requests can route across models. If you want to make sure you’re using Uni-1, here’s how:

- Select Create Image → Uni-1
- Or, explicitly ask the agent to use Uni-1
- Check the …

A short film usually means hours bouncing between 5 tools. Prompt here, edit there, lose context everywhere. @LumaLabsAI Agents work autonomously across your entire workflow, keeping every scene consistent. One canvas, one conversation. You direct, the agent builds the rest ↓

@LumaLabsAI Agents just launched and it's worth 10 minutes of your time to go look. Especially if you're a creative who's exhausted by logistics. lumalabs.ai #LumaPartner