KittenTTS 0.8 ships new 15M, 40M and 80M models, including an int8 nano model of around 25MB that runs on a CPU without a GPU. It suits narration, character voices and lightweight assistants that need offline or edge-friendly speech.

Posted by rohan_joshi

KittenTTS is an open-source, lightweight text-to-speech library built on ONNX, with models from 15M to 80M parameters (25-80 MB) that enable high-quality voice synthesis on a CPU without a GPU. Version 0.8 adds three new models: mini (80M), micro (40M), and nano (15M, int8, 25MB). The library includes text preprocessing, a Python API, and a demo on Hugging Face Spaces. It is Apache 2.0 licensed and in developer preview, with commercial support available via Stellon Labs.

KittenTTS 0.8 ships as an Apache 2.0, ONNX-based text-to-speech library with a Python API, text preprocessing, and a Hugging Face demo, per the project page. For creators, the key update is the model spread: an 80M mini, 40M micro, and 15M nano, with the smallest int8 build coming in around 25MB. That makes the release unusually compact for voice workflows that need local synthesis instead of cloud calls.

The launch thread also positions the project around usable, expressive speech rather than bare intelligibility. Its stated focus on prosody and pronunciation is what makes this release more interesting for narration, character voices, and embedded voice agents than a generic “small TTS model” drop.


Thread discussion highlights:

- baibai008989 on edge deployment barrier: The dependency chain issue is a real barrier for edge deployment... anything that pulls torch + cuda makes the whole thing a non-starter. 25MB is genuinely exciting for that use case.
- bobokaytop on latency tradeoff: the practical bottleneck for most edge deployments isn't model size -- it's the inference latency on low-power hardware and the audio streaming architecture around it.
- altruios on expressive control: One of the core features I look for is expressive control... How does it handle expressive tags?

The first practical read from the Hacker News discussion is that package size solves only part of the problem. In the thread summary, one commenter calls a 25MB model genuinely exciting for edge deployment because it avoids the usual Torch-and-CUDA dependency chain, while another says inference latency on low-power hardware and audio streaming design are still the real bottlenecks.
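One common way to address the streaming side of that bottleneck is to synthesize text sentence by sentence, so playback can begin before a long passage has been fully rendered. The sketch below assumes a hypothetical `synthesize` callable that turns one string into a list of audio samples; it is not part of the KittenTTS API, and the naive regex splitter will mis-split abbreviations such as "Dr.".

```python
import re
from typing import Callable, Iterator, List


def split_sentences(text: str) -> List[str]:
    # Naive splitter: break after ".", "!" or "?" when followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def stream_speech(
    text: str, synthesize: Callable[[str], List[float]]
) -> Iterator[List[float]]:
    # Yield audio one sentence at a time so a player can start on the first
    # chunk while later sentences are still being synthesized.
    # `synthesize` is a hypothetical stand-in for any TTS call.
    for sentence in split_sentences(text):
        yield synthesize(sentence)


def fake_synthesize(sentence: str) -> List[float]:
    # Hypothetical placeholder: one silent sample per character.
    return [0.0] * len(sentence)


chunks = list(stream_speech("Hello there. How are you? Fine!", fake_synthesize))
print([len(c) for c in chunks])  # [12, 12, 5]
```

With this shape, the latency a listener notices is roughly the time to synthesize the first sentence, not the whole passage.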

There is at least one concrete integration datapoint: a commenter cited in the main thread says they wired the repo into Discord voice messages within minutes and saw about 1.5x realtime on an Intel 9700 CPU using the 80M model. The same discussion also raises open questions about expressive tags and fine-grained delivery control, which are still the make-or-break details for creative voice work.
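Speed figures like "1.5x realtime" are easy to misread. The sketch below assumes the commenter meant that audio is produced 1.5 times faster than it plays back (the thread does not define the term), and contrasts that with the conventional real-time factor (RTF), where lower is faster. The 15-second and 10-second durations are illustrative, not measurements from the thread.

```python
def realtime_speedup(audio_seconds: float, synthesis_seconds: float) -> float:
    """Seconds of audio produced per second of compute (>1.0 beats playback)."""
    if audio_seconds <= 0 or synthesis_seconds <= 0:
        raise ValueError("durations must be positive")
    return audio_seconds / synthesis_seconds


def real_time_factor(audio_seconds: float, synthesis_seconds: float) -> float:
    """Conventional RTF: compute seconds per second of audio (<1.0 beats playback)."""
    return 1.0 / realtime_speedup(audio_seconds, synthesis_seconds)


# Illustrative numbers only: a 15 s clip that takes 10 s to synthesize.
print(realtime_speedup(15.0, 10.0))            # 1.5
print(round(real_time_factor(15.0, 10.0), 3))  # 0.667
```

Under this reading, a speedup above 1.0 is what lets sentence-level streaming keep up with playback, though the delay before the first chunk still matters for interactive use.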


For creators, the interesting part is that these tiny models aim at usable, expressive speech rather than bare synthesis. The thread focuses on voice quality, prosody, pronunciation of numbers, and how much control users get over expressive delivery, which is useful context for anyone building voice content, narration tools, or character and assistant voices.
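Number pronunciation is usually handled by a text-normalization pass before synthesis. KittenTTS lists text preprocessing as a feature, but the sketch below is not its implementation: it is a hypothetical, minimal normalizer (`number_to_words` and `normalize_numbers` are illustrative names) that spells out whole numbers below one million and leaves everything else alone. It does not handle decimals, years read as pairs ("twenty twenty-six"), currencies, or ordinals.

```python
import re

ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
        "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
        "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]


def below_thousand(n: int) -> str:
    # Spell out 0 <= n < 1000 in English words.
    if n < 20:
        return ONES[n]
    if n < 100:
        tens, rest = divmod(n, 10)
        return TENS[tens] + ("-" + ONES[rest] if rest else "")
    hundreds, rest = divmod(n, 100)
    words = ONES[hundreds] + " hundred"
    return words + (" " + below_thousand(rest) if rest else "")


def number_to_words(n: int) -> str:
    # Spell out 0 <= n < 1_000_000; larger values are out of scope here.
    if not 0 <= n < 1_000_000:
        raise ValueError("only 0..999999 supported in this sketch")
    thousands, rest = divmod(n, 1000)
    if thousands == 0:
        return below_thousand(rest)
    words = below_thousand(thousands) + " thousand"
    return words + (" " + below_thousand(rest) if rest else "")


def normalize_numbers(text: str) -> str:
    # Replace each run of digits with its spelled-out form; leave
    # numbers too large for this sketch unchanged.
    def replace(match: re.Match) -> str:
        n = int(match.group())
        return number_to_words(n) if n < 1_000_000 else match.group()

    return re.sub(r"\d+", replace, text)


print(normalize_numbers("Chapter 12 has 305 pages and 1500 words."))
# Chapter twelve has three hundred five pages and one thousand five hundred words.
```

A production normalizer would need context to decide, for example, whether "2026" is a quantity or a year, which is exactly where small TTS models tend to stumble.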

A new creator tutorial shows ComfyUI's new, simpler App-style mode paired with Z-Image for fast local image generation. Local workflows are getting easier to start, so try the App mode if you want to avoid node-heavy graph building on day one.

breaking

OpenAI said it is shutting down the Sora app and will share timelines for the app and API, plus instructions for preserving work. Creators should export assets and test replacement tools now if they built remix-heavy video workflows on Sora.

prompt

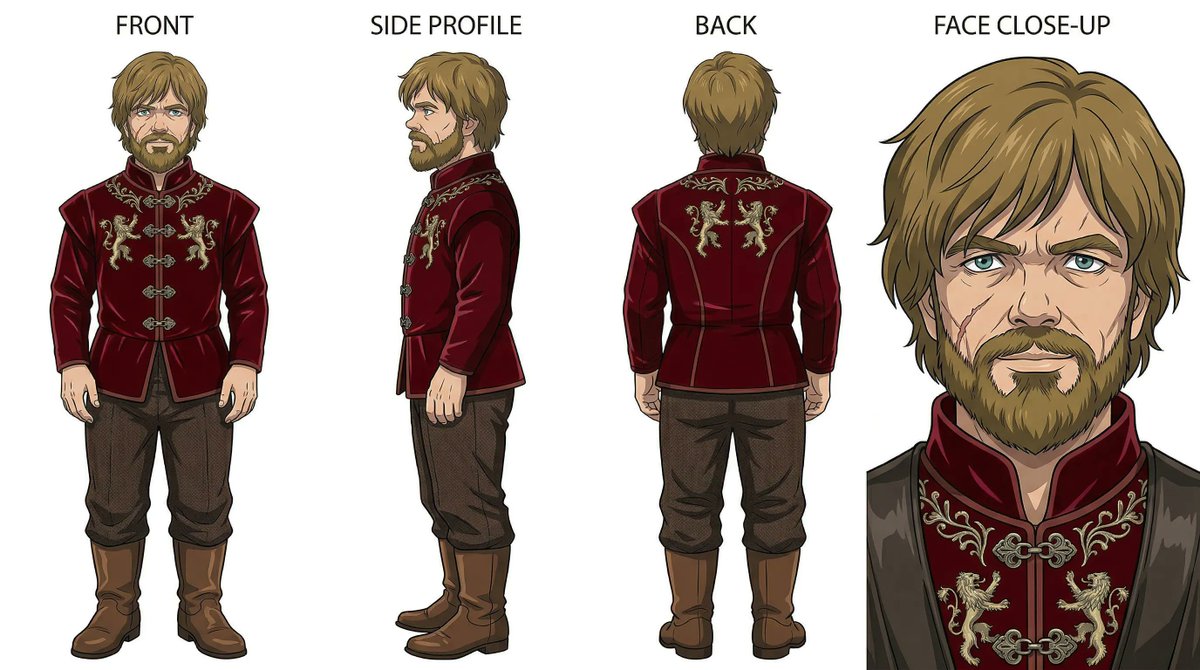

Creators are turning Nano Banana 2 prompting into reusable playbooks built around grids, reference turnarounds, effect templates and product-shot skeletons. That matters because repeatable prompt systems make ads, posters and styled social assets easier to scale without losing consistency.

release

Luma launched Agents for creative work, with creator tests focused on keeping characters, lighting and environments coherent across multi-scene sequences. Use it to cut file juggling and lock image generation to Uni-1 when you need tighter control.

release

Kimi Slides turns prompts or uploaded files into editable decks, then exports them as PPT or images with dense consulting-style layouts intact. Brand, sales and product teams can draft structured presentations fast and keep refining them in familiar slide tools.