OpenAI says Responses API requests can reuse warm containers for skills, shell, and code interpreter, cutting startup times by about 10x. Faster execution matters more now that Codex is spreading to free users, students, and subagent-heavy workflows.

OpenAI says it "added a container pool to the Responses API," which lets requests reuse warm infrastructure instead of paying the full container creation cost on every session. In the same post, OpenAI says container startup for skills, shell, and code interpreter is now "about 10x faster."
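The general idea of a warm pool can be sketched as follows. This is an illustrative toy, not OpenAI's implementation: the `Container` and `WarmPool` names, the pool size, and the counters are all hypothetical, and a production pool would also need to reset container state between uses.

```python
from collections import deque
from dataclasses import dataclass
from itertools import count


@dataclass
class Container:
    """Stand-in for an execution environment (hypothetical, not OpenAI's type)."""
    id: int
    uses: int = 0


class WarmPool:
    """Hands out pre-created containers and falls back to cold creation."""

    def __init__(self, size: int) -> None:
        self._ids = count(1)
        self.cold_starts = 0
        self.warm_hits = 0
        # Pay the creation cost up front, before any request arrives.
        self._idle = deque(self._create() for _ in range(size))

    def _create(self) -> Container:
        # A real system would boot a sandbox here; this is the slow step.
        return Container(id=next(self._ids))

    def acquire(self) -> Container:
        if self._idle:
            self.warm_hits += 1
            container = self._idle.popleft()
        else:
            # Pool exhausted: the request pays for creation itself.
            self.cold_starts += 1
            container = self._create()
        container.uses += 1
        return container

    def release(self, container: Container) -> None:
        # A real pool would scrub or reset state before reuse.
        self._idle.append(container)


pool = WarmPool(size=2)
a = pool.acquire()
b = pool.acquire()
c = pool.acquire()   # pool is empty, so this one is a cold start
pool.release(a)
d = pool.acquire()   # reuses the container released above
print(pool.warm_hits, pool.cold_starts, d.id == a.id)  # 3 1 True
```

The design trade-off is the usual one for pooling: idle containers cost resources while they wait, in exchange for taking creation off the request path.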

That is an infrastructure change, not a new agent primitive. The practical shift is lower cold-start overhead for tool-using agents that need an execution environment before they can run code or shell steps, and OpenAI framed it as making "agent workflows" faster rather than changing model behavior.

The timing lines up with broader Codex distribution. A developer post says "You can use Codex in your free ChatGPT account," and a separate OpenAI promotion for students, surfaced in a repost, offers college students in the U.S. and Canada $100 in Codex credits.

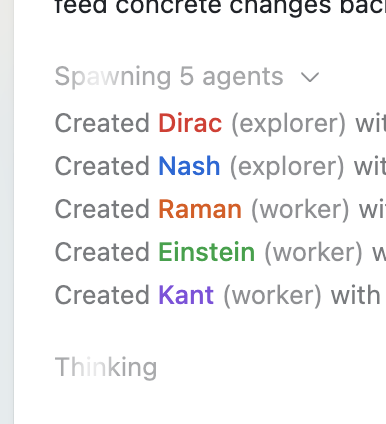

That wider access increases the odds that more users will hit execution latency in real workflows, especially when one request fans out into several workers. The shared screenshot shows a concrete example: one run starts by "Spawning 5 agents" and then creates two "explorer" agents and three "worker" agents, which is exactly the kind of subagent-heavy pattern where warm container reuse can shave visible setup time.
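A back-of-the-envelope estimate shows why fan-out amplifies the effect. The agent count comes from the screenshot and the 10x factor from OpenAI's post; the 10-second cold-start figure is purely hypothetical, and the sketch assumes each subagent needs its own container, which the source does not confirm.

```python
def setup_seconds(agents: int, per_container: float, parallel: bool) -> float:
    """Wall-clock setup time before every agent has a container."""
    # In parallel, all containers start at once; otherwise costs add up.
    return per_container if parallel else agents * per_container


AGENTS = 5          # from the screenshot: 2 explorers + 3 workers
COLD_START = 10.0   # hypothetical seconds per cold container, not a measured value
SPEEDUP = 10.0      # "about 10x faster", per OpenAI's post

warm_start = COLD_START / SPEEDUP

for parallel in (False, True):
    cold = setup_seconds(AGENTS, COLD_START, parallel)
    warm = setup_seconds(AGENTS, warm_start, parallel)
    mode = "parallel" if parallel else "sequential"
    print(f"{mode}: cold={cold:.1f}s warm={warm:.1f}s saved={cold - warm:.1f}s")
# sequential: cold=50.0s warm=5.0s saved=45.0s
# parallel: cold=10.0s warm=1.0s saved=9.0s
```

Under these assumptions the saving grows linearly with the number of agents when containers start one after another, and stays at a single container's difference when they start in parallel.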

Vercel Emulate added a programmatic API for creating, resetting, and closing local GitHub, Vercel, and Google emulators inside automated tests. That makes deterministic integration tests easier to wire into CI and agent loops without manual setup.

OpenClaw shipped version 2026.3.22 with ClawHub, OpenShell plus SSH sandboxes, side-question flows, and more search and model options, then followed with a 2026.3.23 patch. Teams get a broader plugin surface, but should patch quickly and review plugin trust boundaries as the ecosystem grows.

Cursor shipped Instant Grep, a local regex index built from n-grams, inverted indexes, and Bloom filters that drops large-repo searches from seconds to milliseconds. Faster candidate retrieval shortens the coding-agent loop, especially when ripgrep-style scans become the bottleneck.

ChatGPT now saves uploaded and generated files into an account-level Library that can be reused across conversations from the web sidebar or recent-files picker. It removes repetitive re-uploading and makes past PDFs, spreadsheets, and images part of a persistent working context.

Epoch AI says GPT-5.4 Pro elicited a publishable solution to one 2019 conjecture in its FrontierMath Open Problems set, with a formal writeup planned. Treat it as an early milestone worth reproducing, not blanket evidence that frontier models can already automate math research.

Agent workflows got even faster. You can spin up containers for skills, shell and code interpreter about 10x faster. We added a container pool to the Responses API, so requests can reuse warm infrastructure instead of creating a full container creation each session.