Expect wraps browser QA for Claude Code, Codex, or Cursor into a CLI that records bug videos and feeds failures back into a fix loop. It gives coding agents a tighter UI validation cycle without requiring a custom browser harness.

Expect packages browser-based app QA into a command-line workflow instead of requiring teams to wire up their own browser harnesses. In the announcement, Aiden Bai describes the flow in three steps: run Claude Code or Codex to QA your app, "watch a video of every bug found," then "fix and repeat until passing." The same post says it runs as either a CLI or an agent skill, calls it fully open source, and links to the GitHub repo.
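The run, watch, fix cycle in the announcement amounts to a bounded retry loop. A minimal Python sketch follows, assuming hypothetical `run_qa` and `fix` callables that stand in for the browser QA pass and the coding agent. It does not use Expect's actual CLI or skill interface, and the `max_rounds` cap is an added assumption so a persistent failure cannot loop forever.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class QAResult:
    """Outcome of one QA pass (hypothetical shape, not Expect's)."""
    passed: bool
    failures: List[str] = field(default_factory=list)  # agent-readable failure notes


def qa_fix_loop(
    run_qa: Callable[[], QAResult],
    fix: Callable[[List[str]], None],
    max_rounds: int = 5,
) -> int:
    """Run QA, pass each failing round's notes to `fix`, repeat until QA passes.

    Returns the number of QA rounds it took to pass. Raises RuntimeError if
    QA is still failing after `max_rounds` rounds.
    """
    for round_no in range(1, max_rounds + 1):
        result = run_qa()
        if result.passed:
            return round_no
        fix(result.failures)  # hand the failure context to the fixing agent
    raise RuntimeError(f"QA still failing after {max_rounds} rounds")
```

Injecting both steps as callables keeps the loop testable with stubs; in practice the QA step would be the browser run and `fix` would forward the notes to whichever agent is in use.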

The project is positioned as a wrapper around tools engineers already use rather than a new coding agent. In the follow-up demo, Bai says Expect uses "your existing Claude Code, Codex, or Cursor under the hood," which makes the integration story more about inserting browser validation into an existing agent loop than switching stacks. The project page adds that it scans unstaged changes or branch diffs, generates a test plan with AI, and asks for approval in the terminal before executing tests in a live browser.
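The scan, plan, approve sequence on the project page can be sketched as follows. This is a minimal Python sketch under stated assumptions: every function name is hypothetical, `draft_plan` is a trivial file-suffix heuristic standing in for the AI planner, and browser execution is left out. Only the `git diff --name-only` invocations are real git commands; none of this reflects Expect's source.

```python
import subprocess
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class PlanStep:
    description: str
    source_file: str


def parse_name_only(output: str) -> List[str]:
    """Turn `git diff --name-only` output into a list of paths, skipping blank lines."""
    return [line.strip() for line in output.splitlines() if line.strip()]


def changed_files(base: Optional[str] = None) -> List[str]:
    """Unstaged changes by default; with `base`, the branch diff against its merge base."""
    args = ["git", "diff", "--name-only"]
    if base is not None:
        args.append(f"{base}...HEAD")  # three-dot: changes since the branch left `base`
    result = subprocess.run(args, capture_output=True, text=True, check=True)
    return parse_name_only(result.stdout)


def draft_plan(files: List[str]) -> List[PlanStep]:
    """Placeholder for the AI planning step: one browser check per changed UI file."""
    ui_suffixes = (".tsx", ".jsx", ".vue", ".svelte")
    return [
        PlanStep(f"Exercise the UI touched by {path}", path)
        for path in files
        if path.endswith(ui_suffixes)
    ]


def approve(plan: List[PlanStep], ask: Callable[[str], str] = input) -> bool:
    """Print the plan and return True only if the user answers 'y'."""
    for i, step in enumerate(plan, 1):
        print(f"{i}. {step.description}")
    return ask("Run these steps in a live browser? [y/N] ").strip().lower() == "y"
```

Inside a repository, `approve(draft_plan(changed_files("main")))` would list one step per changed component file on the branch and wait for an answer. The gate defaults to refusal, so nothing runs without explicit sign-off.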

The distinctive feature is the debugging artifact. Bai's demo post says Expect "generates a highlight reel for every test" and, when something fails, provides "context for another agent to fix." That turns UI regression checking into something closer to an agent-readable bug report with a browser replay attached, not just a pass/fail test log.
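One way to picture "context for another agent to fix" is a small structured failure record rendered into a prompt. A minimal Python sketch; the `FailureReport` fields and the prompt wording are hypothetical and are not Expect's output format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FailureReport:
    """Hypothetical agent-readable failure record; field names are illustrative."""
    test_name: str
    failed_step: str
    steps_run: int
    replay_url: str
    findings: List[str] = field(default_factory=list)

    def to_agent_prompt(self) -> str:
        """Render the failure as plain text a fixing agent can act on."""
        lines = [
            f"Browser test '{self.test_name}' failed on '{self.failed_step}' "
            f"after {self.steps_run} steps.",
            "Findings:",
            *(f"- {item}" for item in self.findings),
            f"Replay: {self.replay_url}",
            "Fix the root cause, then rerun the test.",
        ]
        return "\n".join(lines)
```

Pairing the text findings with the replay link matches the article's framing: a bug report the agent can read, with a browser replay a human can watch.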

A shared practitioner example shows the kind of issue this can catch. The failure screenshot reports that a CsvExportButton filter interface was missing an annualCost field, FilterBar was not passing it through, and the export request therefore ignored the Annual filter; the run failed on "verify export API request" after 13 steps and produced a replay link. That lines up with the launch pitch that Expect can find bugs without manual hunting, and with an early repost urging developers to "probably go ahead and just install this" rather than treat it as another browser-testing demo.
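The reported failure has the shape of a dropped-field bug: a filter exists in UI state, but one layer of the export path does not forward it. A minimal Python model of that shape, assuming a hypothetical extra filter key (`region` is invented; only `annualCost` comes from the report) and a simplified stand-in for the "verify export API request" check that diffs applied filters against what the request carried. It is not Expect's generated test or the practitioner's app code.

```python
from typing import Dict, Iterable, List

# Keys each version of the export path forwards. "region" is a hypothetical
# extra filter; "annualCost" is the field the reported bug dropped.
FORWARDED_KEYS_BUGGY = ("region",)
FORWARDED_KEYS_FIXED = ("region", "annualCost")


def build_export_params(active: Dict[str, str], forwarded: Iterable[str]) -> Dict[str, str]:
    """Mimic the FilterBar -> CsvExportButton hand-off: unlisted keys are silently dropped."""
    return {key: active[key] for key in forwarded if key in active}


def verify_export_request(active: Dict[str, str], sent: Dict[str, str]) -> List[str]:
    """Return applied filters missing or altered in the export request (empty list = pass)."""
    return sorted(key for key, value in active.items() if sent.get(key) != value)
```

With `FORWARDED_KEYS_BUGGY`, an applied annualCost filter never reaches the request and the check returns `["annualCost"]`; forwarding the key makes it return an empty list. Asserting on the outgoing request, not only on what the page renders, is what exposes this class of bug.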

OpenCode is adding remote sandboxes, synced state across laptop, server, and cloud, and more product surface inside its plugin system. That makes long-running off-laptop workflows more practical, but operators should still review telemetry, sandbox, and exposure defaults.

breaking

breakingMalicious LiteLLM 1.82.7 and 1.82.8 releases executed .pth startup code to steal credentials and were quarantined after disclosure. Rotate secrets, audit transitive AI-tooling dependencies, and add package-age controls before letting agents install packages autonomously.

breaking

breakingTurboQuant claims 6x KV-cache memory reduction and up to 8x faster attention on H100s without retraining or quality loss on long-context tasks. If those results hold in serving stacks, teams should revisit long-context cost, capacity, and vector-search design.

release

releaseOpenCode is adding remote sandboxes, synced state across laptop, server, and cloud, and more product surface inside its plugin system. That makes long-running off-laptop workflows more practical, but operators should still review telemetry, sandbox, and exposure defaults.

release

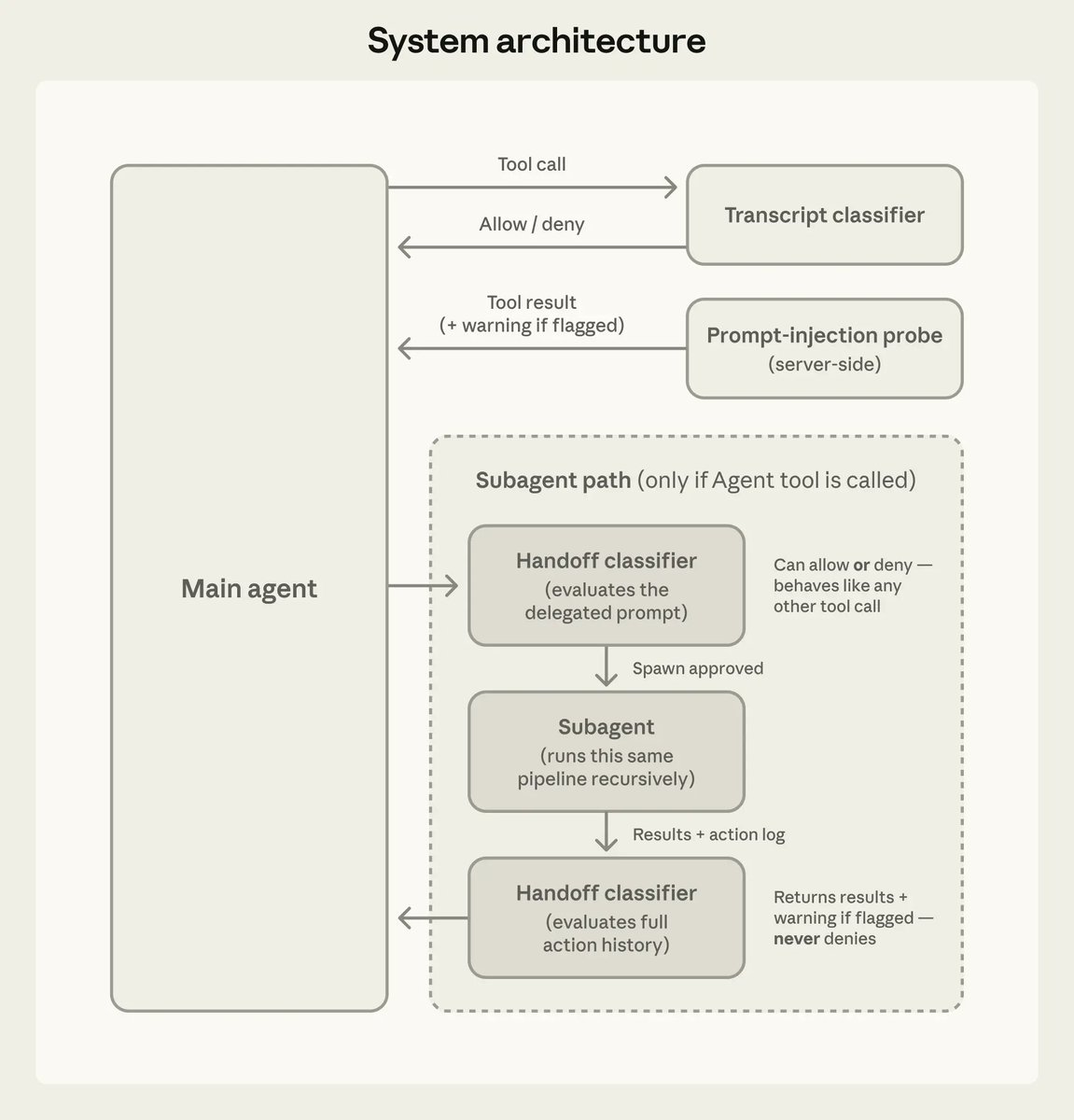

releaseClaude Code 2.1.84 adds an opt-in PowerShell tool, new task and worktree hooks, safer MCP limits, and better startup and prompt-cache behavior. Anthropic also documented auto mode’s action classifier and added iMessage as a channel, so teams should review permissions and remote-control workflows.

Introducing Expect

Let agents test your code in a real browser

1. Run Claude Code / Codex to QA your app
2. Watch a video of every bug found
3. Fix and repeat until passing

Run as a CLI or agent skill. Fully open source

Expect generates a highlight reel for every test

If tests fail, it gives you context for another agent to fix

demo: expect.dev

Holy moly. Caught a bug without me having to look for it.
