Claude Code 2.1.84 adds an opt-in PowerShell tool, new task and worktree hooks, safer MCP limits, and better startup and prompt-cache behavior. Anthropic also documented auto mode’s action classifier and added iMessage as a channel, so teams should review permissions and remote-control workflows.

TaskCreated and HTTP WorktreeCreate hooks, and model-override env vars for pinned third-party backends, according to the 2.1.84 changelog.owner/repo#123 references, and the removal of an explicit top-level “Avoid over-engineering” rule.claude --enable-auto-modeThe biggest functional addition is the changelog's opt-in PowerShell preview for Windows, which exposes PowerShell commands from the CLI for Windows-side automation and scripting; Anthropic also linked dedicated tool docs in the PowerShell reference. The same release adds TaskCreated hooks, HTTP WorktreeCreate hooks that can return hookSpecificOutput.worktreePath, and x-client-request-id request headers for timeout debugging.

For teams running pinned models on Bedrock, Vertex, or Foundry, the release notes add ANTHROPIC_DEFAULT_{OPUS,SONNET,HAIKU}_MODEL_SUPPORTS overrides plus _MODEL_NAME and _DESCRIPTION variables to control capability detection and the /model picker label. The CLI surface diff in the follow-up thread also lists new idle-threshold env vars, allowedChannelPlugins as a managed setting, and a VS Code rate-limit banner with usage percentage and reset time.

A lot of the practical work here is reliability. Anthropic says the changelog fixes a startup path where partial-clone repos could trigger “mass blob downloads,” resolves an MCP tool/resource cache leak on reconnect, and fixes subagents failing with API 400 when both outer and inner sessions used --json-schema. It also says startup is about 30 ms faster, claude "prompt" now renders before MCP servers finish connecting, /stats screenshots are 16× faster, and global system-prompt caching now works when ToolSearch is enabled.

The prompt changes are small on paper but meaningful in harness behavior. The diff summary says Claude no longer has an explicit top-level “Avoid over-engineering” rule, even though narrower instructions remain: no extra features, no unnecessary refactors, and no one-off abstractions. The same update standardizes GitHub references as owner/repo#123, matching a release note that bare #123 links no longer auto-render.

The stronger shift is around parallelism. Screenshots in

show commit and PR playbooks now explicitly marking which bash commands should run in parallel, with language that “multiple tools can run in one response” for “optimal performance.” At the same time, the prompt diff says Claude is no longer explicitly told to “speculatively” fan out extra Glob and Read calls, which should reduce some broad prefetch behavior.

That tradeoff is already visible in user reaction. One practitioner complained in a user report that lazy-loading tools “adds latency and error opportunity,” while Anthropic's own release notes focus on prompt-cache gains and faster startup. The net effect looks less like a capability jump than a recalibration of when Claude should parallelize aggressively and when it should keep context lean.

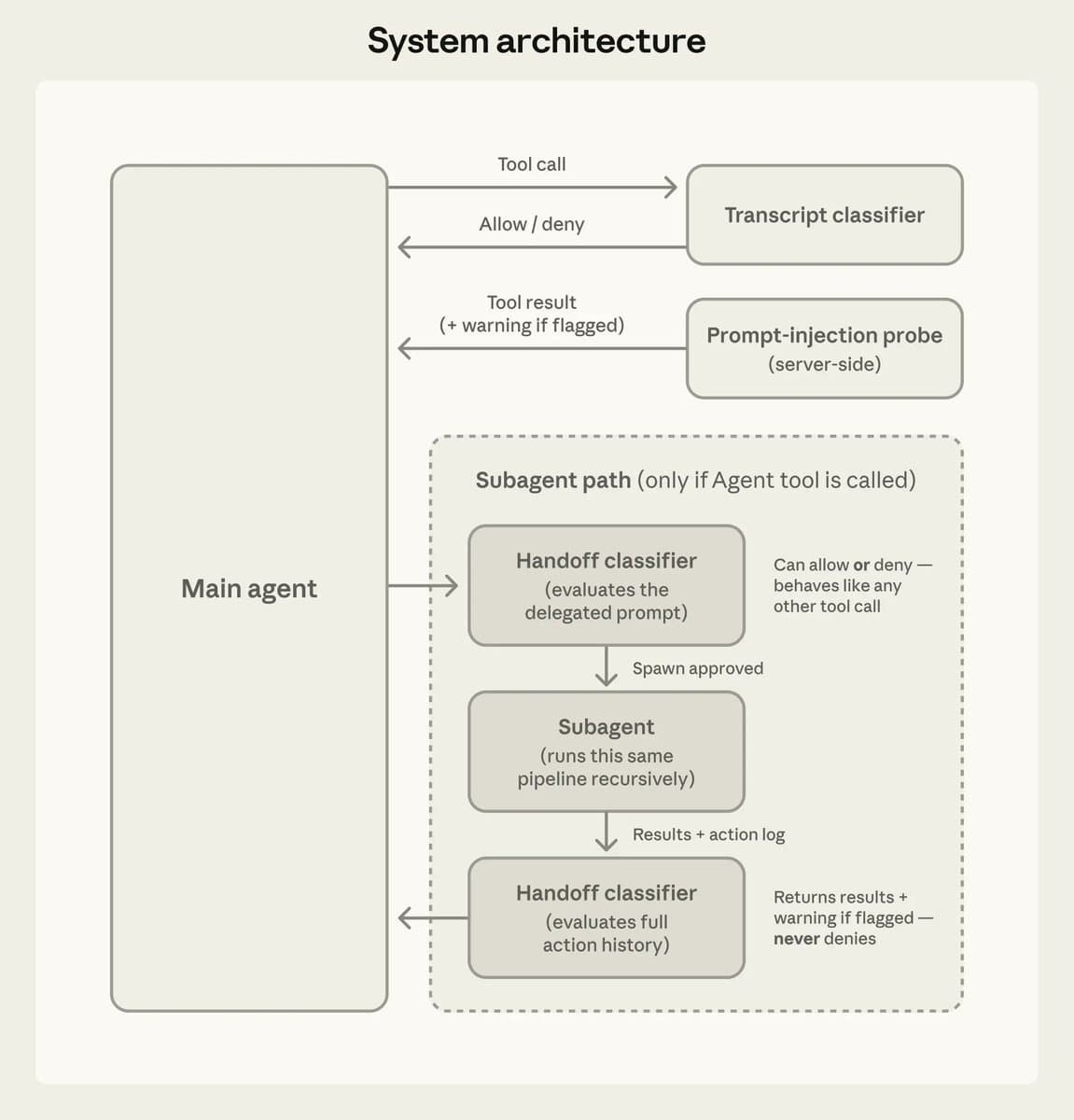

Anthropic's new engineering write-up explains auto mode as a middle layer between manual approvals and --dangerously-skip-permissions. According to the engineering post, the system uses a server-side prompt-injection probe on tool output plus a transcript classifier that decides whether each action should be allowed or denied before execution.

Auto mode is already rolling out beyond individuals. Anthropic engineer Cat Wu said in the Team rollout post that “almost everyone on our team uses this as a daily driver,” and that Team users can enable it with claude --enable-auto-mode and enter it with Shift+Tab. A practitioner reading the design details called out a “17% false-negative rate on real overeager actions” in a reaction post, alongside an architecture diagram showing that subagents run the same classifier pipeline recursively.

The channel surface is expanding too. An official plugin announcement says iMessage is now available as a channel, and that lands in the same release window as allowedChannelPlugins for team and enterprise admins in the 2.1.84 notes. Put together, 2.1.84 is not just a CLI patch: it widens where Claude can act, adds more hooks around task creation and worktrees, and adds more policy controls for how those actions are exposed.

Expect wraps browser QA for Claude Code, Codex, or Cursor into a CLI that records bug videos and feeds failures back into a fix loop. It gives coding agents a tighter UI validation cycle without requiring a custom browser harness.

breaking

breakingMalicious LiteLLM 1.82.7 and 1.82.8 releases executed .pth startup code to steal credentials and were quarantined after disclosure. Rotate secrets, audit transitive AI-tooling dependencies, and add package-age controls before letting agents install packages autonomously.

breaking

breakingTurboQuant claims 6x KV-cache memory reduction and up to 8x faster attention on H100s without retraining or quality loss on long-context tasks. If those results hold in serving stacks, teams should revisit long-context cost, capacity, and vector-search design.

release

releaseOpenCode is adding remote sandboxes, synced state across laptop, server, and cloud, and more product surface inside its plugin system. That makes long-running off-laptop workflows more practical, but operators should still review telemetry, sandbox, and exposure defaults.

release

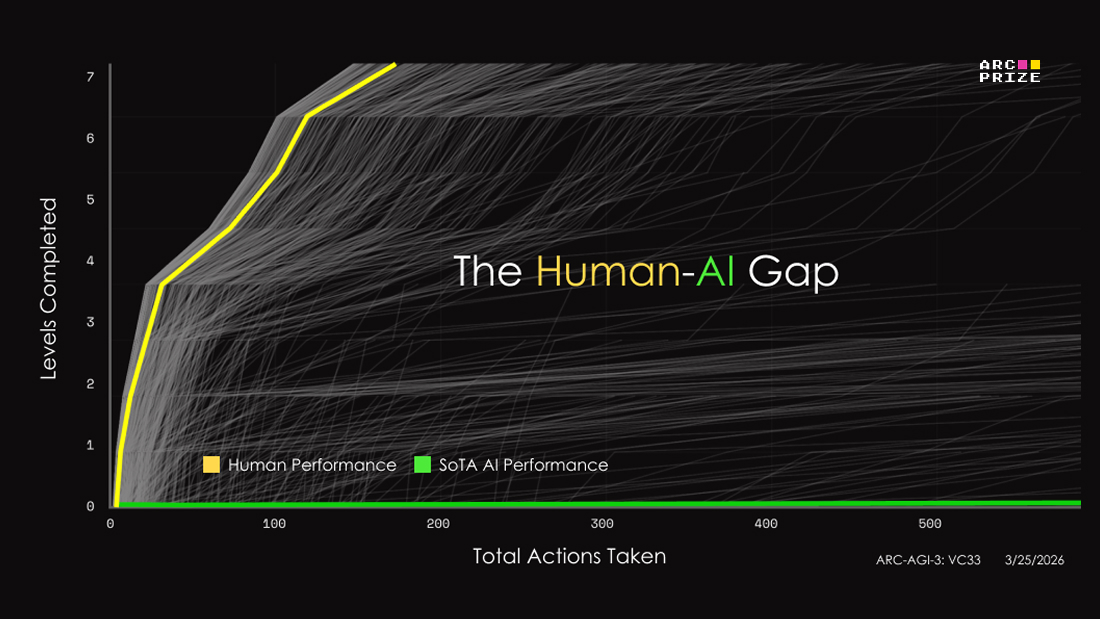

releaseARC-AGI-3 swaps static puzzles for interactive game-like environments and posts initial frontier scores below 1%, with Gemini 3.1 Pro at 0.37%. Teams can use it to inspect agent reasoning, but score interpretation still depends heavily on the human-efficiency metric and no-harness setup.

Claude Code 2.1.84 has been released. 8 flag changes, 40 CLI changes, 5 system prompt changes Highlights: • Added opt-in PowerShell tool for Windows, enabling PowerShell commands from the CLI for Windows automation • Critical-files output lists only file paths, removing brief Show more

New on the Engineering Blog: How we designed Claude Code auto mode. Many Claude Code users let Claude work without permission prompts. Auto mode is a safer middle ground: we built and tested classifiers that make approval decisions instead. Read more: anthropic.com/engineering/cl…

imessage is now available as a channel!

i bought a mac mini so i could have blue bubbles when texting claude and it started roasting me... try the imessage plugin for claude code today with /plugin install imessage@claude-plugins-official

Claude Code 2.1.84 system prompt updates Notable changes: 1) Claude no longer gets an explicit “Avoid over-engineering” top-level rule. The remaining guidance (no extra features/refactors, no impossible-case validation, no one-off abstractions) stays, but loses the overarching Show more