OpenCode is adding remote sandboxes and synced state across laptop, server, and cloud, and it is moving more of its product surface into its plugin system. That makes long-running, off-laptop workflows more practical, but operators should still review the telemetry, sandbox, and exposure defaults.

Existing building blocks include opencode serve, multi-backend WebUI setups, and local-model support; the new capability is distributed execution. In thdxr's description, agents can run "on your laptop, on a remote server, in a cloud sandbox provider," and if you "shut your laptop" the work keeps running; when you reopen it, "all the data syncs" (distributed OpenCode). A follow-up post extends that model to multiple controller devices, including a phone acting as a remote controller or a cloud-hosted "home server" coordinating other clients (device configs).
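The "shut your laptop, reopen, and everything syncs" model implies some reconciliation step when a client reconnects. OpenCode's actual sync protocol is not described here, so the following is only a minimal TypeScript sketch under an assumed design: each session is an append-only log of events with unique ids, the remote runner keeps appending while the laptop is closed, and reopening merges the two copies. `SessionEvent`, `mergeLogs`, and their fields are hypothetical names, not OpenCode's data model.

```typescript
// Hypothetical event shape (assumption): not OpenCode's real data model.
interface SessionEvent {
  id: string; // globally unique event id (assumed)
  seq: number; // position in the session log, assigned by the runner (assumed)
  payload: string; // message or tool result, simplified to text
}

// Merge the laptop's copy of a session log with the remote runner's copy.
// Events are deduplicated by id and ordered by seq, so syncing twice
// produces the same result as syncing once (the merge is idempotent).
function mergeLogs(local: SessionEvent[], remote: SessionEvent[]): SessionEvent[] {
  const byId = new Map<string, SessionEvent>();
  for (const event of [...local, ...remote]) {
    if (!byId.has(event.id)) byId.set(event.id, event);
  }
  return Array.from(byId.values()).sort((a, b) => a.seq - b.seq || a.id.localeCompare(b.id));
}

// Usage: the laptop saw two events before closing; the remote runner kept going.
const laptop: SessionEvent[] = [
  { id: "e1", seq: 1, payload: "user: fix the failing test" },
  { id: "e2", seq: 2, payload: "agent: running tests" },
];
const remote: SessionEvent[] = [
  { id: "e1", seq: 1, payload: "user: fix the failing test" },
  { id: "e2", seq: 2, payload: "agent: running tests" },
  { id: "e3", seq: 3, payload: "agent: patched src/parser.ts" },
];
const synced = mergeLogs(laptop, remote);
console.log(synced.map((e) => e.id).join(",")); // e1,e2,e3
```

In this simplified model, deleting a sandbox loses nothing only if another replica (the laptop or a server) already holds the full log, which is the kind of remote-deletion edge case the demo calls difficult.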

That matters because OpenCode already spans terminal, IDE, and desktop usage, with support for LSP, multi-session work, shareable session links, and 75-plus model providers including local models, according to the product page. In the Hacker News thread, practitioners also describe opencode serve as reachable "from anywhere," working well with Tailscale, and letting the WebUI connect to multiple backends at once (remote-workflow comment).

OpenCode is also refactoring around plugins instead of treating them as an edge feature. Thdxr says the team now has "most features" in place and is decomposing them into plugins, because the plugin API "never become very good" while the team "didn't have to use" it; from here, "all new features" are expected to ship as internal plugins (plugin pass). An OpenCode repost adds the operational detail that features in OpenCode itself can be activated or deactivated at runtime, the same way as external plugins (internal plugins).
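The posts describe one mechanism for built-in and external features: each is a plugin that can be switched on or off while the process runs. The TypeScript sketch below illustrates that general pattern only; it is not OpenCode's plugin API, and `Plugin`, `PluginRegistry`, `onToolCall`, and the plugin names are all hypothetical.

```typescript
// Hypothetical plugin shape (assumption): OpenCode's real plugin API is not shown here.
interface Plugin {
  name: string;
  internal: boolean; // built-in feature vs. third-party plugin
  onToolCall?: (tool: string) => string | undefined;
}

class PluginRegistry {
  private plugins = new Map<string, Plugin>();
  private active = new Set<string>();

  register(plugin: Plugin, enabled = true): void {
    this.plugins.set(plugin.name, plugin);
    if (enabled) this.active.add(plugin.name);
  }

  // Toggling is identical for internal and external plugins.
  setEnabled(name: string, enabled: boolean): void {
    if (!this.plugins.has(name)) throw new Error(`unknown plugin: ${name}`);
    if (enabled) this.active.add(name);
    else this.active.delete(name);
  }

  // Dispatch a hook only to plugins that are currently active,
  // in the order they were (re)activated.
  toolCall(tool: string): string[] {
    const notes: string[] = [];
    for (const name of Array.from(this.active)) {
      const note = this.plugins.get(name)?.onToolCall?.(tool);
      if (note !== undefined) notes.push(`${name}: ${note}`);
    }
    return notes;
  }
}

const registry = new PluginRegistry();
registry.register({ name: "git-review", internal: true, onToolCall: (t) => `saw ${t}` });
registry.register({ name: "audit-log", internal: false, onToolCall: (t) => `logged ${t}` });
console.log(registry.toolCall("bash").join(" | ")); // git-review: saw bash | audit-log: logged bash
registry.setEnabled("git-review", false); // deactivate a built-in feature at runtime
console.log(registry.toolCall("bash").join(" | ")); // audit-log: logged bash
```

Routing built-in features through the same registry as third-party code is what forces the plugin API to be good: any hook a core feature needs must exist for external plugins too.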

The plugin design already has real user adoption behind it. In the Hacker News thread, one developer says OpenCode is their primary harness for llama.cpp, Claude, and Gemini, and that they built a self-modifying hook system over IPC via a plugin (extensibility comment). Another says a plugin that prunes and retrieves conversation history feels like an "infinite context window" (memory plugin comment).
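The commenter's memory plugin is not shown, so the following is only a sketch of the general prune-and-retrieve idea: keep the newest messages that fit a token budget, archive older ones, and pull archived messages back by keyword. Token counts are approximated by whitespace-separated words, and `pruneHistory` and `retrieve` are hypothetical names; a real plugin would use the model's tokenizer and probably embedding search rather than keyword matching.

```typescript
interface Message {
  role: "user" | "assistant";
  text: string;
}

// Crude token estimate (assumption): whitespace-separated words, not a real tokenizer.
const tokens = (m: Message): number => m.text.split(/\s+/).filter(Boolean).length;

// Keep the newest contiguous run of messages that fits within `budget`;
// everything older moves to the archive.
function pruneHistory(history: Message[], budget: number): { context: Message[]; archive: Message[] } {
  let used = 0;
  let cut = history.length;
  for (let i = history.length - 1; i >= 0; i--) {
    const cost = tokens(history[i]);
    if (used + cost > budget) break;
    used += cost;
    cut = i;
  }
  return { context: history.slice(cut), archive: history.slice(0, cut) };
}

// Naive retrieval: archived messages that contain every query keyword.
function retrieve(archive: Message[], query: string): Message[] {
  const words = query.toLowerCase().split(/\s+/).filter(Boolean);
  return archive.filter((m) => words.every((w) => m.text.toLowerCase().includes(w)));
}

const chat: Message[] = [
  { role: "user", text: "the database port is 5433 not 5432" },
  { role: "assistant", text: "noted, updating config" },
  { role: "user", text: "now add a retry to the http client" },
  { role: "assistant", text: "added exponential backoff" },
];
const { context, archive } = pruneHistory(chat, 12);
console.log(context.length, archive.length); // 2 2
console.log(retrieve(archive, "database port")[0]?.text); // the database port is 5433 not 5432
```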

Posted by rbanffy

OpenCode looks most relevant as an AI agent harness: it supports many providers, local models, LSP, remote access, and plugin-based workflow customization. The thread’s useful signals for engineers are around deployment patterns (Tailscale, homelabs, containers), safety boundaries (bubblewrap sandboxing), and operational concerns like telemetry, proxying, breakage across releases, and memory use.

The product story is stronger than the operational story right now. The public demo language around remote sandboxes stresses that the current UX is "temporary" and only demonstrates the primitives (distributed OpenCode), so deployment details still look in flux.

Operators also have a few concrete checks to make. The Hacker News discussion praises OpenCode's deployment flexibility, including homelabs, containers, and bubblewrap sandboxing, but it also raises a telemetry concern: one commenter says OpenCode sends telemetry to its own servers "even with local models," with "no option to disable it" (thread highlights). Separately, the next desktop release broadens the review surface by showing Git and branch changes in the review panel (review panel demo, plus an attached video).

OpenCode is an open source AI coding agent that helps developers write code from the terminal, an IDE, or a desktop app. Key features include LSP support, multi-session capability, shareable session links, integration with GitHub Copilot and ChatGPT Plus/Pro, compatibility with 75+ LLM providers including local models, and a privacy-first design with no code or context storage. The project lists 120,000 GitHub stars, 800 contributors, 10,000+ commits, and 5M monthly users. It offers free models, or Zen for curated, benchmarked coding models, and can be installed via curl, npm, brew, and other methods.

Thread discussion highlights:
- everlier on remote/web workflows: opencode serve can be accessed from anywhere, works well with Tailscale, and the WebUI can connect to multiple backends at once.
- anonym29 on telemetry and privacy: OpenCode is said to send telemetry to its own servers even with local models, with no option to disable it.
- khimaros on plugins and extensibility: uses OpenCode as a primary harness for llama.cpp, Claude, and Gemini, and built a plugin for a self-modifying hook system over IPC.

Expect wraps browser QA for Claude Code, Codex, or Cursor into a CLI that records bug videos and feeds failures back into a fix loop. It gives coding agents a tighter UI validation cycle without requiring a custom browser harness.

Malicious LiteLLM 1.82.7 and 1.82.8 releases executed .pth startup code to steal credentials and were quarantined after disclosure. Rotate secrets, audit transitive AI-tooling dependencies, and add package-age controls before letting agents install packages autonomously.
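Neither control is spelled out above, so the TypeScript sketch below shows one possible shape for each, under stated assumptions. The first is a minimum-age gate on a release's publish time; the seven-day threshold is hypothetical, and fetching the timestamp from the package index is left out. The second scans .pth file contents for lines that CPython's `site` module executes at interpreter startup, which per the Python documentation are lines beginning with `import` followed by a space or tab. `isOldEnough` and `executablePthLines` are illustrative names, not part of any existing tool.

```typescript
const DAY_MS = 24 * 60 * 60 * 1000;

// Hypothetical policy: refuse releases younger than `minAgeDays`, giving the
// ecosystem time to spot and quarantine a malicious upload.
function isOldEnough(publishedAt: Date, now: Date, minAgeDays: number): boolean {
  return now.getTime() - publishedAt.getTime() >= minAgeDays * DAY_MS;
}

// CPython's site module exec()s .pth lines that start with "import " or "import\t"
// at startup; every such line is arbitrary code and deserves review.
function executablePthLines(pthContent: string): string[] {
  return pthContent
    .split(/\r?\n/)
    .filter((line) => line.startsWith("import ") || line.startsWith("import\t"));
}

const now = new Date("2026-01-10T00:00:00Z");
console.log(isOldEnough(new Date("2026-01-09T12:00:00Z"), now, 7)); // false (half a day old)
console.log(isOldEnough(new Date("2025-12-01T00:00:00Z"), now, 7)); // true
console.log(executablePthLines("/opt/extra/site-dir\nimport os; print('startup code runs here')\n").length); // 1
```

A plain path line in a .pth file only extends `sys.path`, so flagging just the `import` lines keeps the audit focused on code that actually runs.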

TurboQuant claims a 6x KV-cache memory reduction and up to 8x faster attention on H100s, without retraining and without quality loss on long-context tasks. If those results hold in serving stacks, teams should revisit long-context cost, capacity, and vector-search design.
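To see what a 6x KV-cache reduction means for capacity, the usual per-sequence estimate is 2 (keys and values) × layers × KV heads × head dimension × sequence length × bytes per element. The TypeScript sketch below applies it to a hypothetical model (32 layers, 8 KV heads, head dimension 128, 16-bit cache) at 8,192 tokens. The model shape is an assumption, and the 6x factor is simply TurboQuant's claim applied as a divisor, not a measurement.

```typescript
// Standard per-sequence KV-cache size estimate for a decoder-only transformer.
function kvCacheBytes(layers: number, kvHeads: number, headDim: number, seqLen: number, bytesPerElem: number): number {
  return 2 * layers * kvHeads * headDim * seqLen * bytesPerElem; // 2 = keys + values
}

const GiB = 1024 ** 3;
// Hypothetical model shape with a 16-bit (2-byte) cache.
const baseline = kvCacheBytes(32, 8, 128, 8192, 2);
console.log(baseline / GiB); // 1
console.log((baseline / 6 / GiB).toFixed(3)); // 0.167 (claimed 6x reduction applied)
// Equivalently, a 6x cut from 16-bit storage averages 16 / 6 ≈ 2.67 bits per cached element.
```

Because the cache grows linearly with sequence length and batch size, the same ratio applies to concurrent long-context sessions: the memory that held one 8K-token sequence would hold roughly six under the claimed reduction.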

release

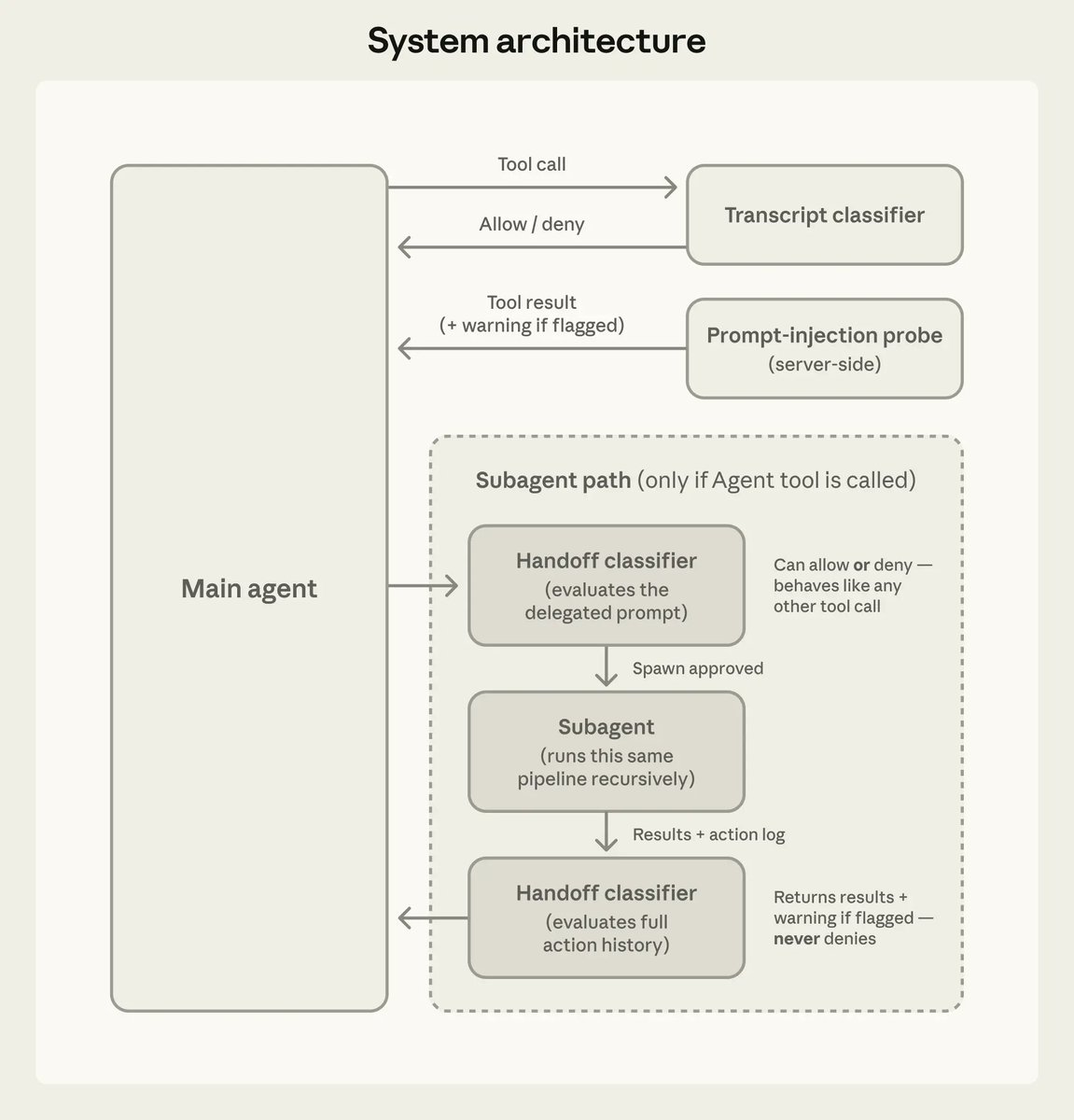

Claude Code 2.1.84 adds an opt-in PowerShell tool, new task and worktree hooks, safer MCP limits, and better startup and prompt-cache behavior. Anthropic also documented auto mode’s action classifier and added iMessage as a channel, so teams should review permissions and remote-control workflows.

release

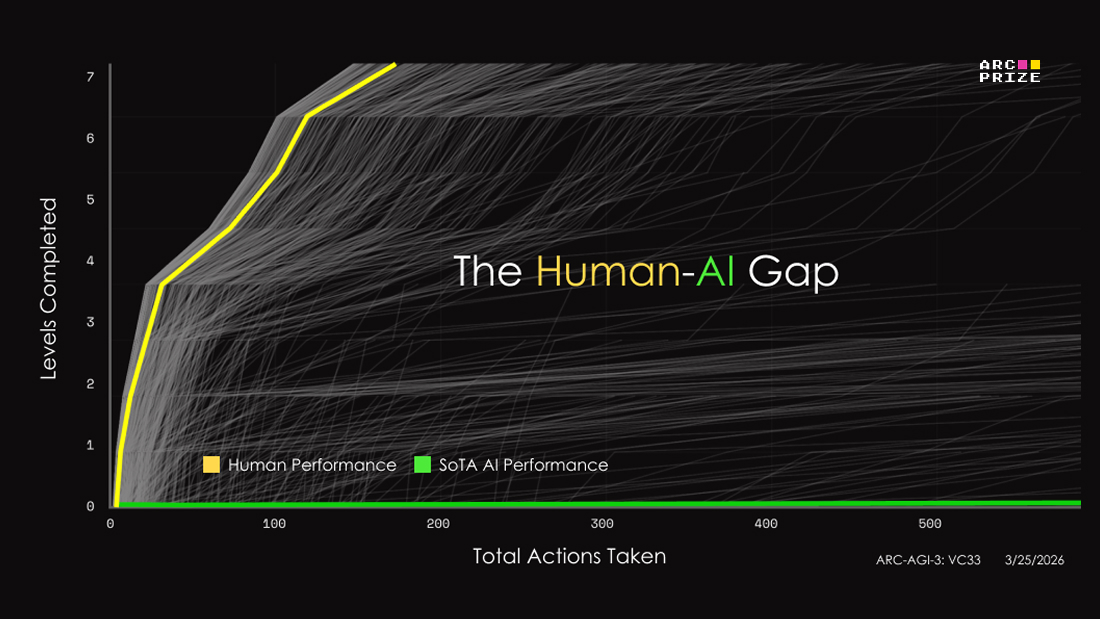

ARC-AGI-3 swaps static puzzles for interactive, game-like environments and posts initial frontier scores below 1%, with Gemini 3.1 Pro at 0.37%. Teams can use it to inspect agent reasoning, but score interpretation still depends heavily on the human-efficiency metric and the no-harness setup.

james has achieved distributed opencode
agents can run on your laptop, on a remote server, in a cloud sandbox provider
shut your laptop and things keep running
open it back up and all the data syncs
delete the sandbox nothing is lost

OpenCode is about to get more powerful with remote sandboxes
I showed a brief demo before, but here's a much more in-depth demo. it's not hard to add basic support for a remote env, but handling all the edge cases like when a remote env gets deleted is difficult. especially if

now that we have most features we want implemented, we're doing a pass to decompose them all into plugins
since we didn't have to use the plugin api, it never become very good
now all new features we want to build will be internal plugins, so the plugin api will have to be good

Features in OpenCode itself will be internal plugins and can be activated/deactivated at runtime. Same as external plugins. This will allow for reloading plugins at runtime. Trying to tweak the DX a little more. Almost ready to go.