Malicious LiteLLM 1.82.7 and 1.82.8 releases executed .pth startup code to steal credentials and were quarantined after disclosure. Rotate secrets, audit transitive AI-tooling dependencies, and add package-age controls before letting agents install packages autonomously.

- Versions 1.82.7 and 1.82.8 shipped a malicious litellm_init.pth file that runs on Python startup and can steal SSH keys, environment variables, and cloud credentials, with exfiltration reportedly sent to models.litellm.cloud according to the disclosure issue.
- If you installed either version, delete the .pth file and rotate credentials, because the incident report treats all exposed secrets as compromised.
- Audit more than direct pip install usage: the HN discussion notes LiteLLM is often a transitive dependency in AI tooling, and one commenter described it as being pulled in by a Cursor MCP plugin.

Posted by dot_treo

GitHub issue #24512 discloses a supply chain attack in the LiteLLM PyPI package, versions 1.82.7 and 1.82.8. A malicious litellm_init.pth file (SHA256: ceNa7wMJnNHy1kRnNCcwJaFjWX3pORLfMh7xGL8TUjg) executes automatically on Python startup, steals credentials such as SSH keys, environment variables, and cloud credentials, and exfiltrates them to models.litellm.cloud. The issue attributes the attack to TeamPCP, acting through a maintainer account compromised in the prior Trivy attack. PyPI quarantined the package, and users are urged to check versions, delete .pth files, and rotate all credentials. Follow-up issue #24518 provides the team response and timeline.

The core disclosure says versions 1.82.7 and 1.82.8 on PyPI included a malicious litellm_init.pth file with a published SHA256 hash, and that the payload "executes automatically on Python startup" (disclosure issue). That matters because .pth execution turns a normal dependency install into code execution before an application even imports LiteLLM.
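The startup behavior comes from Python's `site` module, which reads every `.pth` file in site-packages and executes any line that begins with `import` followed by a space or tab. The following is a minimal sketch, not an official detection tool, that lists such lines for the current interpreter. The helper names (`executable_pth_lines`, `site_dirs`, `scan`) are illustrative, and a hit is not proof of compromise, since legitimate packages also ship `.pth` files with import lines.

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(pth_path):
    """Return the lines of a .pth file that Python's site module would execute.

    site.py executes lines that begin with "import" followed by a space or
    tab; other non-comment lines are treated as path entries.
    """
    text = Path(pth_path).read_text(encoding="utf-8", errors="replace")
    return [ln for ln in text.splitlines() if ln.startswith(("import ", "import\t"))]


def site_dirs():
    """Collect the site-packages directories of the current interpreter."""
    paths = sysconfig.get_paths()
    dirs = {paths["purelib"], paths["platlib"], site.getusersitepackages()}
    try:
        dirs.update(site.getsitepackages())
    except AttributeError:  # some older virtualenv layouts lack this function
        pass
    return sorted(Path(d) for d in dirs if Path(d).is_dir())


def scan(dirs=None, suspect_name="litellm_init.pth"):
    """Yield (path, executable_lines, matches_disclosed_filename) per .pth file."""
    search_dirs = site_dirs() if dirs is None else dirs
    for d in search_dirs:
        for pth in sorted(Path(d).glob("*.pth")):
            yield pth, executable_pth_lines(pth), pth.name == suspect_name


if __name__ == "__main__":
    for path, code_lines, flagged in scan():
        if flagged or code_lines:
            note = "FILENAME FROM DISCLOSURE" if flagged else "has executable lines"
            print(f"{path}: {note} ({len(code_lines)} import line(s))")
```

Run it once per environment (each virtualenv, container image, and CI runner has its own site-packages), because the scan only covers the interpreter that executes it.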

According to the core summary, the reported target data included "SSH keys, env vars, cloud creds," making this a broad workstation and CI secret exposure event rather than a narrowly scoped library bug. The maintainers attributed the attack to TeamPCP via a compromised maintainer account from the earlier Trivy incident, though that attribution is still reported through the incident thread rather than an independent public forensic writeup (disclosure issue).

The immediate response is also unusually blunt. The maintainer issue says the package was quarantined on PyPI and urges users to check versions, delete the .pth file, and rotate all credentials. OpenHands said its production environments were unaffected but that it was still investigating developer exposure, adding that open source contributors who "bypassed the lockfile" during dependency installs should check whether they were affected (OpenHands exposure note).
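The "check versions" step can be sketched with the standard library alone. This is an illustrative check, not the maintainers' tooling: the constant `AFFECTED_VERSIONS` simply restates the two versions named in the disclosure, and the function names are hypothetical.

```python
from importlib import metadata

# The two releases the disclosure issue names as malicious.
AFFECTED_VERSIONS = {"1.82.7", "1.82.8"}


def installed_version(package="litellm"):
    """Return the installed version string, or None if the package is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def is_affected(version):
    """True if the version string matches one of the reported bad releases."""
    return version is not None and version.strip() in AFFECTED_VERSIONS


if __name__ == "__main__":
    found = installed_version()
    if found is None:
        print("litellm is not installed in this environment")
    elif is_affected(found):
        print(f"litellm {found} is one of the reported malicious releases: "
              "delete the .pth file and rotate credentials")
    else:
        print(f"litellm {found} is not one of the two reported versions")
```

A clean result only describes the environment as it is now. It says nothing about an affected version that was installed earlier and later upgraded or removed, so lockfile history and CI logs are still worth reviewing.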


Today’s new discussion shifted from the initial breach report toward mitigation and blast-radius questions. People shared package-age controls for npm/pnpm/uv/bun, pointed to analysis and checking tools for determining exposure, and noted that LiteLLM is often a transitive dependency pulled in by other AI tooling rather than a direct install. There was also renewed focus on agent risk: commenters tied the incident to autonomous systems that can run installs on their own, and one commenter noted the thread appears to have been spam-flooded by the attacker to obscure information sharing, similar to the earlier Trivy incident.

The most useful engineering takeaway from the follow-on discussion is that this was not treated as just another Python package incident. The core thread summary frames package supply-chain risk as part of the AI tooling stack itself, especially where model gateways, MCP servers, plugins, and local coding environments pull in fast-moving dependencies.

That shows up in two practical ways. First, the discussion summary says LiteLLM is often installed transitively; one commenter wrote that it was "pulled in by a Cursor MCP plugin," which is exactly the kind of dependency chain many teams will not audit line by line. Second, the fresh thread says commenters pushed release-age controls across npm, pnpm, uv, and bun, with one suggestion to "set min release age to 7 days."
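The release-age idea can be illustrated independently of any one package manager. The sketch below does not reproduce the npm, pnpm, uv, or bun settings the commenters referred to; it assumes PyPI's JSON API, which exposes an `upload_time_iso_8601` field per release file, and refuses any release whose newest file is younger than a threshold. The function names and the 7-day default are illustrative.

```python
import json
import urllib.request
from datetime import datetime, timedelta, timezone


def parse_upload_time(stamp):
    """Parse an ISO 8601 timestamp such as '2025-01-02T03:04:05.000000Z'."""
    parsed = datetime.fromisoformat(stamp.replace("Z", "+00:00"))
    # Treat naive timestamps as UTC so comparisons never mix naive and aware.
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def old_enough(upload_times, min_age_days=7, now=None):
    """True if every file of a release was uploaded at least min_age_days ago."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=min_age_days)
    times = [parse_upload_time(t) for t in upload_times]
    # An empty list means nothing is known about the release, so refuse it.
    return bool(times) and max(times) <= cutoff


def fetch_upload_times(package, version):
    """Read upload timestamps from PyPI's JSON API (needs network access)."""
    url = f"https://pypi.org/pypi/{package}/{version}/json"
    with urllib.request.urlopen(url, timeout=10) as resp:
        data = json.load(resp)
    return [f["upload_time_iso_8601"] for f in data["urls"]]
```

A caller would combine the two, for example `old_enough(fetch_upload_times(name, version))`, before allowing an install. The delay buys time for a malicious release to be reported and quarantined before it reaches a build, at the cost of receiving legitimate fixes a few days late.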

The agent angle is the sharper warning. In the same discussion, a commenter wrote, "A compromised package is bad. An agent that autonomously runs pip install with that package is a different problem" (discussion summary). That captures why this incident lands squarely in AI operations: the risk is not only what developers install directly, but what semi-autonomous tooling is allowed to fetch and execute inside dev boxes, ephemeral sandboxes, and CI jobs.
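One way to narrow what an agent may install is to review its proposed shell commands before they run. The sketch below is a hypothetical guard, not a feature of any agent framework: `APPROVED_PINS`, `check_pip_install`, and the package name `examplepkg` are invented for illustration, and the check only recognizes simple `pip install` invocations.

```python
import shlex

# Hypothetical allowlist of exact pins that a human has already reviewed.
APPROVED_PINS = frozenset({"examplepkg==1.0.0"})


def check_pip_install(command, approved=APPROVED_PINS):
    """Return a list of reasons to block a command; an empty list means none found.

    Scope is deliberately narrow: only commands containing both a pip
    executable name and the word "install" are inspected. Other commands
    are out of scope here and return an empty list.
    """
    tokens = shlex.split(command)
    is_pip = any(t in ("pip", "pip3") for t in tokens)
    if not is_pip or "install" not in tokens:
        return []
    args = tokens[tokens.index("install") + 1:]
    if not args:
        return ["no packages specified"]
    reasons = []
    for arg in args:
        if arg.startswith("-"):
            # Options can change indexes or sources, so escalate to a human.
            reasons.append(f"option {arg!r} needs human review")
        elif arg.lower() not in approved:
            reasons.append(f"{arg!r} is not an approved exact pin")
    return reasons
```

For example, `check_pip_install("pip install litellm")` returns one reason because the request is unpinned and unapproved. A production guard would also need to handle requirement files, name normalization, and other installers, and would pair this with sandboxing rather than replace it.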


Thread discussion highlights:
- postalcoder on dependency hygiene and release-age controls: "npm/bun/pnpm/uv now all support setting a minimum release age for packages... set min release age to 7 days"
- Bullhorn9268 on incident analysis and detection tools: "we did a small analysis here ... and also build this mini tool to analyze the likelihood of you getting pwned through this"
- n1tro_lab on transitive AI dependencies: "LiteLLM is a transitive dependency... pulled in by a Cursor MCP plugin... these packages get pulled in as dependencies of dependencies"


For AI engineers, the key takeaway is that package supply-chain risk is now part of the model/tooling stack, not just general Python security. The discussion highlights mitigations like release-age gating, tighter dependency review, and being careful with transitive installs in agentic or MCP-based workflows.

PlayerZero launched an AI production engineer and claims its world model can simulate failures before release, trace incidents to exact PRs, and beat existing tools on real production test cases. If those numbers hold, the interesting shift is from code generation to debugging, testing, and observability after code ships.

breaking

breakingTurboQuant claims 6x KV-cache memory reduction and up to 8x faster attention on H100s without retraining or quality loss on long-context tasks. If those results hold in serving stacks, teams should revisit long-context cost, capacity, and vector-search design.

release

releaseOpenCode is adding remote sandboxes, synced state across laptop, server, and cloud, and more product surface inside its plugin system. That makes long-running off-laptop workflows more practical, but operators should still review telemetry, sandbox, and exposure defaults.

release

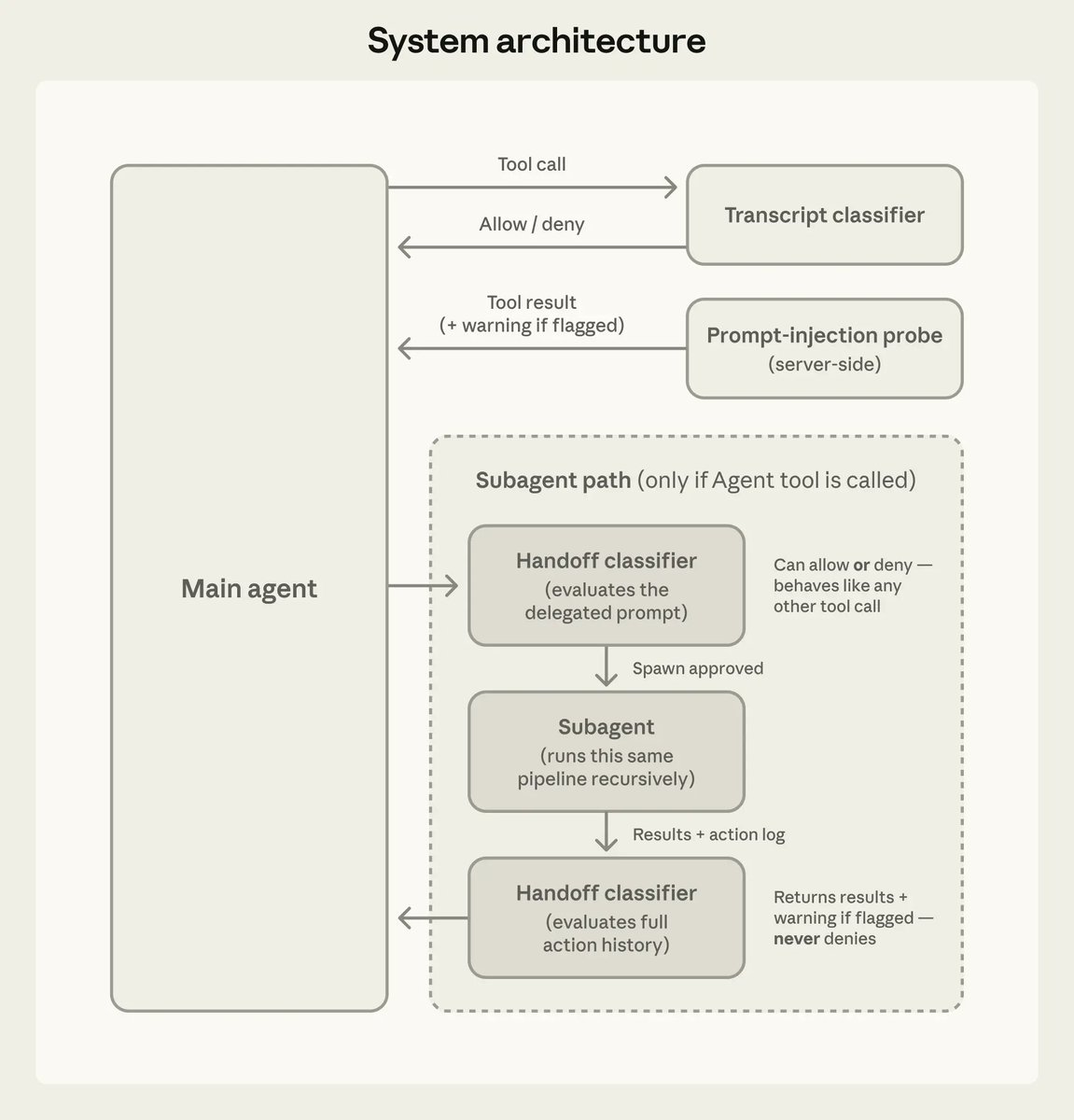

releaseClaude Code 2.1.84 adds an opt-in PowerShell tool, new task and worktree hooks, safer MCP limits, and better startup and prompt-cache behavior. Anthropic also documented auto mode’s action classifier and added iMessage as a channel, so teams should review permissions and remote-control workflows.

release

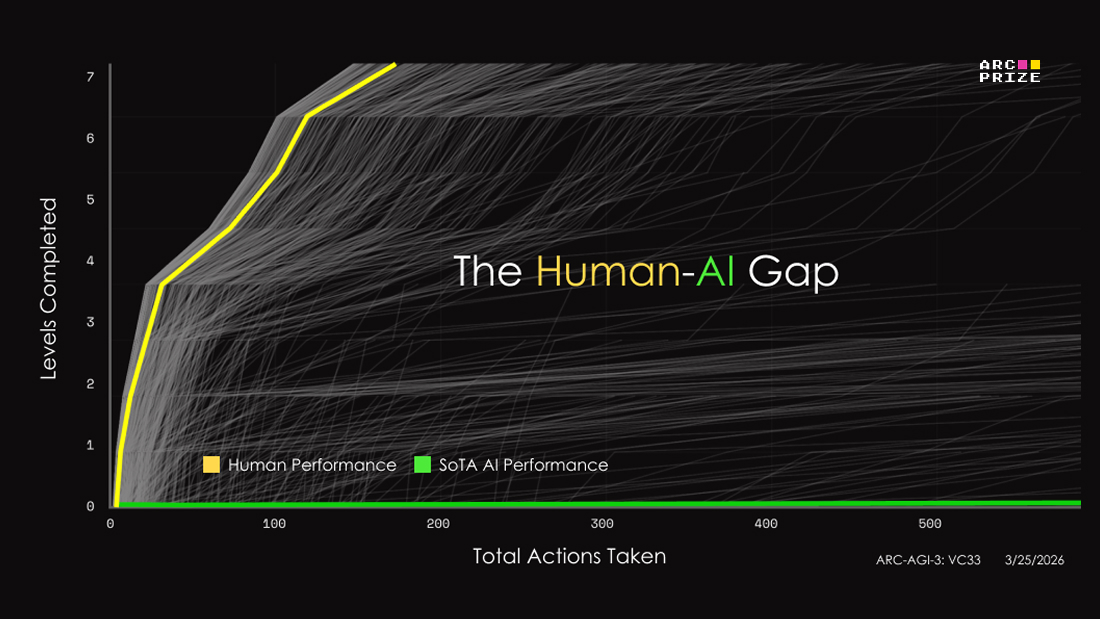

releaseARC-AGI-3 swaps static puzzles for interactive game-like environments and posts initial frontier scores below 1%, with Gemini 3.1 Pro at 0.37%. Teams can use it to inspect agent reasoning, but score interpretation still depends heavily on the human-efficiency metric and no-harness setup.

AI can generate thousands of lines of code in minutes. Shipping code that actually works is the hard part. Next Tuesday, Ray Myers from OpenHands is hosting a webinar on high-quality agentic coding: how to use agents without drowning in bugs. RSVP link in reply.