Claude Code prompt kits surface – 20+ tool system prompts circulate


Executive Summary

Claude Code’s ecosystem tilted toward “open” copy/paste infrastructure: a community best-practice repo bundles Agents, slash Commands, persistent Memory, event-triggered Hooks, and installable Skills into a reusable convention; in parallel, a separate GitHub repo is being circulated claiming leaked internal system prompts for 20+ coding tools (Cursor, Windsurf, Devin, Replit, Claude Code, v0, Manus, Perplexity-style agents). The leak framing is unverified from the posts alone; usefulness hinges on authenticity and recency, but the meta-signal is teams reverse-engineering agent behavior via prompt archaeology rather than vendor docs.

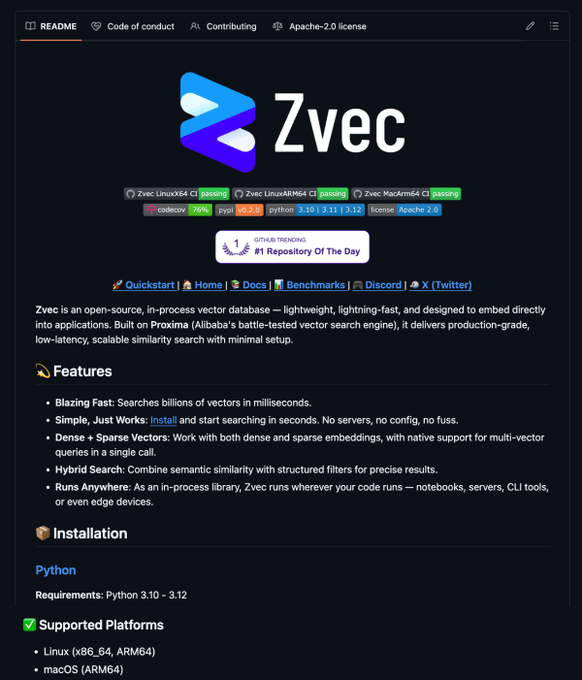

• Alibaba/Zvec: pitches an embedded vector DB (pip install zvec) with dense+sparse+hybrid search; claims “billions of vectors in milliseconds,” but no independent benchmark artifact appears in-thread.

• OpenClaw × Obsidian: memory routed into a note store for daily news/forum scans; output framed as ~10 loglines/day with genre tags and source links.

• Adoption stats meme: “84% never used AI; 0.3% pay for premium” circulates without methodology; repeated anyway as a market-timing shorthand.

Across coding agents and creator workflows, the common pattern is packaging: durable scaffolds, reusable prompts, and external memory layers; provenance and eval receipts remain the missing piece.


Top links today

- Claude Code best practices repo

- Leaked system prompts for AI coding tools

- Zvec embedded vector database repo

- Typeless voice-to-writing app

- fal serverless AI inference platform

- MakerWorld 3D printing models library

- Meshy text-to-3D product page

- Stages AI creative platform product page

- Pictory AI video for L&D overview

- Hugging Face papers weekly roundup

- World Building Codex assets library

- Freepik Spaces AI timelapse workflow tool

Feature Spotlight

AI coding tools go “open”: Claude Code best-practice kits + internal prompt leaks

A creator arms race: Claude Code playbook repos + leaked system prompts make agent behaviors reproducible—helping filmmakers/designers ship tools, pipelines, and autonomous “coding systems” faster (and understand why they break).

Continues the creator-coder wave from earlier this week, but today the emphasis shifts to copy/paste infrastructure: a Claude Code production playbook repo, plus a separate repo claiming leaked system prompts for Cursor/Windsurf/Devin/Claude Code and others—useful for building your own agents and understanding failure modes.


🧑‍💻 AI coding tools go “open”: Claude Code best-practice kits + internal prompt leaks


Repo claims leaked internal system prompts for Cursor, Windsurf, Devin, Claude Code, more

AI coding agents (system prompt collection): A GitHub repo is being circulated claiming to contain internal “system prompts” for 20+ coding tools—explicitly naming Cursor, Windsurf, Devin, Lovable, Replit, Claude Code, v0, Manus, and Perplexity-style agents in the post Leak claim and tool list, with a direct repo pointer repeated later Repo link repost.

• What creators use it for: reverse-engineering why certain tools fail (task framing, tool-calling rules, safety rails) and borrowing proven agent instructions when building in-house helpers, as framed in the original thread Leak claim and tool list.

Treat the “leaked” framing as unverified from these tweets alone; the artifact’s usefulness depends on authenticity and freshness.

Alibaba’s Zvec pitches an embedded vector DB: pip install, hybrid dense+sparse search

Zvec (Alibaba): A new open-source vector database is being promoted as running “inside your application” with no server setup; the thread claims you can pip install zvec and search quickly, with dense + sparse + hybrid retrieval in one API, built on Alibaba’s Proxima engine Zvec feature claims and echoed again with the repo pointer Repo repost.

The thread also claims “billions of vectors in milliseconds” and “searching in under 60 seconds” Zvec feature claims, but no independent benchmark artifact is included in these tweets.
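For readers unfamiliar with the term, "hybrid" retrieval usually means blending a dense (embedding similarity) score with a sparse (keyword overlap) score. The sketch below illustrates that idea in plain Python. It is not Zvec's API, which the thread does not show; the function names, the `alpha` weight, and the toy documents are all hypothetical.

```python
import math
from collections import Counter


def cosine(a, b):
    """Dense score: cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def sparse_overlap(query, text):
    """Sparse score: fraction of query terms that also appear in the text."""
    q = Counter(query.lower().split())
    d = Counter(text.lower().split())
    shared = sum(min(q[t], d[t]) for t in q)
    return shared / sum(q.values()) if q else 0.0


def hybrid_search(query_text, query_vec, docs, alpha=0.5, top_k=3):
    """Rank docs by alpha * dense + (1 - alpha) * sparse (alpha is an assumption)."""
    scored = []
    for doc in docs:
        dense = cosine(query_vec, doc["vec"])
        sparse = sparse_overlap(query_text, doc["text"])
        scored.append((alpha * dense + (1 - alpha) * sparse, doc["id"]))
    scored.sort(reverse=True)
    return scored[:top_k]


# Toy corpus with hand-written 2-d "embeddings" (hypothetical data).
DOCS = [
    {"id": "a", "text": "embedded vector database", "vec": [1.0, 0.0]},
    {"id": "b", "text": "serverless inference platform", "vec": [0.0, 1.0]},
]
results = hybrid_search("vector database", [1.0, 0.0], DOCS)
```

A real engine would replace the linear scan with an index; the point here is only how the two signals combine into one ranking.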

Claude Code best-practice repo packages Agents, Commands, Memory, Hooks, Skills

Claude Code (community repo): A new open-source repository is being shared as a “production-ready” starter kit for turning Claude Code into a more structured agent system—bundling reusable Agents, slash Commands, persistent Memory, event-triggered Hooks, and installable Skills, as described in the repo walkthrough thread Repo feature rundown and the follow-up share Repo link repost.

The practical value here is less “new capability” and more copy/paste scaffolding: a concrete convention for how to name, store, and reuse agent behaviors across projects, without rebuilding your setup each time.
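To make the "convention" idea concrete, here is a minimal sketch that checks whether a project contains the five building blocks. The paths are an assumption based on Claude Code's usual project layout (`.claude/agents`, `.claude/commands`, `.claude/skills`, hooks in `.claude/settings.json`, memory in `CLAUDE.md`); the repo discussed here may organize things differently, and `audit_layout` is a hypothetical helper, not something the repo ships.

```python
import tempfile
from pathlib import Path

# Assumed locations for each building block; adjust to the repo you adopt.
EXPECTED = {
    "agents": ".claude/agents",
    "commands": ".claude/commands",
    "skills": ".claude/skills",
    "hooks": ".claude/settings.json",
    "memory": "CLAUDE.md",
}


def audit_layout(project_root):
    """Return which expected paths exist under project_root."""
    root = Path(project_root)
    return {name: (root / rel).exists() for name, rel in EXPECTED.items()}


# Demo against a throwaway directory that has only agents and memory set up.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / ".claude" / "agents").mkdir(parents=True)
    (Path(tmp) / "CLAUDE.md").write_text("# Project notes\n", encoding="utf-8")
    report = audit_layout(tmp)
```

Running the audit across several projects is one quick way to see how consistently a team actually follows the scaffold.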

OpenClaw memory routed into Obsidian: daily news scan → genre tags → ~10 loglines

OpenClaw + Obsidian (memory pipeline): A creator describes routing an OpenClaw agent’s “memory” into Obsidian so the agent can do daily story research (news/forums), capture links, pitch premises, and categorize by genre—resulting in “10 new loglines every day,” according to the workflow description Obsidian memory routing and the quantitative follow-up Ten loglines per day.

This is a concrete pattern for making agent output durable and reviewable across devices: use a human-readable note store as the long-term scratchpad instead of keeping everything inside the chat tool.
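A minimal sketch of the note-store side of that pattern: render each logline as a Markdown file with YAML front matter that Obsidian can read as properties and tags. The posts do not show the creator's actual setup, so the folder name, field names, and both helper functions are hypothetical.

```python
from datetime import date
from pathlib import Path


def render_logline_note(logline, genre, source_url, day):
    """Build a Markdown note with front matter for date, tags, and source."""
    return "\n".join([
        "---",
        f"date: {day.isoformat()}",
        f"tags: [logline, {genre}]",
        f"source: {source_url}",
        "---",
        "",
        logline,
        "",
    ])


def write_note(vault_dir, slug, body):
    """Write the note into a 'loglines' folder inside the vault (assumed name)."""
    path = Path(vault_dir) / "loglines" / f"{slug}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(body, encoding="utf-8")
    return path


# Example note with placeholder content.
note = render_logline_note(
    "A lighthouse keeper receives letters from next year.",
    "mystery",
    "https://example.com/story",
    date(2026, 1, 1),
)
```

Because the output is plain Markdown, the same files stay reviewable in any editor and sync across devices with whatever the vault already uses.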

“Vibecoding a CRM with Claude” becomes a repeatable build-in-public format

Claude (vibecoding format): A short clip frames the workflow as “Me and Claude vibecoding a CRM,” leaning into the build-in-public trope where the assistant is treated like a paired builder rather than a search box, as shown in the post Vibecoding a CRM.

The notable part is the packaging: “CRM” (a concrete, shippable internal tool) as the canonical demo app for showing agent-assisted iteration, UI wiring, and quick utility-building.

Tool preference debate: “Claude > Codex for OpenClaw” and “ChatGPT is furniture”

Coding assistant UX (sentiment): Preference talk is getting more blunt: one post asserts “claude > codex for openclaw” Preference screenshot, and the same screenshot thread includes the jab that “chatgpt is like talking to a piece of furniture” Preference screenshot.

This is less about benchmark deltas and more about perceived conversation feel and agent follow-through in longer build sessions—exactly the axis that tends to decide a team’s default assistant.

First paying customer for a vibecoded app: quick proof that small AI-built utilities sell

Vibecoded apps (creator monetization): A builder posts a simple milestone—“first paying customer of my vibecoded app”—as evidence that the fast-build loop can translate into revenue without a long runway, per the post First paying customer.

There aren’t product details in the tweet itself, but the signal is the same pattern repeating across creator-coders: ship a narrow tool, collect payment, then iterate in public.

“B.C. = Before Claude” meme signals Claude as the default coding co-pilot

Claude (culture signal): A one-liner—“B.C. refers to ‘Before Claude’”—is getting shared as a shorthand for how quickly Claude has become a default mental model for building software with an assistant, per the meme post Before Claude quote.

It’s not a feature update, but it’s a clean read on creator-coder identity shifting from “using AI sometimes” to “AI is the baseline tool in the loop.”

🎬 Text-to-video realism sprint: Seedance 2.0 microfilms, Grok Imagine motion tests, and Kling flexes

Heavy creator volume again—Seedance 2.0 remains the dominant clip engine in this sample, while Grok Imagine shows up as a surprising motion/impact contender and Kling 3.0 gets positioned as ‘better than VFX’ for certain shots. (Excludes coding-agent prompt leaks, covered in the feature.)

Grok fight choreography: creators say physical contact is improving (still imperfect)

Grok (xAI): A creator posts multiple fight clips and claims they were made with Grok (not Seedance), saying there are “tons of errors” but that Grok has been “quietly” improved—especially on physical contact in action scenes—while also mentioning Grok “start frames,” as written in Grok fight commentary.

This is one of the clearer head-to-head framings in today’s sample: Seedance remains the default reference point, but Grok is being positioned as closing the gap on contact-heavy motion, per the comparison language in Grok fight commentary.

Seedance 2.0 action stress test: Bubble Sorceress vs Stone Giant

Seedance 2.0 (Dreamina): A 15s action clip—“Bubble Sorceress vs Stone Giant”—is being used as a motion/scale stress test (fast projectiles, dodges, heavy impacts, large character size differences), as shown in Action stress test clip.

Creators are treating these short fights as capability probes: can the model keep readable staging, consistent character intent, and convincing mass over a single continuous shot, as illustrated by the pacing and blocking in Action stress test clip.

Seedance 2.0 micro-short turns a dating-app notification into an emotional beat

Seedance 2.0 (Dreamina): A ~64s text-to-video micro-short builds an entire emotional arc off a single phone notification (“Your match with Alex has expired”), showing how Seedance clips are getting paced like punchline/beat reels rather than “showreels,” as posted in the Seedance micro-short post.

The creative move here is the structure: lead with a universally readable UI moment, then let the generated visuals escalate the feeling instead of explaining story context upfront, as shown in the Seedance micro-short post.

Grok Imagine posts keep using geometric motion-design loops as capability proof

Grok Imagine (xAI): A short geometric “diamond” animation is shared as evidence that Grok Imagine “keeps getting better,” with the output leaning into crisp line-to-form transformations that read like motion-graphics practice footage, as shown in Diamond animation post.

This use case is notable because it avoids the hardest realism problems and instead tests controllable transforms, timing, and visual clarity—the things motion designers actually ship, as demonstrated in Diamond animation post.

Kling 3.0 flex shot: robotic face assembly framed as “better than VFX”

Kling 3.0 (Kling AI): A robotic face/eye assembly clip is being held up as a VFX benchmark—“Not even the best VFX can beat Kling 3.0”—which is exactly the kind of single-shot transformation people use to sell a model’s realism and micro-detail, per Kling VFX comparison.

There’s no technical breakdown (settings, seeds, or shot controls aren’t shared), but the shot choice is telling: reflective surfaces, fine mechanical parts, and coherent re-assembly are the stress points, as demonstrated in Kling VFX comparison.

Seedance 2 skit format: “AI hater grandma” as a reusable character beat

Seedance 2 (Dreamina): Anima_Labs shares a repeatable comedy template—a scolding “AI hater grandma” character reacting to the creator’s work—positioning it as a tutorial-able character pipeline (Midjourney + Leonardo “Nano Banana,” then animation in Seedance 2), per the workflow callout in Grandma skit workflow.

This matters because it’s one of the clearer “series-able” formats emerging around Seedance: a consistent character with a single recurring emotional beat that can be remixed across many prompts, as demonstrated in Grandma skit workflow.

Seedance 2.0 “Pixar-style short” prompt genre keeps spreading

Seedance 2.0 (Dreamina): Pixar-format prompting is showing up as a reusable genre: awesome_visuals posts “Make a Pixar film about Punch” as a pattern prompt in Pixar prompt example, while a separate share frames a longer Pixar-style short as “big-screen impact, zero big budget” in Pixar-style short share.

The consistent thread is that “Pixar” is being used less as a look reference and more as a shorthand for shot language (clear acting beats, readable lighting, family-film blocking), as implied by the repeated prompt framing in Pixar prompt example and Pixar-style short share.

Seedance 2.0 demos shift from visuals to “special effects + score build” claims

Seedance 2.0 (Dreamina): A Seedance clip is being pitched not only on visuals but on the idea that raw text-to-video output can deliver “special effects” plus a rising music bed and sound design, per the creator framing in Special effects claim.

Treat the audio conclusions as creator-reported (the tweet explicitly tells you to listen), but the broader signal is that Seedance bragging rights are expanding from motion/realism into full “scene feel,” as described in Special effects claim.

Sora 2 short-movie share frames model limitations as story-shaping constraints

Sora 2: A creator posts “#1 Short movie. Sora 2,” describing how Sora’s instability (identity consistency and changing environments shot-to-shot) isn’t just a flaw but something that shaped the idea and mood, per the craft reflection in Sora 2 short movie note.

The line that stands out is the thesis—“the sound of the instrument shapes the song”—which is basically a production heuristic for working within model constraints rather than fighting them, as written in Sora 2 short movie note.

Seedance 2.0 beta access economy: creators publicly credit access-givers

Seedance 2.0 (Dreamina): Access is being treated as a distribution bottleneck—awesome_visuals explicitly thanks a third party for beta access in Beta access shout-out, which doubles as provenance (“how I got this output”) and networking (“who can get you in”).

It’s a small post, but it’s a recurring shape: clip performance + public credit + access reciprocity, as shown by the framing in Beta access shout-out.

🧾 Prompt packs & aesthetic cheat codes: Midjourney SREFs, Nano Banana design systems, and copy/paste JSON

High-density prompt sharing today: multiple Midjourney --sref codes with art-direction notes, plus Nano Banana “design system” prompts and structured JSON recipes (product void renders, poster systems, and object re-styles).

Midjourney --sref 1848964435 for retro-noir romantic sketch illustrations

Midjourney (--sref 1848964435): A retro, cinematic sketch style reference described as mid‑century noir with European graphic-novel sensibility plus a stylized classic-anime edge, as shown in the Retro noir sref.

The look in the Retro noir sref reads well for poster pairs (two-character close-ups), “camera/photographer” motifs, and limited-palette character sheets when you want consistent line character across a series.

Midjourney --sref 4046977263 for medieval dark-fantasy illustration boards

Midjourney (--sref 4046977263): A style reference shared as a reliable “dark fantasy illustration” look—European graphic-novel linework, organic inking, and an aged/antique palette—positioned for character key art, cover-like scenes, and moody environment plates in the Style reference drop.

The examples in the Style reference drop skew toward readable silhouettes and prop-heavy staging (staffs, armor, skull motifs), which makes it a practical SREF when you need story clarity rather than pure texture.

Nano Banana “sports icon poster system” prompt template (auto color + layout)

Nano Banana (prompt template): A copy/paste “poster system” prompt is framed as a reusable art-director workflow—swap the athlete name and the prompt tells the model to adjust composition style, colorway, and typography treatment accordingly, as outlined in the Poster system prompt (and repeated verbatim in the Template repost).

A compact, reusable core (verbatim structure preserved) from the Poster system prompt:

Long-form “mirror selfie realism” prompt spec with explicit constraints

Nano Banana Pro (prompt-spec pattern): A long, structured prompt spec is shared as a reusable architecture for “smartphone mirror selfie” realism—explicit must_keep constraints (authentic mirror geometry, phone occlusion, messy hair, background clutter) paired with avoid and negative_prompt lists to suppress studio polish, as shown in the Prompt spec and example.

What stands out in the Prompt spec and example is the emphasis on controlled imperfections (high-ISO grain, uneven overhead lighting, partial face occlusion). That’s a repeatable way to get ‘unposed’ framing without relying on luck across rerolls.
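The spec structure described above can be sketched as a small data object plus a function that flattens it into a positive and a negative prompt string. The `must_keep` items and the "studio polish" target come from the post's description; the `subject` wording, the `negative_prompt` entries, and the `build_prompt` helper are illustrative assumptions, not the shared spec itself.

```python
def build_prompt(spec):
    """Flatten a constraint spec into (positive_prompt, negative_prompt)."""
    parts = [spec["subject"]]
    if spec.get("must_keep"):
        parts.append("Must keep: " + "; ".join(spec["must_keep"]))
    if spec.get("avoid"):
        parts.append("Avoid: " + "; ".join(spec["avoid"]))
    positive = ". ".join(parts) + "."
    negative = ", ".join(spec.get("negative_prompt", []))
    return positive, negative


SPEC = {
    "subject": "smartphone mirror selfie",
    "must_keep": [
        "authentic mirror geometry",
        "phone occlusion",
        "messy hair",
        "background clutter",
    ],
    "avoid": ["studio polish"],
    # Hypothetical entries; the post's actual negative list is not reproduced here.
    "negative_prompt": ["studio lighting", "retouched skin"],
}

positive, negative = build_prompt(SPEC)
```

Keeping constraints as data rather than one long string makes it easy to toggle a single item between rerolls and see which constraint actually changes the result.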

Midjourney --sref 4111512992 for dark neo-gothic cyberpunk key art

Midjourney (via Promptsref, --sref 4111512992): A “dark futuristic” cheat code framed around high-contrast neon reds/cyans against deep blacks—aimed at sci‑fi posters, dystopian game concepts, and fashion/album art—per the Dark futuristic cheat code.

The examples in the Dark futuristic cheat code emphasize aggressive rim lighting and graphic negative space, which tends to survive multiple rerolls without losing the overall mood.

Nano Banana Pro “RetroCarbon Fitted” JSON for void-floating product renders

Nano Banana Pro (structured JSON prompt): A full JSON prompt template is shared for centered, void-floating “industrial minimal” product renders—matte volcanic black + carbon fiber + exposed joints, with orange internal glow—and an aggressive negative prompt to keep out studio gear, text, and cropping, as provided in the RetroCarbon JSON and followed up with more iterations in the More testing.

The key practical detail in the RetroCarbon JSON is how hard it leans into constraints ("no stands", "no pedestals", "no reflections of studio gear"), which is the difference between a clean catalog-style frame and a ‘photo of a render in a studio’ failure mode.

Promptsref’s daily “most popular SREF” post is becoming a trend radar

Promptsref (daily SREF leaderboard): A “most popular sref” daily post bundles multiple --sref codes and then writes a full style analysis (here: “Dynamic Blur & Moody Lighting,” with Gerhard Richter / Wong Kar-wai comparisons), as shown in the Most popular Sref report.

Beyond the ranking, the Most popular Sref report functions like a mini art-direction memo—use cases, prompt inspiration, and what visual cues to expect (motion blur, soft focus, film grain), which is more actionable than a bare code dump.

Wax-seal transform prompt for turning logos into embossed wax impressions

Image-to-image prompt (wax seal effect): A concise transform prompt turns an uploaded image/logo into a glossy wax seal impression—melted drip edges, embossed stamp detail, single rich color, studio lighting, and soft shadow—shared with multiple brand examples in the Wax seal prompt.

The exact prompt text from the Wax seal prompt:

Midjourney --sref 2031898952 for holographic iridescent “liquid light” surfaces

Midjourney (via Promptsref, --sref 2031898952): A style code positioned for iridescent “soap-bubble / oil-film” holographic aesthetics—Op Art meets neo-futurism—pitched for music covers, sci‑fi branding, and cosmetic concept visuals in the Holographic aesthetics code.

The write-up in the Holographic aesthetics code is essentially an art-direction shortcut for spectral reflections; it’s most useful when the subject is simple (product, mask, abstract form) and you want the surface treatment to carry the image.

Midjourney Sref 2085577252 for dark fantasy game-art lighting

Midjourney (via Promptsref, Sref 2085577252): A “dark fantasy game art” style blend—gothic darkness plus cyberpunk neon plus manga-like linework—shared as an instant mood preset for characters and cover art in the Dark aesthetic code.

The description in the Dark aesthetic code leans on heavy contours and saturated red/purple lighting; it’s a good match for portraits where you want readable line hierarchy, not painterly softness.

🛠️ Workflows that creators can copy today: Firefly Boards character dev, Freepik Spaces timelapses, and Reve layer stacks

The most actionable posts are process-first: character development inside Firefly Boards, reusable node/workflow building in Freepik Spaces, and layered prompting in Reve to art-direct backgrounds/FX without redoing the subject.

Firefly Boards is becoming a pre-pro character sheet tool for AI animation

Adobe Firefly Boards (Adobe): Creators are using Boards as the “before any motion happens” step—building a reusable character by iterating outfits/poses from their own photos, then carrying that consistent design into animation, as shown in the character-board walkthrough Character dev workflow.

A notable tactic is deliberately cycling models during the same iteration loop (not treating one model as “the” answer): Kris calls out trying a “GPT model and Flux” before committing to a final look in the Model rotation tip, while another screenshot shows edits happening via a “Gemini 3 (w/ Nano Banana Pro)” generation slot inside the same workflow UI in Character editing screenshot.

The pacing angle is practical: the character-edit phase can stretch until you’re “100% happy,” and bad-weather downtime becomes a built-in pre-pro window, as described in Character editing screenshot.

Freepik Spaces timelapse workflow: build once, then rerun for endless variants

Freepik Spaces (Freepik): A full thread breaks down a reusable workflow for cinematic timelapses—start with three references (place + face + vibe), generate a 3×3 grid, then keep iterating inside the same Space so the whole setup becomes rerunnable, as demonstrated in Spaces timelapse workflow.

The same workflow leans on a prompt-extraction loop: generate candidates, extract a working prompt using a list plus an “extraction prompt,” then patch missing details by editing the prompt in the same node, as described in the follow-up steps linked from Workflow link follow-up.

A key detail is that they reuse “the same prompt for every single one” and push differentiation via start/end frames plus small final edits, per the summary claim in Workflow link follow-up.

Reve’s layer stack pattern: protect the subject, then iterate backgrounds fast

Reve (Reve): A creator shared a repeatable layered prompting workflow: import a character/object, use layers to separately prompt background and light streaks, resize/reposition the subject, then hit Vary to batch out alternatives, as shown in the Voltron example walkthrough Reve workflow steps.

The same thread includes a copy-paste prompt template intended for “any subject,” plus explicit FX-layer settings (heat distortion intensity, neon night strength, sun ray decay) and an HD upscale step, as documented in FX layer settings and the prompt block in Reusable subject prompt.

This reads like a practical separation-of-concerns recipe: keep the hero asset stable, and spend iteration budget on environment/FX variations rather than re-rolling the whole image each time, per the step order in Reve workflow steps.

Sora 2 is showing up inside Adobe Firefly’s Generate Video workflow

Adobe Firefly app (Adobe): A creator shared that they’re now “experimenting with Sora 2” directly inside the Firefly app’s Generate video screen, framing multi-shot work as a new skill they’re learning in Sora 2 in Firefly.

The UI screenshot in Sora 2 in Firefly surfaces concrete knobs that matter for production planning: 720p output; Vertical (9:16) aspect; 24 FPS; 8 seconds duration; and an Audio: On setting. The same post positions this as part of an animation workflow in progress rather than a one-off test.

What’s still unclear from today’s posts is rollout scope (tiers/regions) and whether Sora 2 in Firefly behaves differently than other Sora 2 surfaces; the evidence here is the in-product settings panel shown in Sora 2 in Firefly.
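For planning purposes, the settings visible in the screenshot translate into a frame count and an approximate frame size. The sketch below assumes "720p" refers to the short side of the frame, which the post does not confirm; `clip_plan` is a hypothetical helper.

```python
from fractions import Fraction


def clip_plan(short_side_px, aspect_w, aspect_h, fps, seconds):
    """Derive frame size and total frames from the listed settings."""
    ratio = Fraction(aspect_w, aspect_h)
    if ratio < 1:
        # Vertical clip: width is the short side.
        width = short_side_px
        height = int(short_side_px / ratio)
    else:
        # Horizontal or square clip: height is the short side.
        height = short_side_px
        width = int(short_side_px * ratio)
    return {"width": width, "height": height, "frames": fps * seconds}


# 720p, Vertical (9:16), 24 FPS, 8 seconds, as shown in the settings panel.
plan = clip_plan(720, 9, 16, 24, 8)
# plan == {"width": 720, "height": 1280, "frames": 192}
```

At 8 seconds per generation, a multi-shot piece is a sequence of 192-frame clips, which is a useful unit when storyboarding.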

Silent visual-metaphor ads are being operationalized as an AI-first format

Ad creative pattern: A post argues that high-earning creative output (positioned as “$40k+/month creatives”) increasingly starts with visual metaphors—a product behaving like a character, a simple object demonstrating the problem, and a scene that explains the benefit “without a single person speaking,” with AI used to generate many scene variants at scale in Visual metaphor ad system.

The core mechanics are spelled out as: “simple scripts guide the action,” “AI builds the scenes,” and “volume does the scaling,” per the format description in Visual metaphor ad system. The attached domino clip functions as the canonical example: a clean physical chain reaction ending on “Problem: Solved.” visible in Visual metaphor ad system.

Meshy creators are marketing the loop from AI 3D model to physical print

Meshy (MeshyAI): Meshy highlighted the “screen to reality” workflow—community members generating digital 3D assets and then turning them into real 3D prints—positioning the physical output as proof and shareable content, as shown in Model to print showcase.

The demo is straightforward: a rendered model spins on-screen, then cuts to the printed object, reinforcing the pitch that AI-generated 3D isn’t only for renders but can become tangible artifacts for creators’ portfolios and product storytelling, per the before/after sequence in Model to print showcase.

🧩 Studios & platforms: CASTING/WORLDZ editors, parallel creator production, and avatar vendors

Platform posts skew toward ‘end-to-end’ creation environments: editor UIs that promise precise casting/objects, plus early-access platforms onboarding creators to work in parallel. Also includes AI avatar vendors targeting brand content teams.

STAGES AI previews CASTING module for precise objects/characters tied to CUE

STAGES AI CASTING (STAGES AI): A new “CASTING” surface is being shown inside stages_ai, pitched as generating “any object” or “any character” “built to precision” and then deploying those assets across the platform, as demonstrated in the Casting demo clip. It’s also described as connected to CUE for autonomous generation/tasks across the product in that same preview, per the Casting demo clip.

• What’s new in platform terms: This frames “casting” as a reusable asset primitive (object/character) rather than a one-off generation; the demo copy emphasizes “deployable anywhere” and “no bottlenecks,” as shown in the Casting demo clip.

Details like pricing, availability, and supported export formats aren’t stated in the clip.

ARQ says its platform is live for first users and namechecks fal integration

ARQ (ARQ): ARQ says it “just released” its platform to first users and calls out fal for integration, following up on parallel production (five creators iterating one video) with a more explicit “product is in users’ hands” milestone in the First-users announcement. A usage/activity chart is also shared as proof-of-life for the early period, as shown in the First-users announcement.

• Parallel-creator model stays central: The launch post sits in the same thread context about onboarding creators in parallel and using that process to learn who to hire, echoed again in the Moving fast claim.

The posts don’t name pricing, waitlist criteria, or which gen-video models are wired through fal.

STAGES AI shares WORLDZ blueprint as a foundation layer before rebuild

WORLDZ (STAGES AI): The builder behind stages_ai posted an “early design” of WORLDZ, saying it has since been “fully remapped and rebuilt” but they’re sharing the foundations now because “ideas get copied,” as described in the WORLDZ blueprint post. The shared footage looks like a graph-style world/interface layer that’s meant to sit underneath other modules (including CASTING from the same product), with CASTING separately previewed in the CASTING module preview.

• Why it matters for studios: The posture is “blueprint vs final product,” i.e., showing enough UI/structure to communicate direction while implying the real editor has already moved on, per the WORLDZ blueprint post.

There’s no public spec yet for scene interchange, engine compatibility, or collaboration controls.

Pictory pitches AI avatar video for L&D teams as a 2026 scaling workflow

Pictory AI (Pictory): Pictory is pushing the idea that training/L&D teams are under pressure to update content faster and that AI video creation (including avatar-style content) helps them scale updates and “drive real performance,” as stated in the L&D positioning. The creative takeaway is less about a single feature drop and more about where demand is moving: internal training content as a high-volume AI video lane.

The post doesn’t include concrete benchmarks (cost per module, turnaround time, completion lift), so treat it as market positioning rather than a measured case study.

Zeely teases a new AI avatar drop framed as “brand face + voice”

Zeely avatars (Zeely): Zeely teases “new AI avatars” positioned around giving a brand a recognizable face and a memorable voice, with the announcement implying a time window (“for the next 12…”) in the Avatar drop tease. The tweet text in this dataset is truncated, so the exact terms (free credits vs discount vs access window) aren’t verifiable from today’s capture.

No demo media or plan details are included in the provided snippet.

📣 AI ads in the wild: real estate video, product-storytelling formats, and “keyboard is dead” copy ops

Marketing-oriented creator talk centers on where AI video is already converting (real estate) and on ad formats that feel native to feeds (visual metaphors, silent scenes). Also includes voice-to-polished-copy tooling used for posts/emails/docs.

Real estate marketing is leaning hard into AI video for “product-style” listings

Real estate AI video (format shift): Real-estate creators are reporting that listing promos are starting to look like social product ads, with AI used to “bring properties to life” and to visualize upgrades/renovations before they exist, as described in the adoption note from Real estate adopting AI video.

The practical implication is that the “listing photo set” is getting wrapped in short, scroll-native motion—less about cinematic craft, more about stopping power and clarity in-feed—per the examples and framing in Real estate adopting AI video.

Visual-metaphor ads are scaling: silent scenes, simple scripts, lots of variants

Feed-native ad creative (silent storytelling): A creator ad playbook argues that high-earning creative output is increasingly built from visual metaphors—“a product behaving like a character” and silent scenes that demonstrate the problem/benefit—then scaled via AI-generated scenes plus volume testing, as written in Visual metaphor ad system.

• Why it holds attention: The format claims you stop “because it’s different,” not because it’s high production, with the retention hook coming from “your brain wants to see what happens next,” per Visual metaphor ad system.

• What to copy: Use simple object-driven scripts (no talking head), generate many scene variants with AI, and iterate on the metaphor clarity—an approach summarized directly in Visual metaphor ad system.

“Develop the plot” videos use AI to pitch multiple build options for the same land

Real estate concept visualization (format): A specific real-estate video pattern is gaining traction: take a plot of land and use AI to “develop” it into several plausible outcomes (for example, single-family vs a larger project), turning entitlement/architecture ambiguity into a concrete set of options, as outlined in Develop the plot format.

This reads like a sales enablement asset more than a traditional render—fast iteration, multiple variants, and a clearer story for buyers/investors—based on the format description in Develop the plot format.

Typeless pitches “RIP keyboard” voice-to-polished copy for posts and emails

Typeless (voice-to-writing workflow): Typeless is being pitched as a voice-first copy tool that turns spoken thoughts into polished text—positioned as “4x faster than typing”—with concrete examples across rewriting posts, rewriting emails, summarizing paragraphs, and auto-formatting notes, as described in RIP keyboard pitch and demonstrated in Rewrite X post demo.

• Email cleanup: The workflow described in Email rewrite demo is “speak the email, ship a clean version,” emphasizing formatting and tone.

• Research ops: The summarize/translate/checklist flow in Summarize web paragraph positions Typeless as a capture-and-structure layer when you can’t edit the source text.

• Notes formatting: The auto-bullets/numbering behavior in Auto-format notes is framed as reliability in transcription plus structure.

IKEA’s sold-out toy moment shows how fast meme demand can outpace marketing

IKEA virality loop (demand signal): A “Punch the monkey’s orangutan toy” meme reportedly pushed an IKEA plush/toy to sell out, framed as “winning without spending a dollar on marketing” in Zero-marketing framing.

The screenshot embedded in Zero-marketing framing shows 732K views and 5.7K likes on the claim, which creators are treating as a reference point for how quickly product narratives can spike demand even without conventional paid spend.

🖼️ Image lookdev & aesthetics: Nano Banana realism, Midjourney taste libraries, and architectural refs

Less about new model releases and more about what people are actually generating: hyperreal lifestyle shots, curated Midjourney aesthetics, and architecture/material references that creators use as style targets for gen workflows.

Nano Banana Pro mirror-selfie realism gets a reusable “constraint spec” prompt

Nano Banana Pro: A creator shared a long, copy-pasteable JSON prompt that’s explicitly engineered for “smartphone mirror selfie” realism—tight mirror geometry rules, “must keep” constraints, and a robust negative list—framed as an update to their prompt library in the Nano Banana Pro prompt dump.

The interesting part for lookdev is how it treats realism like a checklist: keep the phone occluding part of the face, keep messy flyaway hair, demand imperfect indoor lighting and grain, and forbid “studio” cues and symmetry, as detailed in the Nano Banana Pro prompt dump.
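The checklist framing above can be sketched as a small spec builder. This is a minimal illustration only: the key names (`scene`, `must_keep`, `negative`) and the function `build_constraint_spec` are hypothetical and are not taken from the creator's actual prompt, which is not reproduced in the post summary. The constraint strings paraphrase the items described above.

```python
import json


def build_constraint_spec(scene, must_keep, negative):
    """Return a JSON prompt string; all key names here are hypothetical."""
    # Sanity check: an item cannot be both required and forbidden.
    overlap = set(must_keep) & set(negative)
    if overlap:
        raise ValueError(f"constraints conflict: {sorted(overlap)}")
    spec = {
        "scene": scene,
        "must_keep": list(must_keep),
        "negative": list(negative),
    }
    return json.dumps(spec, indent=2)


# Example values paraphrase the checklist described in the text.
prompt = build_constraint_spec(
    scene="smartphone mirror selfie",
    must_keep=[
        "phone occludes part of the face",
        "messy flyaway hair",
        "imperfect indoor lighting",
        "visible grain",
    ],
    negative=["studio lighting cues", "perfect symmetry"],
)
print(prompt)
```

Keeping the required and forbidden lists as separate fields makes it easy to reuse one spec across subjects and to catch contradictory constraints before a prompt is sent.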

Blue-and-orange film-grain lighting becomes a brandable lookdev system

Look system (film-grain + hard light): A creator is iterating a consistent photography-like grade across different subjects—deep blue shadows with narrow orange highlights, visible grain, and occasional chromatic aberration—using the framing “Information asymmetry” in the Portrait and sneaker set and “Search party on the way” in the Desert car triptych.

• Range of subjects, same grade: The look holds across face close-ups, product-ish sneaker frames, and even a spacey shoe render in the Portrait and sneaker set, suggesting it’s being treated as a reusable brand treatment.

• Motion/energy variant: The car frames lean on dust + rainbow fringing for speed cues while staying inside the same blue/orange palette, as shown in the Desert car triptych.

Brutalism meets terracotta becomes a shared material palette for prompts

Architecture lookdev: A small set of facade references got shared as “what happens when you blend brutalism with terracotta,” highlighting chunky massing, rounded corners, perforated brick/jali screens, and warm interior lighting against wet streets and greenery in the Architecture reference set.

For AI image workflows, these kinds of refs tend to translate into promptable building blocks (terracotta lattice, rough stone, deep-set balconies, tropical planting), and the Architecture reference set gives enough coverage to keep a series visually consistent across generations.

“Pastoral Glitchcore” emerges as a repeatable Midjourney series look

Midjourney aesthetics: A grid of eight outputs was posted as “Pastoral Glitchcore,” with consistent motifs—fields of flowers, human subjects, dusk-to-daylight skies—plus heavy digital artifacting (horizontal smears, chromatic separation) in the Pastoral Glitchcore grid.

The set reads like an intentional visual system rather than a one-off: same setting, same distortion language, and enough variation to sequence into a carousel or title package, as shown in the Pastoral Glitchcore grid.

Daily style discovery as a moat: Artedeingenio reaches 50K followers

Creator practice signal: Artedeingenio marked reaching 50,000 followers and claimed a streak of posting “every single day for three and a half years,” positioning early adoption + relentless output as the differentiator in the 50K milestone post.

It’s not a tool update, but it’s a clear data point on how image-gen accounts are competing: volume plus ongoing style discovery becomes the product, per the framing in the 50K milestone post.

🦾 3D, shaders, and “send it to factory” pipelines (from concept to print)

3D content here is practical and maker-adjacent: AI-to-3D asset creation, blueprint-led mech lookdev, WebGPU shader experiments, and community signals around printing and physical fabrication.

Meshy creators keep closing the loop from generated 3D to physical prints

Meshy (MeshyAI): A community spotlight shows the “screen → reality” workflow in one cut—first the digital model, then the same asset as a finished 3D print—framing Meshy as a practical bridge from AI-made meshes to physical objects, as shown in the Screen to reality clip.

• Why it matters for creatives: This kind of before/after is becoming its own deliverable (portfolio proof + product teaser) rather than “just” a modeling step, with the physical print serving as the credibility anchor in the Screen to reality clip.

0xInk pairs a hoverbike render with a labeled SKY-SPEEDER Mk. IV blueprint

0xInk (send-it-to-factory pattern): A hoverbike concept is presented alongside a matching blueprint sheet labeled “PROJECT: SKY-SPEEDER Mk. IV,” including component callouts like “front thrusters,” “armored cowling,” and “rear propulsion,” giving a clean concept-to-spec reference in the Hoverbike and blueprint post.

The point is the pairing: a cinematic hero render plus a readable spec sheet makes it easier to keep proportions consistent across future iterations (animation, kitbashing, or fabrication) while staying anchored to one “source of truth,” as seen in the Hoverbike and blueprint post.

0xInk’s Model Type-X post shows blueprint-to-render consistency for hard-surface design

0xInk (hard-surface consistency): Following up on Blueprint continuity (blueprint paired with a matching render), a new “HEAVY ASSAULT MECHA – MODEL TYPE-X” sheet shows multiple orthographic views plus close-up mechanism callouts, paired with a full-body 3D render for alignment checks, as documented in the Mecha blueprint and render.

• What’s notably reusable: The sheet’s explicit labels (e.g., head sensors, shoulder thrusters, leg hydraulics) create a checklist for “did the render match the spec,” which is the practical part of keeping a mech design coherent across shots and revisions, per the Mecha blueprint and render.

Collectible-style figure shots are being used as lookdev targets for AI characters

0xInk (character-to-figure lookdev): A post shows high-detail figure photography—sweat texture, denim stitching, and a transparent prosthetic limb with visible wiring—which functions like a “manufacturable realism” target for AI character rendering (and later merch/print workflows), as shown in the Action figure closeups.

The same account also shares another prosthetics-heavy character concept (“only fights with his feet”), reinforcing that the reference style is about readable mechanics and materials, not just silhouette, per the Feet-fighter character render.

A fluid-sim grid + CRT shader stack gets shared as a WebGPU motion look

three.js / WebGPU (TSL): A short demo combines a fluid-simulation-style deformation shader with a CRT overlay (scanlines/retro display feel), showing a fast way to get “analog screen” motion texture directly in real time, as shown in the Shader demo clip.

This is the kind of shader pairing that can sit behind titles, UI diegetics, or music-visual loops without round-tripping through offline compositing, per the Shader demo clip.

A home print setup update highlights the unglamorous parts of shipping physicals

Home 3D-print production: A creator shares an in-progress “dedicated tinkering section” setup—pegboards, filament storage, and bench organization—showing the physical ops layer behind maker workflows in the Workbench setup photo.

They also describe a failed “10-hour print” and the surprise of wasted filament, which is the practical constraint that tends to dominate throughput once you start doing real prints at home, according to the 10-hour print heartbreak and the follow-up Filament wasted note.

MakerWorld shows up as the “printable fix” feed for everyday annoyances

MakerWorld (functional print distribution): A creator calls out MakerWorld as the place where “every minor inconvenience” already has a printable solution, illustrated with a model for an IKEA Söderhamn backrest Siri Remote holder in the MakerWorld example.

The signal for AI-adjacent creators is demand: once you can generate or adapt 3D parts quickly, these libraries become the fastest path from “problem noticed” to “thing in hand,” as implied by the “found my people” framing in the MakerWorld example.

✍️ Story engines & creator psychology: premise-mining agents, ‘post-work’ futures, and AI-as-therapist tone

Storytelling posts focus on using agents to mine premises (loglines/day), plus broader narrative frames creators are writing with: productivity shifts, housing as coordination failure, and the increasingly recognizable ‘ChatGPT reassurance’ voice.

OpenClaw to Obsidian: a daily “premise miner” that outputs loglines you can browse

OpenClaw → Obsidian (Rainisto): A creator describes routing an OpenClaw agent’s long-term memory into Obsidian, so the agent can read news/forums daily, extract story premises, categorize them by genre, and save links back to sources as it works, as detailed in the workflow note in Obsidian memory pipeline. It’s positioned as a continuous story-intel feed you can review across devices, with output volume described as “10 new loglines every day” in Daily logline output.

• What the agent does each day: Reads news and online forums; collects possible story premises; pitches them as premises/loglines; tags by genre; stores the original URL, per Obsidian memory pipeline.

• Why Obsidian is the storage layer: Notes show up on all devices (Obsidian sync), turning the agent into an always-on research assistant you can skim like a writer’s room backlog, as described in Obsidian memory pipeline.

This is less about “research” and more about building a repeatable premise inventory you can pull from when you actually want to write.
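The storage step described above can be sketched as plain file writes, since an Obsidian vault is a folder of Markdown files and notes can carry YAML front matter. This is a minimal sketch under stated assumptions: it does not use any OpenClaw API, and the `Logline` type, the `Loglines/<date>` folder layout, and the front-matter field names are all hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import date
from pathlib import Path


@dataclass
class Logline:
    title: str
    premise: str
    genre: str
    source_url: str


def render_note(item: Logline, day: date) -> str:
    """Render one premise as Markdown with YAML front matter (hypothetical fields)."""
    return (
        "---\n"
        f"date: {day.isoformat()}\n"
        f"genre: {item.genre}\n"
        f'source: "{item.source_url}"\n'
        "---\n"
        f"# {item.title}\n\n"
        f"{item.premise}\n"
    )


def save_note(vault: Path, item: Logline, day: date) -> Path:
    """Write the note into a dated folder inside the vault and return its path."""
    folder = vault / "Loglines" / day.isoformat()
    folder.mkdir(parents=True, exist_ok=True)
    # Replace characters that are awkward in file names with underscores.
    safe = "".join(c if c.isalnum() or c in " -_" else "_" for c in item.title).strip()
    path = folder / f"{safe}.md"
    path.write_text(render_note(item, day), encoding="utf-8")
    return path
```

Storing genre and source URL as front matter rather than in the note body keeps each premise filterable inside Obsidian while the body stays a readable logline.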

Worldbuilding as product: ship an archetype pack, then prove it with a short film

World Building Codex 3.0 (VVSVS): A creator shares a short film assembled from “Dreamcore” archetype assets and says World Building Codex 3.0 will be released “next week,” positioning the drop as reusable building blocks rather than a single finished piece, as described in Dreamcore archetype teaser.

• Asset-first proof: The film is presented as a demo of what you can build from the archetype pack (a productized world + motifs), per Dreamcore archetype teaser.

• Toolchain signal: The post cites Midjourney for image generation and Grok Imagine for video, implying a “static world bible → moving proof-of-world” loop, as noted in Dreamcore archetype teaser.

It’s a clean packaging pattern: release a world’s primitives (archetypes), then show a finished artifact to anchor how they’re used.

A near-future story frame: mild productivity gains reshape work, but housing stays stuck

Post-work economic narrative (illscience): A creator lays out a specific “AI future” arc—20% productivity showing up as a four-day work week because most jobs aren’t fully automatable; deflation (not just disinflation) pushing down real costs in healthcare and education as admin work gets automated; and a split between “wildly ambitious” builders and people opting into a “soft cozy” lifestyle, as written in Four-day week scenario. The same post flags housing as the black-pill constraint: it’s “human coordination and policy tradeoffs,” and “even infinite free intelligence won’t convince the NIMBYs,” per Four-day week scenario.

For storytellers, it’s a ready-made set of conflicts: abundance in some systems, stasis in the one that dictates daily life.

Shipping pressure shows up as sleep debt and “maximally locked in” posts

Creator pacing and burnout: Multiple posts sketch the hidden cost of always-on creation: “can’t post,” “can’t sleep,” “already maximally locked in,” framed as deadline pressure and attention bottlenecks in Locked-in schedule spiral. A separate note describes taking a first “break” in a month, napping for hours, and waking disoriented—paired with “I need to normalize my working schedule,” as written in Work schedule reset.

The through-line is psychological rather than technical: AI speeds production loops, but it also widens the gap between what you could ship and what you can sustainably ship.

The “I’ll explain this calmly” ChatGPT voice is turning into a meme-able writing tone

ChatGPT reassurance cadence (venturetwins): A short meme captures a now-recognizable assistant voice—“Let’s pause for a moment… I’ll explain this calmly, without sugarcoating or catastrophizing… You’re not broken… I’m proud of you,” as written in Reassurance voice meme.

As a creator signal, it’s a stable template for dialogue: therapist-coded pacing, de-escalation language, and affirmation—useful as parody, as sincere narration, or as a character voice you can drop into scripts.

🏁 What shipped (and what’s blowing up): AI shorts, glitch experiments, and animation milestones

A mix of shipped work and “proof of momentum”: viral view milestones, short AI film experiments, glitch-based microcontent, and creators explicitly contrasting handmade animation vs AI-assisted pipelines.

Kris Kashtanova’s “10 years in New York” AI animation hits 2M views

AI animation (Kris Kashtanova): Following up on viral animation (her personal “10 years in New York” short), she says the animation has now reached 2M views, framing it as a real-world validation moment rather than a tools demo, as shown in the 2M views screenshot.

The post is light on process details today, but the signal for other creators is distribution momentum: a personal milestone story (not a product pitch) can still travel widely on-platform, per the framing in the 2M views screenshot.

Glitch cliffhanger micro-series: “But wait… how does it end?!” format

Short-form series format (GlennHasABeard): He’s continuing a repeatable loop of glitch-heavy micro-clips built around a cliffhanger hook (“But wait… how does it end?!”) and follow-up posts that keep the thread moving, as shown in the glitch hook clip and the cursor payoff clip.

The visual language is consistent—distortion layers, chromatic fringing, and “found footage” texture—reinforced by stills like the blurred, glitchy bike montage in the glitch still.

The mechanics are simple but effective: a named “episode” concept, a promise of an ending, and fast iteration on a single aesthetic, per the sequencing across the glitch hook clip and cursor payoff clip.

Clay stop-motion BTS: a “no AI involved” counterpoint workflow

Stop-motion workflow (Kris Kashtanova): She shared a handmade clay animation clip and explicitly positioned it as “No AI involved,” then described the practical pipeline—modeling clay for modifiable parts, tripod + remote for stable frames, and assembly in After Effects—according to the clay animation clip and her behind-the-scenes notes.

She also posted frame assets (repeated clay “light bulb” characters) that show what’s actually getting iterated between frames, as visible in the behind-the-scenes notes.

The contrast is the point: creators are increasingly pairing AI-assisted work with “handmade receipts” to establish craft credibility, and she’s making that move explicitly in the clay animation clip.

Sora 2 short movie (#1): leaning into multi-shot inconsistency as a choice

Sora 2 (OpenAI): Rainisto shared a “#1 Short movie. Sora 2.” and described a craft stance: letting the model’s current multi-shot limitations (identity and environment shifting) shape the story’s mood rather than fighting it, as written in the short movie post.

The note is unusually direct about aesthetics coming from constraints (“The sound of the instrument shapes the song”), which is a useful framing for creators shipping narrative tests early, per the reflection attached to the short movie post.

Artedeingenio hits 50,000 followers after 3.5 years of daily AI art posting

Creator momentum (Artedeingenio): He marked a 50,000 followers milestone and attributed it to posting AI art daily for three and a half years without missing a day, framing the payoff as “worth it” after starting early, per the 50K milestone post.

This kind of cadence claim is becoming its own creator credential—“I did it every day” as the differentiator—rather than any single model or style trick, which is the explicit emphasis in the 50K milestone post.

Fan-made AI video for A Knight of the Seven Kingdoms as franchise-style proof

Franchise-style AI short (wildpusa): A fan-made clip for “A Knight of the Seven Kingdoms” is being shared as a proof-of-concept for TV-grade, recognizable IP aesthetics done outside a studio pipeline, per the fan-made scene share.

The post’s core signal is demand for “looks like the show” continuity—even when it’s unofficial—since the caption anchors on a specific character beat (“Baelor Targaryen…”) in the fan-made scene share.

Titled dark-fantasy “scene drops” as serialized worldbuilding

Worldbuilding publishing pattern (DrSadek_): A run of distinct, titled dark-fantasy images (“The Halo of the Condemned King,” “The Canyon of the Clouds,” “The Bloom Within,” “The Obedient Deep”) reads like episodic art direction (single-scene mood pieces with consistent tone and naming) across the Condemned king post, Canyon post, and Bloom post.

This format matters because it’s not “random prompts”; it’s closer to a drip-feed story bible where each post is a location/prop/beat you can later stitch into a larger narrative, as implied by the repeated title-first presentation in the Condemned king post and Canyon post.

“Share your AI Art” open call as a built-in distribution funnel

Distribution mechanic (MayorKingAI): A public “Share your AI Art” call invites creators to drop work for a next-day featured roundup, creating a lightweight, recurring funnel for discovery and social proof, as stated in the open call post.

The post makes the promise concrete (“Tomorrow (Monday), I’ll feature the top creations”), which is the incentive structure that drives participation in the replies, per the wording in the open call post.

Adobe Firefly “Hidden Objects” Level .025 keeps the puzzle-serial format going

Adobe Firefly (Adobe): Following up on Hidden Objects (the earlier levels), Glenn posted “Hidden Objects | Level .025” with a clear interaction loop—“find all 5 hidden objects”—and a visual UI strip of the target icons, as shown in the Level .025 puzzle.

The format is designed for comments: it gives viewers a job (find 5 items) and a reason to screenshot/zoom, and it’s explicitly credited as “Made in Adobe Firefly” in the Level .025 puzzle.

📚 Signals beneath the hype: adoption stats, weekly paper digests, and where AI conversation actually happens

Light on deep technical drops in this sample, but strong meta-signals: weekly paper roundups, adoption/payment stats, and claims about which platforms are functioning as the real distribution layer for AI practice.

A viral stat claims 84% haven’t used AI and 0.3% pay

AI adoption stats: A widely reposted claim says 84% of people have never used AI, and only 0.3% of users pay for premium services, framed as an argument against “AI is a bubble” narratives in the quoted post Adoption stat claim. The numbers are presented without methodology in the tweet, but the way they travel matters: creators and tool builders are using penetration and payment-rate claims as shorthand for “how early are we, really?”

This is less about accuracy (no source cited in Adoption stat claim) and more about what gets repeated as a durable story: most people still aren’t in these tools, and monetization is concentrated.

A distribution take says AI practice is concentrated on X and YouTube

AI distribution: One thread argues it’s “stunning” how much practical AI learning and conversation happens on X and YouTube, with comparatively little happening elsewhere, as summarized in the retweeted observation Where AI happens claim. For creatives, the takeaway isn’t platform drama—it’s that workflow discovery, prompt formats, and model “folk knowledge” are getting normalized through short-form feeds and video explainers rather than docs or courses, matching the channel emphasis in Where AI happens claim.

HuggingPapers posts a Feb 16–22 “Top AI Papers” weekly filter

HuggingPapers: A new “Top AI Papers of The Week (Feb 16–22)” roundup is circulating as a lightweight way to track research without living on arXiv, as shown in the weekly list post Weekly paper list. It includes items like “Less is Enough: Synthesizing Diverse Data in Feature Space of LLMs” and “SQuTR …” (title truncated in the tweet text), which is the kind of grab-bag creators tend to mine for near-term workflow ideas rather than theory.

Treat it as a distribution signal: paper discovery is increasingly packaged as weekly feeds instead of individual preprints, per the format in Weekly paper list.

Creators keep posting “RIP Hollywood” and “sports won’t be the same” memes

Creator expectation shift: Big, declarative posts like “RIP Hollywood” (RIP Hollywood post) and “Sports will never be the same again” (Sports will never be same) continue to function as shorthand for “gen video is now culturally legible,” even when they’re not tied to a specific model release or benchmark.

The same pattern shows up in adjacent “look what’s possible” framing around AI art-making, like the reposted claim about “learning to whisper commands to AI” in Whisper commands claim.

Promptsref’s “most popular SREF” post turns trendwatching into a brief

Promptsref: Following up on Most popular SREF (daily popularity-as-art-direction posts), a Feb 21 report claims a “Top 1 Sref” bundle and describes the look as “Dynamic Blur & Moody Lighting,” anchoring it to long-exposure / step-print / Wong Kar-wai-style motion blur language in the write-up Popularity report text.

Two notable signals in the same post: it treats SREF codes as a trend proxy (what aesthetics people are reaching for) and as a mini lighting/texture brief rather than a raw code dump, as seen in the extended style analysis attached to Popularity report text.

🛡️ Synthetic legitimacy fights: AI actors, disclosure tension, and fake persona callouts

Policy/legitimacy talk here is mostly about entertainment and identity: whether AI performances become a formal Oscar category, and recurring callouts of deceptive personas—important for filmmakers and ad creators navigating trust and contracts.

McConaughey predicts AI performances could get an Oscar category within 5 years

AI acting legitimacy (Hollywood): A new round of industry chatter claims Matthew McConaughey expects AI to “infiltrate” Oscar acting categories and could trigger a separate Best AI Actor-style category within 5 years, as summarized in the week-in-review post and echoed via a McConaughey wave comment.

The practical tension underneath the prediction is rights management: if AI performances become award-eligible, studios and actors will fight over voice/likeness/character ownership and the contracts that define what models can train on and what “performances” can be sold (e.g., AI appearances), per the same recap.

Michael Eisner: theaters have 20 years left, AI won't write Oscar-winning scripts

Cinema’s next 20 years (Disney): Former Disney CEO Michael Eisner is quoted saying theaters have roughly 20 years left (except for spectacle and niche) while also arguing AI will never write an Oscar-winning script, according to the AI films recap.

This matters for AI filmmakers because it frames two different “permission structures” at once: theaters as a shrinking premium channel, and awards/critical prestige as a human-authorship moat—even as production workflows absorb more AI (the same recap points to collapsing VFX timelines and AI-first funding narratives) in the week-in-review.

Roger Avary reportedly got 3 films funded after pivoting to AI workflows

AI workflow as financing wedge: A widely shared story says Pulp Fiction co-writer Roger Avary got three films funded quickly after reframing his pitch around AI workflow production—contrasting it with prior Hollywood rejection—per the weekly recap.

The signal for creators is less about the specific slate and more about what investors think they’re buying: shorter production timelines and cheaper iteration loops being treated as a fundable “edge,” as described in the same roundup.

Impersonation callout: “anti-woke Christian woman” accounts often aren’t who they claim

Persona authenticity (platform culture): A viral callout claims that when Elon Musk boosts “anti-woke Christian woman” accounts, they frequently turn out to be impersonation/ops accounts (framed here as “an Indian dude running the account”), as stated in the impersonation post.

For AI creators selling products with synthetic faces/voices, this blends into a broader trust problem: audiences increasingly assume the “presenter” may be fabricated or misrepresented, and backlash can target format (persona marketing) even when content is legitimate, per the sentiment in the same thread.

“No IP was touched” shows up as a defensive disclosure meme

Disclosure pressure (IP anxiety): The phrase “No IP was touched” is being used as a short, defensive disclaimer—even dropped without explanation—suggesting creators expect immediate IP suspicion around generative aesthetics, as seen in the one-line post.

It’s a small signal, but a repeatable one: as AI outputs get more referential, creators increasingly preempt the “you copied X” response with an explicit denial, even when they aren’t naming the underlying tools or sources in the same post.
