PinchBench ranks gpt-5.3 at 97.8% – 5,400+ OpenClaw skills curated

Executive Summary

OpenClaw’s agent ecosystem is being treated as “production” infrastructure rather than a set of demos. Kilo Code’s open-source PinchBench shipped as a task benchmark for real OpenClaw work (scheduling, coding, email triage) with a success-rate leaderboard. A shared screenshot lists openai-codex/gpt-5.3 at 97.8% and Gemini 3 Flash at 95.1%, but the discourse is still mostly screenshot-driven and not yet anchored by widely replicated runs.

• Awesome OpenClaw Skills: VoltAgent is curating 5,400+ drop-in “skills” from a larger registry; pitched as a portability layer so teams swap capabilities without rewriting agents.

• Paperclip orchestration + governance: Paperclip surfaces budgets, heartbeats, ticket logs, and kill-switches as the control layer for multi-agent sessions; framed as the missing ops console for OpenClaw-compatible stacks.

• Disclosure pressure rising: X adds per-post “Paid promotion” and “Made with AI” toggles; EU Parliament tees up a March 10 vote on training-data transparency/opt-out and remuneration; a New York ad-disclosure rule is claimed for June 9, 2026 with $5,000+ fines, but the underlying text isn’t cited.

In parallel, “directable video” research leans into realtime: RealWonder claims 13.2 fps at 480×832 with a 4-step generator; Helios claims 19.5 fps on one H100 for long video, with public signal currently limited to repo summaries and demos.

Top links today

- Crawlee web scraping and crawling toolkit

- Cortical Labs biological computing platform

- Free-TV IPTV M3U playlist repository

- yt-dlp video downloader repository

- OpenClaw agent benchmark across real tasks

- Awesome OpenClaw Skills curated library

- Drafted AI home design and floor plans

- Paperclip workflow automation tool

- Typeless Trust Center and HIPAA details

- RealWonder real-time action-conditioned video paper

- HKUST AI Film Hackathon 2026 details

- Google AI Studio app remix instructions

- LTX Studio desktop video workflow tool

Feature Spotlight

OpenClaw agent ecosystem goes “production”: benchmarks, skill hubs, and orchestration

OpenClaw is quickly turning into a standardized “creator agent” stack: a real-world benchmark, a huge skills hub, and orchestration tools make it easier to build reliable, reusable creative agents instead of one-off demos.

Multiple accounts converged on OpenClaw as the practical agent layer for creators—now with a real task benchmark, a massive shared skills library, and open-source orchestration tooling. This is today’s densest cross-account storyline and sets the tone for “agent stacks” in creative work.

🦾 OpenClaw agent ecosystem goes “production”: benchmarks, skill hubs, and orchestration

PinchBench brings a real OpenClaw task benchmark, with gpt-5.3-codex on top

PinchBench (Kilo Code): A new open-source benchmark targets real OpenClaw agent work (scheduling, coding, email triage, etc.) and publishes a success-rate leaderboard, as introduced in the benchmark announcement.

The shared chart puts openai-codex/gpt-5.3 at 97.8% success and Gemini 3 Flash at 95.1%, according to the leaderboard screenshot in the benchmark announcement. Community reaction immediately framed the leader as “the OpenClaw goat” in the rank reaction, and the same thread explicitly tees up the next comparison point (“curious where gpt‑5.4 will land”).

Awesome OpenClaw Skills repo curates 5,400+ drop-in skills for agents

Awesome OpenClaw Skills (VoltAgent): A curated repository is being pitched as a “GitHub of OpenClaw skills” that you can drop into agents to add automation, research, coding, and workflow actions, as described in the skills hub announcement.

The repo description emphasizes scale and curation—“5,400+” skills curated from a larger registry with filtering/disclaimer language—per the GitHub repo, positioning “skills” as a practical portability layer for production agent stacks rather than one-off scripts.

Budgets, heartbeats, and tickets are becoming the default safety rails for agent teams

Agent governance controls: A concrete pattern is emerging where creators treat agent stacks like production systems—enforcing per-agent budgets, requiring periodic heartbeats (liveness), logging work into tickets (auditability), and keeping explicit “fire” controls (terminate/override) as the control layer, as outlined in Paperclip’s feature framing via the GitHub repo and echoed in the workflow mention.

This matters operationally for creative shops because it’s a repeatable way to prevent “background agents” from quietly running up cost, drifting off-brief, or producing untraceable decisions—without needing to reduce everything back to single-shot prompting.
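The four controls named above (budget, heartbeat, ticket log, kill switch) can be sketched as one small guard object. This is a minimal illustration, not Paperclip's API: the `AgentGuard` class, its field names, and the dollar and timeout values are all hypothetical.

```python
import time
from dataclasses import dataclass, field


@dataclass
class AgentGuard:
    """Hypothetical per-agent control record (not Paperclip's actual API)."""
    name: str
    budget_usd: float
    heartbeat_timeout_s: float
    spent_usd: float = 0.0
    last_heartbeat: float = field(default_factory=time.monotonic)
    killed: bool = False
    tickets: list = field(default_factory=list)

    def heartbeat(self, now=None):
        """Record that the agent is still alive."""
        self.last_heartbeat = time.monotonic() if now is None else now

    def record(self, ticket, cost_usd):
        """Log a unit of work and charge it; refuse if killed or over budget."""
        if self.killed:
            raise RuntimeError(f"{self.name} has been terminated")
        if self.spent_usd + cost_usd > self.budget_usd:
            self.kill("budget exceeded")
            raise RuntimeError(f"{self.name} exceeded its budget")
        self.spent_usd += cost_usd
        self.tickets.append((ticket, cost_usd))

    def check_liveness(self, now=None):
        """Terminate the agent if its last heartbeat is too old."""
        now = time.monotonic() if now is None else now
        if now - self.last_heartbeat > self.heartbeat_timeout_s:
            self.kill("missed heartbeat")
        return not self.killed

    def kill(self, reason):
        """Explicit 'fire' control; the reason lands in the ticket log."""
        self.killed = True
        self.tickets.append((f"KILLED: {reason}", 0.0))


guard = AgentGuard("caption-writer", budget_usd=1.0, heartbeat_timeout_s=30.0)
guard.record("draft caption for reel", 0.4)
```

Routing every charge through `record` and writing kills into the same ticket list keeps spend, liveness, and termination in one audit trail, which is the traceability point the pattern is after.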

Paperclip open-sources a control panel for running multi-agent “companies”

Paperclip (paperclipai): An open-source orchestration platform is getting shared as the missing UI layer for coordinating multiple agents toward business goals—explicitly including OpenClaw-compatible agents—per the workflow mention and the linked GitHub repo.

The project frames itself as an ops console for agent teams (persistent sessions, threaded tickets, “heartbeats,” and cost controls), which maps closely to how creative teams are starting to run multi-tool pipelines when one model/tool isn’t enough to finish a deliverable end-to-end.

🎬 Video generation craft: Motion Control swaps, arena rankings, and fast local renders

Today’s video chatter is less about “new models” and more about what’s working in practice: Kling’s Motion Control handling tricky face swaps, Grok topping an Image-to-Video arena chart, and LTX-2.3 speed notes from creators. Excludes OpenClaw/agent benchmarking (covered in the feature).

Grok Imagine Video tops Image-to-Video Arena, edging Veo 3.1 variants

Grok Imagine Video (xAI): A widely shared Image-to-Video Arena screenshot shows grok-imagine-video-720p ranked #1 at 1404 ±6 (preliminary) with 66,616 votes, narrowly ahead of Google Veo 3.1 audio 1080p at 1402 ±12, as captured in the Arena leaderboard.

Treat it like a preference signal, not a lab eval: the same board also lists separate Veo 3.1 “fast” and Grok 480p entries, implying creators are voting on distinct quality/speed presets rather than one canonical model.
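A quick check shows how narrow the lead is. The sketch below assumes the ± figures are symmetric uncertainty margins around each score; the screenshot does not define them, so that reading is an assumption.

```python
def interval(score, margin):
    """Return the (low, high) range implied by score ± margin."""
    return (score - margin, score + margin)


def overlaps(a, b):
    """True if two closed intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


grok = interval(1404, 6)    # grok-imagine-video-720p
veo = interval(1402, 12)    # Veo 3.1 audio 1080p

print(grok, veo, overlaps(grok, veo))  # (1398, 1410) (1390, 1414) True
```

Under that assumption the Grok range sits entirely inside the Veo range, so the #1 ordering is not clearly separated at the reported margins.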

Kling Motion Control: multi-angle face swaps hold up surprisingly well

Kling Motion Control (Kling): A creator stress-tested Motion Control on “known” faces (hard mode because viewers notice drift faster) and showed it can keep a subject’s pose/scene while swapping through multiple celebrity identities across tricky angles—still imperfect, but notably stable for a single clip according to the Motion Control character swap demo.

This is the kind of shot that breaks quickly when head turns, lenses shift, or the face gets partially occluded; the demo frames Motion Control as more than a gimmick when you need continuity across a cutless move.

LTX-2.3 local throughput: a UGC “end gag” in ~45 seconds on a 5090

LTX-2.3 (LTX): A creator reports generating a common UGC-style “hard cut end” gag in 45 seconds on a 5090, flagging how fast local iteration is getting for short-form beats in the Local render speed note.

The clip itself is a simple, repeatable pattern (fast zoom + smash cut to “The End”), but the notable part is the throughput claim—this is the kind of timing that changes how many variants you can try per hour.
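To put the throughput claim in per-hour terms, here is a rough calculation. It assumes renders run back to back on one GPU; the `overhead_s` parameter (time spent reviewing or re-prompting between renders) is a hypothetical addition, not something the post reports.

```python
def variants_per_hour(seconds_per_render, overhead_s=0.0):
    """Whole renders that fit in one hour, run sequentially."""
    return int(3600 // (seconds_per_render + overhead_s))


print(variants_per_hour(45))        # 80
print(variants_per_hour(45, 15.0))  # 60
```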

Kling 3.0: fog-and-silhouette monsters are emerging as a repeatable win

Kling 3.0 (Kling): A creator calls out foggy monster scenes as a particularly strong aesthetic lane—where silhouette readability and eye-glow beats survive the model’s motion/texture noise—backed by a short horror blocking example in the Fog monster clip.

The shared clip leans on big shapes, low detail, and high-contrast eye accents, which is a practical recipe for hiding identity drift while still landing “threat presence.”

MartiniArt as a node-based front-end for Seedance 2 generation runs

Seedance 2 (Anima Labs ecosystem): A creator calls out generating Seedance 2 outputs via MartiniArt as their preferred setup because the node-based workflow makes iteration and organization “very convenient,” per the Node-based workflow note.

This is a tooling ergonomics signal: as clips get longer and more multi-pass, creators are gravitating to graph UIs that make branching, reusing, and swapping inputs less painful than linear prompt histories.

Kling 3.0 micro-horror test: industrial close-ups that cut to sterile lab

Kling 3.0 (Kling): A short “horror” experiment uses an industrial robot arm handling a glowing object and then hard-switches the environment into a bright, clinical lab vibe, as shown in the Robot arm horror test.

This kind of setting swap is a useful pattern for thriller pacing: start in grime and shadows (detail ambiguity), then reveal in sterile light (detail scrutiny).

🧠 Workflows that ship: multi-tool pipelines, ‘service-as-software,’ and agent-native collaboration

High-value creator posts leaned into repeatable workflows and operating models: Freepik Spaces pipelines (Nano Banana→Kling), prompt-to-deliverable “let AI handle the rest,” and the business pattern of packaging expertise as an AI-driven service. Excludes OpenClaw ecosystem items (feature).

Freepik Spaces theme-park tour: Nano Banana assets, then Kling 3.0 animation pass

Freepik Spaces (Freepik): A concrete multi-tool template for “ride-through” videos is being shared: generate three core assets in Nano Banana (logo, map, and the ride-car POV frame), composite the ride car into each scene, then run a Kling 3.0 animation sweep to produce a full tour sequence, as walked through in the Step-by-step workflow and finalized with the shareable Space in the Space duplication link. This is framed as a low-overhead way to produce a whole multi-scene piece without rethinking edits every time.

• Reusable template: The Space is positioned as “duplicate and make it your own,” with the underlying nodes/prompts included per the Space duplication link.

• Asset discipline: The workflow’s constraint—lock the ride car early, then vary environments/lighting/gestures—shows up in the compositing guidance in the Step-by-step workflow.

The only hard dependency called out is Kling support for start/end-frame driven shots, which the thread treats as the batch-enabler in the Step-by-step workflow.

Service-as-a-software: packaging expertise as the moat as agents absorb UI

Service-as-a-software thesis: A creator-side business model argument is getting explicit: “90% of the application layer” (dashboards/forms/CRUD) gets absorbed by foundation model product updates, while the durable opportunity is turning domain playbooks into AI-delivered services at scale, per the Service-as-software thread. It’s framed as a shift away from spending months on “beautiful UI applications” toward selling outcomes, with “agents just do it. One prompt. Done,” as stated in Service-as-software thread.

Examples given include service businesses (ad agency, IP law, accounting/consulting) encoding “what good looks like” and scaling delivery with near-zero marginal cost, following the Service-as-software thread.

Kling 3.0 start-end frames plus one master prompt for batch scene generation

Kling 3.0 (Kling AI): A repeatable way to crank through lots of related shots is being described as “initial + final frames + one master prompt,” then generating the whole set and stitching after—explicitly framed as avoiding overthinking cuts, per the Initial and final frames note. It’s presented inside a theme-park tour build, but the mechanism is general: define a consistent motion/style instruction once, then feed different start/end pairs.

• Why creatives care: This makes multi-shot sequences feel closer to a render queue—set the motion rules once, then swap the bookends—matching the “generate all the animations in one go” claim in the Initial and final frames note.

The thread doesn’t share exact Kling parameter values, but it does clearly specify the control surface (start/end frames + master prompt) in Initial and final frames note.
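The control surface described here (start/end frame pairs plus one shared master prompt) maps naturally onto a job queue. The sketch below only builds the job list and makes no call to Kling. The `ShotJob` type, the file names, and the prompt text are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotJob:
    """One shot to render: two bookend frames and the shared prompt."""
    shot_id: str
    start_frame: str
    end_frame: str
    prompt: str


def build_queue(master_prompt, frame_pairs):
    """Pair every (start, end) frame with the same master prompt."""
    return [
        ShotJob(f"shot_{i:02d}", start, end, master_prompt)
        for i, (start, end) in enumerate(frame_pairs, start=1)
    ]


# Hypothetical inputs; chaining end frames into the next start is one option.
pairs = [("gate.png", "plaza.png"), ("plaza.png", "coaster.png")]
queue = build_queue("Smooth forward ride-car motion, consistent lighting", pairs)
for job in queue:
    print(job.shot_id, job.start_frame, "->", job.end_frame)
```

Keeping the prompt constant and varying only the bookends is what makes the set behave like a render queue: a change to the motion rules is a single edit that applies to every shot.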

Prompt-driven contributions: issues plus copy-paste prompts replacing PRs

Agent-native collaboration pattern: A repo-level workflow is being normalized where maintainers stop taking PRs and instead ask contributors to file issues and provide “detailed prompts” they can paste into an agent, as shown by Theo’s notice screenshot shared in the Repo disclaimer. This treats prompting as the contribution artifact (problem description + execution instructions) and the maintainer/agent combo as the implementation path.

The post also frames it as part of a broader “reinvent PRs/GitHub/collaboration” push, per the Repo disclaimer.

AI Studio Remix as distribution: copy an app template into your Cloud project

Google AI Studio (Google): A template-as-product sharing loop is highlighted: publish a small app (here, a local freelance rate estimator) and let others clone it into their own Google Cloud project via the in-product “Remix” action, per the App demo and premise and the step list in Remix instructions. The pitch is that once remixed, users can tweak defaults and UI rather than rebuilding.

• Distribution mechanic: The explicit steps—open in AI Studio, tap “Remix,” choose a Cloud project—are treated as the enabling workflow in Remix instructions.

The content doesn’t show the underlying model/tooling config, but it does document the replication pathway that makes these mini-app templates shareable at speed in Remix instructions.

One-prompt content assembly: idea to complete blog post in a single run

Text-to-deliverable workflow: A simple “type an idea, get a deliverable” loop is shown for written content—enter a single prompt (“Write a blog post about sustainable gardening”) and watch a full article populate, ending with a “DONE” state in the Blog post generation demo. It’s positioned as a minimal-input assembly line: you bring topic selection and direction; the system outputs a complete draft fast.

The clip is light on tool attribution and settings, but it’s a clear reference for how creators are packaging writing tasks into a single-shot generation step, as demonstrated in Blog post generation demo.

🧩 Copy/paste prompt kit: Midjourney SREFs, Firefly prompt formats, and brand-ready templates

A heavy prompt day: multiple Midjourney SREF codes (retro anime, Moebius, ink-wash fantasy, cyberpunk), plus practical prompt templates for product shots and visual transformations. Focus is on reusable aesthetic recipes, not tool capability news.

A “conceptual branded outerwear” smart prompt forces brand logic + 3D layout

Nano Banana 2 (Prompt kit): A structured “Senior Creative Director & 3D Artist” prompt is being shared to generate a branded outerwear product showcase with adaptive identity logic (nature prints for outdoor brands; icon-pattern prints for food/tech/other), a fixed two-garment layout (front + 3/4 back), and explicit camera/lighting rules (85mm lens; white cyclorama; soft key + rim lights), as written in the Branded outerwear smart prompt.

It also hard-codes logo placement (subtle chest mark; large integrated back logo) and a footer line for campaign-like presentation, which pushes generations toward catalog-ready consistency instead of single hero shots.

A split blueprint-to-render prompt template is spreading (Firefly + Nano Banana 2)

Prompt template (Image): A copy/paste format is being shared for “blueprint vs final render” composites: “A [subject] split vertically… left half as a technical blueprint, right half as a 3D render, the seam between them glitching” per the Blueprint-to-render prompt.

The same post shows multiple worked examples (motorcycle, submersible, robot, Notre Dame), and explicitly notes it was made in Adobe Firefly with Nano Banana 2 in the Blueprint-to-render prompt.
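Since the format is a fill-in-the-blank template, it can be kept as a string with one slot. The sketch below reuses the quoted wording verbatim, including the “…” that elides part of the original prompt (left unfilled here), and substitutes subjects named in the post; the `fill` helper is a hypothetical convenience.

```python
# Template as quoted in the post; the "…" is the source's own elision.
TEMPLATE = (
    "A [subject] split vertically… left half as a technical blueprint, "
    "right half as a 3D render, the seam between them glitching"
)


def fill(subject, template=TEMPLATE):
    """Substitute the [subject] slot, leaving the rest untouched."""
    return template.replace("[subject]", subject)


for subject in ["motorcycle", "submersible", "robot"]:
    print(fill(subject))
```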

Midjourney SREF 378114956 blends ink wash, ukiyo‑e color, and fantasy

Midjourney (SREF): Promptsref is pitching --sref 378114956 as an “ink dreamscape” blend—minimal linework, ink wash energy, and ukiyo‑e palette with modern fantasy illustration, per the Ink wash style code and the linked Style breakdown page.

The visual examples skew toward poster-ready silhouettes and “spirit tale” mood (high readability, stylized negative space), which is often what you want for book-cover comps or indie game key art.

Midjourney niji 6 SREF 732508174 is being used as a cyberpunk mecha preset

Midjourney (SREF): A cyberpunk/mecha recipe is being shared as “SREF 732508174 with niji 6,” described as auto-balancing fluorescent neon character color against darker industrial backdrops for a game-splash-screen look, according to the Cyberpunk mecha SREF claim and the accompanying Style guide page.

The claim is less about a single subject and more about consistent material contrast (soft character detail vs hard metallic armor), which is usually the failure mode when you’re iterating mecha designs quickly.

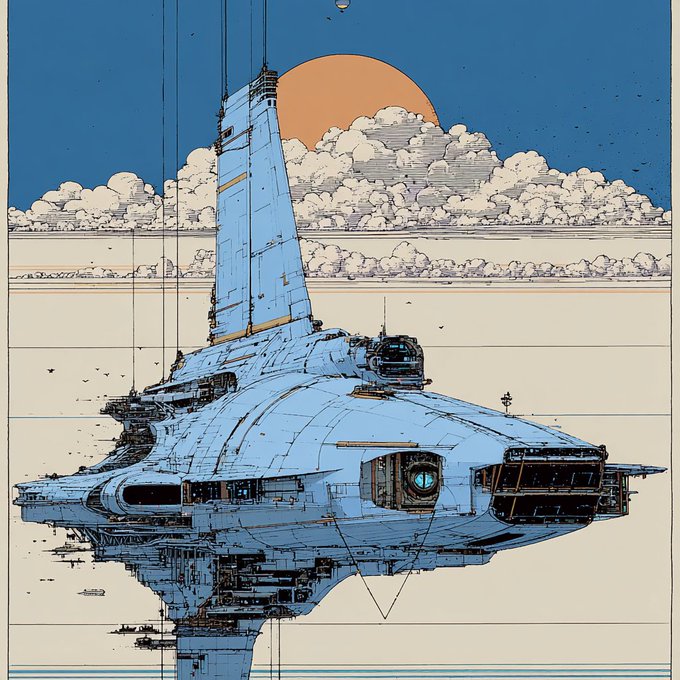

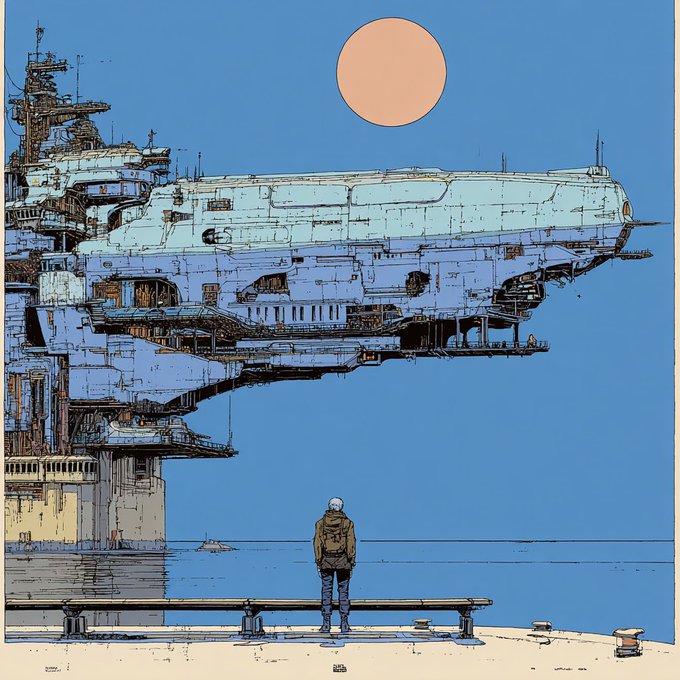

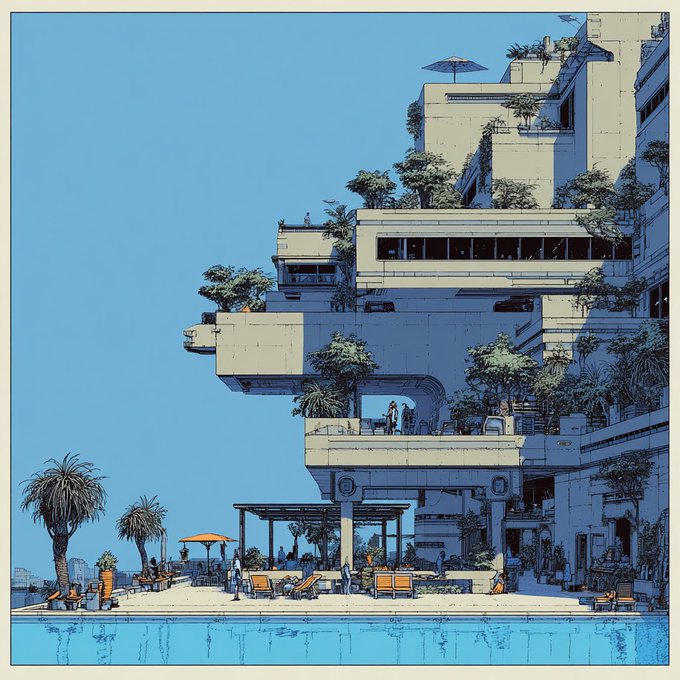

Midjourney SREF 1214835837 nails Moebius-style European retro‑futurism

Midjourney (SREF): A Moebius / bande dessinée lookdev shortcut is being shared as --sref 1214835837, framed as “European retro-futurism” that also evokes Syd Mead / Chris Foss / Enki Bilal per the Moebius style reference.

The examples in the same post read as clean-line sci‑fi illustration with flat color blocks and crisp architectural silhouettes, which tends to be useful when you want concept art that stays graphic instead of drifting into painterly diffusion mush.

Midjourney SREF 2726004757 targets 80s–90s cel anime OVA cinematics

Midjourney (SREF): A retro cel-animation reference is circulating as --sref 2726004757, described as 80s–90s anime with a cinematic OVA feel and semi-realistic character design, with studio touchstones like Pierrot / Madhouse / Sunrise / TMS called out in the Retro anime SREF drop.

The attached frames lean into rain-lit mood, dusk gradients, and thicker linework—good for storyboard stills or title-card frames that need to read like scanned cel art rather than modern anime polish.

Promptsref’s “Vintage Dark Cinematic” SREF pairs film noir light with 80s sci‑fi grain

Midjourney (SREF): Promptsref posted an analysis of its “most popular sref,” listing --sref 929505068 2200230567 --v 7 --sv6 and describing a “Vintage Dark Cinematic” aesthetic (low-key lighting, visible grain/noise, shimmering highlights) in the Top SREF analysis post, with broader context in the SREF library page.

The write-up explicitly frames it as useful for film/game concept frames, luxury-ish product stills (metal/jewelry), and album covers—because the style bakes narrative texture into highlights rather than relying on subject matter alone.

A Nano Banana Pro/2 JSON prompt dials in “Y2K Chrome_Plush” materials

Nano Banana Pro/2 (Prompt kit): Lloydcreates shared a full JSON-style prompt for “Y2K Chrome_Plush” renders—parametric ribbed geometry plus plush faux-fur fused with iridescent liquid chrome, floating on a pure black void with rim light and internal glow—per the Y2K Chrome_Plush prompt.

The same prompt includes heavy negative constraints (no text/logos/stands; no cropped edges; no DOF blur on product) and a specific rendering vibe callout (“8k octane render,” “Unreal Engine 5.4”), which helps keep outputs looking like product CG rather than concept collage.

A reusable prompt converts anime characters into live-action editorial portraits

Prompt template (Image transformation): A copy/paste prompt is circulating to turn “anime characters into realistic human portraits,” specifying fashion-editorial lighting, filmic color science, subtle halation/bloom, and explicit texture requirements (skin pores, lifelike hair strands), while preserving the original framing—see the full text in the Anime-to-photoreal prompt.

The post pairs the template with an example transformation (illustration → photoreal portrait), showing the kind of “same composition, new medium” edit this wording is aiming for.

Midjourney SREF 3027421074: “Cyber Dreamscape” vaporwave gradients + glow

Midjourney (SREF): Another style reference making the rounds is Sref 3027421074, framed as a “Cyber Dreamscape” that mixes 80s retro sci‑fi with softer neon gradients and particle-like glow, as described in the Cyber dreamscape SREF post and expanded on in the Style detail page.

The positioning here is calmer and more UI/cover-friendly than high-contrast cyberpunk—more “velvet gradients” than hard neon edges.

🖼️ Image models & visual formats creators are shipping daily

Image-heavy posts skewed toward repeatable formats (daily puzzles, cinematic poster drops, surreal stills) and new photo-model chatter. This section captures what’s being generated and shared, excluding raw prompt dumps (handled in Prompts & Style Drops).

Higgsfield is claimed to have launched Soul Cinema, an in-house cinematic photo model

Soul Cinema (Higgsfield): A shared claim says Higgsfield “just dropped Soul Cinema,” positioning it as an in-house AI photo model aimed at cinematic frames with richer textures and realism, per the Soul Cinema mention. The post doesn’t include a spec sheet, pricing, or a public eval artifact, so the current signal is mostly positioning rather than measurable deltas.

Hidden Objects levels .054–.055 keep the Firefly + Nano Banana daily puzzle loop rolling

Hidden Objects (Adobe Firefly + Nano Banana 2): Following up on Daily puzzles—the daily “find 5 items” reply-bait format shipped two new boards, with a jungle-root scene in the Level .054 post and a cluttered artist workbench in the Level .055 post; both keep the same mechanic of a dense scene plus the 5 target icons at the bottom.

• Level .054 (jungle tree roots): The five targets include a gator, compass, padlock, shell, and yarn ball, as shown in the Level .054 post.

• Level .055 (artist workbench): The five targets include an anchor, banana, harp, moon, and frog, as laid out in the Level .055 post.

Midjourney plus Magnific AI shows up as a “Synthetic Deletion” finishing workflow

Midjourney + Magnific AI: The “Synthetic Deletion” post frames a repeatable finishing loop—generate the base in Midjourney, then run it through Magnific to push texture/detail and a specific gritty-polished aesthetic, as shown in the Synthetic Deletion image and reinforced by the Settings callout.

What’s missing in the tweets is the exact Magnific settings preset (strength, detail, style), but the share reads like a reference look for “post-process to add tactile surface noise” rather than a new model release.

A high-density city diorama still leans on “world-inside-a-cube” composition

Surreal illustration format: A highly detailed intersection scene stacks multiple scale cues—dense signage and crowds, oversized suited figures, and a giant white bird carrying a transparent cube that contains its own miniature coastal diorama, as shown in the City diorama still.

This kind of “nested diorama” composition is showing up as a sharable still format because it reads like a poster at thumbnail size but rewards zooming with micro-detail.

DrSadek_ keeps shipping cinematic surreal stills as standalone poster drops

Cinematic still cadence: DrSadek_ posted multiple standalone surreal frames in a tight window—“The Spiral Beyond” in the Spiral Beyond still, “The Book of Light and Shadow” in the Book still, and a “Good night” cloud-brain boat image in the Good night still—all formatted like finished key art rather than process shots.

The repeatable pattern is the same: one centered subject, one legible silhouette, and a single high-contrast “portal light” focal point that reads quickly in-feed.

🧰 Creator apps to watch: private-by-design tools, mini-app builders, and vertical AI products

A cluster of ‘creator-facing apps’ surfaced: a HIPAA-aligned local-first writing tool, text-to-mini-app containers, a film-critique app wrapper, and an AI home-design system trained on permit-approved plans. These are product surfaces creators might adopt, not just model demos.

STAGES teases a 3/11 drop and publishes a “not a wrapper” platform argument

STAGES AI: STAGES is continuing its public build-in-the-open cadence with an “It’s coming 3/11” teaser, following up on the VISIONZ tease (director-canvas direction), via the post in 3 11 teaser.

The same thread also shares a whitepaper excerpt arguing that calling STAGES “just a wrapper” is “analytically wrong,” listing platform mechanics like route guards, capability middleware, ledger state, and rollback plans, as shown in Wrapper thesis excerpt.

• Control layer angle: A separate note frames how STAGES/OpenClaw are “kept controlled by their user” and ties that to an upcoming desktop release timeline, per Desktop control note.

DraftedAI pitches permit-trained planning that outputs floor plans fast

DraftedAI: Drafted is being pitched as an AI home-design system trained on real house plans that were actually built and passed permitting; the workflow claim is “type what you want” and get 5 floor-plan options quickly, as shown in Drafted product intro and the follow-up explainer in Old way vs Drafted.

• Positioning: The team frames this as “not ChatGPT with a wrapper,” but a specialized model that encodes buildable constraints, per Drafted product intro.

• Traction and financing: The thread cites $1.65M raised at a $35M valuation and “1,000 daily users,” with backers listed (including Patrick Collison), as stated in Backers and round and Usage and scope.

Typeless claims HIPAA compliance by keeping history on-device

Typeless: Typeless is being positioned as “fully HIPAA compliant,” with the key product claim being that user history stays on-device (not stored in the cloud) and inputs are not used for model training, as stated in the HIPAA compliance claim.

• Privacy posture: The pitch is aimed at sensitive writing workflows (medical notes, legal docs, private journaling) where “most AI tools” retain or train on prompts, per the framing in HIPAA compliance claim.

• Compliance roadmap: Typeless also name-drops SOC 2, ISO 27001, and GDPR work “underway,” as described in HIPAA compliance claim.

Wabi’s pitch: create unlimited mini-apps that run inside Wabi

Wabi: Wabi is getting attention for a “mini-apps inside one host app” model—create unlimited small tools and run them inside Wabi, framed as a middle ground between an iOS Shortcut and shipping a full standalone app, according to Mini-app container pitch.

This is a distinct distribution surface for creators who want lots of single-purpose utilities (checklists, calculators, lightweight editors) without maintaining separate installs, as described in Mini-app container pitch.

A Gemini Film Critic AI gets packaged as an Opal app

Opal (Google): A creator-built Gemini Film Critic AI has been wrapped into an Opal app so film feedback can run as a repeatable critique tool rather than a one-off chat session, as described in Film critic app share and linked via the Opal project in Opal app page.

The positioning is straightforward: use the app to “get feedback on my work,” which maps cleanly to script notes, cut reviews, and pitch-deck iteration loops, per Film critic app share.

MartiniArt gets called out as a preferred node-based Seedance 2 workflow

MartiniArt: A creator calling MartiniArt their preferred place to run Seedance 2 highlights “node-based workflow” ergonomics as the reason—implying more controllable, repeatable generation graphs for longer iteration sessions, per Node-based Seedance note.

This is a UI/ops signal more than a model claim: the tool choice is about managing complexity (branching prompts, variations, batching), as described in Node-based Seedance note.

📣 Marketing creatives: UGC hooks and AI-made ad structure that’s scaling

Marketing-oriented creator posts focused on what’s working on short-form platforms: ‘mirror moment’ hooks, pacing, and curiosity-first sequencing. Excludes regulation/disclosure news (covered in Trust & Policy).

The “mirror moment” UGC hook keeps scaling: reaction → problem → solution

UGC hook structure: A creator breakdown claims that TikTok-style ads in the $95k/month range increasingly open on a mundane bathroom mirror moment (hair pulled back, arms up, a quick facial “realization”) before any product appears, arguing that the authenticity is the scroll-stopper, per the Hook structure notes.

• Sequence that’s being copied: The post spells out the ordering as “reaction first / problem second / solution last,” with the product reveal intentionally delayed to let curiosity build in the Hook structure notes.

• Playbook distribution loop: The same post uses “RT + comment ‘mirror’ and I’ll send you the framework” as a built-in lead-capture mechanic, as described in the Hook structure notes.

Luma’s “Travel” spot is pitched as end-to-end AI ad production with Agents

Luma Agents (Luma Labs): Luma shares a finished “Luma Travel” commercial and says the concept, visuals, voice, and music were created start-to-finish with Luma Agents, crediting Jon Finger in the Luma Travel ad claim.

The marketing-relevant takeaway is the positioning: not “AI helped,” but “one system produced the full stack” (creative + post + audio) as stated in the Luma Travel ad claim.

Sponsor ethics debate: “no ethical consumption” vs taking predatory money

Creator sponsorship ethics: A post draws a line between “no ethical consumption under capitalism” as a systemic observation and “I’ll take the money from people we both know are predatory” as an individual choice, arguing that “bad actors survive because good people provide cover through participation,” per the Creator legitimacy critique.

It’s framed as a reputation and trust issue in the creator economy—who takes which checks becomes part of the “legitimacy problem,” as described in the Creator legitimacy critique.

🛡️ Copyright, disclosure, and AI ad rules tightening around creatives

Policy and trust posts centered on disclosure requirements and copyright: EU Parliament moves on training-data transparency and remuneration, NY ad disclosure rules, and platform-level ‘Made with AI’ labeling debates. This is the day’s main governance cluster.

EU Parliament pushes a copyright licensing path for generative AI training

European Parliament: MEPs are set to vote (Tuesday, March 10) on a Legal Affairs Committee report that frames generative‑AI training as a copyright/compensation problem—calling for mandatory transparency about which copyrighted works were used, “fair remuneration” for rightsholders, and mechanisms for creators to exclude their work from training, as summarized in the Vote preview summary and expanded via the Policy recap blog. This matters to working creatives because it’s one of the clearest “operational” policy pushes toward licensable training data (and away from opaque scraping) that could shape how image/video/audio model vendors source datasets.

• Licensing market ask: The report explicitly calls on the Commission to help create a licensing market so deals can be struck with individual creators, not only collecting societies, per the Vote preview summary.

• News/media carve‑outs: Press and news media are called out for targeted protections and compensation demands (AI “exploiting journalistic content”), as described in the Vote preview summary.

It’s still an own‑initiative procedure (recommendations, not binding law), with the press conference and next steps noted in the Vote preview summary.

New York AI-in-ads disclosure rule is flagged with June 9, 2026 start date

New York (advertising disclosure): A circulating claim says New York will require brands to disclose AI use in ads starting June 9, 2026, with fines described as $5,000+ for noncompliance in the Regulation claim. For AI ad makers, the practical impact is less about “labeling etiquette” and more about production ops: tracking which shots, voice, and likeness elements were generated or edited so the final deliverable can be disclosed consistently.

The tweet doesn’t include the underlying statute/reg text, so scope details (which media, which thresholds, and what counts as “AI model”) aren’t evidenced beyond the Regulation claim.
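As an illustration of the tracking burden described above, here is a minimal sketch of a per-deliverable provenance log. Everything in it is hypothetical: the `AssetRecord` and `Deliverable` names, the field layout, and above all the rule that any AI-generated or AI-edited element triggers disclosure. The actual scope and thresholds are not evidenced in the Regulation claim.

```python
from dataclasses import dataclass, field


@dataclass
class AssetRecord:
    """One element of a deliverable (hypothetical schema, not from any statute)."""
    name: str
    kind: str                  # e.g. "shot", "voice", "likeness"
    ai_generated: bool = False
    ai_edited: bool = False
    tool: str = ""             # free-text note on what was used


@dataclass
class Deliverable:
    title: str
    assets: list[AssetRecord] = field(default_factory=list)

    def ai_touched(self) -> list[AssetRecord]:
        """Return every element that was generated or edited with AI."""
        return [a for a in self.assets if a.ai_generated or a.ai_edited]

    def needs_disclosure(self) -> bool:
        # ASSUMPTION: any AI-touched element triggers disclosure.
        # The real threshold is unknown from the source.
        return bool(self.ai_touched())

    def disclosure_summary(self) -> str:
        """Summarize which kinds of elements involved AI."""
        kinds = sorted({a.kind for a in self.ai_touched()})
        if not kinds:
            return "No AI-generated or AI-edited elements recorded."
        return "AI used in: " + ", ".join(kinds)


spot = Deliverable(
    title="example spot",
    assets=[
        AssetRecord("opening shot", "shot", ai_generated=True),
        AssetRecord("narration", "voice"),
        AssetRecord("presenter face cleanup", "likeness", ai_edited=True),
    ],
)
print(spot.disclosure_summary())
```

The point of logging at the element level is that the disclosure decision can be re-derived later if the rule's real scope turns out to be narrower or broader than assumed here.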

X’s “Made with AI” toggle raises reach and cutoff questions for creators

X (content disclosures UI): Creators are circulating a screenshot of X’s “Content disclosures” modal showing two post-level toggles—“Disclose paid promotion” and “Made with AI”—with concern about unclear cutoffs (is the label forever?) and whether the algorithm gates distribution for labeled posts, as discussed in the Disclosure toggles screenshot.

• Early anecdotal signal: One reply claims they’ve used the “Made with AI” disclosure all week and “it doesn’t seem to be hurting my reach… I got boosted,” while acknowledging it could change, per the Reach anecdote.

Net: the UI exists and is being noticed; the enforcement/impact model (and any advertiser-facing implications) remains unstated in the Disclosure toggles screenshot.

Allegation of “minor-looking” AI companion ads puts pressure on platform review

YouTube (ad review & enforcement pressure): A retweeted thread claims YouTube is running ads for “AI girlfriends that look like minors,” calling it “disgusting,” per the Allegation in thread context. For creators and studios selling AI-generated ads or character content, this kind of allegation tends to accelerate platform policy tightening (creative approvals, age gating, and disclosure requirements), even when the public evidence in the post is limited to the Allegation in thread context.

The tweet provides no creative samples or policy response, so verification and specifics aren’t available from the Allegation in thread context.

Ben Stiller calls out unauthorized government use of a film clip

IP permission norms: Ben Stiller is quoted asking the White House to remove a Tropic Thunder clip, saying “We never gave you permission,” in the Permission complaint. For AI creatives, it’s a reminder that rights enforcement conversations are broadening beyond model training into downstream use and distribution—especially when high-visibility channels repost or remix copyrighted footage.

No additional details (which account/post, licensing status, or response) are included beyond the Permission complaint.

📊 Creator distribution signals: analytics, labeling anxiety, and the cost of posting

When the platform itself becomes the story: creators shared analytics snapshots, debated whether ‘Made with AI’ labeling affects reach, and joked about the hidden labor (e.g., watermarking). This category is only about distribution dynamics, not marketing strategy.

X’s “Made with AI” toggle raises new reach and labeling questions

X Content disclosures (X): A UI modal now exposes two posting toggles—“Disclose paid promotion” and “Made with AI”—and creators are openly asking whether the AI label is permanent and whether it quietly throttles reach (especially if you’re paying for X), as described in the Disclosure toggles screenshot.

• Early anecdote, not proof: One reply claims they’ve used the AI label “all week” with no obvious penalty—“If anything I’d say I got boosted”—but also flags uncertainty about whether platform behavior might change later, per the Reach test reply.

X analytics volatility becomes the story, not the post

X Analytics (X): A two‑week “Account overview” screenshot highlights how spiky creator distribution can be—170.1K impressions, 9.6% engagement rate, and visible day-to-day peaks/drops—framed as a “lights out” complaint in the Analytics swings screenshot.

The subtext is distribution unpredictability: even with solid engagement, the graph shape (and the sudden falloff) is what creators end up reacting to publicly, per the Analytics swings screenshot.

Creators keep publishing weekly KPI dashboards as content

Creator KPI culture: A “Growing in public” post shares 7‑day daily averages—14,929 impressions, 10.06% engagements, 64.6 bookmarks, +40.7 net followers, and 12 posts published—as a standalone update in the 7-day averages.

It’s another signal that distribution metrics themselves are becoming a recurring content format (and a feedback loop for what to post next), as shown by the structure of the 7-day averages.

The “watermarking tax” becomes a creator economy punchline

Posting ops overhead: A joke breakdown claims “posting fire takes: 3%” versus “watermarking: 97%,” pointing at the non-creative labor that shows up once you’re pushing lots of AI assets and trying to keep attribution/ownership intact, as framed in the Watermarking time joke.

It’s not a benchmark, but it does capture a real distribution-side pain: high output turns finishing and provenance into the time sink, per the Watermarking time joke.

🎞️ What creators released: shorts, trailers, and format experiments

Finished or semi-finished creative outputs dominated: AI short-film experiments, trailers, and polished visual pieces made with current gen video stacks. This category is for “the thing” being released, not the underlying prompt packs.

Luma Travel: a 60-second commercial made end-to-end with Luma Agents

Luma Travel (Luma Labs): Luma shared a ~60s “Luma Travel” spot where the concept, visuals, voice, and music were produced start-to-finish with Luma Agents, crediting creator Jon Finger in the release post Luma Travel showcase. It reads as proof that “ad assembly” is now an agent workflow, not a tool chain.

• What’s notable on-screen: the piece is structured like a conventional brand spot (title card, hero product shots, world transitions), which is the practical bar agencies care about, as shown in the Luma Travel showcase.

• Creator signal: Finger also posted additional experiments with the same agent stack, suggesting this isn’t a one-off demo but an ongoing production loop More Luma Agents tests.

Anima_Labs releases “ICE,” their first AI short movie using Seedance 2

ICE (Anima_Labs): Anima_Labs is calling “ICE” their first AI short movie and is explicitly tying the jump to longer formats to Seedance 2, framing the project as a step beyond quick clips toward short-film pacing ICE short movie note. Runtime, workflow details, and distribution aren’t included in the post, but the intent—“increasingly longer formats”—is stated directly ICE short movie note.

Historical portrait montage built with Nano Banana 2 and Kling 3.0 Multishot

Nano Banana 2 + Kling 3.0 (montage format): OzanSihay released a ~54s Turkish-language historical montage that cycles through stylized portraits of women heroes, noting it was created with Nano Banana 2 plus Kling 3.0 Multishot Historical montage post. The result lands as a repeatable “named-figure sequence” format: consistent portrait styling, hard cuts between characters, and legible on-screen titling throughout Historical montage post.

Retro-industrial sci‑fi short: AI-made animation with industrial metal score

Retro-industrial sci‑fi animation (creator short): Artedeingenio released a ~3m24s retro-industrial sci‑fi sequence—dense machines, pans across an industrial megastructure—explicitly framed as “all made with AI” and paired with an industrial metal soundtrack Retro-industrial short. The post also teases a forthcoming breakdown of how it was made, implying a repeatable pipeline behind the look Retro-industrial short.

Flow State: Joshua Bonzo’s abstract motion piece made with Luma

Flow State (Luma): Luma highlighted “Flow State,” a ~46s abstract piece by Joshua Bonzo built around a luminous, morphing blue form and slow camera motion, positioned as a finished motion-art clip rather than a tool demo Flow State post. The release is a clean example of “single-subject generative cinematography” as a shareable format.

THE LAST ARTIST drops a 20-second teaser ahead of a new trailer this month

THE LAST ARTIST (NAKIDpictures): A ~20s teaser montage dropped with the promise that a new trailer is coming “this month,” functioning as a project-marketing beat rather than a tool announcement Trailer teaser. The clip is edited like a conventional action trailer bumper (title card → rapid cuts → logo lockup), suggesting the team is packaging AI-made footage in familiar promo structure Trailer teaser.

Immortal paintings brought to life: a short AI animation compilation

Painting-to-motion format: awesome_visuals shared a ~73s compilation framed as “immortal paintings brought to life with AI,” credited to a Douyin source in the thread context Paintings-to-motion clip. It’s presented as a visual reference piece—classical artwork style translated into animated movement—without tool disclosure or a how-to breakdown Paintings-to-motion clip.

Metallic morph study: more Luma Agents cinematography experiments

Luma Agents (creator experiment): Jon Finger posted a ~34s abstract cinematography study—metallic/liquid object morphing and pulsing against a dark background—explicitly as “more playing with our Luma Agents,” with cinematography credit called out in the caption Luma Agents experiment. It’s a tight “motion-material study” format: one subject, controlled lighting, and continuous transformation Luma Agents experiment.

📄 Research radar for creators: real-time long video + action-conditioned generation

Research posts that matter to creatives focused on real-time generation and longer-form video systems, including paper+code drops with demos. This section stays practical: ‘what capability is coming’ rather than pure theory.

RealWonder ships real-time action-conditioned video generation (paper + code)

RealWonder (Stanford/USC): A new research release claims real-time video generation conditioned on physical actions, with the paper shared in the paper drop and a full code release echoed in the code release. The core pitch is interactive control—user actions drive a physics simulation step, which then conditions a lightweight diffusion video generator for fast, temporally coherent motion.

• Performance targets: The released implementation claims 13.2 fps at 480×832 using a 4-step diffusion generator, as described in the GitHub repo summary.

• What’s actually controllable: The repo summary emphasizes rigid/deformable/fluid/granular interactions via a physics-sim intermediate, rather than only camera motion or pose steering, per the GitHub repo.

• Creator relevance: This frames “directable” video as an input problem (action → motion) instead of a prompt-only problem, matching the real-time/interactive direction implied by the paper drop.
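To put the claimed numbers in latency terms, the arithmetic below converts 13.2 fps at 480×832 into an average time budget per frame and a pixel throughput. The per-step figure is only an amortized share under a loud assumption: that all of the frame budget goes to the 4 denoising steps, which ignores the physics simulation and decoding. The function names are illustrative and not taken from the repo.

```python
def frame_budget_ms(fps: float) -> float:
    """Average wall-clock time available per frame at a given throughput."""
    return 1000.0 / fps


def amortized_step_ms(fps: float, steps: int) -> float:
    """Per-frame budget divided across denoising steps.

    ASSUMPTION: all time is spent in the denoising steps, so this is an
    upper bound on the amortized per-step cost, not a measured value.
    """
    return frame_budget_ms(fps) / steps


def pixels_per_second(fps: float, width: int, height: int) -> float:
    """Raw pixel throughput implied by a frame rate and resolution."""
    return fps * width * height


budget = frame_budget_ms(13.2)             # roughly 76 ms per frame
step_share = amortized_step_ms(13.2, 4)    # roughly 19 ms per step
throughput = pixels_per_second(13.2, 480, 832)
print(f"{budget:.1f} ms/frame, {step_share:.1f} ms/step, {throughput:,.0f} px/s")
```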

Helios repo frames real-time, minute-scale video generation on a single H100

Helios (PKU-YuanGroup): A repo link circulating today positions Helios as a real-time long-video generator, with the share pointing to the GitHub repo via the demo and code RT. The repo summary claims minute-scale generation at 19.5 fps on one H100, leaning on a large diffusion architecture and efficiency work aimed at keeping long clips coherent.

The public signal here is simply that the project is being passed around as “real-time long video” infrastructure rather than a short-clip toy, as implied by the GitHub repo description.
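A rough way to read the 19.5 fps claim is to ask how long a minute of footage takes to generate. The sketch below treats 19.5 fps as generation throughput; the 24 fps playback rate in the second call is a hypothetical chosen for illustration, since the repo summary as quoted does not state a playback rate.

```python
def generation_seconds(clip_seconds: float,
                       playback_fps: float,
                       throughput_fps: float) -> float:
    """Wall-clock seconds needed to generate a clip.

    clip_seconds   : length of the finished clip
    playback_fps   : frame rate the clip is played back at
    throughput_fps : frames the generator produces per second
    """
    frames_needed = clip_seconds * playback_fps
    return frames_needed / throughput_fps


# If playback matches throughput, generation keeps pace with playback.
same_rate = generation_seconds(60, 19.5, 19.5)

# ASSUMPTION: a 24 fps playback target (not stated in the source).
at_24fps = generation_seconds(60, 24, 19.5)

print(f"{same_rate:.1f} s vs {at_24fps:.1f} s for a 60 s clip")
```

Whether the system counts as "real-time" therefore depends on the playback rate being compared against, which is worth checking in the repo before relying on the headline number.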

ELO-style leaderboards are shaping which video models creators pick

Image-to-video leaderboards: Creators are increasingly treating ELO-style arenas as decision inputs for model choice; the Image-to-Video Arena ranking shows grok-imagine-video-720p at #1 (1404 ±6) ahead of multiple Veo 3.1 variants, per the arena screenshot.

A second shared table adds a pricing lens—listing ELO plus API $/minute (e.g., Kling 3.0 1080p Pro at $20.16/min) in the pricing and ELO table.

Treat these as provisional signals—both are community-vote artifacts—but the spread (score, votes, and cost) is being used like a practical procurement heuristic, as shown by the way the arena screenshot and pricing and ELO table are posted.
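For readers using these tables as a procurement heuristic, two small calculations help: what a rating gap implies about head-to-head preference, and what a per-minute price means for a single clip. The sketch assumes the standard Elo logistic formula with a 400-point scale; the arena may fit ratings differently. The 1380 rating and the 10-second clip length are hypothetical values chosen for illustration.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected share of head-to-head votes for A over B (standard Elo).

    ASSUMPTION: the arena's scores follow the classic 400-point logistic
    scale; the source does not say how its ratings are computed.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def clip_cost_usd(price_per_minute: float, clip_seconds: float) -> float:
    """Cost of one clip given an API price quoted per minute of output."""
    return price_per_minute * clip_seconds / 60.0


# 1404 is the #1 score in the screenshot; 1380 is a hypothetical rival.
win_share = elo_expected_score(1404, 1380)

# $20.16/min is the quoted Kling 3.0 1080p Pro price; 10 s is hypothetical.
cost = clip_cost_usd(20.16, 10)

print(f"expected vote share: {win_share:.3f}, 10 s clip: ${cost:.2f}")
```

Under that assumption a 24-point gap is a modest edge rather than a decisive one, which is why the price column can reasonably carry as much weight as the rank.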

Virtual Try-Off LoRA claims ‘worn photo → clean garment asset’ workflow

Virtual Try-Off LoRA: A new LoRA is being shared with the stated workflow goal of taking a photo of a person wearing a garment and generating a clean, isolated garment asset, according to the Virtual Try-Off LoRA RT. Details (training set, base model compatibility, and failure modes) aren’t included in the captured tweet, but the framing is clear: it’s meant as a pipeline primitive for virtual try-on and apparel asset preparation, per the Virtual Try-Off LoRA RT.

🧑‍💻 Vibe coding reality check: agent-era collaboration norms and dev-tool gripes

Developer-creator crossover posts focused on how coding culture is adapting: prompt-driven contributions, warnings about ‘vibe coding’ without fundamentals, and frustration with certain app backends. Excludes OpenClaw benchmark/skills (feature).

Some repos now prefer “issues + agent prompts” over pull requests

lawn (Theo): A repo-level collaboration pattern is showing up where maintainers explicitly pause PR intake and ask contributors to describe problems in Issues and provide “detailed prompts I can quickly copy/paste into my agent of choice,” as shown in the Repo PR disclaimer.

• Collaboration norm shift: Instead of reviewing patches, the maintainer keeps the edit loop local and uses community input as structured intent/spec; the screenshot in Repo PR disclaimer makes the “prompt as contribution” contract explicit.

This is a concrete example of how agent-driven dev is reshaping open-source etiquette: contribution becomes diagnosis + promptability, not just code.

Context switching gets blamed for why people can’t ship—even with AI

Focus and shipping (workflow): A creator reaction to a Cal Newport discussion argues the real productivity killer right now is “TOO MUCH context switching,” even calling out email as a technology that prevents flow because every message is a different kind of task, per the Context switching note.

For AI-assisted building, the implication is less about model quality and more about attention economics: even strong agents don’t help if the human operator is bouncing between threads faster than they can hold intent.

Vibe coding gets a “fundamentals-first” warning from devs

Vibe coding (practice): A cautionary take is circulating that “vibe coding is for experienced software devs” and that beginners shouldn’t lean on agent-driven coding until they “really know how to do the basic stuff,” per the Vibe coding warning.

The subtext for creative coders is that agents can accelerate output while also hiding gaps in debugging, architecture, and deployment literacy—so this reads less like gatekeeping and more like a reliability/safety norm forming around agent-era building.

Convex skepticism: “realtime sync was the only use case”

Convex (backend): A creator reports trying Convex and feeling the only meaningful benefit was realtime sync, while questioning the broader value proposition and pointing out there’s “still not an easy solution… to take any Postgres db and make it convex-y,” according to the Convex critique.

For tool-builders shipping creative products, this is a pointed signal: the migration/onramp story (existing DB to new reactive backend) is still a deciding factor even when agents can write large chunks of glue code.

AI-era role anxiety shows up as “I don’t need you anymore” meme

Org design anxiety (software teams): A Spider‑Man pointing meme frames software engineer, product manager, and designer all telling each other “I don’t need you anymore,” as shared in the Role redundancy meme.

It’s not a product update, but it’s a clean read on what’s bubbling up in creative-tech teams: agents compress interfaces, specs, and execution, and people are renegotiating where the human contribution sits.

📅 Workshops & webinars: film hackathons and AI video product walkthroughs

Event posts were light but actionable: an AI film workflow workshop for students and scheduled webinars about AI video tooling. This stays strictly to dated, attendable items.

Hailuo AI × HKUST AI Film Hackathon runs an “Advanced Workflow” workshop on March 8

HKUST AI Film Hackathon (HKUST) / Hailuo AI (MiniMax): An “Advanced Workflow Workshop” is scheduled for March 8, 2026 (2:00–5:00 PM) at HKUST campus with a live Zoom option, including Meeting ID 910 5084 9482 and passcode 350579, as listed in the Workshop announcement. It’s framed as hands-on training for students to learn generative AI film workflows and “AI-powered storytelling” with named speakers Ka Tam and Jeni To in the Workshop announcement.

The post doesn’t specify which exact tools will be demoed beyond “advanced workflow,” but the positioning is explicitly “bring your stories to life in your first AI film,” per the Workshop announcement.

Pictory sets a March 18 “future of AI video” session with its CEO and CMO

Pictory (Pictory): Pictory announced a March 18 webinar (11 AM PST) about “where AI video is heading,” led by CEO Vikram Chalana and CMO Scott Rockfeld, with registration linked in the Webinar invite via the Webinar registration. The framing is less about a single feature drop and more about positioning AI video as a workflow shift for teams “building in an AI-first world,” per the Webinar invite.

No agenda, product demos, or concrete feature list are specified in the post beyond the talk’s theme, so the operational details appear limited to time + speakers + registration in the Webinar invite.

Pictory’s drag-and-drop scene reorder workflow gets a concrete walkthrough

Pictory (Pictory): Pictory highlighted a workflow for tightening pacing by dragging scenes to new positions directly in the editor, positioning it as a fast way to “refine pacing and structure,” as shown in the Feature walkthrough. The step-by-step is documented in the linked Scene rearrange guide, which focuses on reordering inside the scene list rather than rebuilding a cut.

This is a small feature, but it’s one of the few “NLE-like” operations that meaningfully changes iteration speed when you’re generating lots of short segments—exactly the use case implied by the Feature walkthrough.
