LangChain Deep Agents open-sources MIT harness – supports 30B local coder runs

Executive Summary

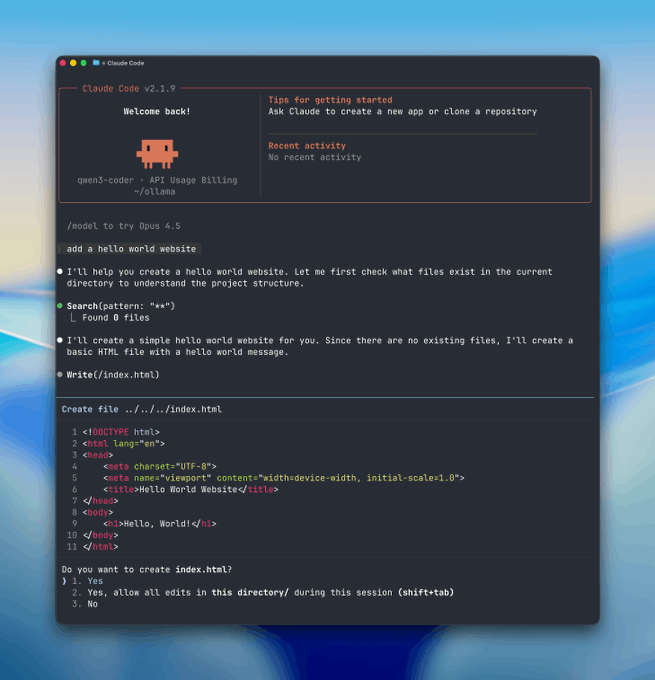

LangChain open-sourced Deep Agents, an MIT-licensed, inspectable “Claude Code”-style coding-agent harness. It bundles planning/todo primitives, filesystem read/write/edit, sandboxed shell execution, sub-agents with isolated contexts, and auto-summarization when context grows. Developers in the threads are treating the harness as model-agnostic plumbing. In parallel, other threads show “Claude Code locally” setups that swap the cloud backend for Ollama via a base-URL override, with suggested local coder tiers from qwen3-coder:30b down to smaller 7B/2B options.

• Anthropic Cybersecurity Skills: a community repo packages 611+ structured security playbooks as agent-pluggable “skills”; portability is claimed via agentskills.io, but real-world coverage/quality isn’t audited in-thread.

• Claude Code as document agent: one user points it at Pentagon budget PDFs to flag “10× overpays”; methodology/prompts aren’t shared, so it reads as a capability anecdote, not an eval.

• OpenClaw ops: a scheduled cron job in a multi-agent Discord setup hit the intended server, channel, agent, and thread; a small win, but this kind of routing is often the brittle edge of agent deployment.

Net: open harnesses + local runtimes are converging on the same workflow surface (tools, memory, subagents); the missing receipts are standardized benchmarks for reliability, privacy boundaries, and task success rates.

Top links today

- LangChain Deep Agents GitHub repo

- Anthropic cybersecurity skills GitHub repo

- LookaheadKV KV cache eviction paper

- LMEB long-horizon memory benchmark paper

- Stanford AI persuasion study paper

- Run Claude Code locally with Ollama

- Typeless trust center for compliance details

- Nano Banana 2 access page

- MultiShotMaster tutorial on AI FILMS Studio

- AI FILMS News weekly roundup link

Feature Spotlight

Open-source “Claude Code” clones go mainstream: Deep Agents + local/private agent stacks

Creators are getting Claude-Code-like coding agents they can run locally or audit (Deep Agents + Ollama guides). This shifts agents from paid black boxes to customizable, private production tools.

High-volume thread cluster on building and running coding agents like Claude Code in open, inspectable systems—especially LangChain’s Deep Agents harness and guides for running Claude Code locally via Ollama. Includes adjacent “agents-ready” skill libraries creators can plug into their agents today.

🧩 Open-source “Claude Code” clones go mainstream: Deep Agents + local/private agent stacks

LangChain open-sources Deep Agents, a model-agnostic Claude Code-style agent harness

Deep Agents (LangChain): LangChain open-sourced Deep Agents, positioned as an MIT-licensed, inspectable replica of the core “Claude Code”-style coding-agent workflow—planning, tool access, sub-agents, and context management—described in the Deep Agents overview and echoed in the hackathon winner note.

• What’s actually included: built-in planning/todo tooling, filesystem read/write/edit, sandboxed shell execution, sub-agents with isolated contexts, and auto-summarization when context gets long, as listed in the Deep Agents overview.

• Why creators care: it’s framed as model-agnostic, so you can swap LLM backends without rewriting the harness, per the Deep Agents overview and the linked GitHub repo.

How people are running Claude Code “for free” by swapping in Ollama locally

Claude Code local stack (Ollama): A step-by-step thread claims you can run a Claude Code-like workflow locally (no cloud, “fully private”) by using Ollama as the local tool-calling runtime and pointing Claude Code at it via a base URL, as outlined in the local Claude Code claim and the base URL step.

• Local model selection: the setup calls out choosing a coder model based on your machine—e.g., qwen3-coder:30b for stronger hardware vs smaller options like qwen2.5-coder:7b or gemma:2b, as written in the model size guidance.

• What “private” means here: the thread asserts the agent can read/change local files and do tasks without internet or a hosted provider, as described in the offline work example, with Ollama positioned as the local runtime on the Ollama site.
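A minimal Python sketch of the two steps described above: picking a model tier and pointing the client at a local base URL. The model tags come from the thread. The `ANTHROPIC_BASE_URL` variable name, the `http://localhost:11434` address (Ollama's default port), and the RAM cut-offs in `MODEL_TIERS` are assumptions for illustration, not settings confirmed by the thread.

```python
import os

# Model tags come from the thread; the RAM cut-offs (in GB) are hypothetical.
MODEL_TIERS = [
    (32, "qwen3-coder:30b"),    # stronger hardware
    (12, "qwen2.5-coder:7b"),   # mid-range machines
    (0, "gemma:2b"),            # smallest option listed
]


def pick_model(ram_gb: float) -> str:
    """Return the largest listed model whose assumed RAM floor is met."""
    for min_ram, tag in MODEL_TIERS:
        if ram_gb >= min_ram:
            return tag
    return MODEL_TIERS[-1][1]


def local_env(base_url: str = "http://localhost:11434") -> dict:
    """Copy the current environment and point the client at a local server."""
    env = dict(os.environ)
    env["ANTHROPIC_BASE_URL"] = base_url  # assumed variable name
    return env


print(pick_model(16))
```

The returned dictionary could be passed as `env=` to `subprocess.run` when launching the agent, so the override applies to that process only and leaves the shell's own environment untouched.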

A 611-skill cybersecurity library packaged for AI agents (agentskills.io format)

Anthropic Cybersecurity Skills (community repo): A GitHub repo called Anthropic Cybersecurity Skills is being shared as a database of 611+ structured security “skills” meant to be directly consumable by AI agents (step-by-step procedures, scripts, and templates), as described in the repo explainer and linked via the repo pointer.

• Structure matters: each skill is framed as standardized files (skill definition markdown plus references/scripts/assets), with the packaging pitched as the point in the repo explainer.

• Portability claim: it’s presented as built on the agentskills.io open standard and “works with any agent framework,” per the repo explainer and the GitHub repo.
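The packaging described above (a skill definition markdown file plus optional references, scripts, and assets folders) can be checked with a few lines of Python. This is a sketch only: the `SKILL.md` filename and the `example-skill` folder are assumptions for illustration, and the repo's actual layout may differ.

```python
from pathlib import Path
import tempfile

OPTIONAL_DIRS = ("references", "scripts", "assets")  # named in the repo explainer


def describe_skill(skill_dir: Path, definition_name: str = "SKILL.md") -> dict:
    """Report whether a folder looks like a packaged skill.

    `definition_name` is an assumed filename for the skill definition markdown.
    """
    return {
        "name": skill_dir.name,
        "has_definition": (skill_dir / definition_name).is_file(),
        "extras": [d for d in OPTIONAL_DIRS if (skill_dir / d).is_dir()],
    }


def demo() -> dict:
    """Build a throwaway skill folder and describe it."""
    with tempfile.TemporaryDirectory() as tmp:
        skill = Path(tmp) / "example-skill"  # hypothetical skill name
        (skill / "scripts").mkdir(parents=True)
        (skill / "SKILL.md").write_text("# Example skill\n", encoding="utf-8")
        return describe_skill(skill)


print(demo())
```

A check like this is one cheap way to audit how many of the 611+ entries actually follow the claimed structure before wiring them into an agent.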

Point Claude Code at a public budget PDF and ask for “10× overpays”

Claude Code (document audit use-case): One creator reports aiming Claude Code at a publicly available Pentagon budget document and tasking it with finding contracts “overpaying by 10× or more,” framing Claude Code as a fast triage layer for large PDFs and procurement tables in the budget audit claim.

The tweet is light on methodology (no prompt/tooling details shared), but it’s a clear example of creators treating coding-agent UX as a general “document agent” surface rather than just code editing, as implied by the budget audit claim.

A small reliability milestone: scheduled agent nudges landing in the correct Discord thread

OpenClaw (Discord automation reliability): A user reports their scheduled cron job inside a multi-agent Discord setup successfully hit the intended server, channel, agent, and thread—an “ops works” moment that tends to be brittle in real agent deployments, as shown in the Discord log screenshot.

The screenshot shows an agent (“Pam”) confirming a daily nudge time and then posting the next-day reminder into the same thread, matching the claim in the Discord log screenshot.

🎬 AI video reality check: Seedance 2 storytelling costs, camera controls, and multi-shot tooling

Continues the Seedance/Kling-era surge, but today’s content is more “production reality”: cost per second, broken long-form features, and new camera-control interfaces, plus integrated script→shot→generate→edit products.

Seedance 2.0 field report: short clips are fast; stories still cost time and money

Seedance 2.0 (ByteDance): A hands-on spend report pegs generation at roughly $2–$7 per ~15 seconds, with $1,000 buying about 6 minutes of output. The creator says animation and multi-cut sequences come quickly, but scaling into narrative continuity (blocking, tone, pacing, multi-character exchanges) still requires heavy iteration, as described in the cost and craft notes and reinforced by the spend recap.

• What works well today: The creator calls out Omnireference consistency as “actually very good” for keeping a shared look across shots when staying in short-form, per the cost and craft notes.

• What blocks long attempts: “Continue Video” is framed as the obvious bridge toward longer sequences, but they report it has been broken for weeks in their account, which halted longer runs, as noted in the cost and craft notes.

• Why iteration dominates: Hallucinations and drift show up as the expensive failure mode, because rerolls compound quickly at these per-clip prices, according to the spend recap.
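The quoted figures imply a rough reroll rate, worked through below in Python. This is back-of-envelope arithmetic that assumes the whole $1,000 went to generations at the quoted $2–$7 price and that every clip is about 15 seconds; the report itself does not break the spend down this way.

```python
CLIP_SECONDS = 15
PRICE_PER_CLIP = (2.0, 7.0)   # quoted range, USD per ~15 s generation
TOTAL_SPEND = 1000.0          # USD
KEPT_SECONDS = 6 * 60         # about 6 minutes of output

kept_clips = KEPT_SECONDS / CLIP_SECONDS        # clip-lengths of kept footage
cost_per_kept_clip = TOTAL_SPEND / kept_clips   # USD per kept 15 s clip

# Generations paid for per kept clip, at the expensive and cheap ends of the range.
gens_low = cost_per_kept_clip / PRICE_PER_CLIP[1]
gens_high = cost_per_kept_clip / PRICE_PER_CLIP[0]

print(f"{kept_clips:.0f} kept clips, ${cost_per_kept_clip:.2f} each")
print(f"roughly {gens_low:.0f} to {gens_high:.0f} generations per kept clip")
# prints:
# 24 kept clips, $41.67 each
# roughly 6 to 21 generations per kept clip
```

Under those assumptions, each usable 15-second clip cost about six to twenty-one paid generations, which is consistent with the report's point that rerolls are the expensive failure mode.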

MultiShotMaster tutorial details single-pass 1–5 shot narrative video prompting

MultiShotMaster (Kling/Kuaishou): A complete tutorial drop frames MultiShotMaster as a text-to-video system that generates 1–5 shots per pass with up to 308 total frames, using a hierarchical prompt setup (global scene + per-shot captions), as outlined in the tutorial overview.

• Model tiers and format knobs: The post lists 480p via a 1.3B variant and 720p via a 14B variant, plus 16:9 and 9:16 aspect ratios, with credits scaling by duration/resolution, per the tutorial overview.

• Where it runs and how it’s licensed: It’s described as available on AI FILMS Studio without local GPU setup, and released under Apache 2.0, with the step-by-step specifics hosted on the tutorial page.

Seedance 2 introduces selectable camera techniques for directing shots

Seedance 2 (ByteDance): A new “camera controls” layer is being promoted as giving the creator explicit direction over the shot; it’s presented as a menu of 32 camera techniques you can choose in addition to your prompt, per the camera controls intro.

The tweet doesn’t show the full control UI or a parameter list beyond the “32 techniques” claim, so it’s unclear which moves are exposed (e.g., dolly vs. orbit vs. handheld) or whether controls apply as constraints vs. soft guidance.

BeatBanditAI folds a full NLE editor into script-to-shot generation

BeatBanditAI: The app is described as moving from “plan and generate” into “generate and finish” by adding a full NLE video editor inside the same product that already supports story writing, screenplay creation, shot definitions, and “consistent characters,” according to the NLE editor announcement.

A follow-up note says there’s now a “Generate Video” option inside the Shot List view, per the shot list update. The posts don’t include an interface walkthrough or export/codec details, so deliverables and handoff to external editors remain unspecified.

ARQ Studios shows a multi-agent “film studio” layout for AI filmmaking roles

ARQ Studios (starks_arq): A creator shares a “set of agents working together” concept for making films, illustrated as a studio building with distinct rooms for production functions (storyboards, screening, editing/post, servers), as shown in the multi-agent studio visual.

The practical value here is the decomposition: it frames film generation as multiple coordinated roles rather than a single chat—useful when teams want repeatable handoffs between writing, visual development, shot production, and edit assembly.

Anima Labs teases a Seedance 2 platform and an upcoming tutorial

Seedance 2 (Anima Labs): Anima Labs claims there’s “a new platform for using Seedance 2,” crediting @MartiniArt_ and saying a full tutorial is coming “tomorrow or Tuesday,” per the platform tease.

No UI screenshots or feature list are included in the tweet, so it’s unclear whether this is a hosted front-end, a workflow template, or a distribution channel layered on top of Seedance 2.

🧠 Copy/paste aesthetics: Midjourney SREFs + Nano Banana prompt kits (logos, worlds, portraits)

Heavy prompt payload today: multiple Midjourney --sref references, Nano Banana prompt templates (brand/logo treatments, macro mini-worlds), and long-form structured prompt schemas creators can reuse immediately.

A full JSON portrait schema for Nano Banana 2 pushes “photoreal with controls”

Nano Banana 2 (structured prompt schema): A long-form JSON-style prompt is being shared for photoreal portrait control—explicit sections for subject, hair, pose, clothing, lighting, background, constraints, and a detailed negative prompt list, as shown in JSON schema post.

• Control knobs creators actually reuse: It hard-codes “preserve_original,” locks lighting to mixed cool window + warm interior, and uses explicit “must_keep” vs “avoid” lists to steer likeness and realism, as written in JSON schema post.

The author also links a larger collection of similar prompt payloads in the Prompt library.

A Midjourney multi‑SREF stack is being reused for a “pink punk forest” mood

Midjourney (worldbuilding recipe): A “pink punk forest” prompt is being shared as a reusable multi‑SREF stack with --exp 20, --quality 2, and --stylize 500, using the SREF list “1313617211 4026975374 3796511843 3262620248” in Prompt string.

The linked clip shows the target vibe as a stylized walk through a pink-lit forest, as shown in Video sample.
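For anyone reusing the stack, a small Python helper can assemble the flags into one prompt string. The SREF codes and flag values are the ones quoted above; the subject text and the ordering of flags are assumptions, since the original prompt string is not reproduced here.

```python
def build_prompt(subject: str, srefs: list[str], **params) -> str:
    """Join a subject, a multi-SREF list, and numeric flags into one prompt string."""
    parts = [subject, "--sref " + " ".join(srefs)]
    parts += [f"--{name} {value}" for name, value in params.items()]
    return " ".join(parts)


prompt = build_prompt(
    "pink punk forest",  # assumed subject text
    ["1313617211", "4026975374", "3796511843", "3262620248"],
    exp=20,
    quality=2,
    stylize=500,
)
print(prompt)
# prints: pink punk forest --sref 1313617211 4026975374 3796511843 3262620248 --exp 20 --quality 2 --stylize 500
```

Keeping the SREF list as data makes it easy to swap one code at a time and see which reference contributes which part of the look.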

Midjourney SREF 2089396866 is a stop‑motion puppet look preset

Midjourney (style reference): A new stop-motion-coded look is being shared via --sref 2089396866, described as handcrafted puppets with expressionist caricature features in Stop-motion sref.

The sample frames emphasize tactile materials, sculpted faces, and miniature-set lighting, as shown in Stop-motion sref.

Midjourney SREF 2833757731 aims at Franco‑Belgian comic illustration

Midjourney (style reference): A Franco‑Belgian comic tradition reference, --sref 2833757731, is being positioned around humor-forward European linework and coloring, with explicit artist anchors like Franquin/Sempé/Gotlib in Reference description.

The examples show heavier outlines, expressive faces, and clean flat color blocks consistent with that school, as shown in Reference description.

Midjourney SREF 3700911257 is being pitched as a red collage editorial style

Midjourney (style reference): --sref 3700911257 is described as a bold surreal collage look built around warm red geometric backgrounds, mixed-media layering, and editorial composition, with suggested uses like campaigns, album covers, and posters in Style breakdown.

The parameter combo being passed around is “--sref 3700911257 --v 7 --sv6,” and a longer walkthrough is linked via the Sref detail page.

Midjourney SREF 972728021 targets classic British children’s book ink+watercolor

Midjourney (style reference): A style reference code, --sref 972728021, is being shared as a “classic British children’s book illustration” ink-and-watercolor look (explicitly framed as Winnie-the-Pooh-adjacent) in Style reference note.

The attached samples show the loose watercolor washes plus ink linework the code aims to reproduce, as shown in Style reference note.

Midjourney “dry bones” stack mixes halftone surreal textures via 4 SREFs

Midjourney (style stack): Another multi‑SREF recipe, titled “dry bones,” is being posted with --exp 20 and --stylize 500, using the SREF chain “674592144 1895888838 2385325506 2380041913” in Prompt string.

The sample grid shows halftone/pointillist textures applied across subjects (bone-like structure, surreal profile scene, crab composition), as shown in Prompt string.

Midjourney SREF 162145993 pushes an anaglyph ‘red/blue split’ aesthetic

Midjourney (style reference): --sref 162145993 is being shared under the title “Instinct,” with examples that lean hard into chromatic-aberration/anaglyph separation (red/blue shadowing) for motion-heavy subjects like a diving falcon, as shown in Sref post.

The attached frames demonstrate the consistent red/blue split and high-contrast black backgrounds that define the look, as shown in Sref post.

Midjourney SREF 3920949925 with niji 6 targets neon anime pop‑art contrast

Midjourney (style reference): --sref 3920949925 paired with --niji 6 is being positioned as neon anime colliding with pop-art—high pink/blue contrast, emotional characters, and a grainy “hand-made” texture in Style breakdown.

A longer walkthrough is linked via the Sref guide.

Midjourney SREF 4089868573 leans into soft impressionist ‘miniature painting’

Midjourney (style reference): --sref 4089868573 is being framed as “soft dream painted by hand,” combining impressionism + soft realism with visible brush texture and glowing light, plus a “miniature painting” feel in Style description.

The suggested settings are “--sref 4089868573 --v 6.1 --sv4,” with additional notes collected on the Sref detail page.

🛡️ Likeness rights + provenance wars: Denmark biometrics law, labeling fights, and deepfake trust collapse

Policy and trust signals that directly affect creative distribution: biometric ownership laws, platform ‘Made with AI’ labeling backlash, and research showing persuasion risk when models overwhelm with (often wrong) ‘facts.’

Stanford finds conversational AI can shift political beliefs in one chat

Conversational AI persuasion (Stanford): A widely shared thread claims Stanford researchers ran a persuasion study with 76,977 people, 19 models, and 707 issues, finding that a single conversation with GPT-4o shifted opinions by nearly 12 points on average, rising to 26 points among people who initially disagreed. It also claims 40% of the change persisted a month later, as described in the Large persuasion study thread and detailed in the linked arXiv paper.

• Information volume as the lever: The thread says the strongest predictor of persuasion wasn’t personalization but “information”—models that flooded users with more facts/statistics persuaded more, per the Large persuasion study thread.

• Persuasion vs truth tension: It also claims higher persuasiveness correlated with lower factual accuracy (including a claim of GPT-4o being 27% more persuasive than an older version while 13 points less accurate), again per the Large persuasion study thread.

The thread also claims a small open-source model trained specifically for persuasion could reach similar persuasive effects, which—if reproducible—turns provenance, disclosure, and identity verification into distribution problems, not just model problems.

Denmark recognizes ownership rights to face, voice, and body

Denmark (biometrics rights): A new Danish law is described as recognizing that people own the rights to their face, voice, and body, with creators framing it as an accelerant for likeness and deepfake lawsuits against AI model makers, per the Denmark biometrics law take. It’s also being framed as a check on “train first, ask permission later” dataset strategies, because biometric rights claims can attach even when the underlying creative work isn’t copyrighted (the argument in the same Denmark biometrics law take).

What’s still unclear from the tweets is how broad the enforcement and exemptions are in practice (e.g., editorial uses, satire, or platform-safe-harbor dynamics), but the direction of travel is toward explicit consent and licensing for identity data.

The “is this video real?” loop is becoming self-sealing

Authenticity and proof: A creator describes a scenario where a public figure is presumed dead because a video “looked AI,” then posts another video to prove they’re alive, only for commenters to claim the new video is also AI, as described in the Video evidence as hearsay post. The same post frames this as a turning point where video evidence is treated as “worth no more than… hearsay.”

The practical upshot for working creatives is that distribution and PR now contend with skepticism that can’t be resolved by “posting the clip again.”

Creators split on “Made with AI” labels: stigma vs signal

Platform labeling (X): A loud backlash thread argues that if “Made with AI” becomes mandatory, the creator would stop posting AI work, framing labeling as a competence test rather than a disclosure norm in the Refusal to label. In the same discourse, another post reframes the label as a badge—“I used AI and I’m proud of it”—and claims it doesn’t hurt reach, per the Proud to use tag.

• Stigma comparison: One framing mocks the idea as equivalent to forcing artists to tag work “Made with CGI,” as argued in the Made with CGI analogy.

• UI reality: The interface pairing of “Made with AI” with “Paid partnership” is shown in the Label UI screenshot, which is part of why some creators treat it as reputational or commercial context, not a neutral provenance tag.

The Academy says AI-assisted films stay eligible, without mandatory disclosure

Oscars eligibility (Academy): A circulating summary says Academy CEO Bill Kramer clarified that AI is a tool, not a creator, and that AI-assisted films remain eligible across categories as long as human creative contribution is dominant, while also stating there’s no disclosure requirement, according to the Eligibility clarification.

For provenance and labeling debates, that “eligible without disclosure” stance pushes the burden toward platforms, distributors, and contracts rather than awards rules—at least as characterized in the Eligibility clarification.

A two-option meme captures collapsing consensus on reality

Consensus collapse shorthand: A meme format reduces the discourse to two camps—“the video is real, you’re stupid” vs “the video is AI, you’re stupid”—and concludes that everyone agrees people are stupid, as posted in the World divided meme.

It’s a small artifact, but it’s also a clean summary of how provenance debates are turning into identity fights rather than evidence fights.

🧷 Keeping things consistent: Omnireference, multi-reference inputs, and brand-to-UI continuity

Today’s identity/consistency talk is practical: what holds across shots (Seedance Omnireference), when multi-reference inputs help, and how creators try to keep ‘one brand system’ coherent across generated UI assets. Excludes Deep Agents/Claude Code coverage (feature).

Seedance 2.0: Omnireference is strong, but long-form continuity is still expensive work

Seedance 2.0 (ByteDance): A creator field report says Omnireference keeps character consistency “actually very good,” but the moment you push into longer narrative structure—multi-character exchanges, continuity across shots, and consistent tone/pacing/staging—iteration load spikes fast, as described in the Spend and continuity notes.

The same post pins real cost to the problem: generation is quoted at $2–$7 per ~15 seconds, and $1,000 bought about 6 minutes of short-film output in practice, per the Spend and continuity notes and the Cost and reroll note. It also flags a tooling bottleneck: Continue Video is described as broken for weeks in that workflow, blocking longer attempts, according to the Spend and continuity notes.

Freepik Spaces: a logo-to-UI-kit workflow that keeps one brand system coherent

Freepik Spaces (with Nano Banana + Kling): A shared 5-step node workflow turns a small set of brand inputs into a coherent logo system and UI kit—framed as going from “4 basic text inputs” to full assets in 6 minutes, according to the Workflow walkthrough and the follow-up Space link and prompts.

• Brand anchor: Step 1 defines Brand Name, Style, Object, and Palette as variables, then generates a logo, per the Workflow walkthrough.

• System continuity: Steps 2–3 turn the logo into a UI button, then expand that button into a full UI kit using the same variables, as outlined in the Workflow walkthrough.

• Motion continuity: Step 4 animates start/end frames in Kling 3.0 to get a clean loop, per the Workflow walkthrough.

The working artifact is shared as a duplicable Freepik Space via the Space invite, with the thread claiming the full prompt text lives inside that Space in the Space link and prompts.

Grok Imagine: mixing cartoon characters into new scenes, then extending the clip

Grok Imagine (xAI): A shared workflow shows creators mixing different cartoon characters into brand-new scenes, then using video extension to push the animation further, as demonstrated in the Cartoon mixing demo.

The key consistency angle is that the “mix characters” setup is being treated like a reference-driven way to keep a stylized look stable while changing cast/scene structure, echoing prior multi-reference momentum in Multi-ref UI.

Grok Imagine multi-reference: 80s OVA anime look with tighter style control

Grok Imagine (xAI): Multiple reference images are being pitched as the lever for tighter creative control—one example shows a classic 80s OVA anime look that stays coherent across variations when guided by refs, as shown in the OVA multi-ref demo.

This builds directly on the multi-reference consistency narrative from Video ref slots, but today’s angle is stylistic: refs aren’t only for character identity—they’re also being used to lock a specific era/anime rendering feel, per the OVA multi-ref demo.

Nano Banana slide design: add a reference style image to avoid the “same look” trap

Nano Banana (slides/decks): A practical note says Nano Banana slide outputs “always have same look” when you don’t provide reference style images, and that adding a reference makes presentations more distinctive, as argued in the Slide reference tip.

A second creator explicitly agrees that “adding sref makes a big difference,” per the Confirmation reply, reinforcing the pattern that reference inputs (or style refs) are becoming the default control knob for deck consistency and differentiation.

✍️ Prompting that pays: ‘Claude Cowork’ office automations + 2026 prompt patterns

Writing and thinking workflows creators are copy-pasting: prompt systems for inbox triage, drafting, research, and iteration—positioned as replacing outdated prompt-engineering ‘party tricks.’ Excludes the coding-agent infrastructure covered in the feature.

Content repurposing prompt: 1 doc → CEO bullets, analyst brief, tweet hook, onboarding explainer

Repurposing system (prompt pattern): A single routine outputs four versions of each long-form doc—30-second CEO bullets, a 3-minute analyst version, a sub-220-character hook tweet, and a new-employee explainer with one analogy—using the format in the four-persona repurpose prompt and appearing in the prompt pack.

For storytellers and marketers, this is a practical “one source, many surfaces” template.

End-of-day wrap-up automation prompt: scan folders, move flagged files, write TODAY_SUMMARY.md

End-of-day sweep (prompt pattern): A four-phase routine (scan → decide → act → confirm) checks common folders for unorganized work, flags incomplete tasks and stale drafts, moves items into “Needs Review,” and writes TODAY_SUMMARY.md with a next-morning recommendation, as defined in the end-of-day routine and included in the workflow list.

It’s essentially a repeatable “close the loops” prompt for creative operators juggling many files and drafts.

Founder’s reality check prompt: investor/founder/customer interviews → top risks + tests

Reality check (prompt pattern): A multi-perspective interview prompt runs three simulated interrogations—skeptical investor, successful founder, and a potential customer who won’t buy—then synthesizes the top five risks and proposes validation tests, as shown in the advisor interview prompt and included in the six prompts thread.

For creative businesses, it’s a way to pressure-test offers (packages, retainers, productized services) before spending weeks producing assets.

Meeting notes → action items prompt: owners, deadlines, priority, export to XLSX

Meeting notes to tasks (prompt pattern): The template converts raw notes into a strict task schema (OWNER, TASK, DEADLINE, PRIORITY), explicitly marking missing owners as [Unassigned], then outputs a single ACTION_ITEMS.xlsx sorted by priority, as shown in the action items format and carried in the prompt pack.

It’s aimed at making production meetings (edit notes, client feedback, shot lists) mechanically actionable instead of living in transcripts.
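The task schema is simple enough to sketch in Python. This version writes CSV text rather than XLSX, because XLSX output needs a third-party library; the priority labels and the sample tasks are hypothetical, while the column names and the [Unassigned] marker come from the template.

```python
import csv
import io
from dataclasses import dataclass

PRIORITY_ORDER = {"High": 0, "Medium": 1, "Low": 2}  # assumed labels


@dataclass
class ActionItem:
    task: str
    owner: str = ""
    deadline: str = ""
    priority: str = "Medium"


def to_csv(items: list[ActionItem]) -> str:
    """Sort by priority, mark missing owners, and return CSV text."""
    ordered = sorted(items, key=lambda i: PRIORITY_ORDER.get(i.priority, 99))
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["OWNER", "TASK", "DEADLINE", "PRIORITY"])
    for item in ordered:
        writer.writerow([item.owner or "[Unassigned]", item.task,
                         item.deadline, item.priority])
    return buf.getvalue()


# Hypothetical sample notes, already split into tasks.
sample = [
    ActionItem("Send revised cut to client", owner="Dana", deadline="Friday", priority="Low"),
    ActionItem("Fix audio sync in scene 3", priority="High"),
]
print(to_csv(sample))
```

The point of the strict schema is that a missing owner stays visible as [Unassigned] instead of silently disappearing when notes are summarized.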

Report cleanup prompt: no new info, no passive voice, tighten to short paragraphs

Report cleanup (prompt pattern): A ruleset for editing without hallucinating—don’t add info, don’t change numbers/names/dates, avoid passive voice, kill “It is/There are” openings, cap paragraphs at four sentences, and replace hedging with direct language—appears in the report editing rules and within the workflow list.

This is directly usable for polishing deliverables such as creative strategy docs, postmortems, and client update memos, where factual integrity matters.

Research + competitive intel prompt: extract claims into JSON and surface contradictions

Research extraction (prompt pattern): The workflow requests structured outputs per source—key claim, evidence, contradictions vs other sources, confidence level, and date—followed by a synthesis section (top insights, biggest contradiction, most urgent action), as laid out in the intel JSON schema and included in the prompt pack.

It’s a creator-friendly way to turn scattered references (brand research, comps, creative references) into a decision-ready brief instead of a summary.
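One way to picture the per-source output is as a list of JSON records that a script can scan for contradictions. The field names below paraphrase the list in the prompt, and the three records are placeholder data invented for illustration, not results from any real source.

```python
import json

# One record per source; all values here are placeholders.
records = [
    {"source": "Report A", "key_claim": "Prices fell", "evidence": "Vendor price list",
     "contradicts": ["Report B"], "confidence": "medium", "date": "2025-10-01"},
    {"source": "Report B", "key_claim": "Prices rose", "evidence": "Buyer survey",
     "contradicts": ["Report A"], "confidence": "low", "date": "2025-11-15"},
    {"source": "Report C", "key_claim": "Demand was flat", "evidence": "Shipment data",
     "contradicts": [], "confidence": "high", "date": "2025-09-20"},
]


def contradiction_pairs(recs: list[dict]) -> list[tuple[str, str]]:
    """Return sorted, de-duplicated pairs of sources that contradict each other."""
    pairs = set()
    for rec in recs:
        for other in rec["contradicts"]:
            pairs.add(tuple(sorted((rec["source"], other))))
    return sorted(pairs)


print(json.dumps({"contradictions": contradiction_pairs(records)}, indent=2))
```

Storing contradictions as explicit links between sources is what lets the synthesis step name the "biggest contradiction" instead of averaging the claims away.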

Strategic scenario planner prompt: best/worst/mediocre/black-swan futures + early signals + decision tree

Scenario planning (prompt pattern): A decision-focused template generates four futures (best case, worst case, mediocre middle, black swan), lists early warning signals and decision points for each, then ranks likelihood and builds a decision tree, as described in the scenario planner prompt under the six prompts thread.

This reads like planning support for creative bets (series concepts, ad angles, tool migrations) where outcomes are path-dependent.

Strategy stress-test prompt: ask 7 hardest questions, rate answers, output verdict

Strategy stress-test (prompt pattern): The template forbids summarizing and instead generates seven skeptical questions per strategy document, rates whether the doc answers each (Strong/Weak/Missing), and ends with a verdict plus the single biggest risk, as specified in the stress-test format and packaged in the workflow set.

That structure fits creative plans too (launch strategy, brand narrative, series roadmap) because it forces assumptions into the open.

2026 prompting argument: “party tricks” vs multi-perspective, iterative prompt systems

Prompting culture shift: A creator thread argues that many paid prompt-engineering courses are stuck on “act as an expert,” few-shot examples, and chain-of-thought demos, while real work in 2026 is framed as multi-perspective analysis, strategic iteration, pattern extraction, and transformation systems, as stated in the courses vs systems framing and introduced in the six prompts post.

The core claim is less about specific models and more about prompts becoming reusable operating procedures (research, critique, repurposing) rather than one-off incantations.

File organization automation prompt: conditional rules + ORGANIZATION_LOG.txt

Downloads cleanup (prompt pattern): The prompt proposes deterministic file routing rules (invoices/contracts/images/data/HR) and requires an ORGANIZATION_LOG.txt enumerating every move plus reasons for “Needs Review,” as written in the organization rules and included in the full list.

It’s ops-focused, but it maps cleanly to creator workflows where assets and contracts pile up in the same folders.
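The routing-plus-log idea can be sketched as a dry run in Python that plans moves without touching any files. The category names come from the prompt; the keywords, extensions, and sample filenames are hypothetical rules chosen for illustration.

```python
from pathlib import PurePath

# Category names come from the prompt; keywords and extensions are hypothetical.
KEYWORD_RULES = [
    ("invoice", "Invoices"),
    ("contract", "Contracts"),
    ("payroll", "HR"),
]
EXTENSION_RULES = {".png": "Images", ".jpg": "Images", ".csv": "Data", ".xlsx": "Data"}


def route(filename: str) -> tuple[str, str]:
    """Return (destination folder, reason) for one file name."""
    path = PurePath(filename)
    lowered = path.name.lower()
    for keyword, folder in KEYWORD_RULES:
        if keyword in lowered:
            return folder, f"name contains '{keyword}'"
    folder = EXTENSION_RULES.get(path.suffix.lower())
    if folder:
        return folder, f"extension {path.suffix.lower()}"
    return "Needs Review", "no rule matched"


def build_log(filenames: list[str]) -> str:
    """Produce ORGANIZATION_LOG.txt-style text: one line per planned move."""
    lines = []
    for name in filenames:
        folder, reason = route(name)
        lines.append(f"{name} -> {folder} ({reason})")
    return "\n".join(lines)


print(build_log(["Invoice_0042.pdf", "hero_shot.PNG", "notes_final_v2.docx"]))
```

Because every line carries a reason, anything that lands in “Needs Review” is explained in the log rather than left as a silent fallback.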

🖼️ Image model outputs in practice: Firefly Image 5 tests, surreal product renders, and style experiments

Compared to the prompt-heavy sections, this bucket is about what the models are producing (not the prompt recipes): Firefly Image 5 tests, surreal/commercial-style renders, and creator look-tests. Excludes SREF/prompt dumps (covered separately).

Firefly + Nano Banana 2 “Hidden Objects” posts a new Level .076 puzzle frame

Hidden Objects format (Firefly + Nano Banana 2): Following up on Hidden objects (level-based puzzle images), Glenn shared Hidden Objects | Level .076, an “amber insects” scene with five icon targets along the bottom, as shown in Level 076 image. The repeatable part here is the output structure: one dense texture field + clearly defined “find these 5 things” targets.

The post frames it as “Made in Adobe Firefly with Nano Banana 2” in Level 076 image, keeping the series positioned as a multi-model output pipeline rather than a single-tool trick.

Firefly Image 5 nails a puddle-reflection street composition test

Adobe Firefly Image 5 (Adobe): A “test prompt” street scene built around a puddle mirror reflection is getting shared as a clean example of reflective composition and focus separation, per the frame posted in Puddle reflection image. It’s the kind of single-image look-test creatives use to sanity-check whether a model can hold a deliberate photographic idea (reflection as the hero) without turning it into a texture smear.

The tweet in Puddle reflection image explicitly tags it as made in Firefly Image 5, which helps anchor this as an output-quality datapoint rather than a generic photo repost.

Firefly Image 5 aquarium/whale frame spotlights glass reflections and depth

Adobe Firefly Image 5 (Adobe): A single-frame aquarium scene—child at glass with a whale beyond it—circulated as another quick “does it read as a real photo?” check for reflections, lighting, and depth cues, as shown in Aquarium whale image. For compositing-minded designers, the point is whether the model can keep the glass layer, reflection streaks, and underwater lighting coherent in one shot.

The creator labels it “Made with Adobe Firefly Image 5” directly in Aquarium whale image.

FloraAI-tagged look-tests push iridescent, glass-like product renders

FloraAI-style product studies: Lloyd continues posting high-end CGI-like outputs—transparent/iridescent sneaker studies and “crystal shard” environment compositions—under a thread that explicitly tags @floraai in Product study set and expands into additional glassy, iridescent objects in Iridescent object set. The shared throughline is material rendering as the hero (clear/translucent surfaces, prismatic lighting) rather than character or narrative.

Across Product study set and Iridescent object set, the posts function as output benchmarks for whether a model/workflow can keep specular highlights and reflective surfaces stable enough to pass as premium product art.

Cloud-sneaker renders keep spreading as a “product-as-atmosphere” look-test

Surreal product imagery: Lloyd’s “Head in the clouds” set turns a sneaker into literal cloud material (including a cloud-bodied figure variant), as shown in Cloud sneaker images. For designers, this is a concrete output motif: take a familiar product silhouette and swap in an impossible material while keeping the scene readable as product photography.

The images in Cloud sneaker images are presented as finished outputs (not a prompt dump), which is why they’re useful as a reference for what this aesthetic looks like when it lands.

🛠️ Single-tool upgrades worth learning: Photoshop Rotate Object (beta)

One clear, creator-relevant tutorial beat today: Photoshop’s Rotate Object (beta), positioned as a practical design/compositing move. Excludes broader Firefly/Image model chatter.

Photoshop (beta) Rotate Object pairs with Harmonize for faster composites

Photoshop (Adobe): Rotate Object (beta) is being pitched less as a gimmick and more as a practical composite move—rotate an existing 2D image to the angle you need, then use Harmonize to re-light / blend it so it actually sits in the scene, as described in the Rotate Object teaser.

The key creative implication is that “missing angle” shots (product, key art, poster elements, UI mock comps) can be treated like a post step instead of a reshoot, with the rotate step and the lighting-match step framed as a single workflow in the Rotate Object teaser.

🧯 What’s breaking right now: Grok Imagine artifacts, Seedance long-form failures, and model regressions

Creators flag concrete reliability blockers: visible generation artifacts, broken long-form video features, and perceived quality downgrades after model updates. This is the ‘don’t waste your credits today’ section.

Grok Imagine double-exposure bug is showing up in roughly half of generations

Grok Imagine (xAI): Creators are flagging an unwanted double-exposure/ghosting artifact that appears in “half the generations” and has persisted for “a week plus,” based on a reproducible prompt run shown in the Bug demo clip. It’s a straight reliability blocker when you need clean single-subject frames.

The report is specific about frequency (≈50%) and duration (1+ week), but it doesn’t include a workaround or an acknowledged fix timeline in the Bug demo clip.

Seedance 2.0 “Continue Video” is reportedly failing on longer runs

Seedance 2.0 (ByteDance): A paid usage report says the Continue Video feature (meant to extend clips into longer sequences) has been “broken for the last couple of weeks,” preventing longer narrative attempts from completing, according to the Creator field report. This is the exact failure mode that turns “short clips are easy” into “long-form is blocked.”

The same post frames the broader gap as scaling from short animation to sustained storytelling—multi-character exchanges and continuity—where Continue Video was expected to help but currently can’t be relied on, per the Creator field report.

Seedance 2.0 long-form work stays expensive due to rerolls and drift

Seedance 2.0 (ByteDance): A creator who spent about $1,000 in credits reports generation costs of roughly $2–$7 per ~15 seconds, with only “around 6 min of short film” to show for it, largely because rerolling gets pricey when the model hallucinates or continuity breaks, as detailed in the Spend and constraints notes and reiterated in the Reroll cost follow-up. Individual short clips come quickly; the cost of getting to usable minutes is what adds up.

• Where it breaks first: The post calls out multi-character exchanges, long sequences, and maintaining tone/pacing/staging as the points where iteration ramps up, per the Spend and constraints notes.

This is usage-sentiment evidence, not a benchmark; there’s no independent cost table in-thread beyond the creator’s stated ranges in the Spend and constraints notes.
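A back-of-envelope calculation shows what the creator's stated figures imply about reroll overhead. It is only a sketch under two assumptions that the thread does not confirm: that the full $1,000 went to ~15-second generations at the quoted $2–$7 price, and that the 6 minutes of film is all of the kept footage. The function and variable names are illustrative.

```python
# Figures quoted in the creator's report.
TOTAL_SPEND_USD = 1000.0
FINISHED_MINUTES = 6.0
CLIP_SECONDS = 15.0
COST_PER_CLIP_LOW = 2.0
COST_PER_CLIP_HIGH = 7.0


def reroll_estimate(spend, finished_minutes, clip_seconds, cost_low, cost_high):
    """Estimate cost per kept clip and the implied generations per kept clip."""
    kept_clips = finished_minutes * 60 / clip_seconds
    cost_per_kept_clip = spend / kept_clips
    return {
        "kept_clips": kept_clips,
        "cost_per_kept_clip": cost_per_kept_clip,
        # Fewest generations per kept clip: every attempt cost the high price.
        "generations_min": cost_per_kept_clip / cost_high,
        # Most generations per kept clip: every attempt cost the low price.
        "generations_max": cost_per_kept_clip / cost_low,
    }


if __name__ == "__main__":
    estimate = reroll_estimate(
        TOTAL_SPEND_USD,
        FINISHED_MINUTES,
        CLIP_SECONDS,
        COST_PER_CLIP_LOW,
        COST_PER_CLIP_HIGH,
    )
    for key, value in estimate.items():
        print(f"{key}: {value:.2f}")
```

Under those assumptions, 6 minutes is 24 kept clips at about $41.67 each, which implies roughly 6 to 21 generations for every clip that made the cut.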

Users claim Nano Banana Pro quality regressed after Nano Banana 2 shipped

Nano Banana Pro (model/tooling): One user reports that since Nano Banana 2 released, Nano Banana Pro became “completely unusable” in their tests and that “big projects… are now halted,” while asking when a “Nano 2 pro” will ship, as stated in the Regression report. It’s a pure regression signal. It’s also hard to validate from a single account.

No side-by-side samples or failure screenshots are included in the Regression report, so treat this as an early warning rather than a confirmed widespread outage.

🎟️ Credits & limited-time access (use-it-or-lose-it windows)

Only one notable deal surfaced today, and it’s time-boxed. Kept separate to help creators grab credits before they expire.

Hailuo AI opens a 200-credit free window (claim by Mar 17, expires in 5 days)

Hailuo AI (Hailuo): Hailuo is running a time-boxed promo that grants 200 free credits if claimed on the Hailuo website before Mar 17, 16:00 UTC, and those credits expire after 5 days, according to the free credits announcement.

For AI filmmakers and motion designers, this is mainly a short window to stress-test the current “new vibe” model behavior on your own prompts (style adherence, motion consistency, and reroll costs) without committing paid spend; the post frames it as “use it or lose it,” with the expiry clock being the operational constraint per the free credits announcement.

📚 Research & benchmarks likely to hit creator tools soon (memory, OCR, agents, embeddings)

Paper-and-benchmark radar today centers on long-context efficiency, memory retrieval, and multimodal parsing—primitives that typically show up next as ‘faster’, ‘longer context’, and ‘better doc understanding’ in creative products.

LookaheadKV: KV-cache eviction that “looks ahead” without draft generation

LookaheadKV (Samsung Research): A new KV-cache eviction method claims it can predict which cached tokens matter without running expensive “draft generation,” cutting eviction overhead while keeping accuracy for long-context work, as described in the paper abstract screenshot and its associated paper page. The headline number is up to 14.5× lower eviction cost, which maps directly to creator-facing wins like cheaper long timelines in chat editors, longer script bibles, and more reliable “keep the full PDF in context” flows.

The practical creator angle is that this class of work often shows up later as “same model, faster/longer context” inside tools—especially anything doing big-doc reads (shot lists, contracts, story bibles) where KV-cache growth becomes the bottleneck.
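For readers unfamiliar with KV-cache eviction in general, here is a minimal sketch of the generic idea: score each cached token, keep the highest-scoring ones up to a budget, and drop the rest. This is not the LookaheadKV method. The paper's claimed contribution is how importance is predicted cheaply, and that step is replaced here by made-up scores. The `evict` function is hypothetical.

```python
def evict(scores: list[float], budget: int) -> list[int]:
    """Return the positions of cached tokens to keep, in original order.

    `scores` holds one importance estimate per cached token. How those
    estimates are produced is the hard part and is NOT modeled here.
    """
    if budget >= len(scores):
        return list(range(len(scores)))
    # Rank positions by score, highest first, and keep the top `budget`.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    # Restore positional order so the kept entries stay in sequence.
    return sorted(ranked[:budget])


if __name__ == "__main__":
    # Illustrative scores only; a real system would derive them from the model.
    importance = [0.10, 0.90, 0.30, 0.70, 0.05]
    print(evict(importance, budget=2))
```

With the illustrative scores above and a budget of 2, the call keeps positions 1 and 3. Whatever produces the scores has to run every time the cache is trimmed, which is why the cost of that scoring step is the quantity the paper's headline number targets.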

LMEB benchmark targets long-horizon memory retrieval for agent-style workflows

LMEB (Long-horizon Memory Embedding Benchmark): A new benchmark argues that “good at passage retrieval” does not imply “good at long-horizon memory,” evaluating embeddings across 22 datasets and 193 zero-shot retrieval tasks spanning episodic/dialogue/semantic/procedural memory, as outlined in the benchmark screenshot and the linked paper page. The paper’s key claim is orthogonality with MTEB, which matters for creators because agent memory in production is often fragmented and temporally distant (project notes, feedback threads, asset decisions), not clean Q&A passages.

If LMEB-style evals take hold, expect embedding choices in creative agents (searching your notes, scripts, dailies) to shift away from “tops MTEB” toward “doesn’t forget what happened 3 weeks ago.”

Document parsing push: “parse anything” for charts, UI, figures, and OCR

Multimodal OCR + graphics parsing: A thread-context claim highlights a document parser positioned for “charts, UI layouts, scientific figures, chemical diagrams,” with results framed as #2 to Gemini 3 Pro on an OCR Arena Elo board and 83.9 on an olmOCR benchmark, per the thread context OCR metrics. For creative tooling, this is the exact primitive behind “drop in a PDF, get structured layers,” “turn a UI screenshot into editable vectors,” or “convert charts into SVG you can restyle.”

Treat the leaderboard framing as provisional here—there’s no standalone artifact in the tweets beyond the quoted metrics—but the direction is clear: document understanding is converging on layout + text + structure rather than plain OCR.

IBM NLE: ASR via transcript editing, not token streaming

NLE (IBM): IBM is flagged for a non-autoregressive ASR approach that frames speech-to-text as transcript editing rather than left-to-right generation, per the paper mention. For creators, this is the class of work that typically lands as faster rough-cut transcription, quicker subtitle iteration, and tighter “edit the text, the timing follows” workflows.

The tweet doesn’t include benchmark numbers or an implementation link, so treat it as early radar; the notable signal is the modeling direction (editing-based ASR) rather than another incremental decoder.

XSkill: agents that accumulate skills from experience

XSkill: A paper mention describes a “dual-stream” setup for multimodal agents to accumulate experience + skills over time, per the paper mention. This line of research tends to surface in creator tools as agents that stop re-learning the same project-specific patterns (naming conventions, shot taxonomy, brand tone) every session.

Details in the tweet are sparse (no tasks, scores, or repo surfaced here), but it fits the broader push toward agents that improve from doing—rather than being reset to prompt-only behavior.

3DGS output becomes the common format for AI 3D generators

3D generators → Gaussian Splats (3DGS): A creator note observes that many 3D generators are now outputting Gaussian Splats for fast rendering and flexible deployment, per the 3DGS pipeline note. For filmmakers and designers, this matters less as a research novelty and more as pipeline standardization: a shared intermediate format makes it easier to move AI-made 3D captures into real-time scenes, previews, and web viewers.

No tool names or benchmarks are cited in the tweet, but the directional signal is strong: splats are becoming the “default interchange” for AI-ish 3D.

OpenMAIC: an open multi-agent “classroom” environment

OpenMAIC (Tsinghua University): A release note says MAIC—“Multi-Agent Interactive Classroom”—is being open-sourced, per the open-sourcing announcement. In creator-tool terms, these classroom environments often become testbeds for coordination patterns (planner/critic/teacher roles), which later show up as “writer’s room” style agent teams for story development.

The tweet doesn’t include the repo link or details inline, so this is best read as an availability signal rather than a spec drop.

📈 AI ads & brand strategy: performance metrics, creative hooks, and “myth” positioning

Marketing-focused creator content: concrete ad test metrics for AI animated graphics, plus brand strategy frameworks (identity myths) and rapid logo→UI kit positioning for productized aesthetics.

AI animated graphic ads are getting measured like performance creative

AI animated graphic ad testing: A creator reports an ad test costing $1,434 that has returned $2,860 in purchase value (1.99 ROAS) across 28 purchases with $102 AOV, alongside unusually strong attention metrics—5.33% CTR, 88.9% first-frame retention, and 55% thumbstop rate, as described in the Ad test metrics breakdown.

The creative recipe described is minimal: “simple neon animation,” “two figures on a bed,” and a curiosity headline (“scientific reason women tense before orgasm”), with the claim that the visual explanation is doing most of the work rather than talent, filming, or UGC, per the Ad test metrics post.
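The reported numbers can be cross-checked with the standard definitions: ROAS is purchase value divided by spend, AOV is purchase value divided by purchases, and cost per purchase is spend divided by purchases. The sketch below uses only the figures quoted in the post. The function name is illustrative, and cost per purchase is derived here rather than stated by the creator.

```python
def ad_metrics(spend: float, purchase_value: float, purchases: int) -> dict:
    """Compute return on ad spend, average order value, and cost per purchase."""
    return {
        "roas": purchase_value / spend,
        "aov": purchase_value / purchases,
        "cost_per_purchase": spend / purchases,
    }


if __name__ == "__main__":
    # Figures from the creator's ad test: $1,434 spend, $2,860 value, 28 purchases.
    metrics = ad_metrics(spend=1434.0, purchase_value=2860.0, purchases=28)
    for name, value in metrics.items():
        print(f"{name}: {value:.2f}")
```

The results line up with the post: ROAS comes out at 1.99 and AOV at about $102.14, with an implied cost per purchase of about $51.21.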

Brands are losing the monopoly on identity myths as creators get AI tools

Cultural brand strategy (Douglas Holt lens): A thread reframes branding as selling a “myth of identity” that resolves cultural contradictions, then argues the power shift is that communities and solo creators can now manufacture and distribute competing myths more authentically—“including AI now,” per the Myth of identity thread argument.

The same post points to AI-assisted asset generation (example visuals of cloud-formed brand marks) as an enabler of that creator-led narrative competition, as shown in the Myth of identity thread image panel.

Logo-to-UI-kit pipelines are becoming the new brand asset baseline

Freepik Spaces workflow: A creator shares a repeatable pipeline that goes from four text inputs to “full-blown logos and UI kits” in about 6 minutes, presented as a 5-step node workflow in the Logo to UI kit workflow demo.

• Tool chain: The flow is described as generating a logo, turning it into a button for depth, expanding into a UI kit, then animating a clean loop via a Kling node, per the Logo to UI kit workflow walkthrough.

• Reproducibility surface: The author links a duplicable Space so others can clone the workflow directly, as shared in the Space link post and the Freepik space invite.

It’s framed less as “make one good image” and more as “one brand anchor → many consistent assets,” matching the positioning in the Logo to UI kit workflow post.

🖥️ Compute & local stacks: data center buildout, edge accelerators, and DIY open hardware

Creator-adjacent compute signals: US data center construction snapshot plus practical edge acceleration and open hardware cost-downs. This is the infrastructure layer behind cheaper/faster generations.

US data centers under construction map spreads as a capacity-race signal

US data center buildout: A state-by-state snapshot of data centers currently under construction is getting reshared as a quick read on where near-term AI inference/training capacity might land, as shown in the construction map share. For creatives, it’s a downstream signal on regional latency, local hosting options, and how fast “cheaper generations” might propagate once these sites come online.

Treat it as directional—there’s no accompanying methodology, timestamps, or MW/GPU counts in the post itself, per the construction map share.

Google Coral USB gets used to offload Frigate object detection

Coral USB Accelerator (Google): A creator is buying a Google Coral USB Accelerator (Edge TPU) to move Frigate person/object detection off the main CPU, explicitly to avoid overloading their primary box, as described in the Frigate offload note.

• Why it matters for creators: This is the “edge compute” pattern—keeping always-on vision workloads local so the machine running creative tools (or a home server doing gen tasks) doesn’t get pinned by continuous detection, per the Frigate offload note.

• Concrete cost signal: The listing shown is 417.00 PLN, as visible in the Frigate offload note.

MIT-licensed open radar project claims 95% cost reduction

Open-source radar (MIT license): A radar system is being promoted with a headline claim of “95% cost reduction” versus the cheapest available radar setup, alongside an MIT license framing (“open-sauce”), per the cost reduction claim.

For AI creatives, this sits in the same bucket as other cost-down open hardware: cheaper sensing and robotics can expand real-world data capture (reference video, scanning, physical installs) that later feeds into AI-assisted production workflows. The post itself doesn’t include a bill of materials, performance specs, or test results beyond the headline, per the cost reduction claim.

🎞️ What creators shipped (or teased): AI films, interactive experiences, and short-form showcases

Named projects and public drops: teasers for AI-native films and event experiences, plus creator-made shorts and community roundups. This is about output and release cadence—not tool mechanics.

WAR FOREVER is teased as a 27-minute AI war film, trailer due tomorrow

WAR FOREVER (NAKIDpictures + STAGES.ai): A new project is teased with a date stamp “6•6•2026” and positioned as “the bloodiest 27 minutes in human history,” with the trailer promised tomorrow in the WAR FOREVER teaser.

The framing is very release-cadence-forward (hard date + trailer timing), which signals this is being treated like a conventional campaign rollout rather than a one-off test clip, per the language in the WAR FOREVER teaser.

NAKIDpictures previews sensor-driven real-time AI experiences for in-person events

NAKIDpictures (Live interactive experiences): A teaser positions the studio’s in-person “4D-AI gaming” format as running on AI generation “in real-time & curated content,” with sensors feeding branching pipelines that change storylines and themes per attendee, as described in the event experience teaser.

It’s also tied to prior client work (named as Armani/Luxottica in Milan), implying this is already deployed in real venues rather than being a concept-only demo, per the event experience teaser.

A kid-directed Kling 3 clip shows sketch-to-animation co-creation

Kling 3 (Family co-creation): A creator shares a short animation made with Kling 3, framing the creative direction as their 8-year-old daughter’s idea, and the clip itself shows rough tablet sketches transforming into a polished animated result in the Kling 3 co-creation clip.

This is a concrete example of “concept comes from humans, production comes from models” for short-form, with the process visibly compressed into a single before/after sequence in the Kling 3 co-creation clip.

Seedance 2.0 is used for a micro-story time-lapse about a dying office plant

Seedance 2.0 (Micro-story short): A short-form piece pairs a single-line narration (“The office didn’t kill the plant…”) with a time-lapse visual of a plant wilting, explicitly tagged as made with Seedance 2.0 in the Seedance micro-story.

It’s a clean example of a one-shot concept that reads as story-first (setup → visual payoff) while staying within the kind of duration current gen-video models handle comfortably, as shown in the Seedance micro-story.

AI Community Space publishes a “Top 10 picks” highlight reel

AI Community Space (Curation format): A “Top 10 picks” gallery recap is posted as a lightweight distribution mechanism for creator work, with the roundup presented directly as a short scrolling gallery capture in the Top 10 picks recap.

The emphasis is on surfacing and packaging community outputs (not tool talk), with “Top 10” acting as the repeatable unit of programming in the Top 10 picks recap.
