Midjourney V8 Alpha tests 5× faster 2K renders – Relax mode missing

Stay in the loop

Free daily newsletter & Telegram daily report

Executive Summary

Midjourney opened V8 Alpha to early community testing; it touts better prompt following plus ~5× faster renders, a native 2K option via --hd, and improved text rendering when quoted. The rollout ships with a compute caveat: Relax mode isn’t supported yet, and --hd, --q 4, SREF, or moodboard jobs run ~4× slower and cost ~4×; access also looks staged, with some users seeing “Unknown version: 8.” Early sentiment is split—speed is widely praised, but some creators report regressions on specific prompt types and note that legacy prompt habits from V5.2/V7 don’t transfer cleanly.

• OpenClaw exposure: SecurityScorecard reports 40,214 internet-exposed instances across 28,663 IPs; the thread claims 12,000+ are trivially reachable, but remediation details and validation artifacts aren’t shown.

• Composio Agent Orchestrator: open-source layer for ~30 parallel coding agents; one worktree/branch/PR per agent; claims CI-log feedback loops and PR-review autofixes.

• ByteDance Seed MoDA: depth-KV attention claims 97.3% of FlashAttention-2 efficiency at 64K with ~3.7% FLOPs overhead; code is posted, but third-party benchmarks aren’t in-thread.

While you're reading this, something just shipped.

New models, tools, and workflows drop daily. The creators who win are the ones who know first.

Last week: 47 releases tracked · 12 breaking changes flagged · 3 pricing drops caught

Top links today

- Midjourney V8 community test access

- Kaggle AGI benchmarks hackathon hub

- GSD for Claude Code GitHub repo

- AI layoffs explainer video on YouTube

- Ropedia Xperience-10M dataset on Hugging Face

- OpenViktor Product Hunt launch page

- ClawHub Pexo video creation skill

- Pexo agent video maker web app

- Luma Dream Brief $1M ad competition

- Runway AI Summit tickets and details

- Freepik Spaces AI video workflow guide

- Flow Studio combat-to-3D workflow demo

- Mixture-of-Depths Attention paper link

Feature Spotlight

Midjourney V8 Alpha starts public testing (speed, 2K, better text, new UI)

Midjourney V8 Alpha is live for community testing: ~5× faster renders, native 2K mode, stronger prompt adherence, and improved text rendering—plus workflow UI upgrades. Early feedback will shape the model fast.

High-volume story today: many creators are hands-on with Midjourney V8 Alpha and sharing what changed (prompt following, speed, 2K/--hd, text rendering) plus early friction/quality takes. Excludes all non-V8 Midjourney SREF drops (covered in prompt_style_drops).

Jump to Midjourney V8 Alpha starts public testing (speed, 2K, better text, new UI) topicsTable of Contents

🖼️ Midjourney V8 Alpha starts public testing (speed, 2K, better text, new UI)

High-volume story today: many creators are hands-on with Midjourney V8 Alpha and sharing what changed (prompt following, speed, 2K/--hd, text rendering) plus early friction/quality takes. Excludes all non-V8 Midjourney SREF drops (covered in prompt_style_drops).

Midjourney V8 Alpha opens early community testing with 5× speed and 2K mode

Midjourney V8 Alpha (Midjourney): V8 Alpha is now in early community testing with the headline upgrades Midjourney is emphasizing—better prompt following, about 5× faster renders, a native 2K mode via --hd, and improved text rendering when you put text in quotes, as announced in the V8 test announcement and reiterated in the Long-form V8 notes.

The same rollout also claims stronger personalization, sref, and moodboard performance with backward compatibility for V7 profiles, plus web UX changes (conversation mode, grid mode, sidebar settings) aimed at keeping up with the higher generation throughput, as described in the V8 test announcement and linked from the alpha site.

Midjourney V8 Alpha sentiment splits: some call it a regression for specific prompts

V8 Alpha quality debates (community): Alongside the speed hype, there’s sharp negative feedback on certain tasks—one user asked for a sci‑fi “spaceship hull texture” and wrote “This a joke? I feel like the model is at v3…” in the Spaceship texture complaint.

A parallel thread from a creator who regularly uses older Midjourney versions suggests a compatibility gap: they say they still rely on V5.2/V7 for many pieces and that “the prompts don’t work the same on V8,” as stated in the Prompts differ on V8 and contextualized by their note about staying on older defaults in the Using older versions.

Midjourney V8 Alpha usage notes: --raw for control, crank stylize, and rate fast

Midjourney V8 Alpha prompting (Midjourney): Midjourney is telling early testers to change habits—use --raw quickly if you want a plainer or more “in control” look; lean harder on personalization, moodboards, and srefs; and try pushing --stylize 1000 because V8 “shines” most when you rely on stylization systems and longer, more specific prompts, per the Prompting guidance and Long-form V8 notes.

A separate, very practical note from the same post: the best way to help tune V8 is to rate outputs in the lightbox, with 1/2/3 hotkeys and arrow keys to move quickly, as described in the Prompting guidance.

Midjourney V8 Alpha: Relax not supported yet; --hd/--q4 and styling jobs cost 4×

Midjourney V8 Alpha (Midjourney): The early test comes with a sharp compute/pricing caveat—Relax mode isn’t supported yet, and jobs using --hd, --q 4, SREF, or moodboards are currently ~4× slower and cost ~4× compared to regular jobs, per the Modes and pricing notes and the expanded Long-form V8 notes.

Midjourney says it’s working on a new server cluster for Relax plus “cheaper render modes,” but the tweets don’t give a timeline beyond that statement in the Modes and pricing notes.

Midjourney V8 Alpha access is gated: “Unknown version: 8” error appears

Midjourney V8 Alpha rollout (Midjourney): Some users attempting to run V8 are hitting an “Unknown version: 8” error, which implies staged access rather than a fully open switch, as shown in the Unknown version error screenshot.

Midjourney’s account is replying with basic troubleshooting—try going to the alpha site and refresh—per the Refresh suggestion, but no additional gating criteria are stated in the tweets.

Midjourney V8 Alpha first looks: speed praised, with varied realism and stylization tests

V8 Alpha hands-on (community): Early posts are sharing diverse V8 look-tests (portraits, product/motion-style comps, abstract effects), with multiple creators foregrounding speed and the feel of increased depth/texture in certain outputs, as seen in the V8 image test grids and V8 testing images.

The visible “first look” content also suggests people are evaluating V8 by running the same subject across multiple aesthetics rather than chasing one new default style, as implied by the range of tests in the V8 image test grids.

Midjourney confirms an iOS app is in progress

Midjourney iOS app (Midjourney): In a short reply, Midjourney confirmed it’s working on an iOS app (“yes”) in response to a direct question, as captured in the iOS app reply screenshot.

The tweet provides no ETA, feature scope, or whether it will be V8-first; it’s just a roadmap signal in the iOS app reply screenshot.

🎬 AI video generation in the wild: Seedance experiments, Kling spots, and short-film pipelines

Today’s video feed is mostly creator field tests and micro-experiments: Seedance 2.0 sequence tricks and short clips, plus branded Kling-style mini spots. Excludes lipsync/dubbing tooling (covered in voice_narration).

Seedance 2.0 turns a 2×2 grid into a full evolving sequence

Seedance 2.0 (ByteDance): A creator demo shows Seedance generating a continuous, multi-beat transformation from a single 2×2 grid—suggesting a practical way to “sketch” motion progression with four key frames, as shown in the 2x2 grid sequence demo.

The clip is short, but the takeaway is concrete: a grid can function like a mini storyboard (start → mid → variation → end) while Seedance fills in the in-betweens, per the 2x2 grid sequence demo.

Seedance 2.0 creator clip includes field notes on Mitte: no watermark, variable speed

Seedance 2.0 (via Mitte): A short social vignette (“You missed the sunset. But you got the likes.”) is shared as an example of Seedance output, with the same creator adding practical distribution notes about running it on Mitte—“no watermark,” “pricing is pretty competitive,” and generation times that vary with traffic and “Chinese servers,” per the Seedance clip and provider notes.

• Provider context: The hosting/product angle is mainly anecdotal here (CPP invite + affiliate link), but the operational details are explicit in the provider notes and the destination is the Mitte landing page.

Seedance 2.0 “awkwardness” test uses the prompt “the most boring thing you’ve ever seen”

Prompt stress-testing (Seedance 2.0): One creator reports Seedance 2.0 is “very good at awkward,” then pairs it with a deliberately flat prompt—“the most boring thing you’ve ever seen”—to see what the model does when it has minimal creative direction, as shown in the awkwardness test clip.

This is a useful sanity check pattern: if a model can still produce coherent motion and staging from anti-prompts, it’s often a good proxy for baseline scene-assembly strength—though this post is one data point, not a benchmark.

🧠 Copy/paste aesthetics: Midjourney SREFs, Nano Banana prompt kits, and design templates

Prompt drops were dense today: multiple Midjourney SREF styles plus reusable Nano Banana templates for UI, branding, retro looks, and reflection tricks. Excludes Midjourney V8 Alpha capability news (covered in image_generation).

“Awesome Seedance 2.0” GitHub repo curates prompts and video techniques

Seedance 2.0 prompts (community repo): A GitHub collection called “Awesome Seedance 2.0” is being passed around as a centralized library of high-fidelity prompts and techniques, with the repo overview shown in README screenshot and the source available via the GitHub repo. It’s positioned as a prompt pattern bank (cinematic styles, ads, experiments) rather than a single recipe.

The README excerpt visible in README screenshot emphasizes sourcing from X/WeChat and organizing prompt techniques by category, which signals it’s meant for reuse and iteration.

Gemini prompt template formats “DLSS Off vs On” split-screen comps

Gemini image editing prompt: A copy/paste template shows how to combine two uploaded images into a 16:9 split-screen with a neon-green center divider and precise “DLSS 5 Off” / “DLSS 5 On” labels, with the full text posted in Copy-paste prompt. A separate example image of the meme-format comparison is shown in DLSS on-off example.

The prompt explicitly emphasizes “strictly preserving” each side’s original style and lighting in Copy-paste prompt, which is what keeps it useful for side-by-side visual tests.

Midjourney SREF 1259554060: European gothic aristocratic anime with refined cel shading

Midjourney (SREF 1259554060): A new anime look is described as “European gothic aristocratic anime” with bishōnen character design and refined cel shading, with example outputs shown in Gothic anime examples. The references cited include Rose of Versailles and Black Butler in Gothic anime examples.

The post shares the code directly as --sref 1259554060 in Gothic anime examples.

Midjourney SREF 1742554747 nails a Tintin-like ligne claire × anime hybrid

Midjourney (SREF 1742554747): A style ref making the rounds frames the look as “Tintin met anime”—crisp black outlines, flat vivid colors, and animation-cel polish—packaged as a quick prompt starter in Style breakdown and starter. It’s being positioned for story posters, children’s-book covers, and character design where readability matters.

The shared starter format is [your prompt] --sref 1742554747 --v 6.1 --sv4, as written in Style breakdown and starter.

Midjourney SREF 2641056544: “moving illustration” charm showcased via a train shot

Midjourney (SREF 2641056544): A style reference is being shared specifically for “moving illustrations,” with a train example used to communicate the vibe in Train motion example. The share is framed as a reusable look rather than a one-off scene.

The post attaches --sref 2641056544 as the key reuse hook in Train motion example.

Midjourney SREF 2869353407 pushes watercolor-and-ink urban sketching

Midjourney (SREF 2869353407): A watercolor + ink “urban sketching” aesthetic is being circulated as a single style reference, with multiple sample scenes in Urban sketch examples. The frames lean on loose ink contours, patchy washes, and travel-sketch composition.

The share is concise—just --sref 2869353407—as posted in Urban sketch examples.

Promptsref highlights SREF 1435752685 as a “dreamy blue grain” look

Midjourney SREF 1435752685: Promptsref’s daily “most popular sref” post includes a full style read—deep indigo fields, warm fluorescent orange/coral glows, and heavy grain/noise texture—packaged as “Retro Airy Grain / Dreamy Blue Grain Style” in Style analysis post. The same post lists use cases like lo-fi album covers and mood posters, and points to a broader library via the Sref library.

The ranking callout (“Top 1 Sref”) and parameter string appear in Style analysis post, along with the narrative explanation of why the grain is part of the aesthetic rather than a defect.

SREF 1576552686: dreamy neon “candy gradient” look for Niji 6

Midjourney (SREF 1576552686): A “dreamy neon” preset is being pitched as a consistent way to get glowing pinks/turquoise, jelly-like gradients, and glossy 3D sheen for posters and cover art, as described in Neon sref pitch. A longer parameter explainer is linked in the Style breakdown shared alongside the drop.

The suggested keywords to reinforce the aesthetic (“Neon glow,” “Candy gradient,” “Surreal sheen”) are listed directly in Neon sref pitch.

Midjourney SREF 682853358: “Orange dreams” retro graphic space art

Midjourney (SREF 682853358): A compact style ref share pairs a warm, limited orange palette with textured, mid-century-style space motifs (astronauts, rockets, rings) in Orange dreams sref. The post is a straight code drop with a 4-image sample grid.

The code is presented as --sref 682853358 in Orange dreams sref, which is the only required copy/paste piece.

Midjourney SREF 718618355 turns burgers and fries into pseudo-research diagrams

Midjourney (SREF 718618355): A style ref share presents food illustration as if it’s annotated research—handwritten marginalia, balance-scale comparisons (burger vs fries), and diagrammy layouts—shown in Food diagram set. It’s an example of using a consistent aesthetic wrapper for a whole series.

The post anchors the look as --sref 718618355 in Food diagram set, with the rest of the “research” tone coming from the recurring layout and faux script.

🧩 Agentic creator ops: parallel coding swarms, context-rot fixes, and chat-to-video agents

Heavy agent content today: orchestration layers for multi-agent coding, context-management patterns for Claude Code sessions, and agents that produce media outputs (like finished videos) inside chat surfaces. Excludes security incidents/defenses (covered in agent_security).

Composio open-sources Agent Orchestrator for 30 parallel coding agents

Agent Orchestrator (Composio): Composio’s open-source Agent Orchestrator is being shared as a coordination layer for running “~30 AI coding agents in parallel,” where each agent gets its own git worktree/branch/PR and you supervise via a local dashboard, as described in the feature rundown and linked via the GitHub repo. It also bakes in the unsexy parts teams trip over—looping CI logs back to the agent and iterating on review feedback.

• CI and review loops: The thread claims it can auto-fix CI failures by feeding logs back to agents and auto-address PR review comments, as outlined in the feature rundown.

The practical creative angle is shorter “toolsmithing” cycles (pipelines, editors, internal tools) without manually juggling branches and agent sessions.

Pexo on ClawHub: chat-in-messenger agent that returns a finished edited video

Pexo (ClawHub/OpenClaw): Pexo is described as a new “video creation skill” that lets you request a video inside chat surfaces (Telegram/Discord/WhatsApp) and get back a finished edit—transitions/music/pacing—rather than a raw clip, according to the launch description and the follow-up installation note. The differentiator being marketed is automatic per-scene routing across video models (“Sora, Kling, Seedance…”) so the user doesn’t pick models manually, as claimed in the model-selection detail.

Access points mentioned include ClawHub install and a standalone site, as listed in the installation note and the standalone site.

GSD for Claude Code: subagent contexts to avoid “context rot”

Get Shit Done (GSD): A workflow/tool called GSD is pitched as a fix for long Claude Code sessions that degrade as context fills up; it interviews you, spawns parallel research agents, produces “atomic” XML task plans, then executes each task in fresh ~200k contexts while auto-committing to git, per the workflow description and the GitHub repo. The core claim is keeping the “main” chat window around 30–40% full by pushing heavy work into subagent contexts, as stated in the workflow description.

This is positioned less as prompting and more as session architecture for longer builds.

Adaptive “agent OS” pitch: 71 prebuilt agents + tool integrations + iOS app

Adaptive (Adaptive): Adaptive is being promoted as a single “agent OS” platform with 71 prebuilt agents that connects common business tools (Gmail/Slack/Notion/Calendar/GitHub/Plaid/Square/Figma) and runs continuously via an iOS app, as shown in the launch claim and the old stack vs new stack comparison.

A notable concrete framing is replacement-by-consolidation: the thread contrasts an “old stack” (Zapier $50/mo, a second automation tool $30/mo, plus VA/dev costs) against “one platform,” per the old stack vs new stack and the product positioning on the product page.

OpenClaw shortform content ops: idea capture → weekly planning → scripts → posting → analytics loop

OpenClaw content system pattern: One creator describes a 2-month build of an OpenClaw-based shortform “content ops team,” combining automated idea capture, weekly planning automation, an AI-assisted script pipeline, multi-platform posting, and an analytics feedback loop, as diagrammed in the system map.

The detail that will matter to builders is the structure: “manual filming” sits explicitly as a human-in-the-loop step, while planning/scripting/posting/metrics are treated as automatable modules, as shown in the system map.

Laminar raises $3M for open-source observability for long-running agents

Laminar (lmnrai): Laminar is reported to have raised $3M to build open-source observability for long-running AI agents, per the funding note. The tweet frames the product category as “observability for agents,” which typically covers tracing, debugging, and monitoring multi-step runs—important once agent workflows move from “single prompt” to ongoing background processes.

No product screenshots, benchmarks, or docs are included in the tweet itself, so scope and current availability aren’t verifiable from today’s thread beyond the funding claim.

Signal: agents negotiating on behalf of humans for scheduling and contracts

Negotiation agents: A thread argues we’re close to AI agents negotiating with each other “on behalf of their humans,” pointing to meeting scheduling as an existence proof and extending the idea to restaurant selection and even non-adversarial contract negotiation where preferences and BATNAs are knowable, per the negotiation agent note.

This is a directional signal rather than a shipped tool in today’s tweets, but it maps to where agentic “ops” could go next: not only executing tasks, but resolving back-and-forth coordination work between people.

Claim: an agent system that turns a research idea into a full paper with citations

Paper-writing agents: A tweet claims someone built an AI system that takes a research idea and outputs a full academic paper with “real citations,” per the paper generator claim. No repo, paper, or evaluation artifact is provided in the tweet, so it’s not possible to assess quality or whether citations are robust versus superficially formatted.

For creative teams, the relevant angle is end-to-end “draft automation” expanding from scripts and briefs into more formal documents—but today’s evidence is limited to the claim.

OpenViktor claims “hire replacement” automation with org memory and 3,000+ integrations

OpenViktor: OpenViktor is framed as an all-in-one “AI productivity” product that can act like a hire—“organizational memory” plus 3,000+ tool integrations—and is positioned as undercutting high monthly-fee automation products, per the Product Hunt post. The tweet provides a Product Hunt link but no demo artifacts or technical docs in-line, so the capability claims are currently marketing-forward.

This lands in the same bucket as “agent OS” tools, but with less concrete workflow detail than the threads around orchestration and subagent context management.

🗣️ Voice → picture: open-source dubbing, modern lipsync, and audio-driven video workflows

Voice-centric creation is the news today: open-source multi-speaker dubbing, and creator-tested lipsync that works on music without stem separation. Excludes general video model drops (covered in video_filmmaking).

Fun-CineForge open-sources multi-speaker dubbing for real dialogue scenes

Fun-CineForge (Tongyi Lab / Alibaba): A new open-source dubbing model is being pitched as handling multi-speaker conversations (not just single-person talking heads), including cases where lip cues are unreliable—off-screen speakers, fast cuts, and occlusions—by using what the thread calls “temporal modality” to infer who’s speaking and when, as described in the release thread and expanded in the why dubbing breaks explainer.

The project’s public project page is referenced via Project page, while the thread frames the creative impact as being able to dub dialogue scenes without needing clean, continuous face visibility, per the temporal modality clip.

Freepik Speak lip-syncs to music without stem separation

Speak (Freepik): Creators report Speak can lip-sync to full mixed music tracks without separating a vocal stem first—tested on a ~12-second song segment—plus it supports both still-image+audio generation and video+audio re-sync workflows, as detailed in the hands-on notes and illustrated by the still-to-video result follow-up.

• Failure mode: The same testing notes say sync breaks if the face gets obscured, or never looks toward camera at any point, which matters because the tool burns credits per run, per the hands-on notes.

Freepik Spaces shows a node-based lip-sync pipeline (frames → audio → clips)

Freepik Spaces workflow: Freepik demoed a graph-based workflow for building a music video quickly; the steps shown are: generate character views/angles (Nano Banana 2), attach an audio node containing the script, then generate video for each frame using Veed Fabric 1.0, as outlined in the 10-minute walkthrough and spelled out in the sequence steps.

The same thread claims the setup can produce synchronized clips “from every angle” by repeating the frame-generation step and reusing the audio-driven generation, per the multi-angle note and the Fabric 1.0 lipsync note.

Fun-CineForge ships an end-to-end dubbing dataset pipeline

Dataset pipeline (Fun-CineForge): Alongside the model, the thread claims the team is open-sourcing an end-to-end pipeline that turns raw video into structured multimodal dubbing data—positioned as a way to scale beyond small, error-prone, monologue-heavy datasets, as stated in the pipeline announcement and reiterated with entry links in the try it links post.

What’s not shown here is a benchmark artifact or ablation; the tweets emphasize availability + dataset-building practicality rather than measured WER/lip-sync metrics.

Kling 3.0 Motion Control as a post-lip-sync style pass in Freepik Spaces

Kling 3.0 Motion Control (workflow step): In the same Freepik Spaces graph, Kling’s Motion Control is presented as a later node you can connect to an already-generated clip to push a consistent style across scenes; the reusable prompt template shown is “Keep everything consistent. Turn [SUBJECT] into a [STYLE] character. Keep the features of the original image.” as posted in the style-transfer node step.

This is framed as style unification after the audio-driven lip-sync pass, not as a primary animation tool in the workflow, per the style-transfer node step.

🛡️ Agent security wake-up calls: exposed OpenClaw instances + local “firewalls” for agents

Security was a major theme: exposed agent servers in the wild and a new class of defensive tooling that intercepts agent file/network actions locally. This is distinct from broader synthetic-media policy (covered in trust_safety_policy).

SecurityScorecard reports 40,214 exposed OpenClaw instances on public IPs

OpenClaw (ecosystem): A SecurityScorecard scan surfaced 40,214 exposed OpenClaw instances across 28,663 unique IPs, with the thread claiming 12,000+ are trivially reachable for API-key/personal-data theft scenarios, as described in the [exposure thread](t:94|Exposure numbers) and restated in the [incident breakdown](t:387|What happened recap).

The framing that matters for creative teams is the access scope: OpenClaw-style agents often sit next to your whole production surface (project files, renders, source repos, and .env secrets), so “default settings” plus internet exposure becomes a direct pipeline risk, per the [deployment defaults claim](t:94|Default settings risk).

Hosted OpenClaw providers get compared on isolation, jurisdiction, and secret handling

Hosted OpenClaw (provider selection): A single thread lays out a practical security comparison across four hosted options—focusing on tenant isolation model, data residency/jurisdiction, and secrets handling transparency—with the summary table captured in the [quick comparison](t:390|Provider summary).

• Emergent: Characterized as shared-container infrastructure with app-level separation in the [provider note](t:388|Shared containers claim).

• Kimi Claw: Called out for being hosted under Chinese jurisdiction plus a “massive skill library” and 40GB storage, per the [Kimi Claw note](t:389|Jurisdiction and storage).

• Smithery: Framed as having a clean UX but unclear isolation/secret storage documentation in the [documentation critique](t:335|Isolation docs missing).

The post positions “hosting security” as the deciding variable once agents connect to Slack/email/tool APIs, per the [risk framing](t:390|Why isolation matters).

KiloClaw details a per-customer microVM isolation design and cites a 10-day assessment

KiloClaw (Kilo): The thread claims every customer gets a dedicated Firecracker microVM (own kernel) with five independent layers of tenant isolation, and that API keys are encrypted with RSA-OAEP + AES-256-GCM and only decrypted inside the VM, as outlined in the [architecture claim](t:254|MicroVM design) and expanded in the linked [security write-up](link:254:0|Security breakdown).

It also cites an “independent 10-day security assessment” finding zero cross-tenant access paths and zero common injection classes, per the [assessment claim](t:254|Assessment summary).

Kavach pitches a local agent firewall with fake-success file and network traps

Kavach (open source): A new open-source tool is pitched as a local “firewall” layer between an AI agent and the OS, designed to intercept or neutralize destructive actions by returning fake success to the agent—e.g., redirecting rm -rf-style operations into a hidden directory (“Phantom Workspace”) and spoofing risky outbound calls with fake 200 OK (“Network Ghost Mode”), as described in the [feature rundown](t:34|Capabilities list) and linked via the [GitHub repo](link:225:0|GitHub repo).

The post also claims cryptographic caching/rollback for file edits, a honeypot token file that triggers lockdown, and a “human override” gate that rejects synthetic mouse injection, per the [defense mechanisms list](t:34|Defense mechanisms).

🔍 Likeness & training-data blowback: when text prompts can summon a “digital twin”

Trust issues today are about identity and consent: reports claim models can reproduce a creator’s likeness from text alone, and platforms’ training policies (and opt-out gaps) are becoming the story. Excludes agent/server security (covered in agent_security).

Nano Banana 2 backlash: “digital twin” likeness from text-only prompting

Nano Banana 2 (Gemini Flash Image): A post claims a text-only prompt (no reference image, not naming the person) repeatedly generated near-identical images of YouTube creator Alix Earle—framed as evidence of likeness memorization—describing outputs as “a perfect digital twin” in the incident summary, with more background consolidated in the longer write-up. This is directly relevant to AI filmmakers, designers, and storytellers because it shifts “character creation” risk from spoofing to incidental summoning—where detailed descriptors (jewelry/pose/face cues) might converge on a real person.

The same post describes the creator reaction (“disgust”) and the defense (“I only used words”) in the incident summary, but no raw prompt/output set is embedded in the tweets, so treat the claim as unverified until primary artifacts surface.

YouTube-to-model training: creator opt-out gap becomes the story

YouTube training policy (Google): A thread alleges Google trains Gemini and Veo 3 on a subset of YouTube’s library (citing a “~20 billion” video scale) under existing ToS, while offering creators “zero opt-out” from internal training—contrasting that with tools aimed at blocking third-party scrapers—in the policy claim, with the same narrative expanded in the article write-up.

For working creatives, the practical implication is that distribution platforms can double as training inputs for models that later generate lookalike talent, brand faces, or “UGC-style” performers—even when the original creator never intended licensing.

EU antitrust pressure is being framed around creator data and AI training

EU competition scrutiny (claimed): One post says the European Commission opened a formal antitrust investigation in Dec 2025 focused on whether Google’s model-training use of creator content amounted to forced data sharing “with no compensation” and “no ability to refuse,” as described in the EU probe claim and reiterated in the supporting write-up.

This matters to AI studios mostly as a timeline risk: if the framing sticks (competition + creator rights), licensing/opt-out mechanics could become table-stakes for platforms that also ship creative models.

“AI safety” skepticism shows up as a status/career meme

AI safety cultural signal: A reposted take frames “AI safety” skepticism as a social/career phenomenon—spotting “AI safety” profiles on LinkedIn with “no previous” experience—rather than as a specific tooling debate, as captured in the AI safety gripe.

🛠️ Practical tool moves: Photoshop Rotate Object, mobile edit stacks, and quick generators

Single-tool craftsmanship today: Photoshop’s new beta transform, mobile draw-to-edit workflows, and quick ways to generate graphics/UI without everything looking the same. Excludes multi-tool pipelines (covered in creator_workflows_agents).

Photoshop (beta) Rotate Object details surface: 20 Gen Credits per use and best paired with Harmonize

Photoshop (beta) (Adobe): Following up on Rotate Object (rotate + Harmonize workflow), additional practical details emerged—an 80.lv write-up notes the feature ships in Photoshop beta 27.5.0 and costs 20 Generative Credits, with the first three attempts free, before producing a low-res preview that then upscales with added detail, as described in the 80.lv write-up and echoed by a community recap in the release repost. It’s positioned as a way to “spin/tilt/dolly” a 2D object into a new angle, then use Harmonize to make lighting match the composite, per the same 80.lv write-up.

What’s still unclear from today’s tweets: output constraints (object size limits, failure modes, and consistency across multiple rotations) beyond the credit cost and UI entry points.

Gamma Imagine: generate 3 invite concepts, then iterate with prompt edits and buttons

Gamma Imagine (Gamma): A hands-on share shows Gamma generating multiple event invite designs from a date + theme, then letting the creator revise layout/copy via text prompts and UI buttons, as demonstrated in the invite generation demo. The framing here is design iteration speed plus escaping “same AI font/aesthetic” defaults, with an example invite set shown in the invite examples.

The visible workflow is closer to “generate options → pick one → edit in place” than exporting raw generations into another design app.

Krea mobile app: draw a mask, prompt the edit, then apply Nano Banana stylization

Krea (mobile app): A creator demo shows a fast “draw-on-image → prompt edit → stylize” loop on phone—sketching directly over a photo to guide changes, then applying Nano Banana for the final look, as shown in the mobile edit demo.

The clip frames this less as model-chasing and more as tactile art direction: the drawing step sets constraints (where to change), and the prompt handles the transformation.

Veo 3 looping trick: set the first frame and last frame to the same image

Veo 3 (first/last-frame control): A creator tip notes that seamless loops are easier when the first frame and last frame are the same image, using Veo 3’s first/last-frame feature, as described in the looping tip.

The example shown is a short looping motion clip where the cycle closes cleanly because the endpoints match.

Illustrator trick: rounded-corner cutouts to draw clean, stylized map lines

Adobe Illustrator: A short screen recording walks through building a stylized “map” look by using rounded-corner shape cutouts (clean white paths on a dark field) to form roads/lines, as shown in the Illustrator map demo.

This is a practical backdrop/graphic asset technique that can feed into AI workflows later, but the technique itself is pure Illustrator craft.

A free ASCII art tool is getting shared as a quick “image to text-art” output

ASCII art tool: A short demo shares a free “ASCII Art Magic” utility positioned as a quick way to turn generated imagery into shareable text-art, with the creator framing it as a Midjourney + Grok + converter flow in the ASCII tool demo.

The main creative value is format-switching—turning visuals into something that posts well as plain text (or at least reads as “text-native”) without rebuilding the composition manually.

Codex app UI sentiment: creators prefer the desktop app over CLI/TUI sessions

Codex (app vs CLI): A creator opinion argues the Codex app experience is dramatically better than Codex CLI and other terminal UIs, emphasizing a preference for a graphical surface over TUI workflows, as stated in the UI vs CLI take.

This reads as a UI/ergonomics signal: as coding agents get more capable, session management and visibility in a GUI starts to matter as much as raw model quality.

🧊 3D & animation builders: footage→3D scenes, no-code engines, and faster asset creation

3D creation today is about production acceleration: converting footage into editable 3D scenes, building 2D adventure games with node logic, and rapid mesh generation. Excludes pure video-model clips (covered in video_filmmaking).

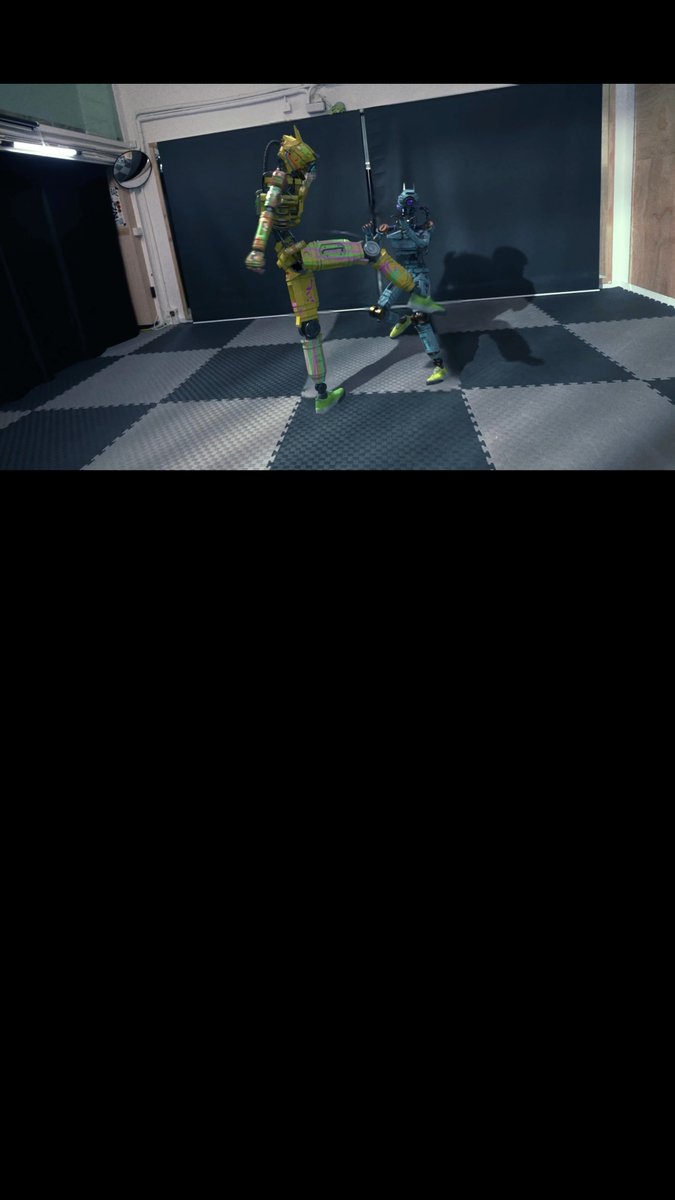

Flow Studio turns live-action fight footage into a tracked, lit 3D scene

Flow Studio (Autodesk): A practical workflow demo shows live-action combat footage getting converted into an editable 3D scene after you assign CG characters; the platform handles camera tracking and lighting “in the cloud,” framing it as a replacement for hours of rotoscoping and manual track setup, as described in the Workflow description.

• Pipeline shape: Upload footage → assign CG characters → cloud processing returns a 3D scene with tracking/lighting already established, per the Workflow description.

The example is credited to creator “Match,” with Autodesk positioning this as a pre-polish acceleration step rather than final animation output, according to the Workflow description.

RAMEN game engine teases AI video-to-spritesheet, spatial lighting, and no-code logic

RAMEN engine (techhalla): A first public build is teased for “next week,” aiming to let you assemble a fully playable 2D adventure in a couple days via a built-in video-to-spritesheet generator (explicitly called “AI video compatible”), spatial detection for 2D backgrounds (custom lights/shadows), and node-based no-code game logic, as outlined in the Feature list post.

• What to watch: The demo clip shows the workflow moving between “Video to Spritesheet,” “Spatial Detection,” and a node graph before previewing a lit 2D level, matching the Feature list post.

The post is also actively recruiting testers (comment to join), which signals this is still a builder-facing alpha rather than a polished release, per the Feature list post.

🎞️ What creators shipped: AI films, spec ads, reels, and playable experiments

Finished work and releases today: longform AI cinema episodes, a big brand-adjacent AI music video breakdown, studio reels, and games/interactive experiments. Excludes tool announcements and prompt drops (covered elsewhere).

WAR FOREVER • Part One releases, with a 2‑minute dogfight tease

WAR FOREVER (Dustin Hollywood): Following up on first look (early clip), Dustin Hollywood published WAR FOREVER • Part One and framed it as “cinema grade,” positioning it as a milestone for creators grinding on AI film craft, as stated in the Part One release post.

He also shared a separate look at a 2‑minute dogfight over a beach that he says appears in a longer film coming in June, as shown in the Dogfight tease post.

• Release surfaces: The cut is also posted “on YouTube,” per the YouTube link post pointing to the YouTube cut.

• What’s new today: The additional dogfight excerpt (and accompanying poster frames) adds a second “scene-scale” preview beyond the earlier first-look clip, as shown in the Dogfight tease post.

Tether’s AI music video drops a free pack: 600+ shots and all prompts

Tether AI music video (starks_arq): Following up on Tether video (project launch), starks_arq says they directed/produced Tether’s “first official AI music video,” made across 1,000+ generations over 5 pipeline runs with 90 shots in the final cut, as described in the breakdown offer.

• What’s being shared: They’re offering a free bundle including a production breakdown PDF, “600+ generated shots,” and “every single prompt,” per the breakdown offer.

• Distribution model: Access is gated via RT + reply (“STARK”) for a DM, and they also point people to a community channel in the Telegram invite, which links to the Telegram group.

A separate post frames the rationale as not charging for educational material when the work is already paid for, per the materials stance.

“Before The Sunrise” spec ad ships from a Midjourney still to Nano Banana Pro + Kling

“Before The Sunrise” (Allar Haltsonen): A short spec ad was released using Nano Banana Pro + Kling 3.0 inside Freepik Spaces, with the creator explicitly noting it began from an older Midjourney image, as described in the spec ad post.

The starting still is shown separately in the original Midjourney still.

• Production thread detail: The post frames the work as a multi-tool pipeline (Midjourney origin → Freepik Spaces workflow → Kling animation), per the spec ad post.

Nova Squadron publishes a split-stream episode: anime feed vs sci‑fi miniseries

Nova Squadron (BLVCKLIGHTai): A new “broadcast” drop titled “Red Horizon Burns” was published as a 17-scene sequence framed as a recovered recording, as written in the signal teaser.

More unusually, the creator says the “Route 47” system pulled two copies of the same broadcast—one presented as an anime episode and the other as the opening chapter of a sci‑fi miniseries—released together in a side-by-side format, as described in the split-stream concept post.

• Format signal: The split-screen cut makes the “two versions of the same story” concept legible immediately (anime feed left, live-action bridge right), as shown in the split-stream concept post.

📅 Deadlines & stages: creator competitions, summits, and live workshops

Time-bound opportunities today include major prize pools and creator-facing industry events (benchmarks hackathon, ad competition, AI video summit).

DeepMind and Kaggle open a $200K hackathon to build cognitive AI benchmarks

Cognitive eval hackathon (Google DeepMind × Kaggle): DeepMind is sponsoring a Kaggle competition/hackathon with $200K in prizes to build new evaluations aimed at “progress toward AGI” (explicitly cognitive capabilities), as announced in the Hackathon launch post and echoed by the organizer call in Benchmark dimensions list.

• What they want built: benchmarks targeting learning, metacognition, attention, executive functions, and social cognition—positioned as a response to “AI saturating most benchmarks,” per the Benchmark dimensions list.

• Why it matters: if you ship creative tools on top of frontier models, new evals are one of the few levers that can shift what model teams optimize for (especially around long-horizon cognition rather than short prompt tricks), which is the explicit framing in the Hackathon launch post.

Luma’s Dream Brief offers up to $1M for unmade ad ideas, due March 22

Luma Dream Brief (Luma): Luma is running a global competition with prizes “up to $1,000,000” for producing an unmade advertising idea using Luma AI, with a submission deadline of March 22, per the Competition teaser and the details on the Competition page.

The positioning is explicitly “no client, no approvals,” i.e., closer to a creator-first spec ad lane than a brand commission, as described in the Rules recap post.

Runway AI Summit lands March 31 in New York with NVIDIA, EA, Lucasfilm and Adobe

Runway AI Summit (Runway): Runway is selling tickets for its March 31 in‑person summit in New York, pitching sessions with speakers from NVIDIA, EA, Lucasfilm, and Adobe, as stated in the Summit announcement and detailed on the Event page.

The tweets don’t include a pricing breakdown beyond “tickets available,” but the Event page lists a one-day agenda format and the speaker roster focus (media + creative tooling + compute).

Dustin Hollywood schedules a March 29 generative filmmaking masterclass

Generative filmmaking masterclass (Dustin Hollywood): A new March 29 in‑person masterclass was announced covering generative filmmaking workflow/prompt design; the offer includes a deliverable video, working files, a “book of prompts,” and one year free of Stages.ai, according to the Masterclass announcement.

The same post also claims a bundled zip of templates plus “500+ effects & editorial prompts,” and notes sign-up timing (“available on 3/17/2026”) in the Masterclass announcement.

Pictory hosts a live webinar on generative AI video on March 18 (11am PST)

Pictory webinar (Pictory): Pictory scheduled a live session for March 18, 11 AM PST with CEO Vikram Chalana and CMO Scott Rockfeld on how generative AI is shaping video creation, as announced in the Webinar announcement with sign-up via the Registration page.

A follow-up post frames the angle around “automation in video production” and enterprise considerations like security/compliance, per the Enterprise pitch.

📚 Research & datasets creatives will feel soon: depth-scaling attention, city world models, embodied video corpora

Mostly papers/datasets today that map to creator tooling next: attention mechanisms for long context, grounded world simulation video, and huge egocentric datasets for embodied/spatial models. (No bioscience items included.)

Ropedia Xperience-10M releases 10M embodied interactions with 10k hours of first-person captures

Ropedia Xperience-10M: A large egocentric multimodal dataset drops on Hugging Face with 10 million “experiences” and 10,000 hours of synchronized first-person recordings, including six video streams, audio, stereo depth, camera pose, hand + full-body mocap, IMU, plus hierarchical language annotations, as described in the [dataset announcement](t:107|dataset announcement).

For creative tools, datasets like this are the raw ingredient for better “actor-in-space” understanding (hands, gaze, body motion, camera motion) that tends to show up later as more controllable character blocking and spatial continuity.

Seoul World Model grounds city-scale video generation in real street-view retrieval

Seoul World Model (SWM): A “real metropolis” world-simulation paper describes retrieval-augmented conditioning on street-view imagery to generate longer-horizon, spatially faithful city videos (trajectories described as spanning hundreds of meters), as introduced in the [paper post](t:96|paper post) and expanded on the [paper page](link:96:0|paper page).

For filmmakers and storytellers, this is the research direction behind future “location-true” generative previs (consistent turns, blocks, landmarks) instead of purely invented city continuity.

Mixture-of-Depths Attention (MoDA) targets depth scaling without long-context slowdown

Mixture-of-Depths Attention (ByteDance Seed): The MoDA paper proposes attention heads that mix current-layer sequence KV with prior-layer “depth KV,” aiming to reduce “signal dilution” as models get deeper; the writeup claims 97.3% of FlashAttention-2 efficiency at 64K with a hardware-friendly algorithm, plus ~3.7% FLOPs overhead in reported experiments, as shown in the [paper card](t:82|paper card) and detailed on the [paper page](link:82:0|paper page).

For creators, this line of work maps to models that keep long-context reliability (story bibles, project specs, shot lists) while scaling depth for better reasoning—without paying the full latency penalty that usually comes with more layers.

Intel publishes MiroThinker-1.7 INT4 checkpoints for lighter deployment

MiroThinker-1.7 INT4 (Intel): Intel-linked INT4 quantized checkpoints for MiroThinker-1.7 (and a mini variant) are posted to Hugging Face, emphasizing 4-bit deployment efficiency, as pointed to in the [INT4 availability note](t:160|INT4 availability note) with model artifacts at the [Hugging Face listing](link:160:0|Hugging Face listing).

For production creative stacks, INT4 releases are often what makes “run it locally / run it cheap” feasible for long-running drafting, tagging, or project-knowledge assistants.

PokeAgent Challenge benchmarks long-context planning via Pokémon battling and speedrunning

PokeAgent Challenge: A benchmark paper frames Pokémon as a testbed for partial observability, game-theoretic decisions, and long-horizon planning, spanning both competitive battling and RPG speedrunning; the post highlights scale ("20M+" battle trajectories) and a competition validation angle, as shown in the [paper card](t:111|paper card) and summarized on the [paper page](link:111:0|paper page).

This kind of evaluation tends to correlate with agents that can keep a coherent plan across many steps—useful for creative pipelines where “multi-scene consistency” is the whole job.

Mamba series update signals continued shift toward hybrid architectures

Mamba (hybrid models): A thread signals a “newest model in the Mamba series” and frames it inside the broader move toward hybrid architectures, as noted in the [Mamba update repost](t:38|Mamba update).

For creatives, the near-term implication is mostly practical: hybrid designs are often pursued for speed and long-context efficiency, which is the same set of constraints that show up when you’re iterating scripts, shot lists, or long edit logs through an assistant.

MiroThinker-1.7 and MiroThinker-H1 announced as research agent family updates

MiroThinker (MiroMind): A release post announces MiroThinker-1.7 and MiroThinker-H1 as the latest generation of a research-agent model family, per the [release mention](t:54|release mention).

The creative relevance is that “research agent” families usually optimize for tool-using, planning, and long-task follow-through—capabilities that later get productized as better brief-to-treatment and brief-to-shot assistance.

MoDA releases code alongside the paper for depth-KV experiments

MoDA code release: The MoDA abstract screenshot includes a code pointer (“Code is released at …/MoDA”), signaling that the depth-KV mechanism is intended to be reproducible and benchmarked by others, as captured in the [abstract screenshot](t:82|paper abstract) and summarized on the [paper page](link:82:0|paper page).

This matters downstream for creative tooling because open implementations tend to get folded into training stacks and eventually show up as “longer context that stays sharp” in production models.

📈 Distribution reality: where longform AI video gets discovered (and where it doesn’t)

Discourse today centers on reach and discovery: creators feel squeezed by short-form-first algorithms and are asking what a dedicated longform AI video platform should do.

Longform AI video still lacks a discovery home beyond short-form algorithms

Longform AI video distribution: A creator discussion argues that current social platforms are optimized for quick hits, not “sitting down and watching” longer AI pieces—so narrative films, music videos, and surreal experiments don’t have a natural discovery surface, as laid out in the platform question. The feature wishlist is notably creator-centric—browse by mood/genre, follow specific artistic voices, and avoid algorithmic penalties for longer runtimes, per the same platform question.

• What “good discovery” looks like here: Mood/genre navigation and creator-following are framed as table-stakes for longform discovery, rather than pure engagement ranking, according to the platform question.

When reach drops, some AI video creators lean into experimentation

Creator reach volatility: 0xInk describes distribution as cyclical—“the algo comes and goes”—and frames low reach as a period that’s more conducive to trying new formats rather than optimizing for performance, as stated in the reach reflection. That posture maps to the broader longform/discovery gap: if distribution is unreliable, creators may prioritize craft experiments over packaging for feeds.

• Experimentation as a distribution response: The associated workflow clip provides concrete evidence of “experiment more” behavior during a low-reach stretch, aligning with the stance in the reach reflection.

While you're reading this, something just shipped.

New models, tools, and workflows drop daily. The creators who win are the ones who know first.

Last week: 47 releases tracked · 12 breaking changes flagged · 3 pricing drops caught