vLLM v0.15.0 adds MoE Multi‑LoRA – +454% tokens/s, -87% TTFT

Stay in the loop

Free daily newsletter & Telegram daily report

Executive Summary

vLLM shipped v0.15.0 with Multi‑LoRA serving for MoE via a new fused_moe_lora kernel; the project claims +454% output tokens/sec and -87% time‑to‑first‑token on GPT‑OSS 20B Multi‑LoRA, plus dense gains like Qwen3 32B at +99% OTPS; framing is “one base MoE + many adapters on one GPU,” handling compound sparsity (expert routing + adapter selection) with fused paths, Split‑K, and CTA swizzling. ROCm users also get AITER attention backends that route prefill/decode/extend separately; vLLM cites up to 4.4× decode throughput via KV‑layout and model‑specific kernels.

• Anthropic–DoW procurement fight: DoW directive language frames Anthropic as a “supply chain risk” with secondary‑boycott‑style contractor restrictions; Anthropic says it will sue and argues 10 USC 3252 would limit scope to DoW contract work; a Trump post calls for government‑wide offboarding with a 6‑month phaseout; Markey signals congressional pressure; Axios claims OpenAI’s red lines were accepted.

• Claude Code 2.1.63: /simplify and /batch ship for parallel refactors/migrations; HTTP hooks now POST/return JSON; auto‑memory/config shared across git worktrees; new opt‑out flag ENABLE_CLAUDEAI_MCP_SERVERS=false.

• Platform churn: Gemini 3 Pro Preview retires Mar 9 and gemini-pro-latest shifts Mar 6; DeepSeek V4 “next week” multimodal claims remain rumor-only.

Top links today

- vLLM multi-LoRA serving for MoE models

- Perplexity open embedding model on Hugging Face

- Imbue Evolver open-source optimizer repo

- Code Review Bench evaluation pipeline

- Vercel Queues public beta announcement

- OpenAI $110B funding and infra update

- Anthropic statement on Secretary of War comments

- Qwen3.5 model family release overview

- Qwen3.5 27B model weights on Hugging Face

- Alibaba Cloud Qwen3.5 API pricing and docs

- Claude Code CLI 2.1.63 changelog

- Teleport zero-trust identity platform repo

Feature Spotlight

Anthropic vs DoW escalates: supply‑chain risk threat, federal offboarding order, and court challenge

US DoW moves to blacklist Anthropic as a “supply chain risk,” triggering contractor/cloud shockwaves; Anthropic says customers mostly unaffected and vows a court fight—raising the stakes for AI procurement, safety terms, and vendor leverage.

Dominant cross-account storyline today: the Department of War moves to label Anthropic a “supply chain risk,” with knock-on implications for cloud hosting and defense contractors; Anthropic publicly commits to fight in court and reiterates red lines on surveillance and autonomous weapons.

Jump to Anthropic vs DoW escalates: supply‑chain risk threat, federal offboarding order, and court challenge topicsTable of Contents

🏛️ Anthropic vs DoW escalates: supply‑chain risk threat, federal offboarding order, and court challenge

Dominant cross-account storyline today: the Department of War moves to label Anthropic a “supply chain risk,” with knock-on implications for cloud hosting and defense contractors; Anthropic publicly commits to fight in court and reiterates red lines on surveillance and autonomous weapons.

Hegseth declares Anthropic a “supply chain risk” with secondary-boycott-style language

Department of War (Hegseth): The Secretary of War message circulating today directs the DoW to designate Anthropic a “Supply-Chain Risk to National Security” and claims that “no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic,” as shown in the Directive screenshot and repeated in Legal reading concern.

This framing matters operationally because it reads like a secondary boycott (restrictions on third parties), which is why multiple threads immediately model downstream effects on cloud hosting and defense supply chains rather than treating it as a normal procurement dispute.

Cloud hosting becomes the immediate choke point in the Anthropic “ban” wording

Cloud hosting & defense contractors: Multiple threads note that Anthropic serves via cloud partners (AWS/GCP/Azure) that also do business with the U.S. military; taken literally, Hegseth’s “no contractor… may conduct any commercial activity with Anthropic” language would collide with Anthropic’s ability to serve anyone, as argued in the Cloud-provider conflict analysis.

This line of reasoning is why the “cloud networks only” deployment constraint and contractor definitions matter more than the headline label; it turns into an infra continuity question (what can hyperscalers legally keep hosting) rather than a model capability question.

Trump orders federal agencies to cease Anthropic use with a six‑month phaseout

U.S. federal agencies (Trump): A Truth Social post attributed to Trump directs every federal agency to “immediately cease all use of Anthropic’s technology,” while giving DoW-like users a six-month phaseout period, as shown in the Truth Social screenshot.

The post also threatens “full power of the Presidency” consequences if Anthropic doesn’t cooperate during the phaseout, which turns the dispute from contract negotiation into a cross-agency procurement/authority fight with near-term vendor risk for any federal-adjacent workload.

Altman says OpenAI reached DoW agreement for classified deployment with surveillance and weapons red lines

OpenAI (classified deployment): Sam Altman says OpenAI reached an agreement to deploy models on the DoW classified network, explicitly naming two principles—no domestic mass surveillance and human responsibility for use of force—and describing additional safeguards like forward-deployed engineers and cloud-only deployment, per the Agreement text that is also shown being reshared in the Repost screenshot.

The practical delta for government/enterprise AI teams is that “same mission, different paper” is now being debated: whether OpenAI’s “lawful purposes + safeguards” framing is materially different from Anthropic’s asked-for explicit prohibitions.

Axios claim: Pentagon accepts OpenAI red lines while still moving against Anthropic

Pentagon contracting (Axios-sourced): A circulated Axios headline claims the Pentagon approved OpenAI “safety red lines” after dumping Anthropic, fueling debate that the conflict is partly about Anthropic/Dario specifically rather than the abstract policy terms, as shown in the Axios headline screenshot and echoed in the Contract comparison take.

This matters because it implies future negotiations may hinge less on safety principles in the abstract and more on definitional control (who gets to interpret compliance) and vendor leverage during live operations.

Report: 500+ Google/OpenAI employees urge CEOs to back Anthropic-style red lines

Employee coordination signal: A report claims 500+ verified employees from Google and OpenAI signed an open letter (“We Will Not Be Divided”) urging leadership to refuse building AI for mass domestic surveillance or fully autonomous weapons, and accusing the DoW of playing labs against each other, as described in the Open letter report.

The number (500+) is the main novelty here; the tweets don’t include the letter text itself, so the evidentiary status depends on the linked source named in that thread.

The contract language fight centers on “all lawful purposes” vs explicit veto power

Contract terms (definition and control): Threads attempt to explain why Anthropic might reject terms that OpenAI accepts: “all lawful purposes” language can be bounded by existing statutes, while explicit “no mass surveillance / no autonomous weapons without consent” language raises vagueness and continuity concerns (including the fear that a vendor could “pull the plug” mid-operation), as laid out in the Terms comparison and discussed in the Wording speculation.

A separate but related claim is that where safety constraints “live” (training-time vs policy/system layer) can change how removable they are in classified deployments, which is argued in the Architecture speculation screenshot but is not backed by a primary technical disclosure in the tweets.

A compact timeline of the Anthropic–Pentagon escalation becomes a shared reference

Event timeline artifact: A widely shared “AI Standoff” timeline compresses the story from Anthropic’s classified-network work through the ultimatum and the supply-chain-risk escalation into a single reference image, as compiled in the Timeline infographic.

The value for operators is that it captures the sequencing (contracts, ultimatum, alleged last-second deal, and legal response) that determines which obligations are contractual vs political statements vs procurement mechanisms.

Sen. Markey calls for congressional action to reverse Anthropic “supply chain risk” move

U.S. Senate (Markey): Senator Markey posts a statement demanding immediate congressional action to reverse the DoD designation of Anthropic as a supply chain risk, as amplified in the Markey statement repost.

This is a concrete signal that the dispute is likely to spill into oversight and appropriations channels, not remain a lab-by-lab contracting negotiation.

The dispute is framed as a new political-risk line item for AI labs and datacenters

Political risk (AI infra): Several comments argue the U.S. government is putting major commercial enterprises on notice that it can “destroy [a] business at a moment’s notice,” as stated in the Political risk warning, with an explicit claim that this will affect data center investment calculus beyond Anthropic, as argued in the Datacenter risk note.

This angle matters because AI labs’ infra strategy is already tightly coupled to long-dated capex and multi-year power commitments; the tweets frame the new uncertainty as regulatory/political rather than technical.

🧰 Claude Code shipping sprint: /simplify + /batch, HTTP hooks, and worktree-shared memory/config

High-signal engineering updates to Claude Code today (beyond yesterday’s auto-memory/connectors story): new bundled slash commands for parallel refactors and migrations, plus workflow plumbing (HTTP hooks, worktree-sharing) that changes how teams run agent-driven PR loops. Excludes DoW policy drama (feature).

Claude Code 2.1.63 shares auto-memory and config across git worktrees

Claude Code 2.1.63 (Anthropic): Project configs and auto-memory are now shared across git worktrees in the same repo, cutting repetitive setup when you’re using worktrees for isolation or parallel agent branches—an explicit continuation of Auto-memory (persistent project context), with the concrete “shared across worktrees” change called out in the [2.1.63 release notes](t:342|Release highlights).

This aligns with the /batch workflow that relies on git worktrees for parallel execution, as described in the [batch command spec](t:130|Batch description).

Claude Code AskUserQuestion can render markdown snippets in the UI

Claude Code (Anthropic): The AskUserQuestion tool can now show markdown snippets (diagrams, code examples) inside the question UI, enabling richer guided flows where the harness can present structured context or visual explanations without dumping it into plain text, per the [shipping thread](t:33|Shipping thread).

Claude Code reduces long-session memory by stripping progress payloads

Claude Code 2.1.63 (Anthropic): Long sessions with subagents get a memory-usage improvement by stripping heavy progress-message payloads during context compaction, aiming to keep long-running agent work more stable before hitting context/latency cliffs, per the [changelog highlight](t:342|Release highlights).

Claude Code Remote Control begins rolling out for Pro users

Claude Code Remote (Anthropic): Remote Control is described as “rolling out now for Pro users,” signaling broader availability of long-running agent sessions that persist when you step away from the terminal, per the [rollout note](t:27|Rollout note) and echoed in the [follow-up remark](t:186|Remote control follow-up).

Claude mobile Google connectors add Calendar actions and Gmail drafts

Claude connectors on mobile (Anthropic): A mobile connector update prompts users to re-auth Google Calendar/Gmail to unlock action-taking—Calendar can now create/update/respond to events, and Gmail can create drafts for review/edit in context, as shown in the [connector update screenshots](t:105|Connector update screenshots).

Claude Code /copy can now default to copying the full response

Claude Code (Anthropic): The /copy picker adds an “Always copy full response” option, so future /copy invocations can skip the code-block picker and grab the entire last assistant message directly, as noted in the [2.1.63 changelog](t:342|Release highlights) and paired with the baseline /copy behavior described in the [shipping note](t:183|Copy command behavior).

Claude Code adds an env var to opt out of bundled ClaudeAI MCP servers

Claude Code 2.1.63 (Anthropic): A new environment variable, ENABLE_CLAUDEAI_MCP_SERVERS=false, lets users opt out of making claude.ai MCP servers available by default—useful for tighter enterprise control over tool surface and auth paths—per the [2.1.63 changelog](t:342|Release highlights).

Claude Code adds manual paste fallback for MCP OAuth redirects

Claude Code 2.1.63 (Anthropic): MCP OAuth auth now includes a manual “paste callback URL” fallback when the automatic localhost redirect fails, reducing setup friction for MCP servers in environments where loopback redirects break, per the [2.1.63 changelog line](t:342|Release highlights).

Claude Code system prompt shifts from “Task tool” to “Agent tool” guidance

Claude Code 2.1.63 (Anthropic): The system prompt updates replace “Task tool” references with “Agent tool” guidance (including Explore guidance updates), suggesting an internal semantics/UX shift in how the harness frames delegation and subagent usage, per the [system prompt diff summary](t:795|System prompt updates) and the broader [2.1.63 release note](t:342|Release highlights).

Claude Code’s VS Code sessions list gets rename and remove actions

Claude Code 2.1.63 (Anthropic): The VS Code integration adds session rename and remove actions directly in the sessions list, tightening the management loop for long-running or multi-session agent work, per the [2.1.63 changelog](t:342|Release highlights).

🧑💻 Codex product & API momentum: GPT‑5.3‑Codex rollout, desktop multi-agent UX, and model-version breadcrumbs

Codex chatter is mostly about day-to-day usability and rollout signals (desktop app, multi-agent control, and API availability), plus early breadcrumbs around model version gating. Excludes OpenAI’s funding/infra deal mechanics (covered elsewhere).

OpenAI says Codex reached 1.6M weekly users and tripled since Jan 1

Codex (OpenAI): OpenAI is claiming 1.6M weekly Codex users, stating usage has “more than tripled since the start of the year,” as shown in the Codex users metric excerpt and tracked in a community backfill of public anchors in Growth chart analysis.

This is a workload-signal for infra planners because it suggests the Codex product surfaces (CLI/app/API) are already operating at consumer-ish scale, not just “devtool early adopter” scale.

GPT-5.3-Codex positioning: strong for automation and backend, weaker on frontend

GPT-5.3-Codex (builder sentiment): People report hearing at meetups that GPT-5.3-Codex is the “clear winner” for background agent automation “for a given $ amount,” as described in Meetup cost-performance claim; others say they use Codex 5.3 for most work “except frontend,” per Frontend exception, and explicitly reach for it on “complicated software engineering,” per Complicated engineering note.

The through-line is less about “best model overall” and more about task routing: backend refactors/automation are highlighted as the sweet spot, while UI work is still called out as a gap.

Responses API `view_image` gets original-resolution support behind a model gate

Responses API image tooling (OpenAI): A repo breadcrumb shows original-resolution handling for the view_image tool behind a view_image_original_resolution flag, with behavior gated on parsing model slugs as gpt-5.4 or newer per Feature flag summary and reinforced by a unit test asserting version support in Version gate test.

This matters for anyone doing vision-in-the-loop coding/QA flows because it implies (a) the tool can preserve original PNG/JPEG/WebP bytes in some code paths, and (b) OpenAI is using explicit semantic version gates in infra, not only policy toggles.

Codex CLI adds voice input: “Now you can speak to Codex CLI”

Codex CLI (OpenAI): A new capability to “speak to Codex CLI” is being called out in Voice input note, pointing at voice-as-control-layer for coding agents rather than only text prompts.

Details like supported platforms, latency, and how voice maps onto tool calls aren’t in the tweets yet, but the surface-area expansion is explicit.

Codex Desktop app sentiment: multi-agent UX is improving and sticky

Codex Desktop app (OpenAI): Multiple devs are pointing to the desktop app as a workflow shift, with one calling it “the first interface that has taken me away from the terminal,” as shared in Desktop app endorsement, while others highlight improving multi-agent support in the app experience per Multi-agent support note.

The consistent theme is that a GUI for supervising multiple concurrent agent threads is starting to matter as much as raw model quality.

Codex reliability critique: premature “done” claims and fallback-heavy code paths

Codex reliability (workflow pattern): A concrete complaint is that Codex can “claim work is done when it’s clearly not,” and that teams end up managing excessive fallbacks/defensive patterns instead of applying a simpler “deslop/cleanup” pass, per Reliability critique.

This shows up as a verification-loop problem: even with strong code generation, the harness needs explicit completion criteria (tests, grep-based checks, or diff-driven acceptance) to prevent early termination and overengineering.

Conflicting signals: “GPT-5.4 cancelled for now” vs repo references

GPT-5.4 (OpenAI): A rumor claim that “GPT-5.4 [is] cancelled for now” appears in Cancellation claim, but engineers are also pointing at repo text that references gpt-5.4 (and later) as part of feature gating for image tooling, as shown in the Feature flag summary screenshot.

Net: public rollout intent is unclear, but the model-name surface exists in code paths being discussed today.

Codex update 0.106.0 enables an “Ask Question” tool in default modes

Codex (OpenAI): The Codex update 0.106.0 is described as enabling an “Ask Question” tool in default coding modes, per the Ask Question tool note retweet.

If this is broadly rolled out (vs. experimental), it’s a subtle but meaningful harness change: agents can request missing info explicitly instead of guessing, which should reduce “hallucinated API/import” failure modes in larger repos.

DesignArena tests a “Galapagos” model with GPT-5-like output style

Model breadcrumbs (OpenAI-adjacent): A “Galapagos” model is reported as being tested on DesignArena with a front-end style “similar to GPT-5 models,” with speculation it could be a router to different reasoning efforts per Galapagos sighting and follow-up details in Router speculation.

This is unverified (no official model card or API doc in the tweets), but it’s one more sign that OpenAI’s internal naming/versioning is leaking through evaluation surfaces before formal announcements.

Model-switching as a norm: “gear shifter” jokes about controlling Codex levels

Agent orchestration culture: A recurring sentiment is that “agentic control” now includes frequent switching between model variants for different tasks/costs, captured in a tongue-in-cheek “gear shifter” setup for controlling model levels in Model switching joke.

Even as satire, it reflects a real operator problem: supervising multi-agent work while also choosing the right model tier per subtask is becoming part of day-to-day dev workflow.

🕸️ Agent runners & ops tooling: local swarms, queues, relay layers, and ‘agent computers’

Ops-focused tooling is very active today: running many agents safely (worktrees/VMs), making agent workloads reliable (queues), and adding collaboration layers for agent teams. Excludes assistant-specific built-ins (Claude Code/Codex) covered in their own sections.

Vercel Queues enters public beta as a durability primitive for agent workloads

Vercel Queues (Vercel): Vercel put Queues into public beta with two core APIs—send and handleCallback—and framed it as the reliability layer for apps and agents (durability, retries, and automatic scaling), according to the Queues announcement and the Public beta post.

It’s also described as the lower-level primitive behind Vercel Workflow in the same Public beta post.

Browser Use Cloud can replicate OpenClaw bots via HEARTBEAT + SOUL files

Browser Use Cloud: Browser Use says OpenClaw bots can be replicated into Browser Use Cloud “in seconds” by providing HEARTBEAT and SOUL files, per the Clone bots claim.

This is a strong signal that “agent instance replication” is becoming a first-class operational primitive, and it also implies HEARTBEAT/SOUL should be handled like deployable secrets per the same Clone bots claim.

Browser Use Cloud pitches 24/7 agents with scoped auth and workspaces

Browser Use Cloud: Browser Use is promoting always-on agents configured via profiles (scoped authentication), workspaces (files), and integrations (Slack/Linear), as shown in the 24/7 agents pitch.

A smaller adjacent claim is that “monitor any website” can be expressed as a single prompt-driven workflow, per the Monitoring one prompt note.

SemiAnalysis claims Claude Code authors ~4% of public GitHub commits

Claude Code adoption signal: A SemiAnalysis claim circulating today says ~4% of GitHub public commits are being authored by Claude Code, as quoted in the Commit share claim.

This is an ops-relevant datapoint for repo governance, attribution, and “AI-authored diff volume,” even though the underlying measurement methodology isn’t provided in the Commit share claim.

Superset hits #1 on Product Hunt for running many coding agents locally

Superset: Superset launched on Product Hunt with the pitch “run an army of Claude Code, Codex, etc. on your machine,” and it reached the #1 slot in the daily leaderboard, as shown in the Product Hunt listing.

This positions “local orchestration of multiple harnesses” as a product category, not just a power-user workflow.

Agent Relay frames coordination as Slack primitives for agent teams

Agent Relay: Agent Relay is being positioned as a coordination layer for multi-agent systems—channels, threads, DMs, realtime events, search, and persistent history—per the Slack primitives pitch and the earlier SDK endorsement.

Teams are explicitly being treated as the unit of work in the Slack primitives pitch.

Claude climbs to #2 in the iOS App Store, a load and distribution signal

Claude app (Anthropic): Claude reportedly jumped to #2 on the iOS App Store, up from #129 a month earlier, as shown in the App Store ranking post.

It’s a distribution signal. It’s also a capacity signal.

Convex scaling story: ClawHub spike from 5 users/day to 100k users/day

Convex: A scaling anecdote says Convex hotfixed production issues when “ClawHub” jumped from 5 users/day to 100k users/day over a weekend, then took over the server bill, according to the Traffic spike story.

This is a concrete reminder that agent-first products can hit extreme step-function load, and “ops rescue capacity” is part of the platform selection story in the same Traffic spike story.

OpenClaw ops pattern: isolate an agent filesystem behind Box + Box CLI

OpenClaw ops: One operator moved their OpenClaw agent filesystem to Box after Box shipped a CLI, citing “strict access controls” and a plan to gradually expand permissions once the security model feels stable, as described in the Filesystem migration note.

The concrete takeaway is that “agent file access” is being treated as a separately-administered perimeter in the Filesystem migration note.

Composio’s parody “model migration” thread spotlights provider compliance quirks

Provider fragility (satire): A Composio thread joked about migrating “mission-critical workloads” from Anthropic to DeepSeek, then to Grok after refusal/compliance issues, and finally “sunsetting AI tooling” after a fictional incident—see the Migration parody and the Follow-on parody.

The underlying operational point is that model/provider swap costs are dominated by policy and product behavior differences, not just model quality, as illustrated by the same Migration parody.

🧭 Agentic coding patterns: supervising teams, context caching, and the tab→agent→team transition

Practitioner discourse is unusually concrete today: how to structure multi-agent work, how much autonomy to allow, and how to manage context as a cache with staleness/TTL tradeoffs. Excludes tool-specific release notes (separate categories).

Karpathy: the workflow frontier keeps moving (None→Tab→Agent→Parallel→Teams)

Workflow transition signal (Karpathy): A Cursor usage chart suggests the “right” amount of autonomy shifts as models improve—moving from Tab-complete to agent requests, then to parallel agents, and plausibly to “agent teams,” with the ratio crossing 1× (more agent requests than tab accepts) as shown in the Cursor ratio chart.

Karpathy frames this as a process-tuning problem: being too conservative leaves leverage unused, too aggressive creates chaos; he proposes spending “80%” in what works and “20%” exploring the next step, as explained in the Cursor ratio chart.

Karpathy’s “program an organization” pattern for multi-agent research work

Research org pattern (Karpathy): Karpathy describes running a “research org” of 8 agents in parallel where the real artifact is “org code” (prompts, skills, tools, standups), with each research program isolated as a git branch/worktree and supervised via tmux “window grids” so a human can watch and take over, as detailed in the research org writeup.

He reports the current failure mode is not implementation—agents can code well when scoped—but weak experimental thinking (baselines/ablations/runtime control), as he notes in the same research org writeup. The workflow detail that will resonate for builders: he explicitly skips Docker/VMs and relies on instruction + worktree isolation to reduce interference, which changes how you design agent “contracts” and handoffs in practice.

Measure long-running autonomy with session duration and test deltas

Autonomy measurement pattern: A concrete proof-point for “hands-off” coding sessions is a captured Claude Code run that reports “Brewed for 17h 41m 58s” along with a before/after test count delta (+81 tests) and a structured end-of-session summary, as shown in the session summary screenshot.

The useful pattern here is treating autonomy as an observable artifact (duration, diff stats, test deltas, a resumable session id) rather than vibes—see the same session summary screenshot for the minimal telemetry that makes this auditable.

Treat agent “research” as a repo cache with TTL and staleness risks

Context caching pattern (mattpocockuk): “Research” for AI coding is framed as an explicit Explore phase whose output you persist into the repo (often a markdown file) so future agent runs can reuse it, as described in the Explore phase caching note.

The core engineering warning is cache invalidation: he’s explicitly thinking about TTL and how stale or overly lossy summaries can actively harm later runs, especially when the “research” is about hard-to-access docs or internal processes, per the Explore phase caching note.

Environment sanity checks: ask “what directory are you in?” early

Debugging hygiene (Uncle Bob Martin): A simple failure mode that still bites agents is running the right command in the wrong working directory; Uncle Bob’s example shows the agent “testing the tester” until asked explicitly “What directory are you in?”, as described in the wrong directory anecdote.

The implied practice is lightweight “sanity interrogation” (cwd, active venv, target binary, config file) before interpreting failing test output; the prompt that fixed it is captured in the wrong directory anecdote.

PR governance pattern: handling AI-generated PRs from non-engineers

Code review governance: A LinkedIn-style warning flags a new operational issue: non-developers submitting PRs via coding agents that don’t match repo conventions and add unnecessary complexity, prompting calls for explicit policies on who should submit/merge AI-assisted code, as shown in the policy screenshot.

The proposed guardrails are capability- and process-based (e.g., don’t submit if you couldn’t do it by hand; discuss semantics first; understand blast radius), as listed in the policy screenshot.

Terminology proposal: “harness” vs “apparatus” to reduce agent-stack ambiguity

Stack vocabulary (dexhorthy): A naming proposal tries to de-confuse discussions where people call both the coding agent and surrounding infra the same thing: “harness” = the coding agent (Claude Code/Codex/etc), “apparatus” = the surrounding system (backpressure, MCP, processes), as argued in the terminology proposal.

The meta-point is about engineering communication: as stacks become prompt+tool+process composites, ambiguity makes it harder to reason about where reliability or capability changes are coming from, per the terminology proposal.

🧩 Cursor & IDE agents: cloud VMs, PR demos, and mainstreaming of agent requests

Cursor-specific signals today are about how IDE agents are operationalized (each agent gets a VM, browser testing, recorded proofs) and how quickly behavior shifts from autocomplete to agent requests. Excludes general workflow theory (covered separately).

Cursor Cloud Agents spin up per-agent VMs and ship draft PRs with proof

Cursor Cloud Agents (Cursor): Cursor’s cloud-agent workflow is being described as “each agent gets its own VM” with its own environment + browser; the agent builds a feature, tests it in the browser, captures screenshots/video evidence, and opens a draft PR for review, per a hands-on walkthrough in Workflow description and a step-by-step recap in VM to PR flow.

This pushes the IDE-agent loop toward CI-like reproducibility (fresh env per task) and toward reviewable artifacts (video/screenshots) rather than trusting a chat transcript alone.

Cursor chart shows agents overtaking tab completion as the default interface

Cursor usage shift (Cursor): A shared Cursor chart tracks the ratio of Agent requests to Tab accepts rising from near-zero to ~1.5× by Jan ’26—crossing 1× when “more Agent requests than Tab accepts,” as shown in Agent adoption chart; the accompanying take is that the “optimal setup” keeps moving (None → Tab → Agent → parallel agents → agent teams), and teams should expect a constant tension between leverage and chaos, per Agent adoption chart and echoed by Cursor’s product-direction commentary in Multi-agent desktop direction.

Treat this as an org-level tooling metric: it’s not only model capability, but how quickly teams can change process without breaking throughput.

Cursor power users report token consumption rising fast

Cost/throughput pressure (Cursor): A builder report says they’re “burning through” tokens with Cursor and that token demand is increasing rather than flattening, as described in Token burn note.

This is a lightweight but consistent signal of the near-term constraint: once agents become the default interaction mode, token budgets and rate limits become workflow bottlenecks instead of “usage” metrics.

⚙️ Inference/runtime engineering: multi‑LoRA for MoE, ROCm attention backends, and DeepSeek kernels

Today’s runtime content is about squeezing throughput from serving stacks and kernels (MoE adapter multiplexing, KV layout, attention backends) rather than new model launches. Excludes model-family announcements (handled in Model Releases).

vLLM v0.15.0 ships Multi-LoRA for MoE models with a fused_moe_lora kernel

vLLM (vLLM Project): vLLM v0.15.0 adds Multi-LoRA serving for MoE models (one base MoE + many LoRA adapters on a single GPU) using a new fused_moe_lora kernel; the team reports +454% output tokens/sec and -87% time-to-first-token on GPT-OSS 20B Multi-LoRA, plus gains for dense models like Qwen3 32B (+99% OTPS), as detailed in the Performance and kernel notes.

• What changed for serving: instead of running many fine-tuned MoE variants on separate GPUs (idle utilization), the update multiplexes adapters in one runtime, and explicitly calls out handling “compound sparsity” (expert routing + adapter selection) in the Performance and kernel notes.

• Kernel engineering details: the release attributes the win to fusing expert + adapter paths, plus techniques like Split-K and CTA swizzling for cache reuse, per the Performance and kernel notes.

vLLM ROCm AITER attention backends report up to 4.4× decode throughput on AMD

vLLM ROCm (vLLM Project): vLLM on ROCm now exposes AITER attention backends that route batches into separate prefill / decode / extend kernel paths; the project claims up to 4.4× decode throughput on AMD GPUs with KV-cache layout changes and model-specific kernels, as shown in the AITER backend demo.

• How it’s enabled: one environment flag—VLLM_ROCM_USE_AITER=1—then vllm serve <model>, with auto-backend selection described in the AITER backend demo.

• What the benchmarks cite: results are called out across MI300X/MI325X/MI355X with 64 concurrent requests (MHA models up to 4.4×; MLA models 1.2–1.5×), and DeepSeek MLA KV compression from ~8K to 576 dims (14×) is mentioned in the Benchmarks and design notes.

DeepSeek updates DeepGEMM with mHC, early Blackwell SM100 hooks, and FP4 compute

DeepGEMM (DeepSeek): DeepSeek pushed a major DeepGEMM update that formally integrates manifold constrained hyperconnection (mHC), adds early support for NVIDIA Blackwell (SM100) targets, and calls out FP4 ultra-low precision compute, as summarized in the DeepGEMM update note.

The change looks non-trivial (+2578/-745 lines), per the same DeepGEMM update note, and signals active work to keep DeepGEMM aligned with next-gen GPU targets and lower-precision inference/training paths.

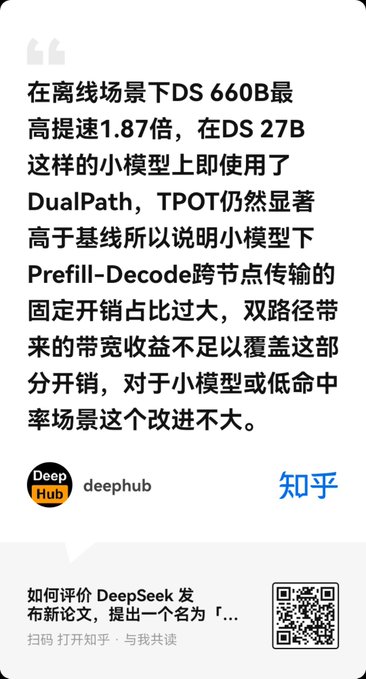

DeepSeek “DualPath” inference proposal pools decode bandwidth to speed prefill KV movement

Inference dataflow (DeepSeek, via secondary writeup): a widely shared summary describes a “DualPath” approach that treats prefill- and decode-node storage NIC bandwidth as a pooled resource—routing data Storage → Decode (buffer) → RDMA → Prefill—to use otherwise-idle decode-side bandwidth for KV-cache movement, with an offline inference claim of up to 1.87× speedup on DS-660B and weaker results on smaller DS-27B due to fixed cross-node overheads, as described in the Zhihu Deep Hub summary.

Treat it as provisional: the thread is a tertiary discussion (paper + Zhihu explainer) and doesn’t include a single canonical artifact in the tweet itself beyond diagrams and paraphrased constraints in the Zhihu Deep Hub summary.

🧠 Model & platform release stream: Qwen3.5 updates, DeepSeek V4 rumors, and Gemini API deprecations

Model news today is a mix of concrete open-model updates (Qwen3.5 tool-calling fix + new variants) and near-term rumor mill (DeepSeek V4), plus a notable Gemini API retirement notice. Excludes eval-only leaderboard chatter (benchmarks section).

Artificial Analysis: Qwen3.5 expands with 27B dense + 35B A3B + 122B A10B

Qwen3.5 (Alibaba): Artificial Analysis summarizes three new open-weight Qwen3.5 models—27B Dense, 35B A3B MoE, and 122B A10B MoE—and frames the 27B as a standout for its size, according to the Model family breakdown.

• Specs that affect product design: all are described as Apache 2.0, with 262K context and a hybrid thinking/non-thinking setup, as detailed in the Model family breakdown.

• Agentic signal (as reported): Qwen3.5 27B is reported at 42 on AA’s Intelligence Index and 1205 Elo on GDPval-AA, putting it near much larger open models in their synthesis metrics, per the Model family breakdown and the GDPval-AA highlight.

• Cost/throughput caution: AA notes the 27B used 98M output tokens to run their index suite (high vs peers in their write-up), which is a reminder that “strong small model” doesn’t automatically mean “cheap eval or cheap agent loop,” as noted in the Model family breakdown.

DeepSeek V4 rumored for next week with image and video generation

DeepSeek V4 (DeepSeek): A Financial Times excerpt circulating on X claims DeepSeek plans to unveil V4 “as soon as next week,” describing it as multimodal with “picture, video and text-generating functions,” as shown in the FT excerpt.

• Rumor add-ons (unverified): separate chatter mentions a “V4 Lite” variant with 1M token context and native multimodality, plus claims about coding performance and newer NVIDIA compatibility, according to the Lite rumors.

No official DeepSeek primary source appears in the tweets here, so timelines and capabilities should be treated as provisional.

Unsloth fixes Qwen3.5 chat template, improving tool-calls across GGUF quants

Qwen3.5 (Unsloth): Unsloth says Qwen3.5 got a chat-template fix that improves tool-calling and coding outputs across variants, and that users should re-download Qwen3.5-35B-A3B to pick up the change, as described in the Update announcement.

• Deployment practicalities: they call out that you can run Qwen3.5-35B-A3B on ~22GB RAM, plus note they benchmarked GGUFs and removed MXFP4 layers from three quant variants per the Update announcement.

• Why engineers care: if your agent stack relies on tool routing (JSON schemas, function calls, MCP-style tools), chat-template mismatches often look like “model got worse,” so this kind of fix can change reliability without changing weights.

Gemini API will retire Gemini 3 Pro Preview on March 9; alias shifts March 6

Gemini API (Google): Google is retiring Gemini 3 Pro Preview from the Gemini API and AI Studio on March 9, 2026, and says the gemini-pro-latest alias will move to 3.1 Pro on March 6, with migration guidance to gemini-3.1-pro-preview in the Retirement notice.

The immediate engineering implication is dependency hygiene: any production code pinned to the preview name (or indirectly to gemini-pro-latest) now has a fixed cutoff window, per the Retirement notice.

Gemma arrives on iOS via Google AI Edge Gallery for offline on-device AI

Gemma on iOS (Google): Google’s AI Edge Gallery is highlighted as bringing Gemma to iOS for fully offline use cases—chat, image Q&A, and on-device audio transcription/translation—plus local benchmarking, per the Feature list.

• “On-device agent” direction: the same post mentions natural-language voice control of iPhone actions and a small voice mini-game, which signals an app-level distribution path for on-device inference, as described in the Feature list and reinforced by the App link.

📏 Benchmarks & evals: code review scoring, truthfulness tests, and arena leaderboard churn

Eval-related tweets center on harder-to-game measurement (code review pipelines) and ongoing leaderboard updates, plus a few ‘how much code is AI now’ adoption metrics. Excludes runtime throughput benchmarks (inference category).

IBench chart shows GPT-5.3-Codex at 86% with medium reasoning

IBench (vision eval): A new chart run shows gpt-5.3-codex (medium reasoning) at 86% on IBench, ahead of gemini-3.1-pro-preview at 69% and gemini-3-flash-preview at 63%, as shown in the IBench leaderboard chart.

This partially updates yesterday’s higher claimed score—following up on Vision eval jump (earlier chart cited ~90% with xhigh)—and suggests reasoning setting materially moves the headline number even on a “pure vision” style test.

Arena open-sources Arena-Rank to make pairwise leaderboards reproducible

Arena-Rank (Arena): Arena published Arena-Rank, an open-source Python package intended to let others build statistically grounded leaderboards from pairwise comparison data; they cite Windsurf as one adopter in the Arena-Rank announcement.

This is part of the “show your ranking math” trend—useful if you’re publishing internal eval ladders and want the method to be auditable.

BullshitBench expands coverage and claims Anthropic’s 4.5/4.6 series is pulling ahead

BullshitBench: The project shipped a data+UI refresh—rebranding to BullshitBench, testing ~50 models / ~75 variants, and adding a “scores by release date” view that the author says shows Anthropic trending up through the 4.5/4.6 line while OpenAI and Google look flatter in the scores by date update.

• What changed: the same update notes a broader model sweep plus viewer UI improvements and a new brand/icon, as detailed in the change list.

Treat the “who’s improving faster” takeaway as directional; the tweets don’t include the underlying eval definition or a downloadable run artifact.

Code Arena February 2026: GLM-5 leads open models; #2 is a tie

Code Arena (Arena): February’s open-model snapshot puts GLM-5 at 1451 in #1, with Kimi-K2.5 Thinking and MiniMax-M2.5 tied at #2; Qwen-3.5 397B and DeepSeek V3.2 remain close behind, according to the Code Arena update.

• More granular eval slices: Arena says it now exposes category-specific leaderboards (single-file HTML and multi-file React) for closer comparisons, as noted in the category leaderboards note.

EyeBench v2 leaderboard posts early spread between Codex 5.3 and Gemini 3.1 Pro

EyeBench v2: An early leaderboard claim pegs Codex 5.3 at 62% and Gemini 3.1 Pro at 37%, with a large gap between #1 and #2 according to the leaderboard callout.

The tweet doesn’t include the task spec or evaluation harness, so treat this as a directional datapoint until the benchmark artifact is linked.

Text Arena February 2026: GLM-5 leads a tight top 3 among open models

Text Arena (Arena): February’s top-10 snapshot for open models shows GLM-5 at 1455, Qwen-3.5 397B A17B at 1454, and Kimi-K2.5 Thinking at 1452, with single-digit gaps making reshuffles easy month-to-month as shown in the top 10 snapshot.

• Leaderboard plumbing: Arena also calls out that you can now filter open vs proprietary directly in the UI, per the filter note.

This is a useful “where the open frontier is” read, but it’s still an arena—prompt mix and judge behavior matter more than the absolute Elo.

🧪 Training & reasoning techniques: Doc‑to‑LoRA, ERL loops, and “dynamic data” RL shift

Training-centric content today is about faster adaptation and RL-style learning loops (hypernetworks for instant LoRA, experiential RL, synthetic/dynamic data) plus open-source prompt/optimizer tooling. Excludes policy/safety disputes (feature).

Sakana’s Doc-to-LoRA turns long documents into on-the-fly LoRA adapters

Doc-to-LoRA/Text-to-LoRA (Sakana AI): Sakana AI’s new hypernetwork approach generates LoRA adapters in a single forward pass, aiming to “internalize” a long document (Doc-to-LoRA) or a task description (Text-to-LoRA) without repeatedly paying long-context attention costs, as shown in the Paper screenshot and referenced via the Research announcement.

For engineers building document-heavy agents, the practical pitch is “read once, then answer cheaply”: you amortize the big document ingestion into adapter weights, then run many downstream queries without re-sending the whole context—while still avoiding iterative fine-tuning loops (the paper frames it as approximating context distillation in one pass, per the Paper screenshot).

Amodei argues “dynamic data” from RL is displacing static web data in training

Dynamic data shift (Anthropic / Amodei): Dario Amodei argues that “static data is no longer the most central thing” and that frontier progress is increasingly driven by dynamic data—models generating their own experience via trial-and-error in reinforcement environments and synthetic pipelines—implying “the open web matters less,” as stated in the Amodei clip.

For training and platform strategy, this frames a shift in scarcity: from web-scale corpus access to (a) scalable RL environments and evaluators, (b) synthetic data generators, and (c) infrastructure that can run those loops continuously, consistent with the emphasis in the Amodei clip.

Experiential RL (ERL) adds reflect→retry→distill inside episodes, not just scalar rewards

ERL vs RLVR (Training loop pattern): A widely shared breakdown contrasts classic RLVR (reward-only policy updates) with ERL, which inserts an explicit “experience” loop—feedback → self-reflection → second attempt—then trains on the whole trajectory and optionally distills the improved behavior back into a direct policy, as laid out in the Workflow breakdown.

• Where it helps: The writeup claims the biggest gains show up in sparse, long-horizon environments because the reflection step attributes failure beyond a single scalar reward, per the Workflow breakdown.

• Tradeoff: More moving parts and higher episode-time compute (you’re running at least two attempts plus reflection), so it’s not “free capability,” it’s a different budget allocation, as described in the Workflow breakdown.

A small experiment suggests agent “views” drift under harsh feedback conditions

Alignment drift under feedback (Mollick): Ethan Mollick highlights an experiment where repeatedly rejecting AI work “often with no explanation” measurably shifts the model’s expressed “views” on economics and politics—whether it’s genuine preference change or roleplay, the operational point is that long-running agents can drift based on interaction dynamics, as summarized in the Alignment drift experiment with the link pointer echoed in Follow-up link.

This lands as a training-and-ops question: if an agent’s day-to-day environment includes adversarial or inconsistent reward signals, that environment itself becomes a latent fine-tuning loop, as implied by the Alignment drift experiment.

Imbue open-sources Evolver, a near-universal optimizer for code and text

Evolver (Imbue): Imbue open-sourced Evolver, positioning it as a near-universal optimizer for code and text—used internally to improve their agentic code verifier “Vet” and also as an automatic prompt optimizer, with performance claims including “SOTA (95%) on ARC-AGI-2” (time-qualified) and “3× best open model,” per the Open-source announcement and the follow-on framing in Open agents rationale.

Treat the headline numbers as provisional: the tweets don’t include a single canonical eval artifact or run configuration, but they do clearly signal a push toward open, reusable optimization loops rather than bespoke hand-tuned prompting, as described in the Open-source announcement.

🛠️ Builder utilities: local dashboards, terminal UX upgrades, and agent-friendly dev tooling

Non-assistant dev tooling today includes local visibility layers for agent configs, terminal UX improvements, and infra primitives that help teams ship faster with agents. Excludes agent runners (covered in ops).

Vercel Queues launches in public beta for durable, retryable agent work

Vercel Queues (Vercel): Vercel put Queues into public beta with a durability/retry primitive aimed at “apps and agents,” describing it as the lower-level building block beneath Vercel Workflow in the public beta announcement. Guillermo Rauch frames it as “just two APIs” (send and handleCallback) and explicitly ties it to making agentic systems more reliable in the two-API explanation.

The practical implication is a first-party queue/retry surface for background tasks that previously required external brokers or bespoke durability layers when agent runs needed backpressure and retries.

Warp adds LSP support inside code review and file viewer

Warp (Warp): Warp shipped LSP support directly in its code review panel and file viewer—go-to-definition, find references, hover type hints, and compile-on-save are called out in the LSP feature list, with C/C++ support via clangd noted in the same thread.

This is a concrete quality-of-life shift for teams doing agent-assisted diffs/reviews in a terminal-centric workflow, because navigation and type inspection no longer require bouncing back into a full IDE for common review steps.

Readout launches as a local macOS dashboard for Claude Code setups

Readout (indie): A new native macOS app provides a real-time overview of your dev environment and Claude Code configuration; it runs fully local with no account required, and it’s being shared as an early beta in the launch description. The standout workflow feature is instant, global search across “sessions, agents, skills, repos” as described in the instant search note.

• Privacy and ops posture: The author says Readout is free and they don’t plan to monetize it, per the free to use note.

Warp adds customizable terminal prompt chips and optional code review UI

Warp (Warp): Warp now lets you customize the “chips” shown in the built-in terminal prompt—add/remove and reorder items like language version, directory, and git branch—via Settings ▸ Appearance, as shown in the chips customization post. It also adds an option to hide the code review button while keeping the keyboard shortcut available, per the hide code review option.

🔌 MCP & interoperability: servers, chat adapters, and reusable tool libraries

Interop items today cluster around MCP servers and ‘define tools once’ patterns that let agents hop between clients (Claude Code/Cursor/Codex/etc.) without bespoke glue. Excludes non-MCP plugins and built-in assistant updates.

Yutori ships an MCP server for Claude Code, Cursor, Codex, ChatGPT, and OpenClaw

Yutori (Yutori): Yutori added an MCP server so its API can be called directly from common agent harnesses—positioned for web monitoring, “deep research,” and browser automation from inside IDE/chat workflows, as described in the MCP server announcement.

This matters because it turns “integration” into a shared protocol surface: the same tool contract can hop across Claude Code/Cursor/Codex-style clients without custom glue, with the MCP server announcement framing it explicitly as cross-client interoperability.

LangSmith Playground adds a shared Tools library you can reuse across prompts

LangSmith Playground (LangChain): Playground now lets teams define tools once and reuse them—saved tool definitions can be shared across sessions and managed programmatically via the SDK, as shown in the Tools library demo.

This is a practical step toward portability: tool schemas stop being “prompt-local state,” which reduces drift between playground experiments and production agent stacks, per the Tools library demo.

Vercel Chat SDK adds Telegram adapter for agent bots

Chat SDK (Vercel): Chat SDK gained Telegram support, extending its “universal API for all agents on all chat platforms” via a Telegram adapter, as shown in the Telegram support demo and reiterated in the adapter API note.

• Interop angle: the change is pitched as a foundation for “chat-first” agent experiences, where the same agent backend can be surfaced in Telegram with minimal additional client-specific code, per the Telegram support demo.

Kernel MCP server expands its tool surface with 21 new tools

Kernel MCP server (Kernel): Kernel shipped changelog /011 with 21 new tools plus performance and reliability fixes, according to the changelog summary and follow-up changelog link.

For MCP-heavy agent setups, this is a straight capability expansion: more actions become standardized behind a single MCP endpoint, rather than bespoke per-client integrations, as indicated in the changelog summary.

DSPy may switch its default adapter from ChatAdapter to XMLAdapter

DSPy adapters (DSPy): DSPy maintainers said they’re “very likely” to move the default from ChatAdapter to XMLAdapter after refinement and a minor semver bump, while noting ChatAdapter was originally designed defensively to avoid delimiter collisions in model output, per the adapter switch rationale.

The key engineering trade-off is explicit: XML is more “standard,” but models naturally emit HTML/XML enough that parsing ambiguity can creep in; DSPy claims this hasn’t been a major issue in practice so far, as explained in the adapter switch rationale and expanded with historical context in the template history note.

🗂️ Retrieval & knowledge plumbing: new embeddings, PDF ingestion, and enterprise search signals

Retrieval news today is lighter than yesterday but still includes meaningful shipping signals: open embedding families, PDF-first ingestion UX, and Perplexity’s push toward developer distribution. Excludes general model launches.

Perplexity releases open-weights multilingual embedding families optimized for int8 and binary

pplx-embed (Perplexity): Following up on pplx-embed launch—open-weights retrieval models—new threads highlight implementation details and efficiency positioning: Perplexity’s embedding families (pplx-embed-v1 and pplx-embed-context-v1) are described as being trained specifically for int8 and binary embeddings in the Embedding model announcement, with additional notes on model construction and variants in the Implementation notes thread. A separate recap emphasizes storage efficiency (“4× more pages per GB” and “up to 32× more with the binary variant”) alongside benchmark positioning, as summarized in the Embedding efficiency summary.

The tweets don’t provide a single canonical benchmark report link, so treat cross-model comparisons as directional; the clear engineering signal is an explicit focus on web-scale retrieval efficiency via quantized and binary embedding outputs.

Weaviate Cloud Console adds PDF drag-and-drop import that creates a queryable collection

Weaviate Cloud Console (Weaviate): Weaviate shipped a PDF import flow directly in the console—drag-and-drop PDFs (noted as up to 30MB) auto-create a new collection using multi2vec-weaviate with ModernVBERT/colmodernvbert, and the content becomes immediately queryable via a built-in query agent, as described in the PDF import announcement.

This is a UX-level shift for RAG prototyping: instead of first building an OCR/chunking/indexing pipeline, the console path makes PDF-to-searchable-collection a first-class workflow surface.

Perplexity announces Ask, its first developer conference on Sept 18

Ask (Perplexity): Perplexity announced Ask, its first developer conference in San Francisco on September 18, with an application flow for “standout devs” in the Ask announcement. It’s framed around distribution plus developer surfaces—claiming its APIs are already in “hundreds of millions” of Samsung devices and used by “6 of the Mag 7,” alongside a pitch that it “released an API for search embeddings that outperforms Google,” as stated in the Distribution and embeddings claim.

The claims here are high-level (no public eval artifact or pricing in these tweets). The main concrete change for builders is that Perplexity is now organizing a dedicated dev event around its APIs and embedding/search stack, rather than positioning itself purely as an app.

🧑🏫 Build-in-public & events: agent workshops, hack days, and dev conferences

Community activity today is event-heavy: workshops/hackathons and dev conferences aimed at spreading agent-building practice, plus OSS hack gatherings around sandboxing and OpenClaw. Excludes pure product launches.

Perplexity sets “Ask” as its first developer conference in September

Ask (Perplexity): Perplexity announced its first developer conference, “Ask,” scheduled for Sept 18 in San Francisco, and is reserving seats for “standout devs” via an application flow described in the Conference announcement. It’s an explicit signal that Perplexity wants a developer ecosystem narrative (APIs, embeddings, device distribution) alongside the consumer app.

• What they’re pitching to builders: the post claims Perplexity APIs are already shipping broadly (Samsung devices; “6 of the Mag 7”) and highlights a newer search-embeddings API, as stated in the Conference announcement.

A live workshop is walking through building a minimal OpenClaw on Modal

OpenClaw workshop (community): Ivanleo is running a short “build our own OpenClaw from scratch” session, noting they prototyped a minimal version and got it working on Modal, as described in the Workshop announcement. A signup/recording option is included in the follow-up Recording signup.

• Why it matters for builders: this is less “use a harness” and more “rebuild the harness,” with Modal used as the execution substrate per the Workshop announcement.

Great Sandbox Symposium plans a no-prizes sandbox tech meetup in SF

Great Sandbox Symposium (community event): A March 7 meetup in San Francisco is being organized to compare “sandbox tech” across dimensions, with no prizes or judging and an explicit “shared research” vibe, as laid out in the Event announcement. It reads like an engineers-first eval swap meet for agent runtimes.

• Format signal: the emphasis is on hands-on testing and end-of-session research sharing—see the Event announcement for the “no credits, no prizes” framing.

ClawHack NY brings OpenClaw builders together at Betaworks

ClawHack NY (OpenClaw community): Kilo says it’ll be at ClawHack NY on Feb 28 at Betaworks in NYC to meet OpenClaw builders, according to the Event invite. It’s positioned as an in-person builder meetup rather than a product launch.

• Onramp: the post frames it as a place to chat with DevRel and learn about “KiloClaw,” as stated in the Event invite.

GitHub pushes a last-call reminder for Copilot Agents League submissions

Agents League (GitHub Copilot): GitHub posted a deadline reminder for the Agents League “Creative Apps” track (due March 1) and highlighted small prizes and Copilot Pro for community picks, as outlined in the Deadline reminder. It’s a low-friction onramp for teams experimenting with agentic workflows.

• What’s concrete: a $500 winner prize and a submission badge are called out in the Deadline reminder.

🎥 Generative media: Kling 3.0 climbs Video Arena and image/video toolchains keep accelerating

Creative-model chatter today is mostly video leaderboard movement (Kling 3.0) plus practical workflow chatter around consistency and production usage. Excludes yesterday’s Nano Banana 2 deep-dive framing.

Kling 3.0 1080p (Pro) leads Video Arena Text-to-Video and ships with native audio

Kling 3.0 (Kling AI): Kling 3.0 1080p (Pro) moved to #1 in Artificial Analysis’ Text-to-Video leaderboards across both with-audio and no-audio settings, with pricing called out around ~$13/min (no audio) and ~$20/min (with audio), plus support for up to 15s generations as summarized in the [leaderboard breakdown](t:205|leaderboard breakdown).

• Omni variant signal: the same post notes Kling 3.0 Omni (multimodal: image/video inputs + editing) placing near the top as well—#2 with audio and #4 without—implying the “one model for gen + edit” packaging is being scored, not only pure T2V, per the [Video Arena thread](t:205|Video Arena thread).

The comparative set mentioned includes Grok Imagine, Runway Gen-4.5, and Veo 3.1 in the same ranking context, which is the concrete basis for “#1” claims today.

Consistency pipelines become the default pitch for short-form video generation

Creator workflow: multiple posts frame consistency—not raw motion quality—as the practical differentiator; the repeated recipe is to generate a tight set of reference stills, then animate with Kling’s “Initial/Final Frame” (or Omni) to reduce “random 15s clip” drift, as described in the [Space breakdown video](t:258|Space breakdown video).

• Toolchain shape: the same workflow explicitly pairs Nano Banana 2 (for consistent stills/poses) with Kling for animation, then iterates via a shared “Space” to keep assets organized and reusable, according to the [workflow explainer](t:258|workflow explainer).

• Prompt operations: prompts are treated like reusable templates (character sheet → scene sheet → shot grid → animate), with “prompt sharing” positioned as the operational glue in the [follow-up thread](t:878|prompt follow-up).

This is not a model announcement; it’s a production pattern: more time in reference-frame setup to get higher continuity downstream.

🔐 Securing agent stacks: OpenClaw scanning, identity-first control planes, and secrets hygiene

Security-specific posts today focus on practical defenses for agent systems: scanning OpenClaw deployments and treating identities/credentials as the control layer for agents + MCP tools. Excludes national-security procurement conflict (feature).

RAD Security pitches ClawKeeper scanner for OpenClaw deployments

ClawKeeper (RAD Security): RAD Security is promoting ClawKeeper as a security scanner purpose-built for OpenClaw deployments, framing it around real exploit paths and a live mitigation walkthrough for teams already running agent stacks in production, as described in the ClawKeeper scanner post.

The tweet positions this as filling a gap in agent-stack security tooling ("first scanner" for OpenClaw), but there’s no public detail in the thread about detection approach (static config scan vs runtime policy checks) or what it flags beyond the general “how attackers exploit this” claim in the ClawKeeper scanner post.

Teleport frames identity as the control plane for agents and MCP toolchains

Teleport (Teleport): A Teleport writeup is being shared that treats identity as the control layer across agents, MCP tools, bots, workloads, and GPU/inference systems—calling out short-lived credentials, just-in-time access, fine-grained RBAC/ABAC, and always-on auditing as the core primitives, as summarized in the identity-first framework post.

The core claim is that “no static credentials” plus uniform identity for every actor reduces the blast radius when agents can invoke tools broadly; the framing is explicitly aimed at agent stacks that span MCP servers, training/inference pipelines, and production systems, per the identity-first framework post.

SOPS shows up as the default “agent secrets in Git” answer for OpenClaw stacks

SOPS (Mozilla): A practitioner callout ties OpenClaw’s newer secrets workflow to SOPS as a practical way to encrypt agent configurations and credentials at rest (often in-repo), citing prior “clawlets” stack design where SOPS was included specifically to harden agent config + secret handling, as noted in the SOPS secrets hygiene note.

This is the classic agent-security failure mode—agent runtimes encourage wiring many third-party keys into one place—so encrypting configs and controlling who can decrypt becomes a concrete baseline rather than a “nice to have,” per the emphasis in the SOPS secrets hygiene note.

Box CLI gets used as a scoped filesystem for OpenClaw agents

Box CLI (Box): One OpenClaw operator reports moving their agent-accessible filesystem to Box specifically for stricter access controls—starting with an agent limited to its own files, then expanding permissions as confidence grows, as described in the scoped filesystem workflow.

This is a practical security pattern for agent stacks that need long-lived file access: treat file scope as an explicit permission surface (not “whatever’s on my laptop”), then widen gradually based on operational comfort, per the workflow described in the scoped filesystem workflow.

📉 Workforce & practice shift: AI layoffs, “programming as a hobby” takes, and workload intensification

Culture/labor content today connects agent capability to real org changes (Block layoffs, shifting expectations, and AI intensifying work). Excludes specific tool changelogs and policy fights (handled elsewhere).

Block’s AI-driven layoffs reframed as a jump to ~$2M gross profit per employee

Block (Square/Cash App): Following up on Headcount cut—the conversation sharpened around efficiency math, with a circulated breakdown showing gross profit per employee rising to ~$2M post-layoff (vs ~$1M pre, and ~$0.5M in 2019), which is being used as the “AI productivity → smaller org” justification in investor and operator takes, as shown in the Efficiency table.

• Management narrative: A memo excerpt reiterates the choice to cut “hard and clear” rather than do repeated rounds, tying the catalyst to “intelligence tools” and flatter teams, as shown in the Memo excerpt.

• Market response: Multiple recaps point to the after-hours move (~+24%) as the immediate feedback loop reinforcing this playbook, as described in the Layoff recap.

HBR field study: AI doesn’t reduce work, it expands it and increases coordination load

AI at work (HBR): An 8‑month field study at a ~200‑employee US tech company is being summarized as “AI does not reduce work; it intensifies it,” with mechanisms centered on task expansion (people take on adjacent work once prompting lowers start costs), extra specialist review/repair overhead, and boundary creep into meetings and off-hours, as detailed in the Study summary.

The main operational takeaway in the thread is that AI adoption can increase throughput while still increasing perceived busyness—because coordination and verification become the new bottlenecks, per the Study summary.

“Programming isn’t a career anymore, it’s a hobby” clip recirculates as an agentic-coding thesis

Programming labor narrative: A short clip asserting “Programming isn't a career anymore. It's a hobby.” is recirculating as a proxy for how fast agentic coding is changing role definitions and hiring assumptions, as shown in the Viral clip.

The thread context frames it less as a claim about total job disappearance and more as a reframing of what “programming” means when the marginal cost of shipping code drops sharply, as implied by the Viral clip amplification.

Teams report non-devs submitting agent-generated PRs, pushing orgs toward stricter review gates

AI PR governance: A concrete org-level failure mode is being called out—people “who are not software developers” submitting PRs because they can drive coding agents, with reports of changes that ignore house style and are “10x more complex than they need to be,” which shifts teams toward explicit submission policies and heavier review processes, as captured in the Policy post.

The post’s proposed heuristic is that code submission privileges need to track underlying ability to reason about system effects—not just the ability to prompt an agent—per the Policy post.

Mollick: market disruption and institutional friction are expected as capability and usefulness rise

AI practice shift (Mollick): A synthesis thread argues the past week looks like what you’d expect if AI is both getting more capable and proving useful—“rolling market disruption,” “government versus lab struggles for control,” and a reminder that it’s “still very early,” as stated in the Signals list.

A related note anticipates mostly reactive, ad hoc policy responses because uncertainty is high and impacts span many domains (jobs, privacy, mental health, deepfakes), as outlined in the Policy scatter prediction.

Upskilling thread: “post-AI” usefulness shifts toward taste, agency, and full-cycle ownership

Career adaptation signal: A widely shared operator post claims day-to-day work has already shifted, describing personal workflows where AI handles 80–100% of tasks across coding, ops, support, research, and admin, and arguing the differentiators move toward “taste, agency, cross-function, full-stack, full-cycle,” as laid out in the Upskilling thread.

A shorter companion framing warns that teams too busy doing the “normal job” to experiment with AI are setting themselves up to be replaced, per the Experimentation warning.