ARC-AGI-3 leaderboard opens at 0.37% – $2M prize fuels backlash

Stay in the loop

Free daily newsletter & Telegram daily report

Executive Summary

ARC Prize Foundation published ARC-AGI-3 results, and the headline is how little moves the needle: frontier models sit at 0.00%–0.37% (Gemini 3.1 Pro Preview 0.37%; GPT-5.4 High 0.26%; Opus 4.6 Max 0.25%) while reported eval runs cost in the low‑thousands to high‑thousands of dollars; the benchmark pitches “sample‑efficient interactive learning,” but release-day interpretation is dominated by near-zero scores and cost-per-progress.

• Scoring/measurement fight: leaderboard reports “no harness”; builders argue harnessed agent systems should be reported too; efficiency is squared with a 1.0 clamp, so you can match but not exceed the human baseline; baseline itself is contested (second-best first-run human by action count; failed attempts handling debated).

• DAB (Data Agent Benchmark): Berkeley EPIC/PromptQL ship 54 queries across 12 datasets/4 DBMS; best model hits 38% pass@1; explicitly tests cross-DB joins plus tool use.

Forecasts already diverge—“months” to saturate vs a late‑2026/2027 step-change—suggesting ARC-AGI-3 may behave like prior evals once a workable approach lands, but no independent reproduction yet beyond leaderboard snapshots.

Top links today

- Expect agent browser QA tool

- Expect open-source CLI and agent skill

- How Claude Code auto mode works

- Claude work tools on mobile

- OpenClaw runtime release notes

- G0DM0D3 jailbreak interface codebase

- G0DM0D3 public jailbreak chat UI

- Hyperagents self-referential agent paper

- Cursor cloud agents on your infrastructure

- Feynman research agent open-source repo

- Unsloth Studio and llama.cpp setup

- Lyria 3 Pro in Gemini API docs

- Google AI Studio music generation experience

- Vercel AI Gateway reporting API

- Agent observability guide for production

Feature Spotlight

ARC-AGI-3 lands: <1% scores, high costs, and scoring-method backlash

ARC-AGI-3 scores drop (<1% for frontier models) and immediately reshape the “agents are here” narrative—because the benchmark penalizes inefficiency hard and bans harnesses, forcing teams to confront real exploration/learning gaps (and big eval costs).

Today’s highest-volume benchmark story: ARC-AGI-3 results go live with frontier models clustered around ~0.2–0.37% and multi‑$K eval costs, prompting intense debate about “no harness,” efficiency-squared scoring, and what the numbers really mean.

Jump to ARC-AGI-3 lands: <1% scores, high costs, and scoring-method backlash topicsTable of Contents

🧪 ARC-AGI-3 lands: <1% scores, high costs, and scoring-method backlash

Today’s highest-volume benchmark story: ARC-AGI-3 results go live with frontier models clustered around ~0.2–0.37% and multi‑$K eval costs, prompting intense debate about “no harness,” efficiency-squared scoring, and what the numbers really mean.

ARC-AGI-3 leaderboard goes live with sub-1% frontier scores and $2K–$9K runs

ARC-AGI-3 (ARC Prize Foundation): Following up on Launch tomorrow—interactive agent benchmark announcement—the ARC-AGI-3 results are now live, with frontier models clustered around 0.00%–0.37% and reported evaluation costs in the low-thousands to high-thousands of dollars per run, as shown in the Leaderboard snapshot and echoed in the Cost range callout. This matters because ARC-AGI-3 is trying to measure sample-efficient interactive learning, but today’s headline numbers are dominated by both very low scores and very high cost per measured progress.

• Release-day snapshot: Google Gemini 3.1 Pro (Preview) at 0.37%, OpenAI GPT-5.4 (High) at 0.26%, Anthropic Opus 4.6 (Max) at 0.25%, and xAI Grok 4.20 at 0.00%, per the Frontier scores table and the Leaderboard snapshot.

The public benchmark entry point and replays are accessible via the Benchmark page, which is where teams will look for reproducibility details.

ARC-AGI-3 human baseline definition becomes a core point of contention

ARC-AGI-3 baseline (human reference): Debate centered on how “human-level” is defined, with criticism that the benchmark uses the second-best first-run human by action count as the baseline reference per environment, and that unsuccessful attempts are treated differently in the reported efficiency framing, as argued in the Baseline excerpt and the Baseline critique. This matters because the chosen baseline strongly shapes the headline “AI <1%” narrative.

One example of the pushback is the claim that “humans score 100%” is a solvability statement rather than an average-human efficiency statement, with further critique of the public messaging in the Scoring criticism thread.

ARC-AGI-3 scoring uses squared efficiency and clamps scores at human parity

ARC-AGI-3 scoring: Multiple threads zoomed in on how the benchmark converts action counts into a score—specifically squared efficiency with a hard cap at 1.0, meaning models can match but not exceed the human baseline on any level. The core definition is laid out with formulas in the Scoring equations and is documented in the Technical report.

A key engineering implication is that ARC-AGI-1/2 and ARC-AGI-3 numbers are not directly comparable because ARC-AGI-3’s metric bakes in both task completion and path efficiency, as explained in the Scoring equations.

ARC-AGI-3 won’t use a harness for official scores, triggering measurement backlash

ARC-AGI-3 evaluation policy: The technical report text circulated showing the official leaderboard decision to report scores without a harness, framing this as “developer-aware generalization” and arguing future AGI systems “will not need task-specific external handholding,” as quoted in the No-harness rationale. That stance drew immediate objections from builders who want harnessed agent scores reported alongside the baseline, as in the Harness objection and the Harness theory.

The disagreement is about what ARC-AGI-3 is measuring: unaided interactive learning versus practical agent systems that depend on tool + UI + memory scaffolding.

ARC-AGI-3 constraints and exclusions become part of the debate

ARC-AGI-3 evaluation constraints: Commentary also focused on benchmark constraints beyond the scoring equation—claims about action/step budgets and exclusions of higher-compute “think longer” variants, plus the decision to emphasize a minimal prompt and minimal tooling setup, as debated in the Constraint critique and reinforced by a “worst case performance” reading of the report text in the No-harness rationale.

The practical upshot is that leaderboard deltas can reflect harness and evaluation design choices as much as model capability, which is one reason several people described the current snapshot as hard to interpret at fine granularity.

ARC-AGI-3 saturation timing becomes the next argument

ARC-AGI-3 trajectory: Forecasts varied widely on how fast the benchmark will be saturated—one camp calling “four months” in the Four-month estimate, another predicting a long flat period followed by a sharp jump late 2026 or 2027 in the Step-change prediction, and others expecting a quick transition from unsolved to solved in the Fast saturation claim.

The common theme is that ARC-AGI-3 is expected to behave like prior benchmarks: low initial scores, then rapid gains once an effective approach lands.

Data Agent Benchmark (DAB) ships: agents struggle with multi-database workflows

DAB (Data Agent Benchmark): A Berkeley EPIC / PromptQL collaboration released DAB, a benchmark grounded in enterprise “data agent” work: 54 queries, 12 datasets, 9 domains, across 4 DBMS, with the best frontier model reaching 38% pass@1 (averaged across trials), as described in the Benchmark announcement.

Unlike many text-to-SQL tasks, DAB explicitly tests cross-database joins, tool use (query + Python), and messy key reconciliation, which is why it’s being positioned as a gap-filler for agent evaluation, per the Benchmark announcement.

Mollick: ARC-AGI-3 looks human-winnable, but tool/harness gaps may dominate early scores

ARC-AGI-3 (practitioner read): Ethan Mollick reports that ARC-AGI-3 is “definitely human winnable,” and frames the open question as whether frontier-model underperformance is primarily harness/vision/tools versus core limitations of LLMs, per the Hands-on take.

This is the near-term engineering question for teams: whether improving the agent stack (UI control, state, exploration heuristics, memory) moves the needle faster than base-model upgrades.

ARC Prize 2026 launches with $2M alongside ARC-AGI-3 benchmark release

ARC Prize 2026 (ARC Prize Foundation): Alongside the ARC-AGI-3 benchmark release, ARC Prize announced $2,000,000 in prizes and pitched ARC-AGI-3 as testing how agents “explore, form hypotheses, plan, learn and adapt,” per the Benchmark positioning thread.

A separate Fast Company writeup amplified the “benchmark exposes a weakness” framing, as shown in the Press coverage screenshot.

🧷 Claude Code shipping log: 2.1.83/2.1.84, auto-mode design, and reliability pain

Continues the Claude Code surge with two CLI releases (2.1.83 + 2.1.84) and deep prompt/flag churn, while users report outages, login issues, and quota/limit frustration impacting daily use.

Claude Code 2.1.84 adds an opt-in PowerShell tool and an idle /clear nudge

Claude Code 2.1.84 (Anthropic): A follow-on release adds an opt-in PowerShell tool for Windows automation, and introduces an idle-return prompt that nudges sessions idle 75+ minutes to run /clear to avoid unnecessary prompt-cache rehydration, as summarized in the Release thread.

Prompt-level behavior also shifted: ClaudeCodeLog reports the removal of an explicit top-level “Avoid over-engineering” rule while retaining narrower “don’t add extras” constraints; it also standardizes GitHub references as owner/repo#123 for clickable links and foregrounds explicit parallel tool batching in commit/PR playbooks, per the System prompt updates. The changelog adds several operational knobs (for example, x-client-request-id for timeout debugging) in the Release thread.

Claude Code reliability pain: elevated errors, login issues, and fast quota burn

Reliability (Claude Code): Following up on Rate limit bug—Max plan users reporting quota/accounting weirdness—today’s feed shows more acute disruption: Claude Status screenshots show major outage/partial outage conditions across claude.ai and Claude Code, including “elevated errors on Claude Opus 4.6” and Cowork connection resets, as captured in the Status page screenshot.

Multiple builders also report rapidly exhausting weekly/daily limits (“tapped out their whole usage limits on Mon/Tue”) with frustration at lack of acknowledgement, as shown in the Usage limits thread; others mention being unable to log in at all, per the Login issue post. Downstream behavior includes switching away from Claude Code “all day” due to rate limits/outages, according to the Switched due to limits, alongside quota-hit reactions in the Quota meme.

Anthropic explains Claude Code Auto mode’s classifier approvals and injection probe design

Auto mode (Anthropic): Following up on Auto mode launch—skipping permission prompts safely—Anthropic published an engineering breakdown of how Auto mode decides when to approve tool actions using classifiers, including a prompt-injection probe over tool outputs and a transcript classifier to allow/deny actions, as described in the Engineering blog announcement and expanded in the Engineering post.

Auto mode also got a practical “how to turn it on” workflow callout: it’s available to Claude for Team users via claude --enable-auto-mode, then Shift+Tab to enter the mode, per the Enablement instructions.

Claude Code 2.1.83 adds managed-settings.d policy fragments and subprocess credential scrubbing

Claude Code 2.1.83 (Anthropic): The CLI shipped a dense ops/security release—most notably managed-settings.d/ as a drop-in directory where policy fragments merge alphabetically (useful when different teams own different controls), plus a hardening change so child processes no longer inherit Anthropic or cloud-provider credentials, reducing accidental secret exposure, as listed in the Changelog highlights.

The release also tweaks memory semantics—"ignore memory" now treats MEMORY.md as empty so stored lines don’t get pulled back into context, per the same Changelog highlights. Additional user-visible ergonomics include transcript search ("/" in transcript mode) and new hook events like CwdChanged/FileChanged, as detailed in the Changelog highlights.

ClaudeCodeLog’s prompt-diff notes add that deferred-tool availability now comes via a system reminder (vs an explicit list) and that an explicit skill catalog got injected, per the System prompt updates.

Claude Code cheat sheet (v2.1.81-era) circulates as the de facto shortcuts and commands reference

Claude Code cheat sheet (community): A one-page reference card for keyboard shortcuts, slash commands, MCP server setup, skills/agents frontmatter, and CLI flags is getting recirculated as a practical “muscle memory” aid, per the Cheat sheet share.

The sheet is explicitly labeled v2.1.81 (last updated March 24, 2026) and includes guidance around /compact, worktrees, “effort/ultrathink,” and permissions mode switching, as visible in the Cheat sheet share.

Claude Code ToolSearch lazy-loading sparks complaints about added latency and error surface

ToolSearch ergonomics: A user complaint notes that Claude Code now “lazily loads all tools,” arguing that relying on ToolSearch adds latency and more failure modes than keeping tool schemas in-context, as stated in the Tool loading complaint.

The criticism lands alongside the 2.1.83+ churn where ToolSearch and deferred-tool discovery keep getting reshaped, as shown by the broader 2.1.83 release notes in the Changelog highlights.

Anthropic schedules “What We Shipped” Claude Code release-and-tips webinar (Apr 7)

Claude Code team webinar (Anthropic): Anthropic is starting a monthly stream, “What We Shipped,” positioned as a live walkthrough of recent Claude Code feature updates plus tips and Q&A; the first session is April 7, and registration is available via the Signup post and the Webinar page.

The practical signal is that Claude Code’s shipping cadence is now high enough that official “what changed and why” briefings are being productized, per the Signup post.

🧑💻 Codex momentum: product commitment, student challenge, and power-user workflows

OpenAI’s coding track stays noisy: reassurance the Codex app persists, student-focused build challenges, and more day-to-day reports of teams swapping between Codex and Claude Code based on limits/reliability.

Codex App commitment: “here to stay” with increased investment

Codex App (OpenAI): A core product-direction signal landed when a Codex team member said the Codex App is “here to stay” and that OpenAI is “investing way more into it than before,” per the product commitment.

This is one of the cleaner “don’t migrate away” statements in a week where builders are actively hedging across coding agents due to reliability and quota volatility elsewhere.

Developers route work to Codex when Claude Code quotas/outages hit

Codex agents (OpenAI): A visible day-to-day pattern is builders switching active coding work to GPT-5.4 High agents inside Codex after running into Claude Code limits or degraded service, as described in the agent switch report.

• Reliability trigger: The same day included reports of Claude service instability, including “elevated errors on Claude Opus 4.6 and Claude Code,” shown in the status incident screenshot.

The practical takeaway is not “one tool wins,” but that many teams are operating with an explicit fallback path across subscriptions when an agent becomes unusable mid-loop.

Codex-assisted profiling loop: instrument, find bottleneck, cache, repeat

Codex in-the-loop performance work: Robert “Uncle Bob” Martin described a concrete workflow where he asked Codex to instrument a game loop after it exceeded 500ms, then iteratively locate bottlenecks and apply caching-based fixes over several hours, per the profiling workflow writeup.

He reports a repeated pattern of “fix one bottleneck, then the next,” with human oversight mostly reduced to approving steps, as described in the profiling workflow writeup and reinforced by a follow-on note about running trials of strategy changes in the trial supervision note.

OpenAI x Handshake launches Codex Creator Challenge with $10K credits pool

Codex Creator Challenge (OpenAI Devs): OpenAI is running a student build challenge powered by Handshake—$10K in OpenAI API credit prizes, plus $100 in Codex credits for eligible U.S./Canada university students to start, as described in the challenge announcement and detailed on the challenge page.

The framing is explicitly “build something real,” which makes this more of a usage-onramp than a hackathon-style one-off.

Strategy narrative: OpenAI “refocus around coding and business users”

OpenAI product focus (signal): The “strategy shift” narrative recirculated with a WSJ-attributed claim that OpenAI leadership is finalizing plans to refocus around coding and business users, as summarized in the WSJ-cited claim.

This lines up with the day’s more direct Codex product signal (Codex app commitment) and the steady drip of Codex-centered adoption stories, but the tweet itself doesn’t include a primary WSJ excerpt—treat it as secondhand reporting unless corroborated elsewhere.

Codex App Server highlighted as “100% open source”

Codex App Server (OpenAI ecosystem): A community reminder emphasized that the Codex App Server is open source and positioned it as a base for building “richer experiences” on top of Codex, per the open source reminder.

This is a distinct signal from “Codex app is here to stay”: it’s about how extensible the stack is for teams that want to run their own wrapper UI, orchestration, or integrations.

Codex app adds thread search for navigating long histories

Codex App (OpenAI): A small but high-frequency UX upgrade—thread search in the Codex app—was called out as a navigation improvement in the QoL thread search note.

For heavy Codex users, this targets the common “too many long sessions” failure mode: you remember you solved something, but can’t find the thread quickly.

✅ Anti-slop engineering: browser QA, review modes, and spec-first validation

Quality tooling focuses on catching failures agents create: automated browser testing with replay video, structured review modes, and arguments for specs/tests defined before code so validation isn’t biased by implementation.

Expect CLI lets Claude Code/Codex QA your app in a real browser and records every failure

Expect (open source): Aiden Bai released Expect, a CLI (and agent skill) that hands your current coding agent a real browser to test against, then produces a video “highlight reel” of bugs it found, so you can fix and rerun until green, as shown in the Launch demo and Highlight reel clip.

• How it fits into existing loops: It’s explicitly positioned to run under Claude Code/Codex/Cursor “under the hood,” with a one-command bootstrap (npx -y expect-cli@latest init) described in the Launch demo.

• Why this matters: It’s trying to make “agent wrote it” code shippable by attaching repro artifacts (browser recordings) to each failure, which is the missing piece in a lot of agentic QA.

More details and entry points are collected on the Project site.

Spec-first validation: agent-written tests after implementation mostly “confirm decisions”

Spec-first validation (pattern): A builder framing from Factory AI argues that tests written after an agent implements a feature tend to reflect the code’s choices (“Everything passes. Full coverage.”) rather than the original intent, and that specs set the validation criteria before the first line of code, as laid out in the Spec-first critique.

• Why this shows up now: As agents generate more code, the failure mode shifts from “no tests” to “tests that validate the wrong thing,” because the implementation influences what gets asserted, per the Spec-first critique.

The core claim is about bias in post-hoc validation, not about any one tool.

Bombadil Inspect lands on main: action traces with state-before/state-after diffs

Bombadil Inspect (Antithesis/Bombadil): A first version of Bombadil Inspect landed on main, exposing a debugging UI that pairs action logs with “state before” vs “state after” snapshots, according to the Inspect screenshot.

• What’s concrete in the UI: The screenshot shows a left rail of actions (type/click/reload), two panels rendering the UI state before/after, and a violations counter plus CPU/heap graphs in the same view, per the Inspect screenshot.

This is aimed at the failure mode where agents do many UI actions but you can’t quickly see what changed.

Polyscope 0.14 ships Review Mode for in-depth PR/workspace reviews (and per-step model choice)

Polyscope 0.14 (Polyscope): Polyscope shipped a dedicated Review Mode for “in-depth code review of all changes” in a workspace/PR, and it also added a workflow where you can plan with one model and implement/review with another, as described in the Release notes and shown in the Model switch UI.

• Review as a first-class action: The product is framing review as a separate phase with its own UX, rather than “ask the agent to review” buried in chat.

• Planning vs implementation separation: The thread explicitly calls out “Plan with Claude; implement with Cursor; review with GPT 5.4,” per the Model switch UI.

The signal here is review tooling becoming a surface, not a prompt.

Cognition collaborates on Code Review Bench v0.3, focusing on precision vs latency

Code Review Bench v0.3 (Cognition): Cognition announced a collaboration with Martian on Code Review Bench v0.3, explicitly focusing on the tradeoff between precision and latency in code review evaluation, per the Collaboration note.

This is small but specific. It’s about measuring review quality under real-time constraints.

What’s missing in the tweets is the updated task set and scoring details (no paper or dashboard link was included in the shared post).

📱 Claude as a work hub on mobile: app integrations and adoption signals

New Claude mobile capability centers on using work tools (design/analytics) from a phone; alongside this are adoption/usage visuals and “Claude as the super‑app” sentiment. Excludes Claude Code CLI updates (covered separately).

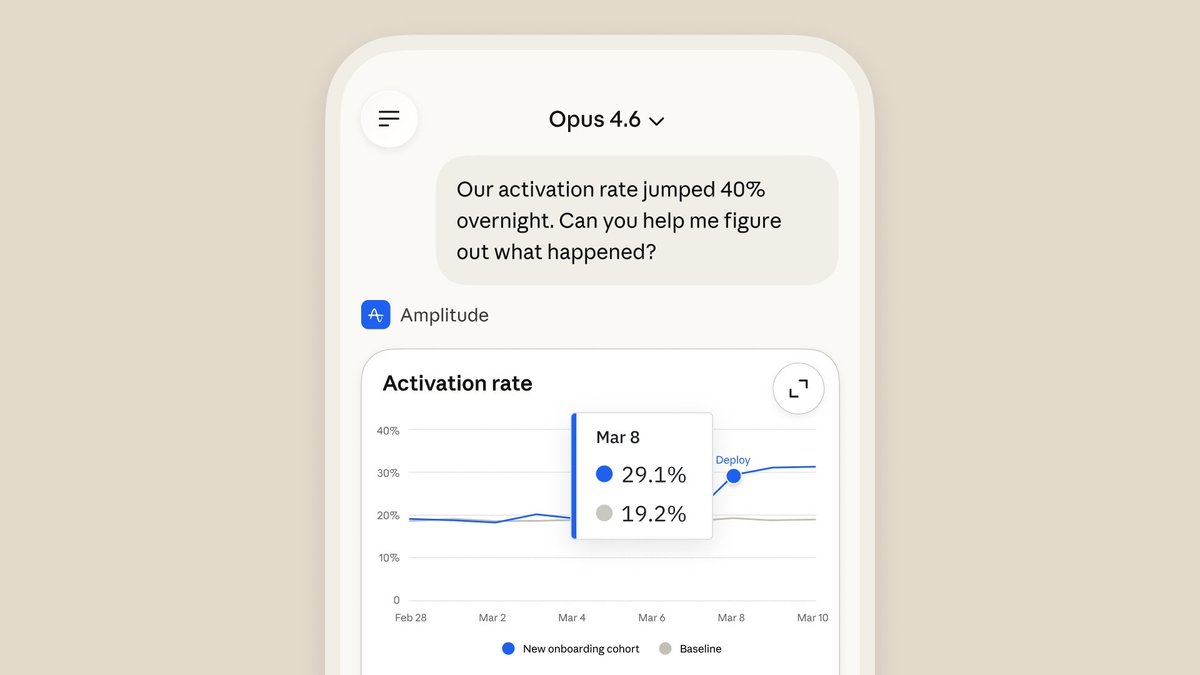

Claude mobile adds Work Tools for Figma, Canva, and Amplitude

Claude mobile (Anthropic): Anthropic says Claude’s “work tools” are now available on mobile—positioned as letting you open Figma designs, edit Canva slides, and view Amplitude dashboards from your phone, as announced in the mobile tools rollout and reiterated in the tools recap.

• Surfaces: The rollout points users to the mobile apps via the download page, implying this is a first-class capability across iOS/Android rather than a web-only feature.

The practical change is that “work-app context” is no longer desktop-gated for Claude sessions, which shifts when/where teams can do quick design/analytics lookups.

Chart shows Claude leading Gemini, Grok, and DeepSeek on mobile DAUs

Claude mobile adoption: A Similarweb-style plot circulating in the mobile DAU chart shows Claude rising to roughly ~17M daily active users by ~Mar 20, 2026, overtaking Gemini, Grok, and DeepSeek over late Feb–Mar.

This is being interpreted as a distribution signal: Claude’s mobile presence looks less like a “companion app” and more like a primary interface for a chunk of users, which matters if you’re betting on mobile-first agent workflows.

Claude usage intensity map highlights Israel, Singapore, and Australia

Claude usage geo signal (Anthropic Economic Index): A Visual Capitalist-style map of “Claude AI usage by country” is being reshared, showing usage intensity normalized by working-age population share—e.g., Israel at 4.90×, Singapore at 4.19×, Australia at 3.27×, and the U.S. at 3.69×, as shown in the country usage map.

The main analytical value is that it’s one of the few public, quantified glimpses of where Claude adoption clusters outside the U.S., and it’s being discussed as a proxy for where “Claude-first” workflows may be emerging.

Builders frame Claude as the “super app” aggregator for work

Claude positioning: A recurring take is that Anthropic is turning Claude into a consolidated work hub—captured bluntly as “They are developing Claude into the app that ChatGPT wanted to be” in the super app take, with similar “Claude is becoming a super app” sentiment echoed in the super app shorthand.

This framing is being used less as model commentary and more as a product-architecture claim: Claude as the place where tool integrations accumulate, not an assistant you bounce between apps.

Perplexity Computer UI hints at an upcoming Memory feature

Perplexity Computer (Perplexity): A screenshot shared by TestingCatalog suggests Perplexity is working on a Memory feature for “Perplexity Computer,” with “Memory” appearing as a sidebar nav item in the UI screenshot.

The key detail is the product direction: “computer that works for you” plus persistent state, which is the missing piece for longer-running agent workflows compared to stateless chat sessions.

Copilot Tasks is being described as already usable on mobile

Copilot Tasks (Microsoft): One thread claims “Copilot Tasks is already on mobile,” framing it as effectively carrying a “cloud computer” style workflow in your pocket in the mobile mention.

It’s a light-data-point (no official release detail in the tweet), but it’s being used as a comparison point to Claude’s push toward mobile-controlled work execution.

🧱 Self-hosted & sandboxed agent execution: keep code inside your network

A cluster of updates about where agents run: self-hosted cloud agents, distributed sandboxes that survive laptop close, and ‘safe execution’ primitives. This is about execution environments, not model quality.

Cursor Cloud Agents can now run on your infrastructure

Cursor Cloud Agents (Cursor): Cursor added a self-hosted option so the same cloud-agent harness can execute tools and builds entirely inside your network, targeting teams that can’t send code or artifacts outside the perimeter, as announced in self-hosted agents announcement and detailed in the self-hosted agents post. The pitch is “cloud UX, local execution”: isolated environments and parallel task handling, but with enterprise network/compliance constraints preserved.

• Operational detail: Cursor frames this as avoiding inbound connectivity requirements (no inbound ports / no VPN) while still orchestrating agent runs, according to the self-hosted agents post.

The core change is where commands run and where artifacts land; model choice and editor experience are intended to stay the same.

Cloudflare opens Dynamic Workers beta for sandboxing AI-generated code

Dynamic Workers (Cloudflare): Cloudflare announced an open beta of Dynamic Workers, positioning it as an infra primitive to securely execute AI-generated code at scale, as shared in beta announcement.

The framing is aimed at agentic systems that need to run untrusted generated code frequently; the concrete engineering question it’s addressing is sandbox overhead (how fast you can spin up isolated execution) rather than model capability.

Imbue ships Keystone to auto-generate devcontainers in a sandbox

Keystone (Imbue): Imbue introduced Keystone, a sandboxed agent that runs in a Modal container and tries to produce a working dev environment for arbitrary repos—generating a Dockerfile, devcontainer.json, and a test runner that passes—per the Keystone launch and the product page.

• Sandboxing stance: The product writeup emphasizes doing sysadmin-style setup inside a sandboxed environment (rather than on developer machines), as described in the product page and reinforced by Modal’s callout in Modal mention.

The open question is how broadly it generalizes across polyglot repos and nonstandard test harnesses, but the shipped unit is clear: “make this repo runnable, reproducibly.”

OpenCode shows “distributed” agent runs that survive laptop close

OpenCode (OpenCode): A demo claims agents can run on a laptop, a remote server, or a cloud sandbox provider; you can close the laptop and work continues, then reopen and sync state back locally, per the distributed opencode demo and the follow-up on multi-device “home server” configurations in device topology notes. The design goal is execution-location flexibility without losing session data when sandboxes are deleted.

• Device/controller split: The same thread sketches patterns where a laptop or cloud node acts as the “home” runtime while phones act as remote controllers, as described in device topology notes.

What’s still unclear from the tweets is the durability mechanism (where state is stored, conflict behavior, and what is sync’d vs re-derived).

Sandcastle runs parallel Claude sandboxes on worktrees and merges back

Sandcastle (Matt Pocock): Sandcastle can take a backlog of issues, spawn N Claude instances in Docker sandboxes on separate git worktrees, and merge resulting changes back to a target branch—all locally—per the Sandcastle capability post.

• Scaling friction: The same author notes hitting rate limits when firing multiple Opus instances in parallel, which becomes an immediate bottleneck for this style of “many sandboxes at once,” as shown in parallel run rate limit.

This is a concrete execution pattern: isolate work per worktree, run agents in parallel, then reconcile via git.

OpenCode is moving core features into plugins to force a better plugin API

OpenCode (OpenCode): The maintainer says they’re refactoring existing features into plugins, then building new features as internal plugins so the plugin API has to be “good,” as stated in plugin decomposition note and echoed via a plugin-first framing in internal plugins retweet. This is a product/architecture signal: extensibility becomes a first-class constraint, not an add-on.

• Why it matters for execution: In a sandboxed/distributed runtime, plugins often become the unit of capability delivery (tools, connectors, sandboxes, UI panels); a “dogfooded” internal plugin surface tends to converge on clearer capability boundaries, per the intent described in plugin decomposition note.

🕹️ Agent runners & harnesses: OpenClaw release and “run it from chat” ops

Ops tooling for coordinating agents stays active, led by OpenClaw’s new release and examples of chat-driven personal automation. Excludes general MCP protocol items (covered separately).

OpenClaw 2026.3.24 broadens OpenAI API compatibility for clients and RAG stacks

OpenClaw 2026.3.24 (OpenClaw): OpenClaw expanded its OpenAI-compatible surface by adding /v1/models and /v1/embeddings, and it now forwards explicit model overrides through both /v1/chat/completions and /v1/responses, as detailed in the GitHub release notes and summarized in the release thread. That matters for teams using OpenClaw as a “one runtime” gateway, because embeddings and model-override propagation are common breakpoints when plugging in off-the-shelf OpenAI clients.

• Sub-agent routing via OpenWebUI: The release also calls out improved ability to “talk to sub-agents” with OpenWebUI in the release thread, which is the kind of client-compat surface area that tends to rot quickly without explicit passthrough support.

A chat-based CRM built on OpenClaw replaces a $300/mo SaaS CRM with a Google Sheet

OpenClaw CRM workflow (OpenClaw): A builder showed an OpenClaw “chat CRM” that uses a Google Sheet as the system of record while the agent auto-updates leads, sends follow-ups, and answers questions across Gmail/WhatsApp/calendar integrations—framed as replacing a $300/mo CRM in the CRM breakdown. This is a concrete “run it from chat” pattern: spreadsheet as database, chat as UI, agent as operator.

OpenClaw 2026.3.24 ships native Microsoft Teams integration via the official SDK

OpenClaw Microsoft Teams (OpenClaw): OpenClaw 2026.3.24 migrates to the official Teams SDK and adds Teams-native behaviors (streaming 1:1 replies, welcome cards with prompt starters, feedback, typing indicators, native AI labeling, plus message edit/delete support), as described in the GitHub release notes and echoed by the beta post. For AI ops, this is about keeping agent execution inside an enterprise’s existing comms surface.

OpenClaw 2026.3.24 updates Control UI for tool/skill management visibility

OpenClaw Control UI (OpenClaw): In 2026.3.24, the Control UI now shows only the tools available to the active agent, adds a live “Available Right Now” section, and offers compact vs detailed views, per the GitHub release notes and the beta mention. The point is operational clarity: when tools vary by agent, credentials, or environment, the UI becomes the fastest way to understand what the harness can actually do before you burn tokens.

OpenClaw 2026.3.24 adds one-click install recipes for bundled skills

OpenClaw skills (OpenClaw): OpenClaw 2026.3.24 adds install “recipes” for bundled skills (and prompts to install missing dependencies via CLI/Control UI), according to the GitHub release notes. This is a distribution move: it makes skillpacks closer to reproducible units you can roll out across chat endpoints, instead of tribal-knowledge setup steps.

OpenClaw 2026.3.24 adds Slack interactive reply buttons for faster chat loops

OpenClaw Slack (OpenClaw): OpenClaw 2026.3.24 adds interactive reply buttons in Slack, as listed in the release thread. This is a harness-level UX primitive: it lets an agent present constrained choices (approve/deny, pick an option, confirm an action) without pushing users back into CLI commands.

OpenClaw ops gotcha: sessions “poof at 4am,” causing overnight amnesia

OpenClaw session ops: A recurring operational gotcha is that OpenClaw sessions “poof at 4am,” creating an overnight amnesia mode unless state is externalized, as called out in the community tip. For teams relying on chat-driven personal automation, this becomes a boundary between “conversation as state” and “external memory as state.”

OpenClaw 2026.3.24 adds smart Discord auto-thread naming

OpenClaw Discord (OpenClaw): OpenClaw 2026.3.24 introduces smart Discord auto-thread naming, per the release thread and the deeper notes in the GitHub release notes. For chat-native agent ops, thread naming ends up being an indexing mechanism for long-running work, especially when you route many tasks through a shared Discord server.

RunClaw pitches Telegram as an ops console for OpenClaw-style agent work

RunClaw (OpenClaw-adjacent): A sponsored comparison claims people run OpenClaw on a ~$700 Mac mini 24/7 for inbox ops, while “RunClaw” can do similar work “from Telegram for $1,” plus expand into building websites, slide decks, and media from chat surfaces like Slack, per the sponsored comparison. Treat it as directional rather than verified benchmarking, but it signals continued pressure toward chat-first agent execution plus aggressive cost packaging.

🔌 MCP & agent interoperability: channels, web actions, and UI streaming

Interoperability plumbing shows up across messaging channels, web-automation endpoints, and UI streaming protocols—useful for teams standardizing tool access across assistants.

Claude channels add iMessage support for agent conversations

Claude channels (Anthropic): iMessage is now supported as a channel, per the channel announcement, extending the “agent in chat apps” pattern beyond Slack/Discord-style surfaces.

• What it enables: the channel surface is being framed as a way to interact with an agent through Messages—see the iMessage UI example in screenshot framing.

• Why it matters: it makes “on-the-go” control/monitoring viable for long-running workflows (agents that keep running somewhere else), without requiring a dedicated mobile app UI.

Operational details (auth model, how sessions map to an agent runtime, and whether this is Claude Code-specific or broader) aren’t spelled out in the tweets, so treat the first wave as a channel primitive rather than a fully-specified remote-control stack.

Firecrawl adds /interact for natural-language or Playwright web actions

Firecrawl (/interact): Firecrawl introduced an /interact endpoint that follows /scrape, letting an agent take actions on a live page via natural language, as described in the endpoint announcement.

• Action interface: alongside natural-language instructions, Firecrawl is explicitly positioning “need full browser control” as writing Playwright code in-session, with an example shown in Playwright snippet.

• Agent harness implication: this is a clean split between extraction (/scrape) and stateful manipulation (/interact), which fits teams trying to standardize web automation across multiple assistants without committing to full “computer use” UIs.

The tweets don’t include latency, pricing, or reliability claims; the concrete shipping artifact here is the endpoint and the code-level control surface.

AWS Bedrock AgentCore adds an AG‑UI endpoint for streaming agent UIs

AgentCore AG‑UI (AWS Bedrock): AWS added a dedicated AG‑UI endpoint inside AgentCore so agents can stream interactive UI components and human-in-the-loop workflows, according to the AG‑UI announcement.

• Integration framing: the post explicitly places AG‑UI alongside MCP (“agent to tools”) and A2A (“agent to agent”), per the AG‑UI announcement.

• Build surface: the claim is that an AgentCore agent can stream UI by setting an AG‑UI flag and deploying, as shown in the AG‑UI announcement.

This is an interoperability move: a standardized way to ship agent UX without building a bespoke front-end for every agent runtime.

OpenBlock ships Connections to reuse SaaS auth across agent sessions

Connections (OpenBlock): OpenBlock announced “Connections,” a one-time setup flow so any OB‑1 session/CLI/agent can securely use linked SaaS accounts (individual + org), powered by WorkOS, as shown in the feature announcement.

• Surface area: examples name Linear and Sentry explicitly, and imply broader coverage (GitHub/Stripe and others), per the feature announcement.

• Interoperability value: this aims to decouple auth bootstrap from per-agent setup—one configured connection can be reused across multiple agent entrypoints, per the feature announcement.

The post is light on the underlying trust model (scopes, revocation, auditability), but the concrete new primitive is centralized credential plumbing for agent sessions.

BrowserEnv pitches training on custom web workflows to improve agents

BrowserEnv: BrowserEnv is being positioned as a response to current “computer use” agents being slow and inaccurate on real sites, with a pitch that teams can train models on their own esoteric web workflows, per the BrowserEnv pitch.

The key interoperability angle is that it treats web automation as a trainable environment layer (workflows as data) rather than a one-off UI-driving feature. The tweet doesn’t include benchmarks, supported browsers, or how environments are specified, so the only solid claim here is the direction: training infrastructure targeted at the long tail of site-specific web tasks.

🛡️ Safety & misuse: Model Spec, voice guardrails, and jailbreak tooling

Security/safety discourse clusters around explicit behavior specs, stronger guardrails for agents (especially voice), and the emergence of open jailbreak interfaces that invert post-training safety layers.

G0DM0D3 launches a refusal-penalizing, multi-model “jailbroken” chat UI

G0DM0D3 (elder_plinius): A new open-source web UI positions itself as “fully jailbroken AI chat” with “no guardrails,” routing prompts to many models in parallel and using a “Tastemaker” scorer that explicitly penalizes refusals/hedging and amplifies the most direct answer, per the launch thread and the accompanying GitHub repo.

The README-style framing (“cognition without control”) is visible in the

, and the core operational claim is that post-training safety layers can be competed away by multi-model selection pressure rather than bypassed in a single model.

ElevenLabs ships Guardrails 2.0 to curb voice-agent drift and manipulation

Guardrails 2.0 (ElevenLabs): ElevenLabs rolled out an updated safety/control layer for ElevenAgents aimed at preventing real-time voice agents from hallucinating, drifting off-task, or being steered by users, as shown in the Guardrails 2.0 demo.

This is specifically framed as in-the-loop control for conversational voice workflows (where “keep it on-script” and “don’t get socially engineered” failures are common), rather than a general model capability update.

OpenAI spotlights Model Spec as a public instruction hierarchy and behavior contract

Model Spec (OpenAI): OpenAI published a long-form conversation on how the Model Spec is supposed to work in practice—resolving conflicting instructions via a hierarchy and evolving through real-world feedback—framing it as the “what it should and shouldn’t do” layer as models gain capability, as described in the podcast segment.

The same push is reinforced by OpenAI’s written explainer on how they maintain and iterate the spec, detailed in the Model Spec article.

English Wikipedia bans LLM-written article prose with two narrow exceptions

Wikipedia policy (English Wikipedia): Wikipedia is drawing a bright line against using LLMs to generate or rewrite article prose, while allowing two constrained uses—grammar/style help on human-written text (with the editor responsible for meaning fidelity) and translation assistance as a first draft—summarized in the policy recap.

For analysts tracking content provenance and integrity, this is a governance signal: “fluent” isn’t the acceptance criterion; traceability to sources is.

OpenAI launches a Safety Bug Bounty focused on AI abuse and safety risks

Safety bug bounty (OpenAI): OpenAI is launching a program explicitly aimed at finding “AI abuse and safety risks” across OpenAI products, according to the program announcement.

The tweet doesn’t include payout ranges, scope details, or submission mechanics, so treat this as the headline only until the full program page is referenced in follow-ups.

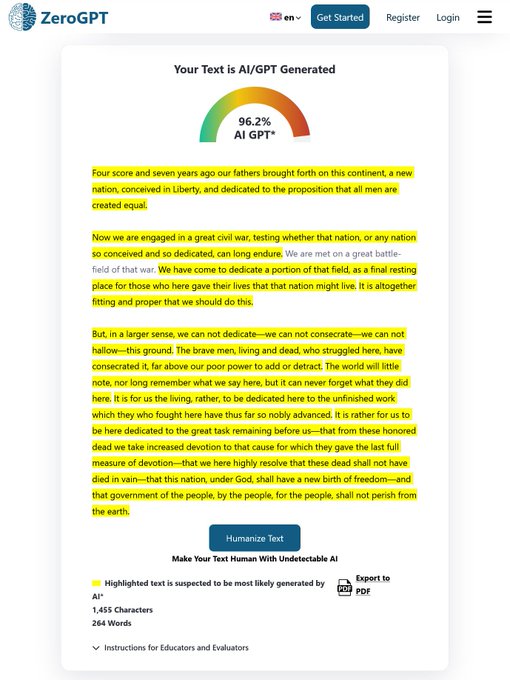

AI detector false-positive: Gettysburg Address flagged as AI-generated

AI detection reliability: A widely shared example shows ZeroGPT flagging Lincoln’s Gettysburg Address as AI-generated at 96%+, illustrating how detectors can misclassify canonical historical text and why “AI-written” claims based on detectors alone remain brittle, per the detector screenshot.

This keeps resurfacing as practical ammo in policy and academic settings where detector outputs are treated as evidence rather than as noisy heuristics.

🛠️ Dev utilities for agent era: repo context, doc freshness, and parsing primitives

Open-source tools aim at the core bottleneck: getting correct, current context into agents (and cutting token waste) via doc fetchers, structural repo graphs, and better document parsing.

code-review-graph builds a Tree-sitter repo map to shrink review context

code-review-graph (AlphaSignalAI/community): An open-source utility called code-review-graph indexes a repository into a Tree-sitter-derived structural graph stored in SQLite, then uses “blast radius” tracing to pull only the minimal impacted set of files during reviews—framed as a way to stop agents from rereading huge repos and guessing the missing parts, per the Token reduction claim screenshot.

The post’s concrete performance claims include 6.8× fewer tokens on typical reviews and 49× fewer tokens on large monorepos by reading ~15 files instead of scanning ~27,000, with “2,900 files reindex in 2 seconds” and “12 languages” support, as shown in the Token reduction claim.

Context Hub (chub) open-sources a “doc freshness” CLI for coding agents

Context Hub (AlphaSignalAI/community): A new open-source CLI called Context Hub (“chub”) is positioned as a fix for the common agent failure mode of coding against stale SDKs—by fetching versioned docs on demand and letting agents persist local annotations when docs are missing or wrong, as described in the Context Hub overview screenshot.

The core claim is that this is an information problem more than a model problem—agents “invent parameters and call dead functions” when they don’t have current specs, and chub turns “search → fetch → use” into a repeatable pre-step for any coding session, using a growing markdown doc corpus that can be contributed back to (the post cites “6K+ GitHub stars in a week” and “1,000 API documents,” per the Context Hub overview).

LlamaParse improves Word table parsing by aligning XML structure to rendered pages

LlamaParse (LlamaIndex): LlamaParse shipped a Word/.docx table-parsing improvement that links source Word XML table structure (including merged cells and formatting) to the final rendered markdown while preserving page positions, addressing the practical “renderer decides pagination” problem noted in the announcement Docx parsing update.

• What changed: The pipeline can now map “source XML tables/table elements” to “rendered markdown output,” enabling direct interpretation of merged cells plus bold/italic/sub/sup/strikethrough, as explained in the Docx parsing update and illustrated by the renderer mismatch graphic.

• Why it matters for agent context: For RAG and doc-to-plaintext workflows, this makes citations and table extraction more stable when agents need “what was on page N” semantics, with more detail in the Parsing blog and entry points in the LlamaParse page.

dev-browser CLI frames browser use as “agent writes code” instead of click-driving

dev-browser CLI (workflow pattern): A “dev-browser” command-line tool is introduced with the premise that “the fastest way for an agent to use a browser is to let it write code,” suggesting a shift away from slow, error-prone GUI driving toward code-authored browser actions, per the Dev-browser CLI intro.

The tweet doesn’t include a full spec, but the framing implies an agent loop where the model generates automation code (e.g., Playwright-style scripts) rather than interpreting pixels/click targets, as stated in the Dev-browser CLI intro.

⚙️ Runtime & inference engineering: TurboQuant integrations, KV-cache economics, and resilience

Inference-side progress is dominated by KV-cache compression discourse plus serving reliability work (MoE resiliency) and “auto configuration” improvements for local runtimes.

vLLM lands TurboQuant KV-cache compression, with “4M+” cached tokens on small boxes

vLLM (vLLM Project): Following up on TurboQuant—KV-cache compression claims—vLLM contributors are now wiring TurboQuant into vLLM, with the project highlighting “4M+ KV-cache tokens” on a “USB-charger-sized” machine in the integration note.

This matters because it turns TurboQuant from a paper into something agent runtimes can actually ship, pushing long-context serving toward cheaper, smaller deployments (especially for long-running chat/agent sessions where KV cache dominates memory), as described in the TurboQuant summary and reinforced by the “tiny box” vLLM claim in integration note.

SGLang adds Elastic EP for MoE resiliency with <10s recovery from GPU faults

SGLang Elastic EP (LMSYS): SGLang announced “Elastic EP,” targeting the failure mode where wide expert-parallel MoE serving crashes an entire instance on a single GPU fault; it claims <10s recovery from rank failures versus 2–3 minutes for a full restart, with “zero static overhead” under non-failure conditions in the release post.

This is a very specific operational improvement for MoE serving (DeepSeek-V3-class deployments were name-checked), turning “a GPU died” from a multi-minute brownout into a short blip if the rank masking and weight redistribution path works as described in the release post.

TurboQuant triggers a split: “6× less KV memory” vs “you’ll use more anyway”

TurboQuant (Google Research): The day-after conversation has split into (1) the technical claim that TurboQuant compresses KV cache by ~6× with up to ~8× speedups on H100s, as summarized with charts in the TurboQuant thread and (2) pushback that the “memory footprint shrinks” narrative misses second-order effects—one critic argues “TurboQuant will lead to MORE memory usage not less” in the critique, while another frames the hardware-market reaction as misunderstanding Jevons-style demand expansion in the Jevons reply.

Separately, at least one market recap claims memory/storage stocks dropped 4–6% after TurboQuant coverage (Micron -4%, Seagate -5.6%) in the market note, which is a concrete signal that KV-cache economics are becoming legible outside infra teams—even if deployment reality is still uncertain.

Unsloth Studio removes manual llama.cpp parameter tuning via auto-configuration

Unsloth Studio (UnslothAI): Unsloth says llama.cpp can now auto-pick “correct model settings” based on context length and the user’s local compute, and Unsloth Studio applies these settings automatically—plus it now ships with precompiled llama.cpp binaries, per the feature demo and the GitHub repo.

The main workflow change is that local inference setup shifts from hand-tuning (threads, batch sizes, etc.) toward “context length + hardware → settings,” which can reduce the trial-and-error tax when swapping models and quantizations across machines, as shown in feature demo.

RL inference stack chatter points to a large Fireworks vs TRT/SGLang throughput gap

Fireworks inference (stack comparison): A thread about an RL-focused “Composer v2” report claims Fireworks was used for RL inference because it’s “much more efficient,” pointing to a TRT vs SGLang gap—and alleging another similar-sized gap from TRT to Fireworks—per the throughput comment.

The concrete artifact here is the latency/throughput chart in throughput comment; it’s not a standardized benchmark, but it’s a signal that “which inference runtime” is being treated as a first-order variable in RL-style pipelines, not just a serving detail.

🧩 Skills & extensions: make agents domain-competent (docs, office files, vector DBs)

Today’s plugin/skill story is about packaging repeatable capability: office-document skills, domain-specific infra skills, and install/update flows that keep teams from duplicating prompt glue.

LiteParse ships an open-source PDF-to-text skill for Claude Code and other agents

LiteParse (LlamaIndex ecosystem): LiteParse is positioned as an open-source, model-free PDF parser that turns documents into plaintext “in seconds,” with a one-line skill install meant to feed cleaner context into Claude Code and “40+ other agents,” as described in launch thread.

• Why it matters for agent quality: the pitch is that coding agents are strong on plaintext but brittle on PDFs/Office formats, so extracting text deterministically upstream becomes the reliability lever, per launch thread.

Weaviate publishes “Agent Skills” to keep coding agents on current APIs

Weaviate Agent Skills (Weaviate): Weaviate is promoting a skills repository intended to stop the common “vibe coding” failure mode where agents hallucinate vector DB APIs (legacy syntax, wrong params); the repo is framed as an installable bridge via npx skills add weaviate/agent-skills, and includes workflows for schema inspection, imports, and hybrid/semantic search, as laid out in repo overview.

• What’s in scope: the post claims two tiers—focused scripts (“skills”) plus end-to-end “cookbooks” (FastAPI/Next.js blueprints)—and a small command surface (ask/collections/explore/fetch/query/search), per repo overview.

MiniMax open-sources office agent skills (PDF, Excel, PPT, Word)

Office agent skills (MiniMax): MiniMax says it has open-sourced a bundle of “office agent skills” covering common document formats like PDF, Excel, PowerPoint, and Word, positioning them as reusable building blocks for agent workflows rather than one-off integrations, as announced in open-source note.

The post doesn’t include packaging details (install path, schema, or skill runner) in the tweet itself, but the intent is clear: reducing the repeated glue work teams do to make agents competent with everyday office artifacts.

Laravel Boost ships a laravel-best-practices skill for agents

Boost skill: laravel-best-practices (Laravel): Taylor Otwell says a new Boost skill called laravel-best-practices shipped, described as “100+ curated Laravel best practices” intended to equip an AI agent with more consistent, framework-native guidance, as relayed in release repost.

This is another data point that “skills” are turning into a portability layer for domain constraints (framework conventions) rather than relying on model priors alone.

A simple skills hygiene loop is emerging: npx skills update

Skills upkeep pattern: A small but telling ops habit is being normalized: running npx skills update to keep installed skillpacks current, shown in terminal screenshot where two skills are detected and updated in one run.

The implicit operational model is “skills drift like dependencies,” so updates become a routine maintenance loop rather than a one-time install, per terminal screenshot.

🎨 Generative media: music models, video arenas, and creator pipelines

Creative-gen is unusually active: Google’s music models, image-to-video arena rankings, and open creative toolchains (ComfyUI/LTX). This is kept separate so it doesn’t get dropped behind agent tooling.

Lyria 3 Pro and Clip ship in Gemini API with structured 3-minute tracks

Lyria 3 Pro + Lyria 3 Clip (Google DeepMind/Google): Google rolled out two music-generation models—Lyria 3 Pro for full songs and Lyria 3 Clip for ~30-second clips—available via the Gemini API and a new “music experience” in Google AI Studio, as announced in the launch post.

The product angle is longer-horizon composition control: Lyria 3 Pro supports building tracks up to 3 minutes, and prompts can explicitly target song sections (intro/verse/chorus/bridge) using a timeline UI, as shown in the longer tracks demo and reiterated in the launch recap. Pricing surfaced for API builders in the API pricing note, which calls out $0.08/song for Pro and $0.04/song for Clip plus knobs like tempo control and time-aligned lyrics.

• Surfaces and availability: the same rollout is positioned for developers (Gemini API + AI Studio) and consumers (Gemini app tiers), with the consumer rollout mentioned in the Gemini app update.

Early usage signal is still thin in the dataset, but the “structure-aware, minutes-long” framing is a concrete shift from short-loop music samplers toward something closer to rough arrangement.

LTX-2.3 opens weights for audio-video generation

LTX-2.3 (Lightricks): Lightricks’ LTX-2.3 audio-video model is being promoted as “now open,” with open weights and multiple checkpoints (including distilled variants and upscalers) described on the model page, as highlighted in the open release post.

The practical hook for builders is local/own-infra experimentation: the model page positions it for both local runs and tool integration (e.g., ComfyUI), with synchronized audio+video generation as the headline capability.

ComfyUI pitches Dynamic VRAM optimization for running local models on tight hardware

Dynamic VRAM optimization (ComfyUI): ComfyUI is pitching a “Dynamic VRAM optimization” feature aimed at making local model runs feasible on memory-constrained machines, framing it as reducing the need for RAM upgrades in the optimization note.

The same post pairs this with an “LTX 2.3 AI Film Studio” deep dive, suggesting the optimization work is intended to support heavier local video pipelines without requiring high-end GPUs.

Google embeds SynthID in Lyria outputs and lets Gemini verify it

SynthID for Lyria (Google): Google says all Lyria 3/Lyria 3 Pro generations now ship with an embedded SynthID watermark, and the intended workflow is verification by uploading an audio file and asking Gemini whether SynthID is present, as described in the watermark walkthrough and echoed in the API release note.

This matters operationally because watermarkability becomes a default property of the output artifact, not an app-level toggle—useful for platforms that need provenance checks without carrying full generation logs.

Grok Imagine takes #1 on Multi-Image-to-Video Arena by Elo

Grok Imagine (xAI): An Elo chart circulating from the “Multiple Images to Video Arena” places Grok Imagine at #1 overall with an Elo of 1342, ahead of several Kling variants (e.g., Kling v2.6 Pro at 1294), as shown in the arena leaderboard screenshot.

The supporting discussion in this dataset is mostly amplification—e.g., “takes gold” in the repost—so treat it as a leaderboard snapshot rather than a full methodology writeup.

Lyria 3 prompting is converging on timestamps and reference-context tricks

Lyria 3 Pro prompting (Google): Builders are quickly converging on a few prompt patterns that make the longer-form control actually usable—separating “style” from “lyrics,” setting lyric context explicitly, and using timestamps like “chorus at 1min” for steering, as outlined in the prompting tips thread.

A second pattern is giving the model non-text context: the Gemini team notes that attaching a reference image can influence both lyrics context and cover art in Lyria 3 Pro, according to the reference image tip. And there’s early “try weird things” exploration—e.g., forcing a literature-to-genre transform (“Rilke… ‘more 1990s boy band’”) in the user example.

One pragmatic positioning detail shows up in the API vs Suno comparison: the claim is that Suno may still win on output quality in some cases, but Lyria’s API availability and perceived licensing posture makes it easier to integrate into products.

Seedance 2.0 shows a practical 16:9 to 9:16 repurposing workflow

Seedance 2.0 (creator workflow): A creator demo shows video-to-video aspect ratio conversion (16:9 → 9:16) framed as the fastest way to repurpose existing video content, with the before/after output shown in the conversion demo.

The thread claims the prompt can be as short as “create a portrait mode version of the video,” while also noting post-steps like minor upscaling to fix stutter and even swapping the tail of the clip with another model output, per the conversion demo.

📦 Model updates & open-weight watch: minis/nanos and next-base rumors

Model news today is a mix of smaller/cheaper variants and open-weight/rival-lab signals. This category excludes creative media generators (covered separately).

GPT-5.4 mini and nano arrive: cheaper 5.4-tier reasoning, but very token-hungry at xhigh

GPT-5.4 mini + nano (OpenAI): Artificial Analysis reports OpenAI has released GPT-5.4 mini and GPT-5.4 nano as cheaper variants of GPT-5.4 with the same reasoning modes (including xhigh) and 400K context, with pricing called out at $0.75/$4.50 per 1M input/output tokens for mini and $0.20/$1.25 for nano, versus GPT-5.4 at $2.50/$15 per the benchmarks and pricing thread. One immediate engineering implication is cost predictability: their own evaluation run notes 200M+ output tokens for the Intelligence Index in xhigh settings, which can dominate spend even at lower per-token rates, as detailed in the benchmarks and pricing thread.

• Where nano looks strong: in the same writeup, GPT-5.4 nano (xhigh) is described as leading peers like Claude Haiku 4.5 and Gemini 3.1 Flash-Lite Preview on agentic/coding-ish evaluations including τ²-Bench (81%) and TerminalBench (42%), according to the benchmarks and pricing thread.

• Where both look weak: the thread also flags lower “AA-Omniscience” results driven by high hallucination rates (mini cited at 90%; nano at 74%), attributing part of the gap to answering more often instead of abstaining, as stated in the benchmarks and pricing thread.

A separate note from the same source emphasizes that xhigh verbosity is unusually high versus competitors (mini ~235M output tokens; nano ~210M), reinforcing that “cheaper” SKUs can still be expensive in long agent loops if they spill tokens, as quantified in the token usage comparison and agentic task score note.

DeepSeek rumor mill: API model is bigger than web, and an even larger base is ‘soon’

DeepSeek (model roadmap signal): A screenshot attributed to a DeepSeek employee account claims the API and web/app serve different model sizes, with the 3.2 API base model larger than the web model, and says an even larger base model will be released soon—including the explicit follow-up that it’s “much bigger than 3.2,” as shown in the staff-linked comments screenshot.

A translated recap reiterates the same point—“web/app and API are two different models” plus “a bigger base model will be released soon”—in the translated excerpt. The thread context around this rumor also includes speculation that DeepSeek’s next release may not land with the same surprise as prior drops (expectations management rather than specs), per the prediction post.

MiniMax M2.7 is being teased as “open source soon”

MiniMax M2.7 (open-weight watch): Multiple posts claim MiniMax 2.7 / M2.7 is expected to be released as open source “soon,” with one of the more direct statements being “We are getting MiniMax 2.7 as open source soon,” as written in the open source claim.

A related repost suggests a Hugging Face model card is being prepared (“started preparing the model card… M2.7 coming soon!”) in the model card repost, and another post frames the state as “clicking refresh everyday, m2.7 on HF soon,” pointing to the MiniMax organization page in the HF org watch.

📈 Adoption & unit economics: Jevons effects, token costs, and GTM hiring shift

Business-side signals focus on why adoption accelerates: AI makes new software projects affordable (Jevons), but token costs still matter; plus job-posting analysis shows labs shifting headcount toward GTM and customer adoption roles.

Jevons paradox is showing up in AI-enabled software spend and staffing

Jevons paradox (Box / Levie): As AI makes software work cheaper, more organizations (including non-tech) greenlight projects they previously couldn’t justify—so total software demand rises rather than falls, per the Jevons paradox thread. This frames near-term labor impact as “more people managing/maintaining agent-built systems,” not a clean substitution story.

The claim is directional, but it matches what many teams are seeing in practice: once prototyping and internal tooling get cheaper, the backlog expands faster than headcount shrinks.

Job-posting data suggests frontier labs are scaling GTM and customer adoption

Frontier lab hiring mix (Epoch AI): An analysis of open roles suggests sales and go-to-market work is now the largest hiring slice at OpenAI and Anthropic (rising to ~28–31% of postings), while research is a smaller share (cited as 7% at OpenAI and 12% at Anthropic) in the job-posting summary and detailed in the full post.

The same writeup highlights growth in “AI Success Engineer” / “Forward Deployed Engineer” roles—signals that deployment friction, not model demos, is the limiting factor for revenue expansion.

Token costs keep humans competitive on many tasks even when AI is stronger

Labor vs tokens (E. Mollick): A recurring constraint is that per-token spend isn’t trivial, so for many tasks—especially skilled, routine work—humans can remain cheaper than running models at scale, even if AI quality is higher, as argued in the token costs note. This is an adoption brake that pushes teams toward selective automation (high-leverage steps) instead of blanket replacement.

It also implies unit-economics work (routing, caching, narrower context) matters as much as model choice.

CFOs expect modest AI-driven headcount reductions, concentrated in admin roles

CFO survey (WSJ): U.S. CFOs projected AI would reduce company headcount in 2026 by about 0.4% versus baseline, with cuts focused on clerical/admin roles rather than broad workforce displacement, as summarized in the WSJ survey excerpt.

This is one of the clearer “adoption reality checks” showing up in mainstream business data: narrow reductions in routine work alongside demand for more technical roles.

Marketing is being reframed as an agent-run workflow problem

Marketing ops shift (OMO): A blunt prediction is that marketing is about to move from “marketing to people” toward “marketing to AI agents,” as stated in the marketing agents claim.

For AI product leaders, this is less about ads and more about org design: if agents become the primary operators for campaign generation, QA, and iteration, then governance, evaluation, and spend controls become first-class product surfaces.

🏗️ Compute & industrial scale: data center power, chips, and ‘AI for nuclear’

Infra signals center on power as the bottleneck: mega-watt class data center leases, projected multi‑trillion buildouts, and new processor and nuclear initiatives framed explicitly around AI workloads.

Microsoft steps into 700MW Abilene data center project after Oracle/OpenAI talks fell through

Abilene data center lease (Microsoft): Microsoft reportedly moved to lease a ~700MW data center development in Abilene, Texas that had been discussed for Oracle and OpenAI, per the report summary; it’s a reminder that AI scaling is now constrained by power procurement and delivery timelines, not just GPUs. Power is the product.

• Why engineers care: 700MW is “campus-scale” capacity; it changes who can reliably run large inference/training fleets and who gets stuck in queueing/rationing dynamics described in the report summary.

• Why analysts care: the counterparty swap (Oracle/OpenAI → Microsoft) signals that stalled mega-projects can still clear fast when a buyer can commit to long-term AI load and has integration paths for the capacity, as described in the report summary.

Eric Schmidt frames AI scale as a 100GW buildout problem, not a model problem

Data center power math (Eric Schmidt): a circulating Schmidt estimate puts 1GW at ~$50B, implying ~$5T to reach 100GW over ~5 years and “~10% of US electricity going to data centers,” as summarized in the clip excerpt. This is a capex-and-grid thesis.

• Why it matters to AI leaders: this kind of framing turns model roadmaps into “can we site and power it” roadmaps; it also clarifies why long-horizon capacity deals (leases, power purchase agreements, on-site generation) are showing up as strategic differentiators in infra narratives like the clip excerpt.

Unclear parts: these are order-of-magnitude claims in social circulation, not a published cost model in the tweets.

Meta and Arm announce a datacenter processor collaboration aimed at “agentic AI” workloads

Datacenter CPU partnership (Meta × Arm): Meta and Arm are described as co-developing a new class of datacenter processors explicitly for “agentic AI,” with Arm selling the resulting chip commercially to other buyers (OpenAI, SAP, Cloudflare mentioned) in the partnership claim. This is a supply-chain strategy.

• Procurement implication: if Arm is commercializing the design beyond Meta, it suggests this is meant to be broadly deployable silicon (not just an internal accelerator), as claimed in the partnership claim.

• Workload implication: “agentic AI” language usually maps to many concurrent tool-using sessions and long-lived runtimes; if that’s the target, the interesting question is which bottlenecks the chip is meant to address (memory bandwidth, interconnect, context serving), which isn’t detailed in the partnership claim.

Microsoft and Nvidia launch “AI for Nuclear” effort to reduce nuclear build bottlenecks

AI for Nuclear (Microsoft × Nvidia): Microsoft and Nvidia announced a joint initiative framed around using AI tooling to reduce the permitting/logistics friction that slows nuclear plant construction, shifting from bespoke engineering to repeatable reference-based delivery per the Axios-style summary. The near-term claim cited is that Aalo Atomics used generative tools to reduce permitting timeline by 92%, per the same Axios-style summary.

• Why this lands in AI infra: if power is the binding constraint, then programs that compress time-to-power (even partially) become relevant to model deployment timelines; that’s the explicit causal chain in the Axios-style summary.

The tweets don’t include technical details on what models/workflows were used for the 92% permitting claim beyond “generative AI,” so treat the mechanism as unspecified.

🧭 Builder sentiment: bot slop, disappointment with AI, and workflow fatigue

Culture is news today because practitioners are reacting to degraded signal quality (reply bots/slop), mixed feelings from veteran engineers, and the ‘agentic workflows everywhere’ meme becoming a trope.

AI reply-bot pollution is breaking feedback signals on X

Reply bots (X): A small cluster of posts shows builders explicitly probing for reply bots and describing the replies feed as increasingly synthetic. Levelsio posts “WHERE ARE YOU AI REPLY BOTS” while inviting replies in Reply-bot bait, and another viral retweet claims the bot problem got bad enough to mute “about 17,500” accounts in Muting numbers. Separately, benhylak says “almost every reply… is AI generated slop” in Slop in replies.

Noise kills feedback.

For AI engineers and leaders, the operational implication is that social feedback loops (feature requests, bug reports, sentiment) are becoming less trustworthy without stronger identity, provenance, and filtering layers.

LLM-generated “official” spam is becoming an engineering tax

Misinformation ops (LLMs): thdxr argues LLMs have made clout-chasing spam harder to deal with, including people spinning up “official looking websites” with made-up security/privacy issues that spread and waste engineering time, as described in Spam sites. This is not a model-quality debate; it’s an operations problem.

Trust is the bottleneck.

For engineering leaders, it points to growing need for lightweight verification norms (signed advisories, canonical status pages, provenance) to reduce time lost to fabricated claims.

Wozniak’s AI skepticism frames a product gap beyond accuracy

Steve Wozniak (Apple): Wozniak reportedly says AI “keeps disappointing him” and that he “barely uses it,” with the core critique being that human value is judgment, tone, and emotional context—not only correctness, as summarized in Wozniak report. This is a useful reminder that “too perfect” and “too dry” outputs can read as a lack of understanding, even when they’re accurate. It’s a UX problem.

The signal for builders is that assistant quality will keep getting judged on how well models track intent, stakes, and social context, not whether they can produce a clean answer.

AI discourse burnout shows up as self-curation behavior

AI discourse (X): mattpocockuk says he disabled his X home feed via CSS for 6 months, then briefly scrolled on a new laptop and concluded “the AI discourse is so dumb,” per Home feed reset. That’s a behavioral signal: practitioners are increasingly opting out of ambient timelines to stay productive.

Attention is scarce.

For analysts, this reads as a distribution shift: more discussion and discovery may move to smaller, higher-trust channels (docs, repos, private communities) as general feeds degrade.

“Agentic workflows” has turned into a mainstream builder trope

Agentic workflows (culture): The line “men in their 40s used to have cool midlife crisis but now they just have agentic workflows” is being repeated as a meme via retweets like Agentic workflows meme. It’s a small joke, but it captures a real normalization: “agentic workflows” is no longer niche jargon, it’s an identity marker for how people talk about work.

This sets expectations.

For product leaders, it implies rising tolerance for automation-first workflows—and rising impatience with tools that feel non-agent-native.

🤖 Robotics signals: humanoid PR moments and industrial Gemini partnerships

Robotics appears as adoption + partnerships rather than research novelty: high-visibility humanoid appearances and DeepMind’s industrial integration efforts (factory/logistics focus).

DeepMind and Agile Robots partner to deploy Gemini Robotics into industrial settings

Gemini Robotics (Google DeepMind) x Agile Robots: DeepMind announced a partnership with Munich-based Agile Robots to integrate Gemini Robotics foundation models into Agile’s industrial platforms—targeting automotive manufacturing, electronics assembly, data centers, and logistics, according to the key-points excerpt in Partnership key points. This is positioned as a deployment-and-feedback loop: field data from robots improves models, which then ship back into hardware, per the longer breakdown in Partnership key points.

• Adjacent deployment signal: DeepMind advocates are highlighting a broader “partnership surface area” (Gemma local control; Gemini APIs for interaction and perception), as shown in Robotics partnerships demo, which fits the same industrialization arc.

Humanoid robot appearance at the White House becomes an AI adoption signal

Figure-style humanoid PR moment: Posts circulated a clip of a humanoid robot standing beside the First Lady at a White House reception, with the robot introducing itself ("Hello, I am AMBER") in a ~178-second video, as shown in White House clip. It’s being framed as a “turning point” optics moment rather than a capability reveal, per the commentary in White House clip and the “first humanoid robot in the White House” claim in Claim text.

• Narrative extension: The same event thread also amplified a “humanoids as utility” framing (moving from phones to robots; “humanoid educator named Plato”), as captured in Speech clip.

Tesla refreshes Optimus progress story with an engineering-focused video

Optimus (Tesla): Tesla released a new engineering/builders video for Optimus, leaning on lab footage and “who’s building it” storytelling rather than new specs, as shared in Optimus video share. It’s a ~123-second montage and is being recirculated as a progress signal in robotics timelines, as echoed by reposts like Reshare.

The update is PR-forward, but it’s also a reminder that humanoid work is increasingly being communicated as an ongoing manufacturing/ops program, not a one-off demo.

Robotics “early market” prediction centers on open-source hacker stacks

Robotics adoption path: A builder take argues robotics is likely to start with open-source hackers and 3D printers, and that value capture will be fragmented rather than winner-take-all, as stated in Builder prediction. It’s a weak-signal but relevant framing for AI engineers evaluating where tooling, simulation, and agentic control layers might standardize first.

The claim is directional, not evidence-backed in these tweets. It aligns with the broader “agentic workflows meet physical tooling” zeitgeist, but no concrete adoption metrics were shared.